Robots are used in all sorts of industries on a wide variety of tasks. Typically, it’s because they’re far faster, more accurate, and more capable than we are. Expert humans could not compete with the consistent, speedy output of a robotic welder on an automotive production line, nor could they as delicately coat the chocolate on the back of a KitKat.

However, there are some tasks in which humans still have the edge. Those include driving, witty repartee, and yes, folding laundry. That’s not to say the robots aren’t trying, though, so let’s take a look at the state of the art.

The Challenges of Folding At Home

They’re less good at jobs where they have to figure out what the right moves are. Credit: Phasmatisnox, CC-BY-SA-3.0

Laundry automation was a game changer for home life when it first hit the mainstream. The washboard and the mangle gave way to the automatic washing machine and the clothes dryer, and the busy working people of the world rejoiced. No longer would they toil for hours just to get some clean clothes!

However, the next step in the process, folding, has not seen mainstream automation just yet. This is largely due to the fact that folding laundry is simply a much more complicated task than washing or drying. Those tasks simply involve chucking a bunch of clothes into a tub, and shaking them about with either water and soap or heat applied. It’s a batch process whose primary control input is the RPM of a single motor.

Folding first requires a single piece of laundry to be separated from the pack and identified. Machine vision has come a long way in the last decade, but this is still a challenging task. Plus, clothes can become entangled with others in a pile, further complicating the issue for a hypothetical robot folder. Towels, socks, skirts, and jeans are all different, and require their own techniques to deal with. Fancy clothes with straps and buckles and unique structures only add further challenges.

State of the Art

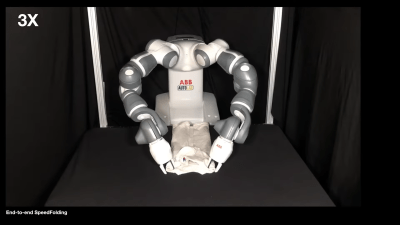

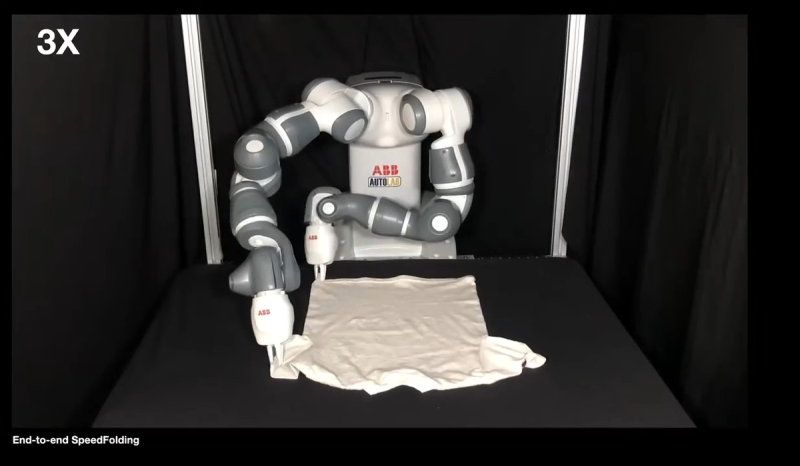

Researchers from UC Berkeley and the Karlsruhe Institute of Technology have developed a robot capable of folding laundry so quickly that they’ve termed the process “SpeedFolding.” It uses a robot with two “hands” which work together to fold clothes, much like we do. According to the research paper, the robot can fold 30-40 garments in an hour. That’s pretty much an order of magnitude faster than previous robotic folding efforts, which managed more like 3-6 garments per hour.
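To put those rates in perspective, here is a quick back-of-the-envelope conversion into seconds per garment. It only uses the figures quoted above; the function and variable names are ours, made up for illustration.

```python
def seconds_per_garment(garments_per_hour: float) -> float:
    """Convert a folding rate in garments per hour to seconds per garment."""
    return 3600.0 / garments_per_hour


# Rates quoted in the article, as (low, high) garments per hour.
speedfolding = (30, 40)
earlier_work = (3, 6)

for label, (low, high) in (("SpeedFolding", speedfolding),
                           ("Earlier work", earlier_work)):
    fastest = seconds_per_garment(high)
    slowest = seconds_per_garment(low)
    print(f"{label}: {fastest:.0f}-{slowest:.0f} s per garment")

# Speed-up, comparing low end with low end and high end with high end.
speedup_low = speedfolding[0] / earlier_work[0]
speedup_high = speedfolding[1] / earlier_work[1]
print(f"Speed-up: roughly {speedup_high:.1f}x to {speedup_low:.1f}x")
```

So the quoted range works out to somewhere between one and a half and two minutes per garment, against ten to twenty minutes for the earlier systems.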

The robot begins by scanning the laundry item to be folded with an overhead camera. It then uses a neural network trained on 4,300 different actions to identify how to get the randomly-aligned garment into the necessary initial state for folding. Various motions are used by the robot to achieve this, including moving the garment or parts of the garment around, flinging it around, or dragging it along a surface.

https://www.youtube.com/watch?v=em675-X2jfM

The neural network was trained in a variety of ways, with the primary smoothing step a key part of the process. The robot was allowed to perform different actions autonomously with the set goal of maximising the garment’s surface area. This was a useful way to teach the robot to get the garment into a controlled initial state for folding. Once the garment is in such a state, prescribed actions can be executed to complete the folding process. Useful techniques from the human world are applicable to the robot, too. The classic “2-second fold” that can be used on T-shirts is used by the robot to fold an already-flat tee in under 30 seconds. However, the general process takes longer than that. The full procedure of garment identification, state initialization, and then folding takes about two minutes on average.
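The "maximise the surface area" goal is easy to picture as a score computed from the overhead camera image. The sketch below is not the researchers' code. It assumes a hypothetical binary mask (1 for garment pixels, 0 for table) and a made-up set of predicted outcomes for two candidate actions, then picks whichever action leaves the garment most spread out.

```python
from typing import Dict, List

Mask = List[List[int]]  # 1 = garment pixel, 0 = table, seen from overhead


def coverage(mask: Mask) -> float:
    """Fraction of the image occupied by the garment."""
    total = sum(len(row) for row in mask)
    covered = sum(sum(row) for row in mask)
    return covered / total if total else 0.0


def best_action(outcomes: Dict[str, Mask]) -> str:
    """Pick the action whose (hypothetical) predicted outcome covers most area."""
    return max(outcomes, key=lambda name: coverage(outcomes[name]))


# Toy 4x4 masks, invented for illustration only.
crumpled = [[0, 0, 0, 0],
            [0, 1, 1, 0],
            [0, 1, 0, 0],
            [0, 0, 0, 0]]
after_fling = [[0, 1, 1, 0],
               [1, 1, 1, 1],
               [1, 1, 1, 1],
               [0, 1, 1, 0]]
after_drag = [[0, 0, 0, 0],
              [1, 1, 1, 0],
              [1, 1, 0, 0],
              [0, 0, 0, 0]]

choice = best_action({"fling": after_fling, "drag": after_drag})
print(f"start coverage: {coverage(crumpled):.2f}, chosen action: {choice}")
```

In the real system a neural network is doing the hard part of deciding which action to take from the image. The point here is only that "how flat is it?" can be reduced to a single number a robot can try to improve on its own.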

As it stands, though, the technology is still very much at the demo stage. The twin-armed robot used is an advanced device costing tens of thousands of dollars, and the industrial 3D vision system likely doesn’t come cheap either. Plus, it’s not mobile, so can only work in a small space in front of its base.

The folding results obviously aren’t up to human speeds, and they leave something to be desired in quality, too. The pincer manipulators are capable of picking up many different articles of clothing, but they lack finesse. The garments folded by the robot aren’t particularly neat and tidy, and certainly wouldn’t pass muster at your average Hot Topic or The Gap.

However, those keen to delve into the world of laundry automation can benefit from this cutting-edge research. Not only is the research paper available online, but the datasets and code repository are too.

SpeedFolding marks a major leap forward in the world of robot laundry folding, and we can’t wait to see the next one. Who knows where we’ll be in another ten or twenty years? Maybe we’ll all be running our laundry-folding robots off of affordable fusion power! We can only hope.

Imagining the caps at the shoulder joints as eyes makes it even funnier!

“It’s a batch process whose primary control input is the RPM of a single motor.”

And water pump speed and direction (or rather, volumetric metering both ways). And water temperature. And chemical addition. Anyone who has shrunk a woollen garment or distorted a synthetic one should be aware that there is more than one wash cycle available for a reason!

Then there are the other fun hacks you can perform, like using a machine washer for dyeing (great for fibre-reactive dyes in front-loaders that allow powder addition mid-cycle)!

“like using a machine washer for dyeing”

I read that as “drying”. :P

I can imagine using the double arms because they’re more versatile and allow for other tasks, but most of the time, trying to solve it “the human way” in robotics is entirely pointless when you can design devices like these:

https://youtu.be/8kzmZzsw4Js

https://youtu.be/2kv9eeKqmNk

And if you’re folding sheets –

https://youtu.be/Ia7uLtBEd_A

oh, you have fitted sheets?

https://www.youtube.com/watch?v=h6MkqEjdDmU

Both those machines use double arms too – the arms of the human operator who separates the laundry into individual garments and lays them flat in a known orientation.

Gives a new meaning to “Folding@home”

B^)

How come I never thought of this until now?!

And finally not a weird association as usual with such comments. A nice one.

That’s OK, I suck at folding too!

Shirts are the worst.

I think this is still a domain that needs reverse thinking (like the paradigm change of putting the thread’s hole at the tip of the needle in a sewing machine, so the stitch can be knotted while the needle is in the fabric). Here, the garments should probably be fitted on some pattern/template, and the pattern should be folded, instead of trying to reproduce the complex human joints.

That’s basically what it’s doing, except that the templates are all internal to the robot’s software, and it’s looking for ways to grab it that will simulate flat surfaces. We could probably get a speed-up by letting it figure out a more optimal folding pattern.

Clothes origami.

I’d rather have clothes that have not been folded over and over again in the exact same way.

Also this robot is doing the easy part of the work and the hard part is still being done by humans.

I don’t know about you but folding the laundry is a nice way to relax after washing a big load. When the robot can collect the dirty clothes and sort and wash them, I’ll be interested. Also I wanna see a robot sort socks and turn them right side out.

Easy. Just have several containers if you need to separate laundry. Usually two, at most three, should be enough. Then just take it all out and put it in the washing machine. Seems overkill to use a robot for that.

The socks robot makes more sense. Then again, why is that not relaxing if folding is for you?

I only buy black socks of one style, no sorting required!

That was my tactic as well, then Sam’s Club changed either the mfgr, or the mfgr changed the product. After a couple of washings, the newer batch socks now have gray toes and heels!

I wonder if it would be easier for humans to adjust to a new way of receiving/storing folded clothes to accommodate the best way for a robot to do it? How a human washes dishes is different than how a dishwasher does it. (And to be fair, the way a dishwasher does it has also changed over time.) Maybe it would be easier to find an easy way for robots to consolidate the space that clothes take (w/out inducing wrinkles) and build storage systems around that instead of trying to get robots to fold clothes the way humans do it.

If the bags were reusable, I could go for one piece of clothing per bag, vacuum sealed, and ready to put on a hanger or lay flat in a chest of drawers w/out folding. The footprint would be different, but it might still be less than or at least functional vs. current/traditional.

Your comment sparked this thought:

A single hook.

The idea is you need to make sure you have one piece of clothing.

Once it has it out of the pile, drop it into a vacuum seal bag chosen by weight (smaller bag for smaller items). Reusable, obviously, to avoid waste.

Vacuum seal whatever the piece of clothing is, preferably by first compressing it in the bag and then sucking the air out.

You might be able to add a moisture sensor to the manipulator to assure clothes are dry.

When you need something you can visually inspect them, get them out of the bag and just reuse the bag; it’s not got anything but clothes fiber in it. There will be wear on it, of course, and bags will have a lifetime, but we’re talking minimal here, so good bags should last years, not months.

Before someone brings it up:

Yup, clothes will be wrinkled AF.

I do think that ironing the clothes that need it vs. the time saved through this method would come out on the side of time won. And energy.

Heck a simple steam ironing dummy you can throw shirts on would be a minimal add on.

I wonder how fast it would be if it didn’t have to figure out the details of each garment. A QR code or RFID tag could have the specs of the garment and it would take less time to work that part out. Once a particular item has been folded successfully then the same folding procedure would be done in subsequent folds.
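The tag idea boils down to a lookup table keyed by garment ID. Here is a minimal sketch, assuming a hypothetical tag reader that hands back a string ID and a hypothetical `slow_planner` standing in for the expensive vision and planning step. None of these names come from the SpeedFolding project.

```python
from typing import Callable, Dict, List

FoldPlan = List[str]  # ordered list of named folding steps


class FoldPlanCache:
    """Remember the plan that worked for each tagged garment."""

    def __init__(self, planner: Callable[[str], FoldPlan]) -> None:
        self._planner = planner
        self._plans: Dict[str, FoldPlan] = {}
        self.planner_calls = 0  # counts how often the slow path ran

    def plan_for(self, tag_id: str) -> FoldPlan:
        """Reuse a known-good plan if one exists, otherwise plan from scratch."""
        if tag_id in self._plans:
            return self._plans[tag_id]
        self.planner_calls += 1
        return self._planner(tag_id)

    def record_success(self, tag_id: str, plan: FoldPlan) -> None:
        """Store a plan only after the fold actually succeeded."""
        self._plans[tag_id] = list(plan)


def slow_planner(tag_id: str) -> FoldPlan:
    # Stand-in for expensive perception and planning (hypothetical steps).
    return ["smooth", "fold_sleeves", "fold_in_half"]


cache = FoldPlanCache(slow_planner)
first = cache.plan_for("tshirt-0042")      # slow path
cache.record_success("tshirt-0042", first)
second = cache.plan_for("tshirt-0042")     # served from the cache
print(cache.planner_calls)
```

The robot would still have to smooth the garment out each time, since a tag says nothing about how the item landed in the basket. What the cache saves is working out what the garment is and which fold sequence suits it.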

99% of the time, I don’t fold clothes. (Towels, etc. = yes) I ROLL them, like some Navy, etc. guys.

Actually if you go onto some home sites and look at their bathrooms, a lot of people roll their towels.

https://www.houzz.com/

I’m old enough to not care what other people think. I just wear wrinkly clothes.

Old people are just youngsters with a higher fractal dimension to their surface.

Irony is the opposite of wrinkly.

Usually people will say that robotics is a software and not a hardware problem (especially Minsky would say that). In this case the problem is clearly different.

Vision is not at all sufficient to solve this task. You need fine touch abilities, sensing the weight (and weight shifting) and fabric properties.

Then you need a vision system that is able to derive a 3d net of the fabric.

Once you have all of that you could train a system to rotate, hold or semi fold a cloth in various “poses”/states. You could define many such states simply by distorting the 3d net surface automatically/geometrically to cover most of the imaginable state space.
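As a rough illustration of generating states by geometric distortion, the sketch below starts from a flat grid of vertices and applies random smooth ripples to the heights. It is purely geometric and makes no attempt to keep the fabric's edge lengths constant, which a real cloth model would need. All names and parameters are assumptions chosen for the example.

```python
import math
import random
from typing import List, Tuple

Vertex = Tuple[float, float, float]


def flat_grid(n: int, size: float = 1.0) -> List[Vertex]:
    """An n x n grid of vertices lying flat on the z = 0 plane (n >= 2)."""
    step = size / (n - 1)
    return [(i * step, j * step, 0.0) for i in range(n) for j in range(n)]


def distort(mesh: List[Vertex], rng: random.Random,
            max_height: float = 0.1) -> List[Vertex]:
    """Replace the heights with a random sinusoidal ripple."""
    amplitude = rng.uniform(0.0, max_height)
    freq_x = rng.uniform(1.0, 4.0)
    freq_y = rng.uniform(1.0, 4.0)
    phase = rng.uniform(0.0, 2.0 * math.pi)
    return [
        (x, y, amplitude * math.sin(2.0 * math.pi * (freq_x * x + freq_y * y) + phase))
        for x, y, _ in mesh
    ]


rng = random.Random(0)  # fixed seed so the generated set is repeatable
base = flat_grid(5)
states = [distort(base, rng) for _ in range(100)]
print(len(states), "synthetic cloth states of", len(base), "vertices each")
```

Each generated mesh could then be rendered and paired with its known vertex positions as a labelled training example.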

Then you can train a machine learning system to correlate the sensor information with this 3d net surface state/pose.

And only then when the system is very agile (and confident about the current state of the cloth, i.e., can represent it visually as 3d model), you can give it tasks to fold the cloth in a certain way as a sequence of steps.

As usual in robotics, people try to implement high level tasks, while ignoring the low level tasks you need to master, since we are unaware of them and do them intuitively.

Machine learning will fail when the sensor data is not enough to predict the output, or is too sensitive to noise (because the sensor data is really only deriving the state indirectly, for example visually, rather than with touch).

It needs real awareness of the state the cloth is in, in *detail*. Doing it with minimal sensor information will not work.

Also, physical cloth simulation is possible today, even if it’s not fully natural, it isn’t too bad. I would rather define a virtual robot with touch and weight sensing abilities, then train it in this simulation to identify poses from the camera and touch/weight sensors.

You really need to train it to predict how the cloth will behave in random situations (i.e., apply random external force vectors, but also predict the resulting state/state sequence from robot manipulations), and then compensate and stabilize this state back to a state you want.

This cannot be trained by giving predefined steps it should just reproduce. It needs to be able to handle unknown cases, and this is where simulation is perfect for creating that data.

A bit like walking robots are trained, but here it has to control the state of the cloth, not of its own body, first and foremost.

Once it is able to stabilize a state/compensate for disturbances (and that fast and agile, not by insisting to go back to the perfectly well defined starting state), then you can give it instructions to follow. It needs to adapt them to the situation, and for that it needs a good intuition what states are stable, similar, and which state serves what purpose.

For example it needs to recognize that if the cloth is flat but at a certain angle of inclination or rotation it is still fit for the purpose as starting point. What matters is that it is immobile and flat.

It also needs to understand that letting a cloth hang in a certain way gives it stability and how this depends on how the two hands relate to each other and at what angle.

We learned this too, you can’t skip this step, or you will just repeat instructions like … well a robot: mindlessly.

A robot’s agility is achieved by being able to adapt instructions to a situation, and for that it needs to understand the purpose of said instructions, and for that, it needs a meaningful “vocabulary”/set of concepts to map them onto.

This whole process needs to be visualized, including the assumptions (such as cloth is in a flat rigid resting state, or hanging stable state, etc), so it can be easily debugged and identified where training data is lacking.

The current approach seems to be simply done to show off a new neural network topology, not to really try to solve folding with robot arms.

Substandard robot from ABB. Major design flaws in the arms, which have to be replaced when they break because the first-revision arms have many manufacturing defects. ABB won’t help you on price one bit even though they had to redesign the arms.

How difficult would it be to get a robot to fold a shirt in under 2 seconds?

https://www.youtube.com/watch?v=uz6rjbw0ZA0

A robot, however inept, would be better at this chore than my teenager. Or most any teenager, for that matter.

But TV shows with scenes set in a laundry have “robots” that fold clothes. They were just machines, not robots, but they did it with no computers.

Leave it to a research facility to spend thousands of dollars to research how to make a robot do a human task but with pinchers instead of hands. End effectors are one of the most important parts and these guys are like let’s go basic :p … good work with the rest though

This seems like a good problem to cheat on. Figure out the optimal folding process for one specific garment. Locate the two points you’d hold when starting to manually fold it, and mark them with UV ink fiducials. When the robot scans the basket, it can give it a shake until it sees a marker. Pick up the one point, shaking the garment free from the pile. Rotate and shake until the second point is located, then grab that. Once both fiducials are properly grabbed, it should be easier to proceed with the fluffing and folding from a consistent known good configuration.
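The procedure above is essentially a small loop: look for a marker, shake if it isn't visible, grab it when it is, then repeat for the second marker. A minimal sketch follows, with the camera and arm replaced by hypothetical callables (`find_marker`, `shake`, `grab`) and a scripted fake used to exercise it. A single `shake` stands in for both the basket shake and the rotate-and-shake step.

```python
from typing import Callable, List, Optional, Tuple

Point = Tuple[float, float, float]


def grab_by_fiducials(find_marker: Callable[[str], Optional[Point]],
                      shake: Callable[[], None],
                      grab: Callable[[str, Point], None],
                      max_shakes: int = 10) -> bool:
    """Grab the first marker, then the second, shaking until each is seen.

    Returns False if a marker is still hidden after max_shakes shakes.
    """
    for marker in ("first", "second"):
        shakes = 0
        position = find_marker(marker)
        while position is None and shakes < max_shakes:
            shake()
            shakes += 1
            position = find_marker(marker)
        if position is None:
            return False
        grab(marker, position)
    return True


# Scripted stand-ins for the vision system and the arm (illustration only).
sightings = {
    "first": [None, None, (0.1, 0.2, 0.0)],
    "second": [None, (0.3, 0.2, 0.4)],
}
log: List[str] = []


def fake_find(marker: str) -> Optional[Point]:
    return sightings[marker].pop(0) if sightings[marker] else None


def fake_shake() -> None:
    log.append("shake")


def fake_grab(marker: str, position: Point) -> None:
    log.append(f"grab {marker}")


ok = grab_by_fiducials(fake_find, fake_shake, fake_grab)
print(ok, log)
```

Once both markers are held, the garment is in a known configuration and a fixed fold sequence can take over.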

Yes, I know this wouldn’t serve well as a solution for a hotel or commercial operation. It doesn’t have to fully solve every problem today, just be good enough to creep into the job. Doing even 20% of the typical housekeeping tasks would go a long way towards solving staffing issues.

This sounds like a very sensible idea. Start with the “easier,” high-margin niches like this, then work on the low-margin stuff later.

Thank me later,

But something like Magnetic bars on a whiteboard are your solution to this shifting fabric problem.