One of the challenges of diagnosing diseases is identifying them early. At this stage, symptoms may be vague, confusing, or difficult to identify. Early diagnosis is often tied to the best possible treatment outcomes, so there is plenty of incentive to improve early detection methods.

A new voice-based method of diagnosing disease could prove fruitful in this regard. It relies on machine learning techniques to detect when patients may be suffering from certain conditions.

Let Me Hear You Say /a/

Speech patterns and the overall quality of a patient’s voice have long been important diagnostic tools for physicians. They are particularly relevant to neurological conditions: if the brain or nervous system is not functioning properly, speech can be affected.

Researchers at the Royal Melbourne Institute of Technology (RMIT) developed a method to detect subtle signs of disease in a patient’s voice. The idea was to use machine learning algorithms to determine whether a patient’s pronunciation of certain sounds was indicative of illness. The primary goal was to detect Parkinson’s disease by sampling a patient speaking several common English sounds, and the method had to work despite the natural variation in voices from person to person. The researchers recruited 36 patients with Parkinson’s disease, along with 36 healthy volunteers.

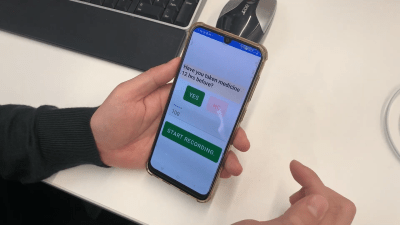

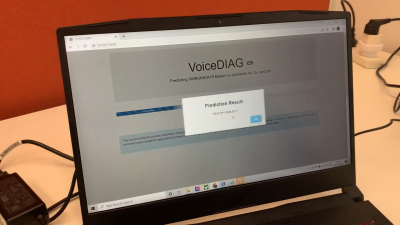

According to the published study, research participants were asked to pronounce three phonemes: /a/, /o/, and /m/. These are the sound in the middle of “car,” the “oh” sound, and the “mmmm” sound, respectively. Producing these phonemes involves the throat, mouth, and nasal passages. A machine learning algorithm was trained to distinguish how Parkinson’s patients and healthy volunteers produced these sounds. Because it was intended for use in a typical clinical setting, the algorithm was designed to work with audio captured on a regular smartphone with reasonable levels of background noise. The data was collected with an app that guided patients toward producing the right sounds by providing vocal samples of each target phoneme.
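The article doesn't describe the study's actual features or model, so the following is only a minimal sketch of the general idea of combining features from /a/, /o/, and /m/ into one vector per speaker. Everything in it is an assumption for illustration: the features (RMS energy and zero-crossing rate), the nearest-centroid classifier, and the function names `phoneme_features`, `combined_vector`, `centroid`, and `classify` are hypothetical stand-ins.

```python
import math
from typing import Dict, List, Sequence

# The three phonemes recorded in the Parkinson's study.
PHONEMES = ("a", "o", "m")


def phoneme_features(samples: Sequence[float]) -> List[float]:
    """Hypothetical per-recording features: [RMS energy, zero-crossing rate].

    Assumes the recording has at least two samples.
    """
    n = len(samples)
    rms = math.sqrt(sum(x * x for x in samples) / n)
    # Count sign changes between consecutive samples.
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))
    zcr = crossings / (n - 1)
    return [rms, zcr]


def combined_vector(recordings: Dict[str, Sequence[float]]) -> List[float]:
    """Concatenate the features of /a/, /o/ and /m/ (in that order) into one vector."""
    vector: List[float] = []
    for phoneme in PHONEMES:
        vector.extend(phoneme_features(recordings[phoneme]))
    return vector


def centroid(vectors: List[List[float]]) -> List[float]:
    """Element-wise mean of a group of feature vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def classify(vector: Sequence[float], centroids: Dict[str, List[float]]) -> str:
    """Return the label whose class centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(vector, centroids[label]))
```

In use, one would compute `combined_vector` for every labelled speaker, average each group with `centroid`, and pass a new speaker's vector to `classify`. A real system would use far richer acoustic features and a more capable model.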

In testing, the system identified patients with Parkinson’s disease 100% of the time. Combining features from all three phonemes proved to be the most reliable approach to detection. The study had limitations: among other things, testing was restricted to patients from one geographical area and used only one smartphone model. Nonetheless, the result suggests that it may be possible to build a diagnostic method for Parkinson’s disease that captures audio on readily available smartphone hardware and processes it in an app.
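With only 72 participants, it matters a great deal whether a 100% figure holds for people the model never saw during training. As a hedged illustration (hypothetical data and a 1-nearest-neighbour classifier, not the study's actual model or evaluation protocol), this sketch contrasts scoring a model on its own training speakers with leave-one-speaker-out evaluation, where each person is held out in turn and classified by a model built from everyone else.

```python
import math
from typing import List, Sequence


def nearest_label(x: Sequence[float], train_x: List[Sequence[float]], train_y: List[str]) -> str:
    """1-nearest-neighbour: return the label of the closest training vector."""
    best = min(range(len(train_x)), key=lambda i: math.dist(x, train_x[i]))
    return train_y[best]


def resubstitution_accuracy(xs: List[Sequence[float]], ys: List[str]) -> float:
    """Score the model on the same speakers it was 'trained' on.

    With 1-NN each vector's nearest neighbour is itself, so this is 1.0
    whenever all vectors are distinct, even if the labels are pure noise.
    """
    hits = sum(nearest_label(x, xs, ys) == y for x, y in zip(xs, ys))
    return hits / len(xs)


def leave_one_speaker_out_accuracy(xs: List[Sequence[float]], ys: List[str]) -> float:
    """Hold out each speaker in turn, train on everyone else, test on the held-out one."""
    hits = 0
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]
        train_y = ys[:i] + ys[i + 1:]
        hits += nearest_label(xs[i], train_x, train_y) == ys[i]
    return hits / len(xs)
```

Fed 72 random feature vectors with randomly assigned labels, `resubstitution_accuracy` returns 1.0 while `leave_one_speaker_out_accuracy` will typically land near chance. That gap is what separates a model that has memorized its training speakers from one that generalizes.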

The same techniques were later applied to diagnosing COVID-19 in a study in Indonesia. Here, the set of phonemes was expanded to include the /e/, /i/, and /u/ sounds in addition to /a/, /o/, and /m/. The study ran over 22 days and involved 40 patients hospitalized with COVID-19, with 48 healthy subjects as the control group. Features of the /i/ sound proved the most indicative when captured within three days of hospital admission. The method achieved 94% accuracy, which is all the more impressive given the low-tech setup: sound samples were captured at a sample rate of just 8 kHz to represent the capabilities of basic 2G and 3G phones.
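For a sense of what an 8 kHz sample rate preserves: a sampled signal can only represent frequencies up to half its sample rate (the Nyquist frequency), so for the COVID-19 recordings:

```latex
f_{\max} = \frac{f_s}{2} = \frac{8\,\text{kHz}}{2} = 4\,\text{kHz}
```

In other words, the analysis works only with the part of the voice below roughly 4 kHz, similar to the band carried by conventional narrowband telephone audio.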

The work suggests that a variety of diseases could potentially be diagnosed from telltale changes in a patient’s speech. Of course, more rigorous studies would be required before such methods become mainstream. And while vocal analysis may indicate disease, it is unlikely to become a standalone gold-standard diagnosis for most conditions. More direct, less subjective chemical tests are preferable to relying on the output of black-box machine learning systems. However, for difficult-to-diagnose diseases, a system that offers some indicative guidance can be crucial. Expect these techniques to become more refined and a major part of modern medicine going forward.

Did they ever test against people who weren’t in the training set? 32 samples is a super low sample size for training a neural net, so unless they showed that it could correctly identify people it wasn’t trained on, I’m more inclined to think the algorithm just “memorized” the training data :/

Agree. 32 samples is ridiculously small to draw any conclusion.

Yeah, I was initially crazy excited by this headline, but I’m having the same doubts you are. It seems just as likely that even regional accents could trip this up. Or people who spent the first 5 years in one part of a country, then the next 20 in another part, then moved to a third (completely wrecking any ability to use their current address to pick a region-specific dataset to compare against).

I guess as a “hey look, this might be theoretically possible” it’s kinda cool, but otherwise it smacks of an “oh man guys we’re one step closer to cold fusion” sort of headline. I mean, sure, maybe we are, but has anyone even come up with a reasonable guess as to how many steps might remain and how monumental they are, so we know if it’s “we’ve discovered quantum mechanics” progress or “our monks now use erasers to clean up mistakes rather than recopying entire pages” progress?

I agree, it’s interesting but it isn’t ready to run, it’s not even out of the trees.

Regional accents or speech patterns aren’t what they’re looking for. All the patient has to do is say AAAAAAAA and EEEEEE and IIIIIIIII for a few seconds. The app is recognizing the involuntary modifications and interruptions to the sound, caused by momentary tremors or other interruptions, which are side effects of the damage caused by the disease in the brain.

Think of it like a mechanic listening for a single failing cylinder in a V8. The mechanic doesn’t care if it’s a Ford or GM, or a 3.5L vs 5.7L; a dead cylinder makes a similar sort of sound in all four-stroke engines.

Also, each sample is recorded and presented to the algorithm at 8 kHz.

Good news! You taught a robot to recognize voices on the telephone!

My thoughts exactly. Overtraining is a common pitfall. I like to demonstrate this for students by training an ANN to predict stock market prices with near 100% accuracy based on historical data. Completely useless, zero predictive ability when faced with new data, though.

This is incredibly unsound science and shouldn’t be reported this way. The sample size is pitifully small and localised in both cases, with no evidence at all that the service can generalise to a wider population.

Those two studies are very poor quality, just indications that it is worth funding bigger and better work. However, the detection of neurodegeneration via voice analysis is not at all a novel idea. As for the COVID study, that one is very flawed, given that only a tiny fraction of those exposed to the virus will develop symptoms serious enough to warrant hospitalisation. There is also a large overlap between the covid-vulnerable and neurodegeneration cohorts; in fact, covid has been shown to accelerate dementia, etc. The problem there should be obvious, eh?

And when the patient is already in need of hospitalization, they probably sound obviously sick. At the very least the control group should have been people in hospital for other purposes, not fully healthy people. As is, this just detects really sick vs. healthy, which most humans can do also.

Most humans, and their pets, can detect sickness or difficulty in another person’s voice. This alone gives some credibility to the results of what I would call a feasibility study.

Since voiceprint technology is surprisingly accurate in identifying individuals, even in the background of a cell phone conversation, I personally believe this technology could easily be reliable and accurate, even though the data is somewhat flawed.

Analysis of historic government voiceprint data could make this a breakthrough diagnostic tool. I’m guessing the privacy rights of the deceased will prevent this from happening.

Nuff said, the Gub-Mit be watchin’. – UnklAdM

The paper about detecting COVID is even more questionable. It appears they only tested patients with COVID and healthy subjects. What about people with a common flu but not COVID? Assuming it even works at separating samples into the two categories, I guess all patients with symptoms similar to COVID would be automatically flagged as COVID…

To add to your comment; whaddabout COPD, asthma, RSV, cancer, or any lung related illnesses?

I guess if you have a hammer…..

causation vs correlation

Please do not run articles like these. It’s so riddled with methodological, medical, social and scientific problems that its only rightful place is in the trash bin.

You cannot diagnose any illness this way. It is simply impossible.

Also, you cannot even begin to take the data set seriously. Were people hospitalized with Covid-19, or because of it? What other illnesses did they have? How do you compensate for airway and vocal cord peculiarities or trauma? How do you deal with the base rate fallacy? I could go on and on.

Not only did they run it, but it got a prominent place in the article carousel just under the logo.

This website has an editorial problem that they deny: no guidelines, ethics, or rigor whatsoever.

The Parkinson’s study was done by a reputable institution, with an equal number of control subjects, on a disease that has very particular attributes. They used phonemes that are part of a huge number of dialects, isolated from speech by having the subjects make those very specific sounds, and they got it right 100% of the time. This is a perfectly sound (no pun intended) and, most importantly, reproducible study that can easily be peer reviewed… So go forth, internet randos: start your own medical institutions, get your own accreditations, host your own studies, and let’s see your results.

Nice that the RMIT team tried to make it compatible with 2G phones. Unfortunately our government disagree that this might be useful, having shut down the 2G network many years ago.

So it’s a bit like insisting that your new software project must also run on a VIC-20.

I’m pleased that the RMIT team didn’t just fling gigaflops at the problem, I’m just salty that the damn govt made my dumbphone obsolete!
