Life was simpler when everything your computer did was text-based. It is easy enough to shove data into one end of a pipe and take it out of the other. Sure, if the pipe extends across the network, you might have to call it a socket and take some special care. But how do you pipe all the data we care about these days? In particular, I found I wanted to transport audio from the output of one program to the input of another. Like most things in Linux, there are many ways you can get this done and — like most things in Linux — only some of those ways will work depending on your setup.

Why?

There are many reasons you might want to take an audio output and process it through a program that expects audio input. In my case, it was ham radio software. I’ve been working on making it possible to operate my station remotely. If all you want to do is talk, it is easy to find software that will connect you over the network.

However, if you want to do digital modes like PSK31, RTTY, or FT8, you may have a problem. The software that handles those modes expects audio from a soundcard and wants to send audio to a soundcard. But, in this case, the audio is coming from, and going to, another program.

Of course, one answer is to remote desktop into the computer directly connected to the radio. However, most remote desktop solutions aren’t made for high-fidelity and low-latency audio. Plus, it is nice to have apps running directly on your computer.

I’ll talk about how I’ve remoted my station in a future post, but for right now, just assume we want to get a program’s audio output into another program’s audio input.

Sound System Overview

Someone once said, “The nice thing about standards is there are so many of them.” This is true for Linux sound, too. The most common way to access a soundcard is via ALSA, the Advanced Linux Sound Architecture. There are other methods, but ALSA is more or less the lowest common denominator on most modern systems.

However, most modern systems add one or more layers so you can do things like redirect sound from the speakers to headphones or ship audio over the network.

For many years, the most common layer over ALSA was PulseAudio. These days, you see many distros moving to PipeWire.

PipeWire is newer and has a lot of features but perhaps the best one is that it is easy to set it up to look like PulseAudio. So software that understands PipeWire can use it. Programs that don’t understand it can pretend it is PulseAudio.

There are other systems, too, and they all interoperate in some way. While OSS is not as common as it once was, JACK is still found in certain applications. Many choices!

One Way

There are many ways you can accomplish what I was after. Since I am running PipeWire, I elected to use qpwgraph, which is a GUI that shows you all the sound devices on the system and lets you drag lines between them.

It is super powerful but also super cranky. As things change, it tends to want to redraw the “graph,” and it often does it in a strange and ugly way. If you name a block to help you remember what it is and then disconnect it, the name usually goes back to the default. But these are small problems, and you can work around them.

In theory, you should be able to just grab the output and “wire” it to the other program’s input. In fact, that works, but there is one small problem. Both PipeWire and PulseAudio show a source only while a program is making sound. When the sound stops, the source vanishes.

This makes it very hard to set up what I wanted. I wound up using a loopback device so that there was always something permanent for both the receiver and the transient sending device to connect to.

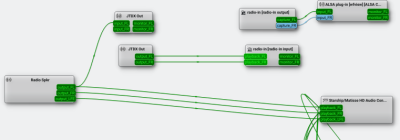

Here’s the graph I wound up with:

I omitted some of the devices and streams that didn’t matter, so it looks pretty simple. The box near the bottom right represents my main speakers. Note that the radio speaker device (far left) has outputs to the speaker and to the JTDX in box.

This lets me hear the audio from the radio and allows JTDX to decode the FT8 traffic. Sending is a little more complicated.

The radio-in boxes are the loopback device. You can see it hooked to the JTDX out box because when I took the screenshot, I was transmitting. If I were not transmitting, the out box would vanish, and only the pipe would be there.

Everything that goes to the pipe’s input also shows up as the pipe’s output and that’s connected directly to the radio input. I left that box marked with the default name instead of renaming it so you can see why it is worth renaming these boxes! If you hover over the box, you’ll see the full name which does have the application name in it.

That means JTDX has to be set to listen to and send to the streams in question. The radio software also has to be set to the correct input and output. Usually, setting them to Pulse will work, although you might have better luck with the actual pipe or sink/source name.

In order to make this work, though, I had to create the loopback device:

pw-loopback -n radio-in -m '[FL FR]' --capture-props='[media.class=Audio/Sink]' --playback-props='[media.class=Audio/Source]' &

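This creates a stereo loopback device. The capture side shows up as a sink that programs can play into, and the playback side shows up as a source that programs can record from. Neither side connects to anything by default. Sometimes, I only connect the left channels since that’s all I need, but you may need something different.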
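If you start the loopback from a login script, it is easy to end up with several copies. Here is a minimal sketch of a wrapper that starts it only once. It assumes pgrep is available and that matching on the command line is good enough to spot a running copy. The function names are made up for illustration.

```shell
#!/bin/sh
# Sketch: start the radio-in loopback unless one is already running.
# NODE_NAME matches the command above; the helper names are illustrative.
NODE_NAME="radio-in"

# Succeeds if a pw-loopback process was started with this node name.
loopback_running() {
    pgrep -f "pw-loopback -n $1" >/dev/null 2>&1
}

start_loopback() {
    if ! command -v pw-loopback >/dev/null 2>&1; then
        echo "pw-loopback not found; is PipeWire installed?" >&2
        return 1
    fi
    if loopback_running "$1"; then
        echo "loopback $1 already running"
        return 0
    fi
    # Same options as the one-liner above, split across lines.
    pw-loopback -n "$1" -m '[FL FR]' \
        --capture-props='[media.class=Audio/Sink]' \
        --playback-props='[media.class=Audio/Source]' &
    echo "started loopback $1 (pid $!)"
}

start_loopback "$NODE_NAME" || true
```

The device only lives as long as the pw-loopback process does, so killing that process removes the boxes from the graph.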

Other Ways

There are many ways to accomplish this, including using the pw-link utility or setting up special configurations. The PipeWire documentation has a page that covers most of the scenarios.
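As a rough sketch of the pw-link route, the script below lists the ports and then links one output to one input. The node and port names are placeholders, not names from a real system, so check them against what pw-link -o and pw-link -i print on your machine.

```shell
#!/bin/sh
# Sketch: make the connection from a script with pw-link instead of the GUI.
# Node and port names here are placeholders, not taken from a real system.

# pw-link addresses a port as "node:port".
port_name() { printf '%s:%s\n' "$1" "$2"; }

SRC=$(port_name "JTDX" "output_FL")          # hypothetical sender port
DST=$(port_name "radio-in" "playback_FL")    # hypothetical loopback input

if command -v pw-link >/dev/null 2>&1; then
    pw-link -o                  # list the output ports that exist right now
    pw-link -i                  # list the input ports
    # Arguments are the output port first, then the input port.
    pw-link "$SRC" "$DST" || echo "link failed; check names against the lists above" >&2
    pw-link -l                  # show the resulting links
else
    echo "pw-link not found" >&2
fi
```

Because a sending program's ports come and go, a script like this probably needs to run while that program is actually producing sound.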

You can also create this kind of virtual device and wiring with PulseAudio. If you need to do that, investigate the pactl command and use it to load the module-loopback module.
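A minimal sketch of the pactl approach might look like this. The source and sink names are placeholders, and the helper function is only there to show how the module arguments are put together. Pick real names from the two list commands.

```shell
#!/bin/sh
# Sketch: the PulseAudio equivalent, using pactl and module-loopback.
# The source and sink names are placeholders; use names from the list commands.
SOURCE="example_source.monitor"    # hypothetical source name
SINK="example_sink"                # hypothetical sink name

# Build the argument string for module-loopback.
loopback_args() { printf 'source=%s sink=%s\n' "$1" "$2"; }

if command -v pactl >/dev/null 2>&1; then
    pactl list short sources
    pactl list short sinks
    # load-module prints the new module's index on success.
    # The substitution is unquoted on purpose so it splits into two arguments.
    if ID=$(pactl load-module module-loopback $(loopback_args "$SOURCE" "$SINK")); then
        echo "loaded as module $ID; undo with: pactl unload-module $ID"
    else
        echo "load failed; check the source and sink names" >&2
    fi
else
    echo "pactl not found" >&2
fi
```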

It is even possible to use the snd-aloop kernel module to create loopback devices at the ALSA level. However, PipeWire seems to be the future, so unless you are on an older system, it is probably better to stick to that method.
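For the snd-aloop route, here is a small sketch of how the device names pair up. It assumes the module's usual layout, where audio played into a subdevice of device 0 on the Loopback card can be recorded from the same subdevice of device 1. The helper names are made up, and the modprobe step is only printed because it needs root.

```shell
#!/bin/sh
# Sketch: ALSA-only loopback with the snd-aloop kernel module.
# Loading a module needs root, so the modprobe step is only printed here.
echo "run as root: modprobe snd-aloop"

# Audio played into subdevice N of device 0 can be recorded
# from subdevice N of device 1 on the Loopback card.
loop_play_dev()    { echo "hw:Loopback,0,$1"; }
loop_capture_dev() { echo "hw:Loopback,1,$1"; }

echo "sender plays to:       $(loop_play_dev 0)"
echo "receiver records from: $(loop_capture_dev 0)"

# Once the module is loaded, the card shows up in the device list.
if command -v aplay >/dev/null 2>&1; then
    aplay -l 2>/dev/null | grep -i loopback || echo "no Loopback card yet" >&2
fi
```

You would then point the sending program at the first name and the receiving program at the second.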

Sound Off!

What’s your favorite way to route audio? Why do you do it? What will you do with it? I’ll have a post detailing how this works to allow remote access to a ham transceiver, although this is just a part of the equation. It would be easy enough to use something like this and socat to stream audio around the network in fun ways.

We’ve talked about PipeWire for audio and video before. Of course, connecting blocks for audio processing makes us want to do more GNU Radio.

I’m currently using jack and pipewire on Ubuntu to route audio from a Behringer XR18 into Ardour and back out to both the XR18 (to monitor mix) and to OBS. I combine the audio with a video stream provided from an iPhone 15 using BlackMagic Cam’s ability to provide clean video out over USB-C, through a USB-C to HDMI adapter to a class-compliant capture device.

At each gig, once cabling is complete, I use a script to set up the XR18 using alsa_in, start Ardour, OBS, qjackctl, and the XAir utility, and then restore a jack routing snapshot using aj_snapshot.

Is it temperamental? Is it overcomplicated? Are there off-the-shelf products that do this? Yes, I’m sure. But this is my hacky solution and I’m proud of it.

This allows me to stream mixed audio (through Ardour) along with video of my cover band to Facebook for those who can’t make the gig. It’s increased viewership and traffic to our Facebook page and allows my family to see me moonlighting as a musician. It’s been a great learning experience on what can be a fairly daunting topic for some: ALSA, jackd, and pipewire.

The next step will be setting up something that allows me to stream to both Facebook and Instagram without relying on something like Streamlabs.

I still don’t understand why people had to go beyond ALSA.

Because not all people used it, because of reasons, so they decided to make abstraction layers on top to deal with different configurations, and then abstraction layers on top of that to deal with the lack of functions of the previous layers.

I thought the original reason was the desire to move stream-mixing to userspace, as opposed to some combination of hardware, drivers and kernel code? But yeah, otherwise it seemed mostly fine.

Modern systems can do per-app volume control, which to me is the main driver behind the newer systems. There are some folks who really want to route audio through the network, and PulseAudio was designed for that sort of thing. The thing is, 99% of users don’t really need that feature.

Also, can ALSA work (smoothly) with Bluetooth audio? That may be another motivation behind the newer stuff.

A while back I wanted to pipe audio between different applications in a more complicated manner than what a normal volume control allows. I found a lot of the visual audio graph programs I tried just didn’t work right. Maybe they weren’t ready for Pipewire vs Jack? Or maybe they were incompatible with some other library? I don’t know but they were all visually just a mess.

What I found worked for me was one called Carla.

Life was simpler when we could redirect standard output of a program to /dev/dsp and vice versa.

[insert “I was there, Gandalf” meme here.]

Exactly! What happened to “everything is a file”? They also screwed up GPIO support that way.

Also “single purpose” has been killed off by the “swiss army knife”

I’d rather have proper media routing where I can inject my stuff between audio sources and output invisibly to any programs running.

Silly example use case: Some time ago I soldered a replacement headphone jack backwards and instead of resoldering it I installed EqualizerAPO with “Copy: L=R R=L” config :D

But that’s easy to solve. All we need is a simple /dev/dsp that a dedicated userspace application could route to a default ALSA/JACK/PulseAudio/PipeWire sink. We have userspace network devices, userspace block devices, userspace USB hosts, so why couldn’t we have a proper userspace OSS audio device for backward compatibility?

In the meantime one can pipe to aplay or from arecord. Probably PipeWire also has some userspace tools for that, but I barely moved on from OSS to ALSA mentally and I still have PulseAudio phase to go through ;-)

I’ve been wanting to figure out pretty much this solution! but didn’t get around to trying yet. My radio is an sbitx (sbitx.net): the core is a raspberry pi, so there are enough stopgap options that are allowing me to keep procrastinating, so far. I can ssh -Y to the radio and run all the digital mode software that would otherwise run on its too-small built-in touchscreen, on my desktop X server instead. The main SDR software has a local touch UI, and also a web UI for remote use. It even has (a buggy implementation of) FT8 built-in. So that’s how things tend to go nowadays… web UIs replace other technologies. But the fact that remote X11 connections are so useful (although Qt X11 implementation takes too much bandwidth!) is a good reminder of how wayland is going to be disappointing if X11 ever really dies. PipeWire was supposed to fix that too, at some point; but streaming video will probably be inferior, and it has encoding overhead.

I want to try using 9p: this means I need to refactor the SDR software to serve up its functionality as a remote-mountable filesystem, and then mount it on the machine I’m sitting in front of, and rewrite the whole UI to run there. I think it’s potentially a better architecture, but I don’t know if I will find the time, TBH. And it will not make it easier to interface to sound-card-oriented programs. So some sort of emulation will still be needed, I guess, or just keep running some of the client programs on the radio itself. And what about audio latency if I can figure out how to stream audio over 9p? Remains to be seen. FWIW the web audio implementation has latency too. When I’m streaming audio from the radio to the browser, I can see the signal on the waterfall for a second or two before I can hear it.

every now and then i think i’ll wind up with some pulseaudio sort of layer, maybe to watch a movie on my laptop and hear the soundtrack over some network-attached-speaker or something. but i hope not. i am hugely in favor of simplicity.

once my laptop audio stopped working and i expected the worst “oh no, hardware fault”. after a couple minutes of poking at it, i found out some apt dependency nightmare had pulled in pulseaudio. killall -9 pulseaudio and suddenly it worked again. pfew!

but i’m with the “everything is a file” crew. i made an ssh server app for android, and a really basic mp3 player app, and one day i realized i could hook the two together and suddenly “cat album/*.mp3 | ssh phone mp3player” became my actual network-attached-speaker. worked great until android security policy broke the cross-app execution that made it possible. sigh. the good days never last.

In my workspace, there’s a mini-PC connected to a TV on the wall that I use for media and the like. However, the stereo is located halfway across the room in the same rack as my server (and a bunch of other stuff). I use PulseAudio’s TCP capability to get the audio from here to there, along with the zeroconf module to simplify finding the sink from the source.

Sure, I could have run a cable. But I already had one. It’s called Ethernet ;)

VB-Audio.

Absolutely fantastic virtual audio mixer, inputs, outputs, and more.

They are almost entirely donationware, and have software ranging from [one virtual audio cable] to [your job is audio engineering at a recording studio].

The software is windows though…except…

They have an audio over network protocol, VB-Audio Network (VBAN), integrated into most of their software. And the protocol is open. Their documentation even gives examples of how to implement it. It also supports MIDI (instrument and control signals) and text along with audio.

There are multiple open source projects that implement the protocol on stuff from the esp8266, teensy, esp32, Mac, Linux, python, Android, etc.

And, it looks like there is a way to use VBAN with PipeWire directly.

You can do some wild stuff with VB-audio.

Right now I have 4 machines that mirror audio to my server, in addition to it being available on the local machine.

3 different microphone setups also go there.

I can pull that audio back down from anywhere on the network to process it and give it back, or listen to it locally.

I also have an ESP32 with a battery bank and a DAC that I can grab the audio on, to use with my headphones wirelessly.

Yes, I have it here as well, but I’m really not satisfied with the VBAN protocol. It should include something like FEC, as I’m constantly experiencing dropouts over UDP connections, even within my own LAN.

I thought I knew Jack but 2 identical Atom minis have gone deaf on their inputs using Rakarack on Ubuntu.

If by some chance you missed it, NCD, makers of standalone X11 terminals, felt they needed to solve this problem 30 years ago and wrote the “network audio system” (sourceforge.net/projects/nas).