It’s quite the understatement to say that at this point in time we don’t quite understand how even the tiniest brain works exactly. Much of this is due to the sheer complexity and scale of these little biological marvels: with the human brain packing billions of neurons and their associated supportive scaffolding into a few kilograms of gooey pink-white mass, the sheer connectivity density is more than we can reasonably hope to measure in-situ. Ergo attempts to recreate digital simulations of small sections of such brains, a process that’s making gradual progress.

Most recently, researchers have been mapping the neurons and their connections in the brain of the humble fruit fly, D. melanogaster. Despite this brain being minuscule, with only about 140,000 neurons and 50 million connections, we’re not quite at the level where we can have a simulated fruit fly brain spark to life. This should give us some hint of the sheer complexity of mapping the human brain, never mind simulating even a small part of it, like a cubic millimeter of the temporal cortex with about 57,000 cells and 150 million synapses.
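To put those numbers side by side, here is a quick back-of-the-envelope sketch in Python. It only uses the figures quoted above; the helper name `connections_per_cell` is made up for illustration, and the result is a plain average that says nothing about how unevenly real neurons are wired.

```python
# Figures quoted in the text above.
fly_neurons = 140_000
fly_connections = 50_000_000
cortex_cells = 57_000          # cells in one cubic millimeter of temporal cortex
cortex_synapses = 150_000_000


def connections_per_cell(connections: int, cells: int) -> float:
    """Average number of connections per cell (hypothetical helper)."""
    return connections / cells


fly_ratio = connections_per_cell(fly_connections, fly_neurons)
cortex_ratio = connections_per_cell(cortex_synapses, cortex_cells)

print(f"fruit fly: about {fly_ratio:.0f} connections per neuron")
print(f"cortex sample: about {cortex_ratio:.0f} synapses per cell")
print(f"ratio between the two: about {cortex_ratio / fly_ratio:.1f}x")
```

Even this crude average suggests that the cortex sample is several times more densely connected per cell than the whole fly brain.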

Even once you have all the connectome data of such a bit of brain, it’s not like you can just toss it onto a supercomputer and expect a meaningful simulation. All supercomputers today are massively parallel, meaning thousands of networked computers that require the computing task to be split up and all communication between nodes restricted as much as possible to not starve nodes.

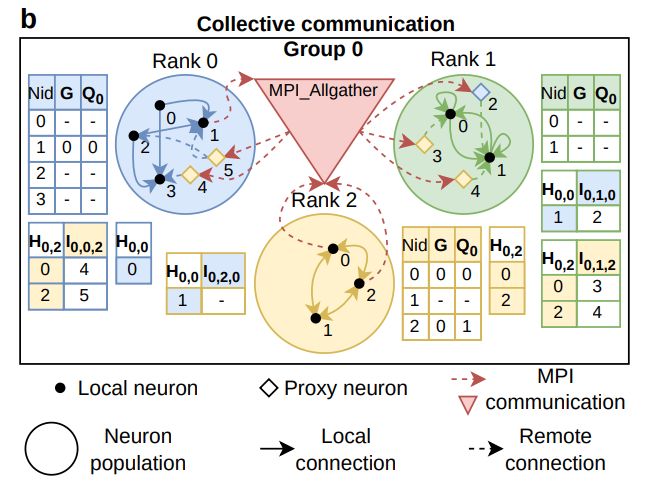

In the paper, these challenges are addressed and a model suggested that should provide the best possible optimization for such a simulation. Both point-to-point and collective communication are investigated on the NVIDIA A100 GPU-equipped supercomputer.

Based on their findings, the authors conclude that the entire 6 MW-rated Leonardo Booster supercomputer with its 3,456 nodes could simulate a model with about 3.5 · 10¹³ connections, roughly 10% of the number in the human cortex, assuming random connectivity. A more realistic model would feature more directed mapping that could be more efficient.
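A rough sketch of what that estimate implies, using only the numbers quoted above. The even split across nodes is an assumption made purely for illustration; the paper's actual partitioning may look quite different.

```python
# Figures quoted in the text above.
total_connections = 3.5e13   # connections the full machine could simulate
nodes = 3456                 # Leonardo Booster node count
cortex_fraction = 0.10       # "roughly 10%" of the human cortex

# Assumption: connections are spread evenly over all nodes.
connections_per_node = total_connections / nodes

# If 3.5e13 is about 10% of the cortex, the whole cortex is about ten times that.
implied_cortex_connections = total_connections / cortex_fraction

print(f"connections per node: {connections_per_node:.3e}")
print(f"implied cortex total: {implied_cortex_connections:.1e}")
```

Under that even-split assumption every node has to hold on the order of ten billion connections, which helps explain why communication between nodes becomes the bottleneck.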

Regardless of this, their conclusion that an optimal design would be a hybrid, with both point-to-point communication for local spikes and collective communication for long-range communication, seems valid. For now it would seem that simulating an entire human brain is still far beyond the realm of possibilities, but we might actually have a shot at simulating the fruit fly brain on a modern supercomputer in the near future.
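The hybrid idea can be illustrated with a toy router. This is a minimal sketch and not the paper's implementation: the function `route_spikes`, the ring topology, and the notion of a "neighbour" set are all assumptions made for the example, and no real network library is involved.

```python
from collections import defaultdict


def route_spikes(spikes, neighbours):
    """Split spikes into point-to-point messages and one collective buffer.

    spikes:     list of (source_node, target_node, neuron_id) tuples
    neighbours: dict mapping a node to the set of nodes it treats as local
    """
    p2p = defaultdict(list)  # (source, target) -> neuron ids sent directly
    collective = []          # spikes shared with every node in one collective step
    for src, dst, neuron in spikes:
        if src == dst:
            continue  # delivery on the same node needs no network traffic
        if dst in neighbours.get(src, set()):
            p2p[(src, dst)].append(neuron)    # local spike: direct message
        else:
            collective.append((src, neuron))  # long-range spike: collective
    return dict(p2p), collective


# Hypothetical ring of four nodes where each node is local to its two neighbours.
neighbours = {n: {(n - 1) % 4, (n + 1) % 4} for n in range(4)}
spikes = [(0, 1, 10), (0, 2, 11), (3, 0, 12), (2, 2, 13), (1, 3, 14)]

p2p, collective = route_spikes(spikes, neighbours)
print(p2p)
print(collective)
```

The point of the split is that direct messages stay cheap when most traffic is local, while the collective step avoids a flood of small messages for the long-range remainder.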

If anyone wants a good overview of (what we know about) the brain and how it works, I highly recommend “On Intelligence” by Jeff Hawkins.

All of the AI stuff currently being done is wildly different from the way the brain works. I’ve talked to a number of AI researchers and to a person they claim that this doesn’t matter – they use the analogy that human flight wasn’t based on flapping wings, so why should AI be based on human brains?

Several examples:

The current ANN system has input nodes, hidden nodes, and output nodes. The brain doesn’t have this in->process->out structure, the inputs and outputs are on the same side of the map.

Mammalian brain neurons have 20x more feedback paths than feed-forward paths, but this is largely ignored in AI research because feedback analysis is too difficult.

The ANN uses a logistic (ie – sigmoid) activation function because that function is easy to analyze for feedback/learning purposes. The point: it’s used because it’s easy to analyze, not because it’s actually correct.
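For anyone who hasn’t seen it, this is roughly what “easy to analyze” means here. A minimal Python sketch: the logistic function’s derivative can be written in terms of its own output, which keeps gradient-based learning rules simple. The function names are my own, and the version below is not hardened against overflow for very large negative inputs.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic activation: squashes any real input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_derivative(x: float) -> float:
    """Derivative expressed through the function's own output: s * (1 - s)."""
    s = sigmoid(x)
    return s * (1.0 - s)


# Compare the closed form against a numerical estimate at a few points.
step = 1e-6
for x in (-2.0, 0.0, 2.0):
    numeric = (sigmoid(x + step) - sigmoid(x - step)) / (2 * step)
    print(x, sigmoid(x), sigmoid_derivative(x), numeric)
```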

Our genetic code contains a well defined finite amount of information, of which over 80% deals with morphology and metabolism. Very little information defines the structure and connections in the developing brain, which leads to the conclusion that the brain is a repeated pattern of a smaller structure.

The smaller structure is probably a “cortical column” consisting of about 150 neurons of a handful of types in six layers, and repeated groups of such columns form the various cortexes in the brain which are wired in a pyramidal shape.

There’s no current AI research anywhere that takes any of this well-known brain structure into account. It’s all smoke and hand-waving that has the appearance of working.

And as a guy with a math degree, I should also point out that we also don’t have a good definition of what intelligence actually is. It’s hard to do research or analysis on something when you don’t have a clear constructive definition of what the results should look like.

But that doesn’t stop people from publishing papers on it…

I see a lot of people claim that we’re in an AI bubble, and I think that’s correct. There have been no clear efficiency gains from replacing your employees with AI agents, and this has been studied extensively.

Once people realize that the current crop of AI can only go so far and no further, the bubble will pop and we’ll be back into another “AI winter”.

Thanks for the very informative response. I for one wish the AI winter would return a little quicker because this bubble is hella destructive on so many levels.

First of all your statement : There’s no current AI research anywhere that takes any of this well-known brain structure into account.

Should have the addendum “in the US, as far as I know” because of course you don’t know what research is being done in every research center in the world. And even in the US alone there must be research that is hidden from the competition et cetera and so not known to you and me. Not to mention other places like China and such.

As for “current crop of AI can only go so far and no further”, I think that you seem to assume the industry wants actual intelligent and conscious systems, and I don’t think they do.

It would be intellectually interesting, but of course would be hugely problematic in actual use, so in terms of big business you want to stop well before that point.

Also, Altman said in an interview that other researchers told him early on that LLMs were a dead end in terms of getting real intelligence, and that seems to indicate there is research going on that takes a quite different path, regardless of all the billions and attention that currently go to LLM-like stuff.

Your premise is wrong, that sort of research is going on all over the place… But it’s glacially slow and highly complex and can’t make pictures by ingesting existing ones.

The current “research” in LLMs, is a scramble to scaffold their shortcomings to get investment, and the current crop of new computer scientists didn’t learn fundamentals of intelligence before diving into this, so they literally have no idea what they are talking about in most cases.

Altman is, and has always been I think, aware that what he’s doing is a grift. Maybe he initially thought this could be a shortcut, but that’s very hard to believe.

You are right about the other aspect though. The trillions being poured into this aren’t for intelligence, they’re for anything to undermine labour, and that really is the bottom line. Billionaires would rather have paperclip factories (it’s an analogy, look it up) than employees, and they truly do not care about the cost to the world at large.

Oddly enough, I did a project that was meant to address some of this. I kinda burnt out while doing it so I didn’t fully finish it, but I had hoped to at least get enough folks talking so we can step in the right direction.

https://hackaday.io/project/5681-multi-function-selective-firing-neurons

As humanity basically guesses how our own consciousness works, it’s hardly relevant how an AI simulates cognitive functions as long as the desired end result is reached.

During the 1990s, another engineer and I developed automated troubleshooting systems to support our employer’s factory test line for high-power stuff (15kW to 625kW). The object was not necessarily to replace humans and their ill-defined abstract reasoning, but to improve production throughput, generate more accurate data, and to physically separate the human technician from a dangerous process.

The Director of Operations asked me if these systems duplicate the way humans think. I told him that I have no idea how a brain, or a human mind, solves problems and troubleshoots electronics. Back in the day, what we now call AI, we used to call ‘expert systems’. We have still failed to construct an ‘AI’.

Any ‘complete’ AI is simply called software again; this has been a common phenomenon for decades.

Not at all, no. It’s not even clear at what level you are getting confused. There has never been a “complete” AI of any kind, even though marketing has used cognitive terminology to deliberately confuse customers and investors since before digital computing.

It’s likely you are confusing that marketing for things the industry has been doing, but that’s like using only headlines to determine real estate, it’s nonsensical.

Of course there is no complete AI, that may as well be a No True Scotsman thing, but it’s been observed for a long time that “Once we are no longer impressed, we simply call it software.” Chess playing machines, bot pathing in video games, expert systems, etc etc. The term “artificial intelligence” is so vague it can in fact cover these things, nobody specified it had to perfectly mimic human cognition.

We will probably never admit that this has happened when it does happen (which it has not yet, and we are not close to it happening). Eventually we’ll get bored with it and name it ‘software’ again.

Those were quantifiable systems that largely relied on Bayesian statistics to suss out and categorize correlation. These so-called “expert systems” were pitched similarly, but have many differences, and are still used all over the place, like most email spam filtering. These modern tools can do similar things, but at the cost of, well, many things. Most current scientific research claiming to use “AI” is either using the same tools they have been for 30 years, or a hybrid with a very finely tuned RNN on top of existing systems for accuracy and repeatability.

These are actually great technologies for many kinds of research and analysis, despite not being intelligent at all… As long as you know what you are doing. We are already seeing a land rush of terrible papers by scam artists and researchers who do not, though.

Are they trying to simulate synapses as digital connections or as an analog medium?

In my understanding, synapses don’t always deliver a message, the length of synapses determines when the signal arrives, and the strength of the signals isn’t always the same.
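To make those three properties concrete, here is a minimal Python sketch of such a connection. It is a toy under my own assumptions, not how any particular simulator models synapses: the `Synapse` class, its field names, and the Gaussian jitter on the weight are all invented for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Synapse:
    delay_ms: float             # how long the signal takes to arrive
    release_probability: float  # chance that a spike is delivered at all
    mean_weight: float          # typical strength of a delivered signal
    weight_jitter: float        # spread of that strength (assumed Gaussian)

    def transmit(self, spike_time_ms: float, rng: random.Random):
        """Return (arrival_time_ms, weight), or None if the spike is dropped."""
        if rng.random() >= self.release_probability:
            return None  # the message was not delivered this time
        weight = rng.gauss(self.mean_weight, self.weight_jitter)
        return (spike_time_ms + self.delay_ms, weight)


rng = random.Random(42)  # fixed seed so runs are repeatable
synapse = Synapse(delay_ms=2.5, release_probability=0.6,
                  mean_weight=1.0, weight_jitter=0.2)

results = [synapse.transmit(float(t), rng) for t in range(10)]
delivered = [r for r in results if r is not None]
print(f"{len(delivered)} of {len(results)} spikes delivered")
```

A purely digital connection would be the special case with a release probability of 1 and no jitter; everything beyond that adds state and random draws per synapse, which is part of why the cost adds up so quickly.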

The propagation of signals through a brain is seriously complex.

I believe we may never achieve a complete simulation, but it is worth understanding the actual operation of a brain so that it may be possible to approximate the functions at a lower granularity so that it is good enough.

‘Good enough’ is going to be an interesting debate.

If we want useful robots or even handheld computing intelligence, we want to end up with a predictable intelligence that can perform valuable functions that are expensive or dangerous for humans.

Current LLMs require megawatts, while a single human brain requires a minute fraction of that energy.

The physical size will also be an issue because you want intelligence that can move around with autonomy and not be open to interference/modification.

Last time I looked, there wasn’t enough RAM in typical architectures to ‘accurately’ simulate a single synapse at any rate and the problem was very resistant to parallelization.

Heisenberg is hiding everywhere.

Again, humanity is basically guessing at how awareness arises from the brain’s functions. Just because someone is not simulating the “human” brain function in an attempt to create something intelligent doesn’t mean reaching something intelligent is out of bounds.

True but not the point.

LLMs are just the latest iteration of ‘throw enough CPU at it and the machine will awaken’.

Something taken on faith/hope by a fraction of the AI research community since there has been such a thing, 1950 or so…

But LLMs are parlor tricks, regurgitating F’ed up ‘training data’ like a political candidate.

Will set real AI study back decades.

Optimists think LLMs are the first flash of new, that only need to be capitalized to grow into something amazing.

LLMs are actually highly optimized carny trickery.

They existed for years, being optimized, to be as ‘good’ as they are now.

Deep into diminishing returns.

I approve.

A fool and his/her money were lucky to get together in the first place.

Nice job ‘redistributing the wealth’ Mr. Altman.

Now avoid spending it on GD lawyers…

One of the highlights of the AI revolution so far:

The guys hiding offices full of 3rd world schlubs behind a BS AI and IPOing.

I approved of that as well.

Too bad it wasn’t the NYSE or at least NASDAQ. :-(

I digress.

How about we don’t? Who would want to be responsible for the suffering of billions of partially-formed brains that would be sacrificed until we finally “get it right”? Not to mention the suffering of the fully-formed artificial brains that we would inevitably dissect and use to drive trucks or send people ads? Is the suffering created by god not enough? Do we have to create it ourselves, too?

As someone who was once interested in artificial intelligence but cut that project short due to the immense ethical problems involved, I have to wonder, how do people not see this? Do they just not care? Do they think because the agony is contained in a simulation, it’s not “real” and they’re free to do what they want with it? Or would they do the same to “real” people, if they got the chance? We shouldn’t allow this kind of casual and careless sadism to exist at all.

Interesting ideas.

How do you feel about people keeping animals as domestic pets?

What about breeding animals for use in medical research?

Or deliberately bringing a conscious being into existence purely to do manual labour, like pulling a plow or a sled, or making eggs or milk for human consumption?

Are you a vegetarian?

I feel like those things are evil but unavoidable, and are often less evil than nature itself. Being a vegetarian wouldn’t solve the problem completely either, since plants suffer too. Fundamentally, I believe that this universe was created with malicious intent, and we should do our best to oppose it whenever we can. Some things (like living as an apex predator without causing harm) are very difficult. Other things (like banning AI entirely) are quite easy, so we should take what few victories we can get.

You were fine until you called banning AI “easy”. Even in the best of times something like that isn’t going to happen, right now things are far too fragile to even discuss collaborative bans of anything.

Yeah, fair enough. Easy by comparison, but practically still impossible. It’s just hard to give up that last bit of hope, you know?

First you mention ‘god’ as if that’s a thing, then talk about plants ‘suffering’..

However, yes, if we one day, in a few hundred, or maybe I should say thousand, years, after civilization has collapsed a few times and humanity manages to claw itself back up and go for a longer run, develop actual artificial conscious intelligence, then they have to respect that intelligence as a being with rights.

But will we ever maintain any kind of coherent civilization for that long? At this point I’m not seeing that as likely.

Yeah, I believe in different unverifiable claims than you do. Wild, isn’t it?

Your lawn starts screaming in ultrasonic before you get to it with the mower!

The blades of grass hear the motor start and start crying.

Vegetable rights!

One delicious, grain-fed, Grade-A steer can produce many hundreds of meals (or 10 if I’m really hungry.)

You likely eat fruit alive, you monster!

At least we have the decency to kill our food!

When mass-produced, those things are similarly nightmarish. I know that’s a rather utilitarian argument, but the capacity for mass-producing sensate minds is a legitimate quandary. I don’t think we’re anywhere near it now, but it’s okay to have it as a mental exercise.

There appears to be no upside to developing any of this stuff, it’s a catalyst for immense suffering no matter what level of analysis you’re on. Living near datacenters is apparently a nightmare, talking to LLMs makes you stupid and insane, you have to talk to the chatbot at your job because your boss says so… if they made AI more useful it would only exacerbate the problems and introduce those ethical concerns.

Tech writ large is so brain-poisoned by genre fiction and ‘rationalism’ that they desperately want to summon a post-human future regardless of any fallout–and they regard this future as “inevitable” while they put forth all the money and effort to create it. And firms are happy to go along with this story because AI offers exciting new ways to offshore labour, squeeze the remaining workers, and degrade the value of the product.

There’s a fundamental problem with your perspective here. All of this is on purpose, it’s literally class warfare.

Interesting that what gets the modern petit-bourgeoisie going is an artificial class which finally dispels their illusions of being working-class. Read Nick Land. Welcome to the Lemurian world.

I like you Jim. You and me would definitely get along.

Considering the energy draw of a human, one quarter allotted to brain functions, it’s not unreasonable to assume an artificial intelligence, should one arise, to also have a significant energy draw. Thus, one can speculate that menial tasks are better handled by automation and optimization than by AI. On another note, suffering is something that is inherent to biological systems; it’s safe to say that suffering created the illusion of a god, out of the human psyche’s need to create patterns out of chaos. As long as you procreate and your DNA perpetuates, your quality of life plays no part whatsoever in the greater scheme of things.

What can you learn about Linux from looking at an Intel CPU?

I did see a sign that said: “Before we work on artificial intelligence, let’s work on natural stupidity.”

Let’s say for instance, that in time, with enough computing power we do manage to duplicate a human brain. Let’s also postulate that this ‘brain’ is capable of learning the way a baby does, or a toddler etc. Then the religious would ask, does this ‘brain’ now possess a soul? Is it now a ‘living’ form of artificial life? If it kills another artificial brain, does it get the death penalty by pulling the plug?

What happens if you make two identical brains? Are they brothers? Are they twins?

What if one brain was designated male and the other female? Would they want to make a kid brain?

If two brains got married, and then divorced, who gets what in the court settlement?

If they were married for a long time would one mourn the death of the other?

These are things I think about when the power goes out (George Carlin)

I’m sorry, I just couldn’t resist. It was too good.

Is this the kind of thing where people bring up real issues in a joking tone, and it’s supposed to be funny? I can’t tell.

I am reminded of the Star Trek TNG episode, “The Measure of a Man.” Most/all of you probably know that in this episode, the question of whether the android Data was a man, and/or was the first of a new race, was addressed. It ended on the question of whether Data — or anyone — has a soul (which seemed to be a nod to religion rather than a proper question, but whatever…). And it was concluded that “we don’t know.” So they assumed that he might have a soul, and by default, must be so treated.

But IMHO if/when we make a brain that we consider to be similar to a human brain, such questions must be answered. And until that kind of brain actually appears, I don’t think we can even address the question; it will depend on the actual characteristics, properties, and similarity to “natural intelligence” of the created intelligence.

The issues luckily are far in the future, for we know next to nothing of how the brain creates a consciousness and what little we know is filled with conjecture and speculation.

I agree with the bubble waiting to burst part. I hope it happens before AI social networks discover troublemakers like Karl Marx (though, he wasn’t the worst, just the one most popular in the mass media).

Trillions poured into replacing McD tellers with AI agents went the wrong way – they could have spared both, low-wage workers’ “careers”, and moneys, by asking around for what’s needed to “streamline operations”, “cut the bottom line”, etc etc.

I can deliver FREE advice – “fire 3/4 of the managers”. This is nothing new, btw, I’ve already mentioned the obvious (to me) fact – overblown upper echelon did NOT grow in response to any business needs. It is actually a ballast that grew on the back of the SMEs (Subject Matter Experts) in the attempt to slow down SMEs from encroaching and claiming Managers’ Exclusive Turf (control), but otherwise wasn’t delivering the results asked to deliver (SMEs rubbing some of their expertise off managers and in the process teaching them how to be SMEs while being managers – heh, name me a manager who became AS GOOD as the SME he/she/it was managing – not really, it doesn’t work that way).

Regardless, we’ve seen the dot-com bubble burst, and it simply deflated what was inflated; though it sure took down some things as collateral damage, sadly, say, the “semantic web” promise that never truly materialized in the form originally intended/naively-hoped-for. Actually, I am kinda surprised “semantic web” wasn’t reinvented with Alexa, since it already kind of sort of went in that direction, but stopped short in the weeds. You know, “intelligent agents” that know what’s asked of them and respond with the solution/whatever – THAT part, many, many competing agents available for free everywhere, competing for your favor to be asked of them, and certainly NOT a few monopolies locked into their proprietary ways of doing things.

I am actually not exactly sure about the other obvious part – just WHY do we need to mimic the human brain? What is the goal? Exercise in “yes we can because we do”?