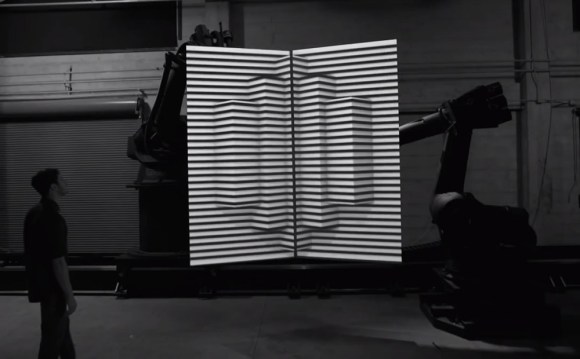

Take three industrial robots, two 4’ x 8’ canvases, and several powerful video projectors. Depending on who is doing the robot programming, you may end up with a lot of broken glass and splinters, or you may end up with The Box. The latest video released by The Creators Project, The Box features industrial robots and projection mapping. We recently featured Disarm from the same channel.

The Box is one of those cases of taking multiple existing technologies and putting them together with breathtaking results. We can’t help but think of the possibilities of systems such as CastAR while watching the video. The robots move two large canvases while projectors display a series of 3D images on them. A third robot moves the camera.

In the behind-the-scenes video, the creators revealed that the robots are programmed using a Maya plugin. The plugin allowed them to synchronize the robots’ movements with the animation. The entire video is a complex choreographed dance – even the position of the actor was pre-programmed into Maya.
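We don’t know how the plugin works internally, but the general idea of driving motion and animation from one shared timeline is easy to sketch. The snippet below is our own illustration, not the creators’ code: the keyframe data, the 24 fps rate, and the names `sample` and `frame_state` are all assumptions made up for the example.

```python
from bisect import bisect_right

# Hypothetical keyframes: (time in seconds, canvas position x/y/z in metres).
canvas_track = [
    (0.0, (0.0, 0.0, 2.0)),
    (2.0, (1.0, 0.0, 2.0)),
    (4.0, (1.0, 0.5, 3.0)),
]
FPS = 24  # assumed animation frame rate


def sample(keyframes, t):
    """Linearly interpolate a sorted list of (time, value-tuple) keyframes at time t."""
    times = [k[0] for k in keyframes]
    if t <= times[0]:
        return keyframes[0][1]
    if t >= times[-1]:
        return keyframes[-1][1]
    i = bisect_right(times, t)  # index of the first keyframe strictly after t
    (t0, v0), (t1, v1) = keyframes[i - 1], keyframes[i]
    u = (t - t0) / (t1 - t0)
    return tuple(a + u * (b - a) for a, b in zip(v0, v1))


def frame_state(frame):
    """Return the canvas pose that belongs to a given animation frame."""
    t = frame / FPS
    return {"frame": frame, "time": t, "canvas_pos": sample(canvas_track, t)}


if __name__ == "__main__":
    for frame in (0, 24, 48, 96):
        print(frame_state(frame))
```

Because the projected frame and the canvas pose are both looked up from the same clock, the two cannot drift apart, which is the property a pre-programmed performance like this one depends on.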

The actor and robots demonstrate several of the principles of magic: transformation, levitation, intersection, teleportation, and escape. All build up to the famous [Arthur C. Clarke] quote: “Any sufficiently advanced technology is indistinguishable from magic.”

While we loved the video, we can’t help but think of some changes. What if we didn’t have such repeatable robots – or robots at all? [Johnny Chung Lee] was showing off his foldable displays tracked live by Wiimote back in early 2007. Using [Johnny’s] system, even humans would be accurate enough to handle the canvases.
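For anyone tempted to try the tracked approach, the core math is a planar homography: given the four tracked corners of a canvas in projector pixels, compute the 3x3 matrix that warps the source image onto them. What follows is a minimal pure-Python sketch of that standard technique, not [Johnny’s] implementation; the corner coordinates and the function names are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a*x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate corner configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= f * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def homography(src, dst):
    """Return the 3x3 matrix mapping four src points onto four dst points (h33 fixed to 1)."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def warp(h, x, y):
    """Apply homography h to the point (x, y), including the perspective divide."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


# Source image corners as a unit square; tracked corners are made-up projector pixels.
image_corners = [(0, 0), (1, 0), (1, 1), (0, 1)]
tracked_corners = [(120, 80), (520, 110), (500, 400), (100, 360)]
H = homography(image_corners, tracked_corners)

if __name__ == "__main__":
    print(warp(H, 0.5, 0.5))  # where the centre of the image lands on the canvas
```

In a live system the tracked corners would be refreshed every frame and the matrix recomputed, so the image stays glued to the canvas however a human carries it.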

[Thanks Doug and Mark!]

That’s sweet. I wish I had the job of a graphics designer that paid well and would enable me to do some interesting things from time to time.

WOW! Ok, this is now officially my second favourite box!

She might be upset if you made this your first.

Heh, and a corollary to Clarke’s quote that applies here:

“Any sufficiently advanced technology is indistinguishable from a rigged demo.”

— Andy Finkel

> Even the position of the actor was pre-programmed into Maya.

Maya crashes and actor goes splat?

Any sufficiently advanced magic is indistinguishable from technology.

“Any sufficiently analyzed magic is indistinguishable from science.”

— Agatha Heterodyne

probably the best video I’ve ever seen…

They seem to have gone to great effort to brand it only as “Box” and not _THE_ Box… just FYI to the editor.

Can someone tell me what is so great about this?

Sure, it’s kinda pretty to look at. What I don’t understand is how this represents any kind of technological achievement at all. I’m not even sure how it’s considered a “hack”, all the equipment is obviously top-tier and it’s not like they cobbled together something in a garage. The projection planes are attached to high precision robots, and the camera is attached to a high precision robot. Everything is pre-planned and pre-rigged in the computer (I know for a fact that the majority of their fancy motion graphics were done in Cinema 4D, and since that stuff was exported into Maya it’s all statically computed).

There is absolutely nothing realtime about any of it, and I’m struggling to find out what the big deal is. It seems extremely generic when you consider the precision of the equipment involved.

Now, had they invented a realtime mapping system where actors could move the projection planes around and the camera was held by an actual human (and tracked, which would then update the projection plane perspective accordingly), I’d be all “OMFG THAT IS SO RAD11111”. But it’s not. For all intents and purposes, all they did was whack play and stand back (except for the dude interacting with the stuff; god, I would not want to risk my life around those robots on the whim of Maya).

What am I missing?

> What am I missing?

It’s not that it’s doing anything new, or anything that can be recreated directly on a humbler budget, but it does a good job of pulling together several concepts that have been covered on their own before into a single slick demo.

It’s a bit like a magic trick – there’s no “magic”, it’s just sleight of hand or distraction, but when it’s done well, it looks great, and I take the HaD post as an acknowledgement of that. As they say, there are ways it could be improved (to potentially open it up to smaller budgets), such as using tricks that [Johnny Chung Lee] demonstrated some time ago.

A lot like other HaD posts of late, we can either point at posts and deride them for “not a hack” (large budget, off-the-shelf solution, no details, etc.) or use them as a point of inspiration, a starting point for discussing ideas (for ‘real’ hacks).

Okay, this HaD post is certainly not a hack and nothing new but it is a good demo of what can be done with today’s technology when it’s all pulled together (with a big budget) – let’s aspire to recreate it!

Sheldon – Thanks! The idea of using [Johnny Chung Lee’s] work to replicate The Box is exactly what I was getting at. If a person (or hackerspace) decided to re-create this with real time processing and people in place of robots, you’d definitely see it here on HackADay.

I’m not your babysitter. Maybe you should spend less time on comments.

What this shows is how easily our interpretation of reality can be manipulated. An extremely interesting video. Thx Hackaday for showcasing it. The future of our living rooms will soon be quite similar to this. I could see a version of this that used motion tracking to adjust the projection based on the viewer’s head / eye position.

Oh golly! I hope you’re wrong! I couldn’t stand my telly being waved all over the place while I’m trying to watch M*A*S*H re-runs!!

I don’t know how anyone can gripe about this….that looked frickn awesome…