We should all be familiar with TV ambient lighting systems such as Philips’ Ambilight, a ring of LEDs around the periphery of a TV that extends the colors at the edge of the screen to the surrounding lighting. [Shiva Rajagopal] was inspired by his tutor to look at the mechanics of generating a more accurate color representation from video frames, and produced a project using an FPGA to perform the task in real time. It’s not an Ambilight clone; instead, it is intended to produce as accurate a color representation as possible to give the impression of a TV being on for security purposes in an otherwise empty house.

The concern was that simply averaging the pixel color values would deliver a color, but would not necessarily deliver the same color that a human eye would perceive. He goes into detail about the difference between RGB and HSL color spaces, and arrives at an equation that gives an importance rating to each pixel taking into account its saturation and thus how much the human eye perceives it. As a result, he can derive his final overall color by looking at these important pixels rather than the too-dark or too-saturated pixels whose color the user’s eye will not register.
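To make the idea concrete, here is a minimal Python sketch of importance-weighted averaging. It is not the author's equation (that is in his write-up, and his implementation runs on the FPGA); the weight used here, saturation multiplied by a term that peaks at mid lightness, is an assumption chosen only for illustration, and the names `pixel_weight` and `weighted_average_color` are hypothetical.

```python
import colorsys


def pixel_weight(r, g, b):
    """Hypothetical importance weight for one pixel (components in 0..1).

    Assumption: weight = saturation * (1 - |2 * lightness - 1|), which is
    the HSL chroma. Greys, black and white all score zero.
    """
    _, lightness, saturation = colorsys.rgb_to_hls(r, g, b)
    lightness_term = 1.0 - abs(2.0 * lightness - 1.0)
    return saturation * lightness_term


def weighted_average_color(pixels):
    """Average (r, g, b) tuples, counting each pixel by its weight."""
    if not pixels:
        raise ValueError("need at least one pixel")
    total_weight = 0.0
    acc = [0.0, 0.0, 0.0]
    for r, g, b in pixels:
        w = pixel_weight(r, g, b)
        total_weight += w
        acc[0] += w * r
        acc[1] += w * g
        acc[2] += w * b
    if total_weight == 0.0:
        # Every pixel was grey or black: fall back to a plain mean.
        n = len(pixels)
        return tuple(sum(p[i] for p in pixels) / n for i in range(3))
    return tuple(c / total_weight for c in acc)


def plain_average_color(pixels):
    """Unweighted mean, for comparison."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))


frame = [(1.0, 0.0, 0.0), (0.5, 0.5, 0.5), (0.0, 0.0, 0.0)]
print(plain_average_color(frame))     # muddy dark red
print(weighted_average_color(frame))  # pure red: grey and black are ignored
```

With one red pixel, one grey pixel and one black pixel, the plain mean is dragged toward a dull dark red, while the weighted version reports the one color a viewer would actually notice.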

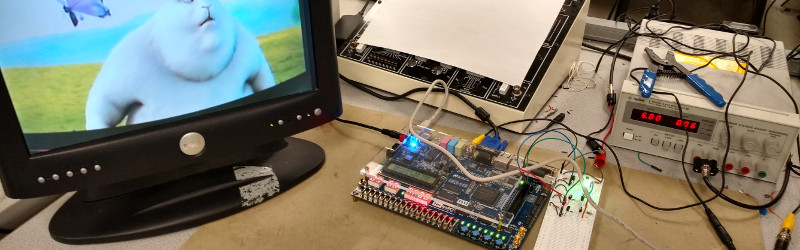

The whole project was produced on an Altera DE2-115 FPGA development and education board, and makes use of its NTSC and VGA decoding example code. All his code is available for your perusal in his appendices, and he’s produced a demo video shown here below the break.

This is not the first project in this field we’ve featured here at Hackaday. We’ve had another FPGA project, one based on a Raspberry Pi, and an entirely analog version that sums the RGB outputs. This project brings a new twist to the table, not through what it does but through both the approach it takes to the problem and its clear explanation of the process involved.

Via [Bruce Land].

“It is intended to produce as accurate a color representation as possible to give the impression of a TV being on for security purposes in an otherwise empty house.”

What’s the point when you use Facebook to tell the whole world about your amazing holidays in Spain, Italy or Zimbabwe?

https://en.m.wikipedia.org/wiki/Straw_man

I would prefer he work on getting the colors right for an RGB addressable bias light around a TV, so that when I am watching a movie the colors at the edge seem to bleed onto the walls.

That would be massively more useful than a fake TV that makes your neighbors think you are home, which you can already buy for $19.99.

http://faketv.com/

Note: 99% of all burglaries happen during the day, when burglars know that most people are not home, so not even neighbors are around to report suspicious people.

Project author here. The cool thing about this project is that it can be applied to the situation you mention. All you would have to do is instantiate a few of my modules to take in different areas of the frame and output to the corresponding light. The whole point of the project was making something that would primarily be for an unoccupied home, but whose work can be applied elsewhere.
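The per-zone idea described above can be sketched in software as follows. This is only an illustration in Python, not the author's FPGA modules: the function names, the `border` parameter and the four-zone layout are all assumptions, and a plain mean stands in for whatever color reduction each module would actually perform.

```python
def mean_color(pixels):
    """Plain mean of (r, g, b) tuples; a stand-in for the real reducer."""
    n = len(pixels)
    return tuple(sum(p[i] for p in pixels) / n for i in range(3))


def edge_zone_colors(frame, border, reducer=mean_color):
    """Return one color per screen edge.

    frame   : list of rows, each row a list of (r, g, b) tuples
    border  : hypothetical zone thickness in pixels
    reducer : function that collapses a list of pixels to one color
    """
    height = len(frame)
    width = len(frame[0])
    if not 1 <= border <= min(height, width):
        raise ValueError("border must fit inside the frame")
    zones = {
        "top": [px for row in frame[:border] for px in row],
        "bottom": [px for row in frame[-border:] for px in row],
        "left": [px for row in frame for px in row[:border]],
        "right": [px for row in frame for px in row[-border:]],
    }
    # One reducer call per zone, analogous to one module instance per light.
    return {name: reducer(pixels) for name, pixels in zones.items()}


RED = (1.0, 0.0, 0.0)
BLUE = (0.0, 0.0, 1.0)
# 4x4 test frame: left half red, right half blue.
frame = [[RED, RED, BLUE, BLUE] for _ in range(4)]
print(edge_zone_colors(frame, border=1))
```

Each zone's color would then drive the LED strip segment on that side of the screen; passing a different `reducer` swaps in a perceptually weighted average without changing the zoning.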