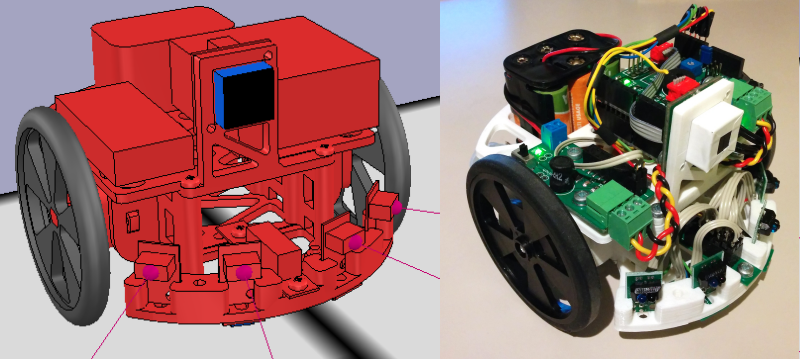

[Nurgak] shows how one can use some of the great robotic tools out there to simulate a robot before you even build it. To drive this point home he builds the tutorial off of the easily 3D printable and buildable Robopoly platform.

The robot runs on the Robot Operating System (ROS) at its core. ROS is interesting because of its decentralized and input/output agnostic messaging system. For example, if you leave everything else alone but swap the motor output from actual motors to a simulator, you can see how the robot would respond to any arbitrary input.
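To make the "swap the output" idea concrete, here is a minimal sketch in plain Python. It is not the ROS API: the `Bus` class, the `wheel_speeds` topic name, and both motor classes are hypothetical stand-ins for illustration. The point is only that the code publishing commands never needs to know who is listening.

```python
from collections import defaultdict


class Bus:
    """Toy topic-based message bus (an assumption for illustration, not ROS)."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, callback):
        self._subscribers[topic].append(callback)

    def publish(self, topic, message):
        # Deliver the message to every callback registered on this topic.
        for callback in self._subscribers[topic]:
            callback(message)


class SimulatedMotors:
    """Hypothetical simulator back end: accumulates distance travelled."""

    def __init__(self):
        self.distance = 0.0

    def on_command(self, message):
        left, right = message
        self.distance += (left + right) / 2.0


class LoggedMotors:
    """Hypothetical stand-in for a hardware driver: just records commands."""

    def __init__(self):
        self.log = []

    def on_command(self, message):
        self.log.append(message)


def drive_forward(bus, steps):
    """The 'robot brain': publishes commands without knowing who consumes them."""
    for _ in range(steps):
        bus.publish("wheel_speeds", (1.0, 1.0))


bus = Bus()
sim = SimulatedMotors()
bus.subscribe("wheel_speeds", sim.on_command)  # swap in LoggedMotors here instead
drive_forward(bus, 3)
```

Replacing `sim.on_command` with a `LoggedMotors().on_command` subscription changes where the commands end up, while `drive_forward` stays untouched.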

[Nurgak] uses another piece of software called V-REP to demonstrate this. V-REP is a simulation suite for robotics and has a few ROS nodes built in. So in order to make a simulated line-following robot, [Nurgak] tells V-REP to send a simulated camera image to the decision making node of the robot in ROS. It then sends the movement messages back to V-REP which drives the pretend robot around.
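As a rough idea of what such a decision-making node might compute, here is a sketch in plain Python. It is not [Nurgak]'s code: the function names, the threshold, and the gain values are assumptions, and the "image" is reduced to a single row of grayscale pixels. In a real setup this logic would sit inside whatever callback receives the camera image.

```python
def line_offset(row, threshold=128):
    """Return the dark line's horizontal offset in [-1, 1], or None if unseen.

    row: sequence of grayscale pixel values (0 = black, 255 = white).
    """
    dark = [i for i, value in enumerate(row) if value < threshold]
    if not dark:
        return None
    centre = sum(dark) / len(dark)
    half_width = (len(row) - 1) / 2.0
    if half_width == 0:
        return 0.0  # a single-pixel row carries no left/right information
    return (centre - half_width) / half_width


def wheel_speeds(row, base=1.0, gain=0.5):
    """Map one image row to (left, right) wheel speeds; stop if the line is lost."""
    offset = line_offset(row)
    if offset is None:
        return (0.0, 0.0)
    # Line to the right (positive offset): speed up the left wheel to turn right.
    return (base + gain * offset, base - gain * offset)


centred = wheel_speeds([255, 255, 0, 255, 255])
line_on_right = wheel_speeds([255, 255, 255, 255, 0])
```

Because the function only sees pixel values, it does not care whether the row came from a simulated camera or a real one.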

He runs through a few more examples, proving that it’s entirely possible to become, if not a roboticist, at least a really good AI programmer without ever dropping big money on parts to build a robot.

Great info. Thanks!

Simulated Robot == Simulated Fun

;-)

I prefer simulated failure. It’s cheaper.

+1

I was hoping for a discussion on how to [easily] simulate gears, levers, mechanisms etc.

Damn.

Have you RTFM yet?

http://www.coppeliarobotics.com/helpFiles/en/buildingAModelTutorial.htm

http://www.coppeliarobotics.com/helpFiles/en/designingDynamicSimulations.htm

Works as advertised using the virtual machine option. Expect around 15 min for the build stage, give or take, depending on the size of the system you run VirtualBox on. Next I will build it on a Debian machine and see how fast it is when it is not in the VM.

I used the suggested Ubuntu VM from here: https://docs.google.com/uc?id=0B_HAFnYs6Ur-UGRwa0duTnV0ejA&export=download

Checksum can be found here, http://www.osboxes.org/ubuntu/

The entire system is very complex, and powerful, so if you plan to get into it expect to dedicate a lot of time to it.

For geometry, FreeCAD http://www.freecadweb.org/ should be a good match.

Don’t know if it’s a free ad for V-REP or just a coincidence…

There are plenty of robotics simulators featuring ROS topic communications.

But it is a really good thing that researchers consider the importance of using simulated robots/sensors in the early stages of developing a robot AI. Simulators are able to emulate several (expensive) sensors in a wide variety of environments.

https://www.youtube.com/watch?v=sgxZbRKDWjE

Definitely not an ad for V-REP, but from all the simulators I know it’s the best and most complete I’ve used so far (and also free to use). Integrating it with ROS took me a while, thus the tutorial.

We actually tried the “demo” version (free) and the simulator couldn’t even handle a velodyne64 on a plane while running in real time. Thus we had to move to more optimized tools, featuring real sensors and their interfaces with real-time simulation.

The simulator is not limited in the free version; it only prevents you from commercialising your product, if I understood correctly (and some physics engines require a paid licence). I suppose your project is very demanding in computing power and perhaps not suited for simulation. I tried a LIDAR as well, but it didn’t keep up. However, there are ways to optimise the simulation to emulate the data you need while keeping the computing needs low…

So, you are telling me that the commercial version isn’t even better than the demo version?

Nowadays in robotics research labs, using a velodyne32, 64 or a fleet of robots equipped with lidars+GPS+camera is quite common. Therefore, all simulators should be able to provide this kind of simulation in real time on an ordinary workstation!

You shouldn’t have to adapt yourself to the simulation unit. Optimizing the simulation is the simulator developer’s job, and they must ensure the software is efficient.