Many of us will have seen robotics or prosthetics operated by the electrical impulses detected from a person’s nerves, or their brain. In one form or another they are a staple of both mass-market technology news coverage and science fiction.

The point the TV journalists and the sci-fi authors fail to address though is this: how does it work? On a simple level they might say that the signal from an individual nerve is picked up just as though it were a wire in a loom, and sent to the prosthetic. But that’s a for-the-children explanation which is rather evidently not possible with a few electrodes on the skin. How do they really do it?

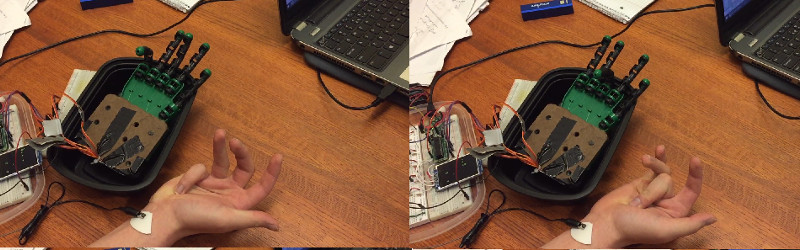

A project from [Bruce Land]’s Cornell University students [Michael Haidar], [Jason Hwang], and [Srikrishnaa Vadivel] seeks to answer that question. They’ve built an interface that allows them to control a robotic hand using signals gathered from electrodes placed on their forearms. And their write-up is a fascinating read, for within that project lie a multitude of challenges, of which the hand itself is only a minor one that they solved with an off-the-shelf kit.

The interface itself had to solve the problem of picking up the extremely weak nerve impulses while simultaneously avoiding interference from mains hum and fluorescent lights. They go into detail about their filter design, and their use of isolated power supplies to reduce this noise as much as possible.
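To get a feel for what removing mains hum involves, here is a minimal sketch of a digital notch filter in plain Python. It is not the students' design, which is described in their write-up; the biquad form (from the widely used Audio EQ Cookbook), the 1 kHz sample rate, the 60 Hz mains frequency, the Q of 30, and the 150 Hz stand-in for a wanted signal are all assumptions chosen for illustration.

```python
import math

def notch_coefficients(f0, fs, q):
    """Biquad notch coefficients, normalized so that a[0] == 1."""
    w0 = 2.0 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b = [1.0 / a0, -2.0 * math.cos(w0) / a0, 1.0 / a0]
    a = [1.0, -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0]
    return b, a

def apply_biquad(b, a, samples):
    """Run the direct-form I difference equation from a zero initial state."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out

def rms(samples):
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def sine(freq, fs, n):
    return [math.sin(2.0 * math.pi * freq * i / fs) for i in range(n)]

FS = 1000.0    # assumed sample rate in Hz
MAINS = 60.0   # assumed mains frequency in Hz (50 Hz in many regions)
b, a = notch_coefficients(MAINS, FS, q=30.0)

hum = apply_biquad(b, a, sine(MAINS, FS, 2000))
wanted = apply_biquad(b, a, sine(150.0, FS, 2000))

# Compare levels once the filter has settled (skip the first second).
print("hum RMS after notch:   ", rms(hum[1000:]))
print("150 Hz RMS after notch:", rms(wanted[1000:]))
```

A narrow notch like this takes a while to settle, which is why the comparison skips the first second of output. A real front end would also have to deal with harmonics of the mains frequency and with broadband switching noise, which a single notch does not touch.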

Even with the perfect interface though they still have to train their software to identify different finger movements. Plotting the readings from their two electrodes as axes of a graph, they were able to map graph regions corresponding to individual muscles. Finally, the answer that displaces the for-the-children explanation.
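The idea of mapping regions of a two-electrode graph to fingers can be sketched with a nearest-centroid classifier. This is only one plausible way to carve up the plane and may not match what the students did; the calibration readings below are invented, and the function names are hypothetical.

```python
import math

def centroid(points):
    """Mean position of a list of (electrode_a, electrode_b) readings."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)

def train(calibration):
    """Reduce each finger's calibration readings to a single centroid."""
    return {label: centroid(points) for label, points in calibration.items()}

def classify(centroids, reading):
    """Return the finger whose centroid lies closest to the reading."""
    def distance(label):
        cx, cy = centroids[label]
        return math.hypot(reading[0] - cx, reading[1] - cy)
    return min(centroids, key=distance)

# Invented calibration data: normalized amplitudes from the two electrodes.
calibration = {
    "index":  [(0.80, 0.20), (0.90, 0.30), (0.70, 0.25)],
    "middle": [(0.50, 0.50), (0.55, 0.60), (0.45, 0.55)],
    "ring":   [(0.20, 0.80), (0.30, 0.90), (0.25, 0.70)],
}
centroids = train(calibration)

for reading in [(0.75, 0.30), (0.50, 0.50), (0.30, 0.75)]:
    print(reading, "->", classify(centroids, reading))
```

Each centroid effectively claims the part of the graph nearest to it. With noisy readings those regions overlap at the edges, which is one reason a noisy lab makes reliable finger detection so hard.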

There are several videos linked from their write-up, but the one we’re leaving you with below is a test performed in a low-noise environment. They found their lab had so much noise that they couldn’t reliably demonstrate all fingers moving, and we think it would be unfair to show you anything but their most successful demo. But it’s also worth remembering how hard it was to get there.

We’ve covered a huge number of robotic and prosthetic hands here over the years, but it is a mark of the challenges involved that we’ve covered very few that are controlled in this way. Even those that have are usually brain-controlled rather than nerve-controlled, and are thus considerably more complex. We applaud this team for their achievement, and we hope others will pick up on their work.

Mains hum, switching power bricks and PSUs, and the lights running on switchers as well. A lab like that could hardly be the place for such work. I have played around with an old CRT EEG-ECG scope before and noticed the spikes that I could see from my forearm as I clenched fingers. It can’t be that hard.

the devil is in repeatably and reliably deciphering those spikes in any environment. finding a solution that would allow you to, say, use your prosthetic arm to work on power lines without having it freak out from the interference, would advance bioengineering to the next stage

“It can’t be that hard.” Right next to “it should just work!”. I’m gonna feed the troll.

-> Muscles literally in contact with each other wrapped in membranes which allow a (arguably as much chemical as electrical) signal to enter and permeate the entirety of the muscle — but not disturb the one literally smushed up next to it.

-> The above system refined to the point where we have incredibly dextrous finger control signalling relying on what is essentially, from my understanding, a spikes/second intensity signal.

-> I heard (long ago) that the subconscious portions of the brain which translate an abstract idea of “grip a little tightly, but not too tightly” actually switch automatically between different subsections (“bundles”) of the same muscle in order to further modulate intensity and cycle through different portions of the muscle to keep from wearing everything out at once (circulation, that sort of thing)

-> There’s other signals too. I have no idea to what extent sensory signals would interfere with this (I suspect those other signals essentially show up as background noise; again, last I heard about the (psychosensory?) strategy of the body is that the brain receives constant signals from everywhere all the time and “only pays attention to” changing signals).

-> Also environment. I definitely saw my scope leads picking up ambient RF when I was messing with the oscilloscope doing basic labs in college. As [Jarek Lupinski] astutely pointed out, interference just exponentiates your problems.

Here’s my idea for a very crude (and very scaled-down) analog:

Cut yourself about 50 strands of magnet wire. Arrange them into ten bundles of five. Wrap each bundle, by hand, with electrical tape one layer thick (it can be sloppy). Dunk in slightly salty water. Wrap the whole bundle in a single layer of electrical tape. Solder two wires from each bundle to your control circuit, which will be programmed to send low-voltage (3V or less?) spikes down random wires. Dunk prepared midsection of this whole shebang in slightly salty water and leave it there for a bit — we want the water to penetrate, through and through, everything. While it’s soaking, prep some gelatin. Add a bit of salt to the mixture, and get it heating. Before it solidifies, drop your saltwater-soaked bundle of wires into it (test section only, obviously). Allow the gelatin to set. Now go ahead and shave that gelatin down to about a half-cm in thickness atop the test section of wires (adjust to your preferred body-fat ratio). You now have a very crude analogy of an arm.

Start your random-signal generator going, and try to figure out which wire is being energized with a couple of conductive sensors stuck to the outside of your gelatin. Try to separate some kind of signal from the noise. Try to discern what’s going on in those wire bundles.
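For anyone who would rather not cook gelatin, the core difficulty can be sketched in a few lines of Python. This is a toy model, not a simulation of real tissue: the two sensor positions, the source positions, and the 1 / (1 + d²) falloff with distance are all assumptions made up for illustration.

```python
# Hypothetical positions of the two surface sensors (arbitrary units).
SENSORS = [(-1.0, 0.0), (1.0, 0.0)]

def sensor_readings(source, amplitude=1.0):
    """Toy model: each sensor sees the source attenuated by 1 / (1 + d^2)."""
    readings = []
    for sx, sy in SENSORS:
        d2 = (source[0] - sx) ** 2 + (source[1] - sy) ** 2
        readings.append(amplitude / (1.0 + d2))
    return tuple(readings)

def distinguishable(source_a, source_b, tolerance=1e-9):
    """True if the two sources give different readings on at least one sensor."""
    ra = sensor_readings(source_a)
    rb = sensor_readings(source_b)
    return any(abs(x - y) > tolerance for x, y in zip(ra, rb))

# Two wires on opposite sides along the sensor axis: easy to tell apart.
print(distinguishable((-0.5, 0.2), (0.5, 0.2)))

# Two wires mirrored across the sensor axis: identical readings.
print(distinguishable((0.0, 0.5), (0.0, -0.5)))
```

Even with no noise at all, two sensors only ever give you two numbers, so any pair of wires that happens to sit at matching distances from both sensors looks the same from the outside. Adding noise and ten simultaneously active bundles only makes that worse.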

I haven’t done much signal analysis, but I’m pretty sure this kind of thing is a nightmare. There’s a reason we don’t have artificial limbs being directed by 555’s and a couple handfuls of passives hooked up to the nearest nerve bundle.

The article says they are measuring nerve signals, but they are not. The source explains that they are measuring muscle signals: the signals that muscles themselves produce when contracting. Nerve signals are the signals that control the muscles, which in turn produce electrical signals when contracting.

Nerve signals are in the µV range, and muscle signals are in the mV range. At this point in time it is impossible to measure nerve signals from outside the body because of all the noise and crap in the air and those muscle signals producing a far more powerful signal. Even measuring nerve signals inside the body is still very much cutting edge science.
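To put that gap in engineering terms, here is a quick back-of-the-envelope calculation in Python. The 1 mV and 1 µV figures are assumed round numbers taken from the orders of magnitude quoted above, not measurements.

```python
import math

def ratio_db(v_signal, v_reference):
    """Voltage ratio expressed in decibels."""
    return 20.0 * math.log10(v_signal / v_reference)

MUSCLE_V = 1e-3  # assumed representative muscle signal amplitude, volts
NERVE_V = 1e-6   # assumed representative nerve signal amplitude, volts

print(f"muscle vs nerve: {ratio_db(MUSCLE_V, NERVE_V):.0f} dB")
```

A factor of a thousand in voltage is about 60 dB, so under these assumptions the nerve signal sits roughly 60 dB below the muscle activity right next to it, before any external interference is counted.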

i guess THAT’s why they always call it “motor control”, as the motor amplifiers are actually INSIDE the motors themselves. sort of a pun for technophiles?

Having been subjected to the process of diagnosing an ulnar nerve problem, I can say that the nerves can be _triggered_ from outside the skin, and can apparently be measured as well. I was a living Galvani experiment as the neurologist made precise measurements on my arm and induced signals into the nerve and measured the transmission time to another point to ascertain where the nerve was impacted. Injecting a signal was a process of getting a probe which was more or less the equivalent of a non-extended ballpoint pen tip and jamming it into the skin to displace muscle tissue (not piercing the skin though, just a hard push) to get the probe closer to where the nerve passed by. Totally unlike an adhesive probe contact.
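The arithmetic behind that kind of test is simple: the distance between the two points divided by the transmission time gives a conduction speed. A minimal sketch in Python, with a hypothetical function name and made-up example numbers rather than anything from an actual exam:

```python
def conduction_velocity(distance_m, latency_s):
    """Speed of the signal along the nerve, in metres per second."""
    if latency_s <= 0:
        raise ValueError("latency must be positive")
    return distance_m / latency_s

# Made-up example: probes 25 cm apart, 5 ms between stimulus and response.
print(f"{conduction_velocity(0.25, 0.005):.0f} m/s")
```

A stretch of nerve where the speed comes out noticeably lower than on the neighbouring stretches is, presumably, how the measurement points at the location of the problem.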

Anyway, as to measurement (even for the muscle signals), I would think that an instrumentation amplifier would be a good choice here.

What I am really curious about is whether you have observed an effect similar to subvocalisation but with motor muscles.

Subvocalisation is when you think aloud in your mind – and there are observable impulses sent to the muscles around your lips. MIT AlterEgo uses that to recognise words that were never articulated.

My question & challenge is to figure out whether you can observe signals in the muscles/nerves in your hand when you *think* about moving your hand or *imagine* moving your hand?

Any thoughts on that?