Websites used to be uglier than they are now. Sure, you can still find a few disasters, but back in the early days of the Web you’d have found blinking banners, spinning text, music backgrounds, and bizarre navigation themes. Practices evolve, and now there’s much less variation between professionally-designed sites.

In a mirror of the world of hypertext, the same thing is going to happen with voice user interfaces (or VUIs). As products like Google Home and Amazon Echo get more users, developing VUIs will become a big deal. We are also starting to see hacker projects that use VUIs by leveraging the big guys, by running local code on a Raspberry Pi, or even by using dedicated speech hardware. So what are the best practices for a VUI? [Frederik Goossens] shares his thoughts on the subject in a recent post.

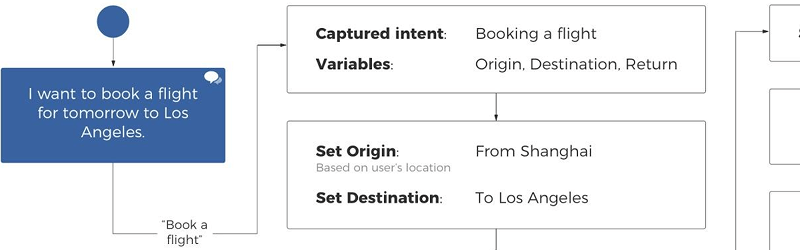

Truthfully, a lot of the design process [Frederik] suggests mimics conventional user interface design in defining the use case and mapping out the flow. However, there are some unique issues surrounding usable voice interactions.

In summary, the main points for a VUI are:

- Simple and conversational

- Confirm completion

- An error strategy

- Consider extra layers of security

You can find more details in the original post, which also covers some general advice about tools and design.
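To make the four points concrete, here is a minimal Python sketch of a dialog handler that works on already-transcribed text. It is not taken from [Frederik]'s post: the intent names, the `Session` class, the `handle` function, the retry limit, and the spoken-PIN check are all assumptions made up for illustration, and real speech recognition is left out entirely.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical phrases and intents, invented for this sketch.
KNOWN_INTENTS = {
    "lights on": "lights_on",
    "lights off": "lights_off",
    "unlock door": "unlock_door",
}
SENSITIVE_INTENTS = {"unlock_door"}  # these need the extra security layer
MAX_RETRIES = 2  # assumed limit before giving up on reprompting


@dataclass
class Session:
    pin: str
    failures: int = 0
    pending: Optional[str] = None  # sensitive intent waiting for a PIN


def handle(utterance: str, session: Session) -> str:
    """Return the spoken reply for one already-transcribed utterance."""
    text = utterance.strip().lower()

    # Extra layer of security: a sensitive intent waits for the spoken PIN.
    if session.pending is not None:
        intent, session.pending = session.pending, None
        if text == session.pin:
            return f"Done: {intent.replace('_', ' ')}."
        return "That PIN did not match, so I cancelled the request."

    intent = KNOWN_INTENTS.get(text)
    if intent is None:
        # Error strategy: reprompt with examples, then offer a way out.
        session.failures += 1
        if session.failures > MAX_RETRIES:
            session.failures = 0
            return "I still can't help with that. Try the app instead."
        return "Sorry, I didn't catch that. You can say 'lights on' or 'lights off'."

    session.failures = 0
    if intent in SENSITIVE_INTENTS:
        session.pending = intent
        return "Please say your PIN to continue."

    # Confirm completion in short, conversational language.
    return f"Done: {intent.replace('_', ' ')}."
```

Calling `handle("Lights on", Session(pin="1 2 3 4"))` returns a short confirmation, while an unrecognized phrase gets a reprompt that lists what the user can say. Keeping the replies in one place like this makes it easier to read them aloud and check that they still sound conversational.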

If you are looking for something a little more detailed, veteran voice developer [Jo Jaquinta] posted a video in the comments about his presentation on the same topic in Dublin, which you can see below. Meanwhile, if you want to try your own voice development, we’ve seen that it is probably easier than you think. If you want to do it all locally, even an Arduino can hear what you have to say.

I taught our rescued dog hand signals. When humans talk, I don’t want to interrupt to tell the dog something. I don’t talk to automated bank machines. I’ll be damned if I will interrupt myself or somebody to discuss brownness quotients with my toaster. Next, vibrators will be reacting to vocal cues. C’mon. This ain’t HAL, and enuf’s enuf. That said, great for assisting some handicapped. Rates right up there with the much-lauded Folger’s Coffee Can design. Love their Colombian. Not needing the handle, hate HATE that they ruined the cans, as far as MY reuse of them goes. More paper, HAL. HAL would be my toilet paper dispenser… causing me to buy a bidet… one with knobs and dials and levers. So, make these things for some, but don’t think that we ALL ONLY want voice-reactive products. If we did, we could get married. Or get ONLY a dog.

Funny, I’d gladly interrupt most people to interact with my dog.

I see your point. This is a rescued dog, not one we desired. It needed help. We had to be responsible. But I have gotten rid of most of that ilk. However, take my wife, please.

I feel the same way about touchscreens.

If you can’t work the buttons and knobs, what are the chances you can -safely- pick up a cup of hot coffee?

Speaking of Dogs and signals:

A Long time ago.

I met a fellow who made a decent bar hustle $$ with his dog and some veerry subtle hand/body cues.

“Next, vibrators will be reacting to vocal cues.” Already a thing: an app on the phone listens and controls the vibrator via Bluetooth.

Am I the only person who finds the possibility of everything I say being uploaded to Google/Amazon for keyword analysis extremely creepy? I’m not saying that that is the intended design; what I am saying is that it would be trivial to subvert the technology to do that.

They probably won’t upload what you say, just the keywords. It’s cheaper to process everything on your side, so they don’t have to pay as much for bandwidth, storage and processing.

As a side note, I was talking with my mother over Hangouts audio about buying a house in a certain place. Not even 10 minutes after that, my brother was receiving ads from Google about houses in that certain place. We never typed anything about it; it was only discussed by voice.

It is actually cheaper to grab an 8 second audio sample, compress it, upload it and then fully process it on remote high-powered servers. At least in terms of hardware with the cheapest possible price point. Smaller CPUs, less RAM, less storage, and zero possibility of the competition disassembling your binaries and dissecting/reverse engineering/stealing your algorithms. And by design it allows for the automated collection of training data.

This is usually the case, as most of these so-called smart devices are not very smart themselves.

Though the possibility of the algorithms getting stolen and reverse engineered is not zero, as those servers can be and have been hacked.


Could be? Is it coincidental that after years of deleting emails so we have “space,” suddenly Google AND its search engine blooms, explodes! And space issues, vanished. Google and gubbamint in bed. Easy now for them to track you by voice, if desired. To even put words into your mouth, should you someday not want to play ball or be a patsy for a payoff for scurrilous reason.

If/When you see the word “free” anywhere on the tin, you can rest assured that it is going to harvest YOU.

I truly wish we could stop calling this stuff “free” when it should be called tradeware.

As in you trade something (your life’s habits) for the software.

Would be much more honest that way.

Soylent Green is people.

“Websites used to be uglier than they are now. Sure, you can still find a few disasters, but back in the early days of the Web you’d have found blinking banners, spinning text, music backgrounds, and bizarre navigation themes. Practices evolve, and now there’s much less variation between professionally-designed sites.”

I’ve found CSS Zen garden quite good at showing what websites could be once standards evolved.

I strongly disagree with it; they have gotten downright hideous since around 2014.

“Websites used to be uglier than they are now.”

I vehemently disagree with this statement.

Websites now are just less usable with this “one word per screen” paradigm. It’s funny how much info you used to have on a single screen in the days of 800×600 displays compared to today’s websites.

True. I blame developers who design primarily for viewing on a phone and don’t make a second version optimized for devices with larger screens.

Back when I had a Windows phone I would use “request desktop site,” as viewing the small text and doing pinch zoom was less annoying than using the mobile version of a site.

Hire whoever designed the voice dialer for the RNS315 found in VW and Audis. The VW group already paid for them to learn from their mistakes.