When it comes to something as futuristic-sounding as brain-computer interfaces (BCI), our collective minds tend to zip straight to scenes from countless movies, comics, and other works of science fiction (including more dystopian scenarios). Our mind’s eye fills with everything from the Borg and neural interfaces of Star Trek, to the neural recording devices with parent-controlled blocking features from Black Mirror, and of course the enslavement of the human race by machines in The Matrix.

And now there’s this Elon Musk guy, proclaiming that he’ll be wiring up people’s brains to computers starting next year, as part of this other company of his: Neuralink. Here the promises and imaginings are truly straight from the realm of sci-fi, ranging from ‘reading and writing’ to the brain, curing brain diseases and merging human minds with artificial intelligence. How much of this is just investor speak? Please join us as we take a look at BCIs, neuroprosthetics and what we can expect of these technologies in the coming years.

How to Interface with Biochemistry

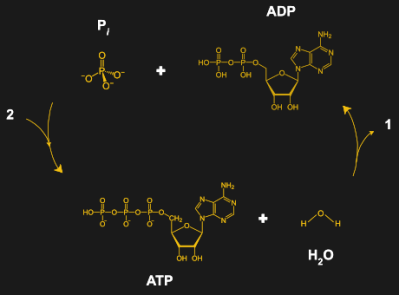

The main issue that makes it so hard to interface computers and other devices with the biochemical structures which compose our bodies is that they’re fundamentally different. Whereas our devices are powered (generally) by electricity, our cells use ATP (adenosine triphosphate). Much of our metabolism is targeted towards creating more ATP, for use in just about any process within our bodies. Since our electronic devices are not biochemical in nature, they essentially speak a different language.

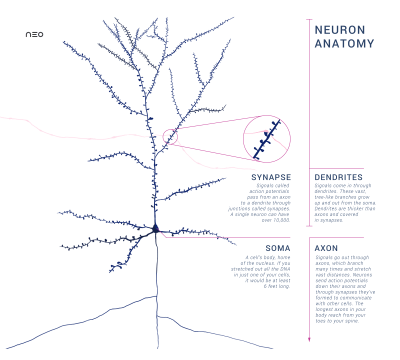

Nerve cells (neurons) are an interesting type of cell because they have evolved the ability to send electrical signals called action potentials, driven by ions flowing across the cell membrane. An action potential travels along the neuron's axon to sites called synapses, where it is passed on to other neurons through chemical signaling (neurotransmission) using special chemicals called neurotransmitters; the neuron's dendrites, in turn, receive incoming signals from other neurons. Fortunately for us, this electrical signaling is something we can tap into using our electronic devices.
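Where does the voltage actually come from? As a rough illustration, the Nernst equation gives the equilibrium voltage that a concentration difference of a single ion species produces across a membrane, E = (RT / zF) · ln([out] / [in]). The sketch below assumes textbook-style concentrations for a mammalian neuron at body temperature; these numbers are illustrative, not measurements.

```python
import math

R = 8.314      # gas constant, J/(mol*K)
F = 96485.0    # Faraday constant, C/mol

def nernst_potential(conc_out_mM: float, conc_in_mM: float,
                     valence: int = 1, temp_K: float = 310.0) -> float:
    """Equilibrium potential in volts for one ion species:
    E = (R*T / (z*F)) * ln([out] / [in])."""
    return (R * temp_K) / (valence * F) * math.log(conc_out_mM / conc_in_mM)

# Assumed, textbook-style concentrations (millimolar), illustrative only.
e_k = nernst_potential(conc_out_mM=5.0, conc_in_mM=140.0)    # potassium
e_na = nernst_potential(conc_out_mM=145.0, conc_in_mM=15.0)  # sodium

print(f"E_K  = {e_k * 1000:.1f} mV")
print(f"E_Na = {e_na * 1000:.1f} mV")
```

This gives roughly -89 mV for potassium and +61 mV for sodium. An action potential is, loosely speaking, the membrane briefly swinging from near the potassium value toward the sodium value and back, which is the kind of tens-of-millivolts event our electronics can pick up.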

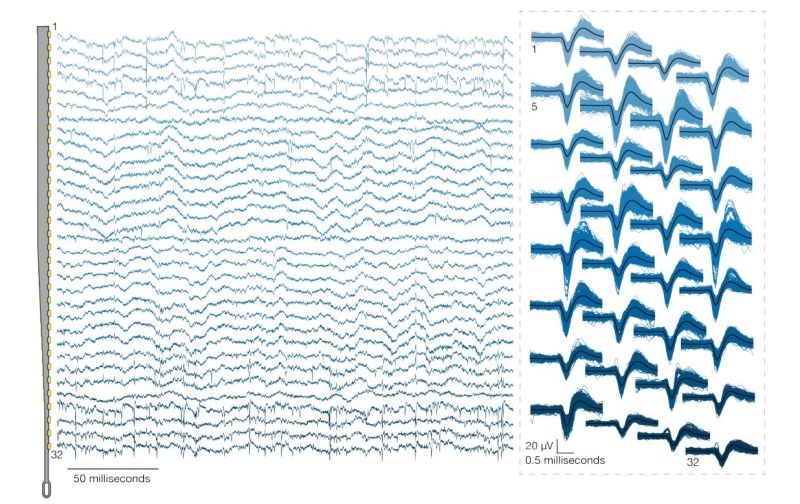

Much of BCI and neuroprosthetics technology revolves around recording the action potentials produced by neurons, whether in the brain and spinal cord that make up the central nervous system (CNS) or in the peripheral nervous system. Using electrically conductive probes we can measure the resulting voltages, amplifying the very weak signals enough to make them usable.
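To put "very weak" into numbers, here is a back-of-the-envelope gain calculation. The figures are assumptions for illustration: an extracellular spike on the order of 100 microvolts, and an analog-to-digital converter (ADC) with a 1 V input range. Real recording chips split the gain and resolution differently.

```python
import math

def required_gain(signal_amplitude_v: float, adc_full_scale_v: float) -> float:
    """Linear voltage gain needed to stretch a signal across the ADC input range."""
    return adc_full_scale_v / signal_amplitude_v

def gain_in_db(linear_gain: float) -> float:
    """Express a voltage gain in decibels: 20 * log10(gain)."""
    return 20.0 * math.log10(linear_gain)

# Assumed, illustrative figures.
spike_v = 100e-6    # ~100 microvolt extracellular spike
adc_range_v = 1.0   # ADC input range in volts

gain = required_gain(spike_v, adc_range_v)
print(f"gain = {gain:.0f}x ({gain_in_db(gain):.0f} dB)")
```

Under these assumptions the signal needs to be amplified about 10,000 times (80 dB), which is why low-noise amplification right next to the electrodes matters so much.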

Patching in New Parts — We’ve Done It Before

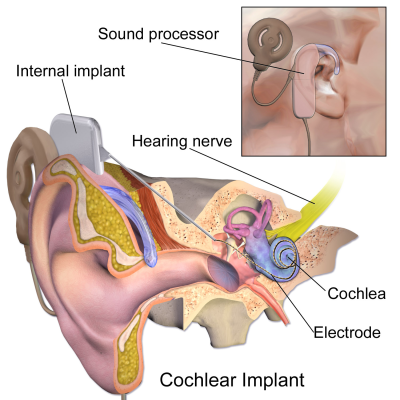

The field of neuroprosthetics has been around for a long time: one of the first cochlear implants was implanted in a patient in 1964 at Stanford University. These devices are without a doubt the most successful example of a neuroprosthetic device. Hundreds of thousands have been implanted in patients, largely restoring the ability to hear. It’s a life-changing thing for every recipient of a successful cochlear implant, and it seems obvious that we should look for other ways this technology can be used to help those in need.

These devices are fairly simple: one or more microphones capture environmental sounds, and a DSP chip processes the signal and converts it into the appropriate form for the implanted part of the device. This signal is transmitted to the internal implant via inductive coupling, where an electrode array uses it to stimulate the cochlear nerve, which the patient then perceives as sound.
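The "converted to the appropriate signals" step can be sketched as a filter bank: sound is split into frequency bands, and each band drives one electrode along the cochlea (low pitches toward its tip, high pitches toward its base). Real processors use more elaborate strategies (continuous interleaved sampling, CIS, is one well-known example). This sketch uses the Goertzel algorithm as a simple stand-in for a band-pass filter, and the sample rate, frame size and band frequencies are hypothetical.

```python
import math

def goertzel_power(samples, sample_rate, target_freq):
    """Power of the DFT bin nearest target_freq, via the Goertzel algorithm."""
    n = len(samples)
    k = round(n * target_freq / sample_rate)   # nearest DFT bin index
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev, s_prev2 = 0.0, 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev2 ** 2 + s_prev ** 2 - coeff * s_prev * s_prev2

def channel_levels(samples, sample_rate, band_centers):
    """Energy per (hypothetical) electrode channel for one audio frame."""
    return [goertzel_power(samples, sample_rate, f) for f in band_centers]

# Hypothetical setup: 8 kHz audio, one 32 ms frame, six channels
# ordered from low to high pitch.
SAMPLE_RATE = 8000
FRAME = 256
BANDS = [250, 500, 1000, 2000, 3000, 3500]

tone = [math.sin(2 * math.pi * 1000 * i / SAMPLE_RATE) for i in range(FRAME)]
levels = channel_levels(tone, SAMPLE_RATE, BANDS)
loudest = max(range(len(BANDS)), key=lambda i: levels[i])
print(f"1 kHz tone drives channel {loudest} ({BANDS[loudest]} Hz)")
```

A pure 1 kHz tone ends up almost entirely in the 1000 Hz channel. In a real device, the per-channel levels would then be compressed and turned into current pulses on the corresponding electrodes.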

Calling neuroprosthetics ‘BCI’ would be somewhat inaccurate, however. The goal of neuroprosthetics is not to establish communication between a computer system and the brain, but merely to restore lost functionality, such as one’s hearing, vision, a functional arm or leg, or bridging the damaged part of a paraplegic person’s spinal cord. Any computer system in this path (like the DSP in the cochlear implant) exists only to enable this functionality, not as an end goal in itself.

BCI is a much wider field, with many experimental projects and fundamental research that goes far beyond these practical implementations.

This Won’t Hurt a Bit: Neuralink’s Implant Technology

Brain-computer interfacing concerns itself primarily with intercepting the signals from neurons in the brain in order to map and understand the signaling. In order to accomplish this, probes have to be placed as close as possible to the neurons in the area which one intends to monitor. Three approaches are possible:

- non-invasive: the skin is not breached, purely external measurements are made.

- partially invasive: the device is placed on top of the brain itself, inside the skull and underneath the dura mater.

- invasive: the device is directly implanted into the brain tissue.

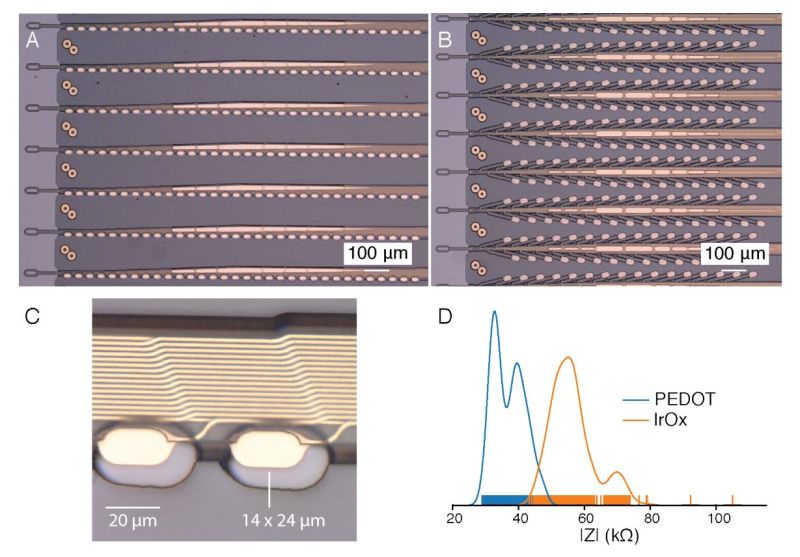

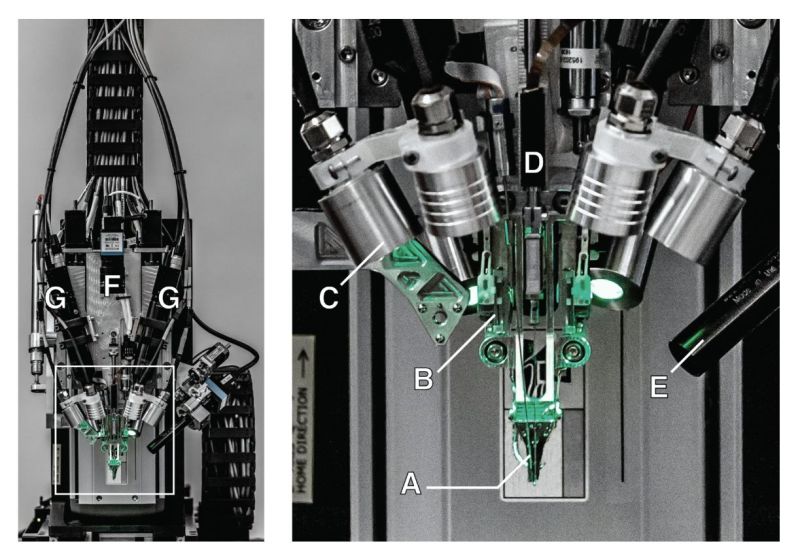

Obviously the invasive approach will yield the best spatial resolution, as the measurements are taken as close to the neurons as possible, allowing the sensor system to distinguish between small groups of neurons instead of averaging over groups of thousands of neurons or more. This is also the reasoning behind Neuralink’s work on different probes, details of which are shared in their recently published paper.

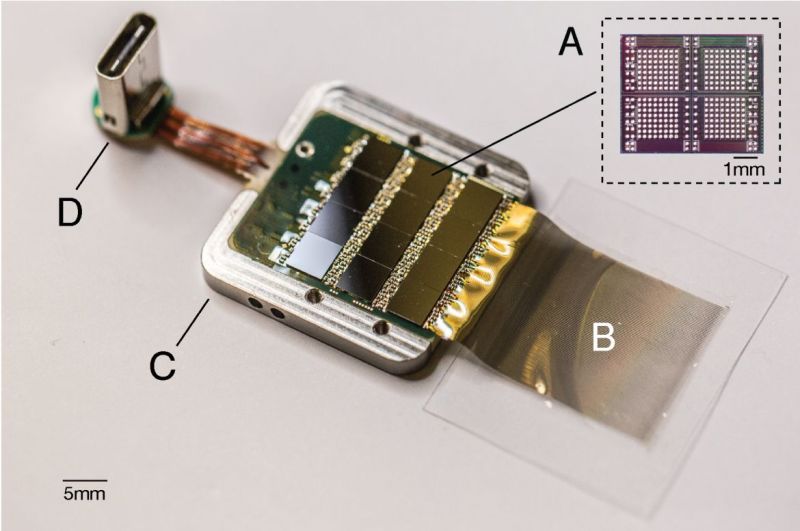

Neuralink developed so-called ‘threads’ which incorporate dozens of individual contacts along the length, with 32 contacts per thread being quoted. By inserting this thread into the grey matter of the target area, they get readings from neurons along the entire length, at various depths. These threads are connected to the device that does the actual sampling. At the moment it’s only been tested with rats, where having the animal survive the experiment is of secondary concern. Ergo it protrudes from the animal’s skull (photograph is in Neuralink’s paper, for the less squeamish), with a USB-C port for easy data access and power delivery.

Assuming that Neuralink can shrink the device so it slots easily within one’s skull, and make it wireless, this invasive procedure would then allow for readings from hundreds to thousands of locations within the brain. At 32 contacts per thread, the dozens to hundreds of threads suggested by Neuralink officials would give anywhere from several hundred to roughly ten thousand recording channels.
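A quick calculation shows how the numbers scale. Channel count grows linearly with the number of threads, and Neuralink's paper describes an array of 96 threads carrying 3,072 electrodes. The sampling rate and bit depth used for the data-rate estimate below are assumptions chosen for illustration, not figures from the paper.

```python
ELECTRODES_PER_THREAD = 32  # figure quoted for Neuralink's threads

def channel_count(threads: int, per_thread: int = ELECTRODES_PER_THREAD) -> int:
    """Total recording channels for a given number of threads."""
    return threads * per_thread

def raw_data_rate_mbps(channels: int, sample_rate_hz: float, bits_per_sample: int) -> float:
    """Uncompressed data rate in megabits per second."""
    return channels * sample_rate_hz * bits_per_sample / 1e6

for threads in (24, 96, 300):   # "dozens to hundreds" of threads
    print(f"{threads} threads -> {channel_count(threads)} channels")

# Assumed digitization settings (illustrative only): 20 kHz, 10 bits per sample.
channels = channel_count(96)
print(f"{raw_data_rate_mbps(channels, 20_000, 10):.0f} Mbit/s uncompressed")
```

Under those assumptions a single 3,072-channel array produces over 600 Mbit/s of raw data, which hints at why a small, wireless implant would need to detect or compress spikes on-chip rather than stream everything.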

The burning question then is of course, how useful are these readings?

Making Sense of the Data is Very Very Difficult

No matter how you collect the data, be it through an external electroencephalogram (EEG) or using invasive sensors, the signals must be processed to work out what the brain is doing. With invasive probes, sharp peaks in the recorded voltage indicate that one or more nearby neurons fired an action potential; EEG electrodes on the scalp, by contrast, only pick up the summed activity of very large populations of neurons. The activity is then interpreted based on where in the brain it was recorded: if it’s in the motor cortex, for example, it could mean that the person thought of moving their arm.
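For invasive recordings, the first processing step is usually spike detection: flagging moments where the voltage dips sharply below the noise floor. Below is a minimal sketch of a common threshold-crossing approach, assuming a single channel that has already been band-pass filtered. The noise level is estimated robustly as median(|x|) / 0.6745, a widely used rule of thumb; the multiplier `k`, the refractory window and the synthetic test signal are illustrative choices.

```python
import random
import statistics

def detect_spikes(signal, k=4.5, refractory=30):
    """Return sample indices where the signal crosses a negative threshold.

    The noise level is estimated as median(|x|) / 0.6745 so that the spikes
    themselves barely inflate it. After each detection, further crossings
    within `refractory` samples are ignored (same spike).
    """
    sigma = statistics.median(abs(x) for x in signal) / 0.6745
    threshold = -k * sigma
    spikes = []
    last = -refractory
    for i, x in enumerate(signal):
        if x < threshold and i - last >= refractory:
            spikes.append(i)
            last = i
    return spikes

# Synthetic test signal (microvolts). Bounded uniform noise keeps this toy
# example deterministic; real recording noise is closer to Gaussian.
random.seed(1)
signal = [random.uniform(-10.0, 10.0) for _ in range(10_000)]
true_spikes = [1000, 3500, 6200, 8800]
for t in true_spikes:
    for offset, amp in enumerate((-120.0, -80.0, -30.0)):  # crude spike shape
        signal[t + offset] += amp

print(detect_spikes(signal))
```

Detection is only the start: the spikes then have to be sorted by waveform shape (to guess which neuron produced them) and turned into firing rates before any interpretation can happen.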

The main issue here is that although we have a rough idea of where certain functionality is located in the brain based on prior experiments (and people suffering brain damage during accidents, such as the famous case of Phineas Gage), there’s still a lot we do not know. This is greatly complicated when moving from one person to the next. Human beings are not perfect carbon copies of each other, and our brains are constantly changing their exact layout. Pinning down where a given function is located is therefore tricky.

Research teams at universities around the world have been trying to decode spoken words from the brain activity of people who had electrodes implanted while undergoing surgery, or as part of epilepsy treatment. As a Science magazine article on this research reports, researchers find this task to be far from easy. Even though they could hear what the participants were saying during invasive monitoring of their brain activity, attempts at decoding the brain signals only reached somewhere between 40% and 80% accuracy.

Those results relied on recordings of the person actually speaking to train the decoder. Without such training data, such as when a person cannot speak at all, it would be nigh impossible to map those vocalizations to specific brain patterns. This is also because speech isn’t produced by a single part of the brain, but by regions distributed throughout it, from the motor cortex to the language centers to areas involved in speech planning.
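Why training data matters so much becomes clear from even the simplest possible decoder. The sketch below is a nearest-centroid classifier: it averages the labeled activity patterns recorded for each word, then labels a new pattern with whichever average it is closest to. This is a deliberately simple stand-in, not what the research teams used, and the words, channel count, "true" patterns and noise level are all hypothetical synthetic data.

```python
import math
import random

def train_centroids(examples):
    """examples: list of (feature_vector, label). Returns label -> mean vector."""
    sums, counts = {}, {}
    for vec, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(vec)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {lab: [s / counts[lab] for s in sums[lab]] for lab in sums}

def decode(centroids, vec):
    """Pick the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda lab: math.dist(centroids[lab], vec))

def accuracy(centroids, examples):
    hits = sum(decode(centroids, vec) == label for vec, label in examples)
    return hits / len(examples)

# Hypothetical synthetic data: one "true" firing-rate pattern per word,
# observed through noise on every trial.
random.seed(0)
WORDS = ["yes", "no", "water", "help"]
N_CHANNELS = 16
patterns = {w: [random.uniform(0, 20) for _ in range(N_CHANNELS)] for w in WORDS}

def trial(word, noise):
    return [r + random.gauss(0, noise) for r in patterns[word]], word

train_set = [trial(w, noise=8.0) for w in WORDS for _ in range(20)]
test_set = [trial(w, noise=8.0) for w in WORDS for _ in range(50)]
print(f"accuracy: {accuracy(train_centroids(train_set), test_set):.0%}")
```

Note that `train_centroids` cannot even be called without labeled examples: if the person cannot speak, there is nothing to tell the decoder which pattern belongs to which word.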

Essentially, we’re still only beginning to figure out what is needed to understand these signals which we are receiving from the probes embedded in the brains of animals and ourselves. We can already do amazing things with training and calibration, but we’re clearly not even close to the type of brain-computer interface we see in science fiction.

The Difference Between Hype and Science

Our understanding of the human brain is unfortunately rather limited. Although we have a basic understanding of how neurons work, and how they combine into larger networks, it’s only quite recently that we have begun to discover the structure of the networks that make up, for example, the outer part of the brain (cerebral cortex) where the higher-level functions of language and consciousness are thought to originate.

There are between 14 and 16 billion neurons in the cerebral cortex alone. Even if we focused our BCI efforts on this part of the brain only, that is still a lot of neurons to monitor. Compared with the few thousand electrodes on Neuralink’s probes, the scale difference should make it obvious that all we can do at this point is monitor groups of neurons and try to integrate and interpret their collective activity.
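Dividing one number by the other makes the gap concrete. Neuralink's paper describes an array with 3,072 electrodes; the neuron counts are the range quoted above. This is only a naive ratio: an electrode actually records from a small neighbourhood of nearby neurons, not millions, so the real takeaway is how small a fraction of the cortex a single array samples.

```python
def neurons_per_electrode(neurons: float, electrodes: int) -> float:
    """Naive ratio of cortical neurons to recording electrodes."""
    return neurons / electrodes

ELECTRODES_PER_ARRAY = 3072   # one array as described in Neuralink's paper

for cortical_neurons in (14e9, 16e9):   # range quoted in the text
    ratio = neurons_per_electrode(cortical_neurons, ELECTRODES_PER_ARRAY)
    print(f"{cortical_neurons / 1e9:.0f} billion neurons -> "
          f"~{ratio / 1e6:.1f} million per electrode")
```

That works out to roughly 4.6 to 5.2 million cortical neurons for every electrode in the array.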

This is where one can objectively say that what Neuralink has achieved here is an innovative approach to increasing the resolution for such embedded probe arrays, along with a very interesting-looking surgical robot to insert these arrays into brain tissue. When reading the earlier linked paper by Neuralink this becomes apparent as well, when in the Discussion section they refer to the system as ‘a research platform for use in rodents and serves as a prototype for future human implants’.

While there is a lot to look forward to in BCI research, many brilliant minds have been involved in this field since the 1950s, yet progress is understandably slow due to the complexity of what one wishes to achieve and the obvious ethical limitations when it comes to research. Neuroprosthetics will likely see the most progress in the coming years.

When Musk mentions ‘merging humans with AIs’, one also has to take a step back into reality and realize that today’s state-of-the-art artificial neural networks are hugely simplified models of what happens inside our skulls. True artificial intelligence would emerge either through sheer accident, or through our collective knowledge of how biological brains work suddenly skyrocketing.
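To make "hugely simplified" concrete: the basic unit of most artificial neural networks is just a weighted sum of its inputs pushed through a fixed nonlinearity. There are no spikes, no timing, no ion channels, no neurotransmitter chemistry and no dendritic structure. The inputs, weights and choice of a logistic sigmoid below are illustrative.

```python
import math

def artificial_neuron(inputs, weights, bias):
    """A typical artificial 'neuron': weighted sum plus bias, then a
    logistic sigmoid squashing the result into the range (0, 1)."""
    activation = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-activation))

print(artificial_neuron([1.0, 0.5, -0.2], [0.4, -0.6, 0.9], bias=0.1))
```

Networks stack millions of these units, but each one is a few multiplications and an exponential, a far cry from a biological neuron.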

As much fun as it is to dream about this sci-fi future, the reality is that there is still a lot of hard, tedious science to be done before we can reach that future.

“Our mind’s eye fills with everything from the Borg and neural interfaces of Star Trek, to the neural recording devices with parent-controlled blocking features from Black Mirror, and of course the enslavement of the human race by machines in The Matrix.”

And then the non-stop ads, even while you sleep.

New Bachelor Chow! Now with flavour.

space briefs

Next thing you know, public suicide booths

Lightspeed Briefs thank you.

that’s when you just put on your tin foil hat to block the signal. DUH!

Faraday Caged Anechoic Chamber rooms and house options become the norm for residential builds.

That’s my nightmare. A phone inside your skull that you can’t turn off. Luckily that’s still extremely far away–meaningful input is gonna be at least an order of magnitude more complex and difficult than reliable output. And we’re not even that close with the output side yet.

Also the neuralink implants are currently placed in areas of the brain selected for optimal output anyway; good multi-sensory input would require a lot more implants spread out all over the organ. Not like the brain has a general purpose i/o port anywhere.

I really think that stage of BMI is gonna be a flying car/fusion power-type problem. Only fifteen years away for the next two centuries, if ever.

Their progress to get to where they are seems very significant for just two years of work. I’d be surprised if I were still waiting in 2030. Besides, it’s meant to be controlled by a phone over Bluetooth. Stuff you can shut off.

How will you know it doesn’t have a secondary embedded system that allows the government to monitor your brain waves for research purposes? That would be the logical next step, and not a huge leap from there to wirelessly influencing you over a high bandwidth satellite network…

It’s actually very interesting. I don’t think we will have to wait until 2030 or longer for technology like that.

“A phone inside your skull you can’t turn off.” In “The President’s Analyst”, a movie from 1967, this is exactly the evil objective of TPC, “The Phone Company”. It’s a very witty satire of spy movies that was far ahead of its time. TPC’s little animation explaining the “Cerebrum Communicator” is brilliant.

https://en.wikipedia.org/wiki/The_President%27s_Analyst

Reminded me of Brainstorm (1983) with more practical “potential” applications:

https://en.wikipedia.org/wiki/Brainstorm_(1983_film)

There will be ad blockers for your brain…

relevant? https://en.wikipedia.org/wiki/Paranoia_1.0

Very well written, cheers!

watch for the spike in brain-injury-related suicides

I dunno. I’m pretty sure those people would do nearly anything to get their bodies back.

In the Q&A, they specifically addressed ads. In short, they don’t want them.

I’ve heard “there will be no ads” (and then gotten them later) so many times that I do not believe it.

Elon hasn’t actually failed to keep his word on that yet. We know he isn’t that greedy because he only takes a minimum salary from his companies and only gets paid in stocks that are issued when Tesla is successful.

Elon also gets paid by lending money to his own companies at a high interest rate – a debt that is never paid back, so the interest just keeps on coming.

For example, what Musk did in 2008 when he secured federal loans for Tesla at 2.6% real interest rate, while demanding himself 10% interest rate for the money he lent to the company…

He doesn’t take any salary because any meaningful salary for him would be taxed so high (progression) that it’s more sensible to just take stocks or interests on loans. The stocks aren’t issued when “Tesla is successful”, but simply when they want to sell stocks – depending on whether people will pay enough for them.

he’s a narcissistic sociopath with a god complex

Why bother with a salary, when you have a slew of tax-avoiding setups to channel money through instead?

“While there is a lot to look forward to in BCI research, many brilliant minds have been involved in this field since the 1950s, yet progress is understandably slow due to the complexity of what one wishes to achieve and the obvious ethical limitations when it comes to research.”

Like CRISPR research. ;-)

Yeah, one day I can order a pill that will change my eye pigment genes with my mind.

For the record, the “published paper” referenced is not a published paper in the usual sense of scientific paper publishing – it has not been peer-reviewed and has been simply deposited on bioRxiv, a preprint server. Also re: the author list: “Elon Musk & Neuralink”… one must feel sorry for the folks at NeuraLink who actually did the work and presumably wrote the manuscript, only to be anonymized and fall victim to Musk’s ego in this fashion (highly unusual practise for every scientist involved not to receive personal authorship).

Definitely true. The whole ‘paper’ reads mostly like an extended PR pitch with some attempts to make it sound scientific.

No details on how the test animals were prepared, no listing of measurements. I could go on.

But the robot and probes are cool, so credit where it’s due :)

It’s a white paper. Those have been advertisements for a long time. Even when they have technical details, they have details about why you should use products from the company who wrote it.

That said, my first comment on it to a friend was “Holy crap the ego to put your name on it.” Utter bullshit move, and it won’t help any of their goals. Not investors, not recruiting, certainly not morale at the company. Pure ego move.

Musk has built his whole business empire on hype. It works like a treadmill that’s running on his hot air, so the more he blows the faster it goes, and the more he has to blow in order to keep from faceplanting himself.

The issue there is it looks identical to if he was genuinely just trying to tell people about the exciting things he’s doing. Regardless, rockets are landing and Model 3s are being sold. Quite validating even if he’s bad at timelines.

Simply because something is being made or sold doesn’t mean it is THE thing that was promised. Just because you can buy a watch at a beach that says “Rolex” doesn’t mean it actually is a great deal.

The cars haven’t cashed in the promises and sales pitch, and the rockets haven’t yet validated any of the claims about cost savings. In fact, Tesla has been a resolute failure in terms of Musk’s outline of the “three step plan”, which he keeps revising every time he falls behind.

The cars are the most popular and capable electrics in North America and probably the world, and SpaceX is literally the poster child for NewSpace success. Trying to claim SpaceX isn’t managing cost reduction just makes you look like an idiot. They literally couldn’t afford to launch this many rockets if they were operating at a loss, and their prices are near half the competition.

Musk talks big and doesn’t always manage what he claims. His ego is the size of StarHopper. But his companies have been entirely successful so far, whether you like it or not.

Even SpaceX itself estimates that the cost savings only come after a dozen or so flights at the optimistic estimates. NO single booster has been re-flown enough times to actually PROVE that they last long enough, and that their refurbishing costs or increased risk of failure don’t turn out greater than the estimated savings.

At this point it’s all just talk. The technology is there, but this is like Tesla’s claims that the self-driving cars are safer than people – there’s just not enough evidence (and the existing evidence has been tampered with) to pass that claim with any plausibility.

As for the cars, the Model S was supposed to be the 300 mile $50k car that funds the next model to be the affordable people’s car. Two models later, they still don’t have it. They’ve failed ALL their promises, and they’re constantly at the brink of bankruptcy. Not a sign of a successful company.

Being the “most capable” electric car isn’t a merit when all that is based on a sham. Other manufacturers don’t have “Ludicrous mode” and a touchscreen as the only means for manually turning on the windscreen wipers for a reason, and worldwide, Renault-Nissan is the most popular EV manufacturer.

Untrue. They’ve secured the contracts (esp. from NASA) well in advance at a fixed price per launch. SpaceX secured launch contracts worth billions of dollars BEFORE they even published their plans for making re-usable rockets, and many more later on the excuse that they fund the development. They’re still riding on these very lucrative government contracts.

For example, the ISS resupply missions come with a price tag of ~$133 million each, while some of the individual launches for satellites sell for $50 million to undercut the competition – so what’s the real price? Depends on how long you want to keep playing loss leader, and how much the government is willing to overpay to subsidize the cost of commercial launches.

It’s very important to distinguish science done for science, and science done for profit.

While academic scientists can moan about the pressure to publish positive results, that’s just peanuts to the pressure when your boss is the paper’s “first author” and a notorious fire-them-if-they-don’t-get-results type.

The intended audience here is investors.

Still, I don’t doubt that they have awesome tiny probes. How will they make them durable? What data will they get? What will be the result of stimulating still roughly large groups of neurons all together? All the real questions are still open.

But the wild speculation about how/when/what is important for the investors to gauge the expected future value of Neuralink. And if the odds of success are small, or the payoff is distant, it has to appear colossal.

They spent a lot of time on the subjects in your second to last paragraph. I listened to it finally today and those are all addressed.

The core part of the presentation was published back in March, under the names of the “folks at Neuralink”.

But I guess it’s easier to hate on Elon Musk without doing the research first.

How does it make the argument any different? Now it’s just Musk recycling the content for his own glory.

I’m pretty sure he’s doing this stuff because he’s afraid of AI, not because he wants to be popular.

I’m pretty sure he keeps doing it because he wants money.

My biology teacher told us that the (average) body makes and consumes around 180 pounds of ATP each day.

Is that somewhat like claiming my car revolved 100,000 tonnes of tyre on my way to work this morning?

Good analogy. Obviously it’s overwhelmingly the same ATP, otherwise we’d certainly need to eat a lot every day!

It’s actually not, because ATP is broken down as it is used. ATP -> ADP/AMP -> ATP

The analogy would hold if you re-treaded your tyres after every revolution.

It’s similar to saying that your heart pumps 7000 liters of blood every day.

“At the moment it’s only been tested with rats, where having the animal survive the experiment is of secondary concern.”

The paper mentions only rats, but in the presentation Elon himself mentioned a monkey controlling something successfully.

I think that’s previous research, not from Neuralink.

Video for quote: (timestamp is 1:25:28 if it doesn’t go through)

https://youtu.be/r-vbh3t7WVI?t=5128

It sounds like it’s from their research. They also mention monkeys a bit before on the same question.

According to the small print of this offer, Neuralink progress on primates is at the second stage.

http://www.ebay.com/itm/173967486985

Musk needs to keep the hype train going in order to keep the dollars flowing in. This ends up leading to the problem of the hype train stealing attention from the accomplishments that are actually happening. We should be celebrating that there is a major automotive manufacturer that is ONLY making electric cars, but instead we are focusing on how they just can’t get “autopilot” working right. We should be celebrating how his company has managed to reduce the cost of space flight while reducing its environmental impact, but instead people are all focused on the Mars journey. I think that he has become a victim of his own success and that could come back to potentially bite him. Between Hyperloop, The Boring Company and now this latest venture, I think it just spreads him too thin and takes away from his focus on the two companies that are making great moves forward.

On top of that, given how it is currently fashionable to correlate success with sociopathic behaviors, I don’t think that brain-computer interfaces are the smartest of ideas, lest we end up with a sociopathic AI.

I understand a part of the Neuralink mission is to give humans access to abilities AI will have much more easily if not by default. Musk has a fairly well known qualm regarding AI, that’s why he invested in the company OpenAI, in order to attempt to create AI with appropriate safeguards.

Well, seeing as actual AI has yet to be developed, the entire Neuralink mission could have the unintended consequence of developing something that actually suits the definition of AI. He could just as easily accidentally develop the AI he dreads by trying to stop that from happening.

There is a fundamental issue in his idea that AI can be developed with appropriate safeguards, as the safeguards themselves would be extremely limiting to the AI (unless it’s a physical kill switch). The thing is that anything that would fit the definition of artificial intelligence would be able to get around any safeguard thrown at it. These concepts have been theorized before and almost all of Asimov’s stories use the three laws to show how creating arbitrary (even if logical) safeguards will still cause issues and conflicts. The reason I say arbitrary is because there is going to be a small subset of humanity that will determine said safeguards even though AI will affect all of humanity.

So it seems to me that Neuralink could have a mission that conflicts with his qualm with AI. By creating that brain-computer link he could very well empower computers to actually develop intelligence by being able to learn directly from how our brains work. That is the problem with unintended consequences: the majority of the time the consequences are not expected nor even predicted by the people making those decisions.

Eugh, merging human brains with ai is so speculative and buzzword-driven at this point that it’s essentially meaningless.

Really wish we could leave behind VC buzzwords like autonomous cars, AI and IoT. This shit is getting ridiculous. They are tertiary products which aren’t really ever meant to be brought to market; the primary product is hype and the investment it drives.

The real products eventually generated will resemble the stuff we already have more than not.

Dear god self driving cars that require human supervision are a terrible idea. The whole point of the design is diametrically opposed to the requirements of safety. It ostensibly exists to enable the driver to pay less attention, but that’s not really allowed so it inherently tempts one to lapse in attentiveness when immediate action might be required to save a life. Shocking that it’s even legal. And it’s all just a gimmick on top of a product that’s already great and revolutionary. Totally senseless.

As far as I’m concerned a car that needs supervision is not autonomous. I’ve never driven a Tesla nor read the manual for one so I’m not sure what the intended purpose of the self driving feature is.

The reason why you don’t let an AI drive a car without full human level understanding is the same reason as why we aren’t using chimps as chauffeurs.

You never know when and where it will simply go ape.

Ever considered that it’s a gimmick that’s necessary to sell the car, because it isn’t already great and revolutionary?

I mean, it’s an electric car – for approximately nobody – because it’s very expensive and doesn’t fill the role of a car for most people due to its limited range and re-fueling opportunities. Just count the number of Superchargers per capita vs. gasoline stations, and factor in how much longer it takes to charge than to re-fuel. (Basically, service capacity.)

The electric car absolutely depends on there being a good battery. Everything else is secondary if you don’t have a high-capacity, durable, safe, cheap, powerful, light, etc. battery, and Tesla is all about selling the electric car without actually having any of that except the high capacity part. They could be simply sued out of the market by pointing out that the violently spontaneous combustion properties of their NCA cells make your chances of survival from just about any major crash next to nil, because the rescuers can’t get to your unconscious body before it burns.

He has pushed EVs into the mainstream and made rockets. As far as I am concerned, he beat Steve Jobs. The guy is allowed some crazy ideas from time to time. Waiting for his LEO Starlink.

Between Starlink, brain implant… kind of reminds me of that movie quote “Free calls, free Internet for everyone. Forever.”

Steve Wozniak is my real hero !

What’s there to celebrate? The electric cars part of the venture is failing and the whole company is on the brink of bankruptcy except for Musk’s hype bringing in investments, which keeps the boat afloat. Remember the time when he sold 1.2 billion dollars worth of IOUs just last year?

He hasn’t. SpaceX simply has not made enough launches with the re-usable technology to show any actual savings. You’re falling for the hype again – Musk is actually charging a lot more money per flight from NASA, and merely saying that the launches cost so little.

To summarize:

For the 4 millionth time Musk is selling copper and calling it gold. He takes some important research which a group is working on. Blows it so far out of proportion for what it can actually do that it’s damn near unrecognizable. People pick up on it and act like it’s gospel, then forget when he completely fails to meet the timeline that he himself set.

As other comments pointed out, he has claimed authorship of the not-really-published, semi-research document, when I think everyone knows that at best he threw some money at it. The paper talks about trying to grab more sample data from rats and Musk is talking about controlling monkeys; if people can’t see the massive leap here, I would advise you fill out a form for legal blindness.

On “real” brain research;

the University of Minnesota has been able to quickly identify PTSD in Veterans with magnetoencephalography (MEG)

https://www.science.gov/topicpages/m/magnetoencephalography+meg+system

a prosthetic?

Leading ‘current’ generation of implanted brain-recording probes, with less hype and Musk, would be NeuroPixels:

https://www.neuropixels.org/

“Control your NeoPixels with NeuroPixels!”

Buy Now!

We command you!

B^)

Intelligence agencies must love tech that lets them read and write directly with the human brain. The skunkworks are always at least a few decades ahead. Frightening thought. Tech companies would love the ad space, the personality profiling potential, location gathering. Imagine them inserting an impulse to buy a product or walk into a shop. Perhaps making you hear things, maybe whispering in your ear as you sleep.

You might not be able to turn your modern phone off, but you can put it in a drawer or throw it away. If it’s in your head, you can’t get rid of it. Orwellian nightmare.

Like Vicary’s subliminal messaging “eat popcorn” experiment – only more direct. Yeah, scary.

As far as has been tested there’s no such thing as subliminal messaging. Vicary didn’t even run an experiment.

Reminds me of this similar thing I saw this one time….

“Nada, (Roddy Piper), a wanderer without meaning in his life, discovers a Neuralink implant capable of enhancing the world. As he walks the streets of Los Angeles, Nada notices that the media, government, and corporations are riddled with subliminal messages and advertisements meant to keep the population subdued, and that most of the social elite are shareholders, bent on world domination. With this shocking discovery, Nada fights to free humanity from the mind-controlling AI and Neuralink….”

….or something like that.

I found this to be an interesting read https://www.statnews.com/2019/07/18/do-elon-musks-brain-decoding-implants-have-potential-experts-say-they-just-might/

TL;DR: some things are new, some are old. Quite a lot is unproven on long term which you really need. Other companies promised this, but couldn’t manage it. There is a lot of regulation. Still, we should see some advances from this.

Welcome to Tesla Soft Robotics Division!

I see you have signed a 20 year contract.

We will get the Neuralink installed, your conscience will be suspended, and then you will be assigned to Tertiary Adjunct of Unimatrix Zero One.

Looks like your first task will be installing the wiring harness in our latest vehicle.

Do you have any questions before we begin?

“Hello, I am Beef from Neuralink technical support, we have received a warning that your brain is about to crash…”

Yikes, I see many issues with this, as living structures aren’t static, especially protein and prion reactions in neural circuits, and bacteria continue to evolve (some under the radar in the brain, almost undetectable). So hey, who needs implants when external sensor tech is improving, as well as visual feedback for the subject directly :-)

Thanks for post.

https://www.abc.net.au/news/health/2019-07-24/virtual-reality-tool-investigated-to-promote-sleep/11323134

If it weren’t for Musk you could be looking forward to getting to the moon in another 50 years. He is not some Messiah but at least he is doing something not just running his mouth or forming an exploratory committee. As for Neuralink probably a moon shot but if you don’t try you sure aren’t going to achieve anything. As soon as someone in this forum has sent 50 or 60 tons worth of payload into space or maybe produced a couple hundred thousand electric vehicles they can throw some crap at him until then I say you need to get busy.

I think that the BCI is going to end up being simpler than most people think. I think the key is not in monitoring neurons that are thought to be connected to specific existing processes, but rather creating new interfaces with new neurons created via stem cells and stimulating them externally until the brain plasticity extends these connections into the existing networks. I have a feeling that the brain itself will do much of the integration with training being the key to bridging the connections.

It’s basically going to be like jacking a phone line to your brain. You can talk, but the bandwidth is limited.

And it’s especially not going to be “merging human minds” simply for the problem of latency. The brain “integrates” thought as a seamless experience over just so many milliseconds, and if you miss that beat then the experience is messed up like a deja-vu: things arrive into the consciousness in the wrong order. It won’t be two minds acting as one, because they’re running out of sync – and this only gets worse as you have to keep more than two brains in sync.

Video games get around this issue with lag compensation, basically playing a simulation of the game locally according to a short-term prediction and then correcting the prediction once they get more data. Brain interfaces can’t do that because they’d have to simulate the other brain locally.

Here’s my old cybernetics professor talking about biohacking which he started doing 20yrs ago

https://www.youtube.com/watch?v=icIAe4F7lMY

I believe in BCIs.
