The field of computer science has undeniably changed the world for virtually every single person by now. Certainly for you as a Hackaday reader, but also for everyone around you, whether they’re working in the field themselves, or are simply enjoying the fruits of convenience it bears. What was once a highly specialized niche field for a few chosen people has since grown into a discipline that not only created one of the biggest industries in modern times, but also revolutionized every other industry, some a few times over.

The fascinating part about all this is the relatively short time span it took to get here, and with that the privilege to live in an era where some of the pioneers and innovators, the proverbial giants whose shoulders every one of us is standing on, are still among us. Sadly, one of them, [Tony Brooker], a pioneer of the early programming language concept known as Autocode, passed away in November. He reached the remarkable age of 94, but the truly sad part is that this might be the first time you hear his name, and there’s a fair chance you’ve never heard of Autocode either.

But Autocode was probably the first high-level computer language, and as such played a fundamental role in the development of whatever you’re coding in today. So to honor the memory of [Tony Brooker], let’s remember the work he did with Autocode, and the leap in computer science history that it represented.

The Early Days

When it comes to ancient programming languages, Fortran and COBOL are always prime candidates to mention. Created in the late 1950s, these languages are surrounded by an almost mythical vibe. Developers much younger than the languages themselves, who have never seen a line of COBOL in the wild, will tell each other equally mythical stories about a distant relative who once worked with it in a laboratory or some other too-good-to-be-true environment.

Not that age really matters here; assembly is even older, and it is highly unlikely that it will ever lose its relevance. However, the difference is that assembly is a low-level language, while most other languages out there, including Fortran and COBOL, are high-level languages. Programming in assembly means writing in whatever instruction set a processor provides. Assembly languages are just handy mnemonics for the raw bits that the machine speaks. High-level languages, on the other hand, care a lot less about a processor’s instruction set, and abstract those details away to resonate more with the dynamics of the human mind instead.

Take a simple multiplication as an example: in a typical high-level language, you’d simply write a = b * c, while in assembly, you’d write whatever the target processor itself knows about multiplication. You might load the multiplicands into dedicated registers and the CPU might have a multiplication instruction, or it might need to loop through a series of additions. You have to know how the chip works. In a high-level language, it’s going to be a compiler’s task to figure out how to do the actual multiplication and create the corresponding assembly code for it.
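To make the “loop through a series of additions” case a bit more concrete, here is a rough sketch in Python of the kind of work a compiler might have to spell out for a CPU without a multiply instruction. The function names are made up for illustration, the sketch only handles non-negative integers, and a real compiler would of course emit machine instructions rather than Python.

```python
def multiply_by_repeated_addition(b, c):
    """Multiply two non-negative integers using nothing but addition."""
    result = 0
    for _ in range(c):
        result += b          # add b to the running total, c times over
    return result


def multiply_shift_and_add(b, c):
    """Same result in fewer steps: add a shifted copy of b for each set bit of c."""
    result = 0
    while c > 0:
        if c & 1:            # lowest bit of c is set, so this copy of b counts
            result += b
        b <<= 1              # next bit of c is worth twice as much
        c >>= 1
    return result


# What the programmer writes in a high-level language is just: a = b * c
a = multiply_shift_and_add(6, 7)
print(a)  # 42
```

Both functions compute the same product; the second is closer to what hand-written routines typically do, since it needs one pass per bit instead of one pass per unit of c.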

The Missing Link

So how does Autocode fit into all this, and what is it anyway? It was designed after assembly was invented, but before any high-level language existed. Assembly itself was a rather hot new topic at this point in time, when people were still carving their bits by hand. Well, obviously not literally, but “programming” the computers of that time was tedious, non-intuitive, and error-prone work. The exception was an early compiler-like construct named Autocode, developed by [Alick Glennie].

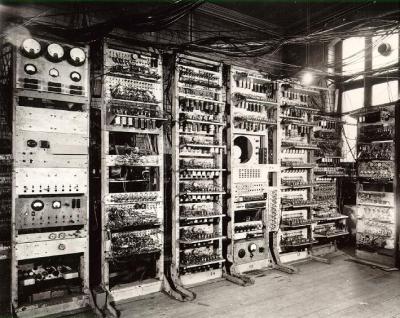

One computer of the early days was the Manchester Mark 1, the direct successor of the Baby, and the machine [Alan Turing] was working with in the computer lab at Manchester University. It supposedly had a rather nasty instruction set that made the tedious nature of machine-language programming even worse, which was the first motivation to create Autocode: taming the machine.

[Glennie]’s version of Autocode was a nice-syntax wrapper that remained otherwise machine-dependent, and we’d have to classify it as a low-level language. It wasn’t until the hero of our story, [Tony Brooker], came along to take a second shot at the idea of Autocode, that the first high-level language was written.

Legend says that one day, while on the way back to Cambridge where he was working as a computer researcher, he popped into the Manchester University computer lab, introducing himself and his work to [Alan Turing]. Mutual interest in their work ensued, and [Tony Brooker] joined the computer lab to create his life’s work as part of his task to make the Mark 1 easier to use: designing an intuitive language with human-readable syntax that would hide away all the low-level nastiness, a language that wouldn’t require wasting countless hours learning and deciphering each and every bit of the hardware, and that would ultimately turn the underlying hardware into a more or less irrelevant detail the programmer shouldn’t have to worry too much about. In short, it was planned as a true high-level language.

Development started in 1954, and the Mark 1 Autocode was ready the year after. Apart from the hardware independence, it also supported floating point arithmetic. While it had a few limitations with naming conventions and syntax, it was a big success in Manchester. [Tony Brooker] went on to extend the Autocode concept not only to other machines, but to the very concept of language creation itself. Taking Autocode further and further, he created the very first compiler-compiler in 1960, becoming virtually unstoppable with developing new languages.

Something To Build Upon

At a time when assembly was practically the epitome of convenience for operating a computer, the concepts of [Tony Brooker]’s version of Autocode took programming to the next level, and paved the way for a whole new realm of languages to come. And there was no one single Autocode language, just as there is no one single assembly language. Autocode is probably best thought of as a principle that has since evolved into the domain of compiled, high-level languages.

Unlike COBOL or Fortran, nobody codes in Autocode anymore. It’s a nearly forgotten relic of the past, regardless of the role it once played in the history of computer science. But the ideas that were embodied in Autocode live on. Without doubt, the creation of high-level programming languages was inevitable, yet it’s hard to fully appreciate today the work it took for pioneers like [Tony Brooker] to actually take the first steps towards it all those decades ago. Worth remembering.

Cute language. Reminds me of my (very machine-dependent) language that I wind up re-inventing for a certain level of embedded work… I’ll describe it here in case anyone is interested, or anyways because I’m nostalgic.

It’s kind of a brain-dead compiled forth. Like autocode, I used prefixes but on strings instead of on numbers… It generates assembler output.

"foo => emit foo directly to the assembler (a full line)

foo => push the assembler symbol foo (address of var or func) onto the stack

,foo => load variable value and push it on the stack

?foo => define global variable foo (32-bits, initialized to zero)

:foo => define label foo (beginning of function, typically)

.foo => call function foo

; => return

>foo => goto function foo (for tail-optimized calls)

The compiler is less than a hundred lines of C code, and then I define primitives for things like store-into-variable and conditional-branch and so on using the " assembler output. Then the rest of it can be relatively generic code, almost feels like forth. Its only feature is that it’s simple to implement, and on things roughly between the size of a pic18 and an stm32, it is much more convenient than raw assembly and has far fewer unknowns than C. Performance tends to suck, though.
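For anyone curious what such a prefix-driven compiler might look like, here is a minimal sketch. It is in Python rather than the C mentioned above, and it is only a guess at the general shape, not the actual implementation: the function name `compile_source`, the output mnemonics (`push_addr`, `push_value`, `call`, `jmp`, `ret`, `.word`) and the `store` primitive in the usage example are all hypothetical stand-ins for whatever a real target assembler would expect.

```python
# Hypothetical output templates, one per prefix character from the list above.
TEMPLATES = {
    ",": "    push_value {name}",   # ,foo  load variable value and push it
    "?": "{name}: .word 0",         # ?foo  define a zero-initialized global
    ":": "{name}:",                 # :foo  define a label
    ".": "    call {name}",         # .foo  call function foo
    ">": "    jmp {name}",          # >foo  tail call: jump instead of call
}


def compile_source(source):
    """Translate prefix-language source text into a list of assembler lines."""
    out = []
    for line in source.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith('"'):
            out.append(line[1:])    # "foo  pass the rest of the line through as-is
            continue
        for token in line.split():
            if token == ";":
                out.append("    ret")
            elif token[0] in TEMPLATES:
                out.append(TEMPLATES[token[0]].format(name=token[1:]))
            else:
                out.append("    push_addr " + token)  # bare foo: push its address
    return out


program = "\n".join([
    "?counter",
    ":reset",
    ",counter counter .store ;",
    ":main",
    ">reset",
])
print("\n".join(compile_source(program)))
```

The whole thing is a single dispatch on the first character of each token, which is presumably why this style of compiler stays so small.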

Cheers!

‘Autocode’ is not a particular language; rather, it was an early computer science term for a class of tools that used a compiler. For example, COBOL and Fortran.

^This… Algol, Cobol, Fortran and others were all in development at nearly identical times… with Cobol and Fortran still in “popular” use (I don’t know what happened to Algol).

I think Algol morphed into Pascal, which got more or less superseded by C, and then C++.

We had something at Data General, called DG/L which was Algol/Pascal -like. I enjoyed programming in it, which must mean that the IO was more flexible than Pascal’s. Other than that, I remember little about it.

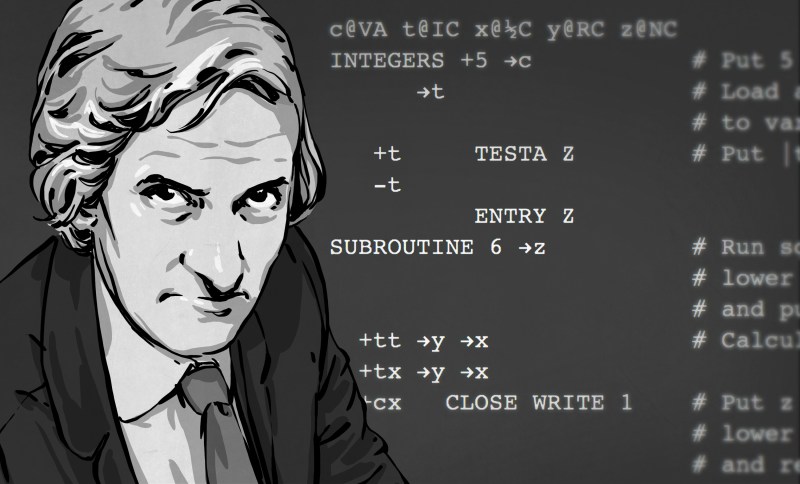

So why is Dr. Who in the banner photo?

YESSSSSSS!!!!!! I think exactly the same!!!! :-D

I only knew him when he was close to retirement age but that could be quite an accurate representation of him when he was younger.

I was lucky enough to have Professor Brooker as my tutor at Essex University in the 1980s. One of the pioneers. RIP

Isn’t that Glennie’s Autocode syntax in the background image…?

“… “programming” the computers of that time was tedious, non-intuitive, and error-prone work”

“of that time” ??? … The description sounds familiar… ;-)

Best regards,

A/P Daniel F. Larrosa

It’s not done until it can translate high level PowerPoint and whiteboard presentations directly into working code. :P

Tony Brooker did terrific work, however this article seems to be trying to credit him with inventing high level languages and compiling code. It’s hard to assign those concepts to any one person, since many worked on the ideas in parallel. However, if you have to pick one person, that person has to be Grace Hopper. Her A-0 System from 1951 would be more what we would call a linker today, but it was a tool for reusable code and was arguably “high level adjacent”. Her A-2 System, developed in 1953, was generally considered high level, since it incorporated support for what was then being called “pseudocode generators” (what we now call high level language compiling). She wrote a nice article summarizing the state of everyone’s work in this area in 1955 and you can read it here. If you don’t want to credit her, several of the people she cites would also be fair candidates for “inventing” high level languages. She also likely coined the term “automatic coding”, using it as far back as 1951. Though as others have said, it wasn’t a language, but an idea for a process to simplify some of the steps in programming that led to the idea of “pseudocode generators”.

http://bitsavers.trailing-edge.com/pdf/computersAndAutomation/195509.pdf

Credit where credit is due. Please do not contribute to the erasure of women’s contributions to math and science.

Great article by G.M. Hopper.. she nicely summarizes the state of the art in 1955. Thanks for that link!

Not to take away from your point, but Hackaday previously featured a full article on Grace Hopper.

https://hackaday.com/2017/12/05/disrupting-the-computer-industry-before-it-existed-rear-admiral-grace-hopper/

I just really like these more biographical posts in general.

The last paragraph is unnecessary and divisive rhetoric. As you said, this is an engineering and science website. A simple correction, especially one as informative and detailed as yours, is perfectly fine.

@Quinn: Sven actually had a link out to our article on Grace Hopper, where we absolutely have her as the mother of the compiler, and I edited it out. Not because of wanting to keep her down, but because the transition into talking about Fortran was awkward with the flow of the article in the last two paragraphs.

I actually spent quite a while trying to re-work that link back in and gave up in the end. Unfortunately you can’t tell all stories in a single article, and it ended up on the cutting-room floor.

As @Nebk said, definitely go check out our article on her. She’s a real hero. Great art by Joe too!

Hopper gave a talk at UMass when I was there as a grad student. I think she was working as a sort of goodwill ambassador for DEC at the time. She handed out “nanoseconds”: foot long lengths of 22AWG telephone wire. It was a well-rehearsed talk, nothing dramatic, and she was getting on at the time, so it was short. She was still sharp as a tack and one would need to be on one’s game in order to work with her.

This was a common problem even long after it was solved.

We didn’t have internet or any practical way to convey digital information.

If you were lucky enough to have access to a computer and you weren’t in a university or bank then you were on your own and without software resources.

You program in machine language and not asm because you don’t have an assembler.

Assembly: JP 1BD7

Machine code: C3 D7 1B

So the first thing you write is a disassembler so you can lift the bonnet to understand the firmware.
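As a small illustration of that assembly/machine-code relationship, here is a sketch of both directions for a single instruction. It assumes a Z80 (the comment doesn’t actually name the CPU), where an unconditional `JP nn` is the opcode byte C3 followed by the 16-bit address with the low byte first. The function names are made up, and a real assembler or disassembler would need a full opcode table rather than one hard-coded instruction.

```python
JP_NN = 0xC3  # Z80 opcode for unconditional JP nn (0xC2 is the conditional JP NZ,nn)


def assemble_jp(address):
    """Hand-assemble 'JP address' into its three machine-code bytes."""
    if not 0 <= address <= 0xFFFF:
        raise ValueError("address must fit in 16 bits")
    # The Z80 is little-endian: low byte of the address comes first.
    return bytes([JP_NN, address & 0xFF, address >> 8])


def disassemble_jp(code):
    """Turn three machine-code bytes back into the 'JP nnnn' mnemonic."""
    if len(code) != 3 or code[0] != JP_NN:
        raise ValueError("not a JP nn instruction")
    address = code[1] | (code[2] << 8)
    return "JP {:04X}".format(address)


machine_code = assemble_jp(0x1BD7)
print(" ".join("{:02X}".format(b) for b in machine_code))  # C3 D7 1B
print(disassemble_jp(machine_code))                        # JP 1BD7
```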

I then started writing an assembler but never finished. There wasn’t really any point for me to have an assembler as I was by then perfectly comfortable writing directly in machine code.

Then the next thing you do is to write your own high level language.

All of this had been done before, probably by many people as we just didn’t have the ability to share resources in any practical way.

What firmware?

EPROMS

Directed mainly to Sven Gregory

Please help me get over a brain barrier and suggest a modern language that might work for me.

I have great difficulty dealing with a language that has no apparent order.

In the 1960’s I had to learn FORTRAN using piles of Hollerith coded cards that became very voluminous as compilation required the addition of a stack for math functions. An orderly approach underlined successful program execution. I did not like it at all, but had to do it for completing a course.

In the early 1970’s I had to complete another course using FORTRAN. This time it was tedious but pleasurable and I designed a program to calculate the standard resistance values that provided any desired gain for an inverting or non-inverting operational amplifier circuit. I was exempted from the final. Order again formed the basis for successful execution.

In the late 1970’s I totally embraced BASIC that eventually turned into ROCKY MOUNTAIN BASIC for me. Had a lot of fun with it in another course. Again order was an underlying requirement.

Finally in the 1980’s I had to design and construct a totally interrupt driven Motorola 6809 microprocessor based data acquisition system that would determine and print out the exact speed of a test car on a circular track by sensing buried wires, to determine the lateral adhesion of tires (an ambulance was on standby). The 2k program was written first in pseudo-code, then in mnemonics; order was imperative.

So my mind became very order oriented.

I now get frustrated when I can not relate an obvious order in the programming process.

I see all the small single board processors used almost like toys by kids, and I want to fit in.

Thanks

Gary (KF4GGK)

I’m not sure if they fit into what you would consider to be “ordered,” but I’ve always found both C and C++ to be very enjoyable languages (C for most applications, C++ for graphics). They’re both high-level enough to be reasonably easy to learn, but also expose the raw assembly when needed for speed or reliability.

Additionally, these two languages (especially C) are simple and ubiquitous enough to compile and run on nearly everything, from vintage 8088/z80 and 6502/6510 systems to cutting-edge Core i9 PC’s. I seriously doubt there was a single CPU architecture released in the last 45 years that doesn’t have a compatible C compiler.

Thanks Andrew

I have several books on order:

Beginners Guide to C, FRICK

C Programming for Absolute Beginners, DAVENPORT

Welcome to the Miniature World of Wonders: The Arduino, DEBABITYA DAS

I will overcome my crutch for obvious order and work on being a programming poet.

Arduino is nice because the IDE includes links to example code which you can then modify to fit your needs.

If you want to learn C, you really should purchase a copy of “K&R”: “The C Programming Language” by Kernighan and Ritchie. I learned C from it and by trial and error.

Hi Antron

Found a new copy on AbeBooks. It is on order. Thanks for the suggestion.

Unfortunately I don’t have a direct answer for you here. In a way, software has simply become so complex nowadays that it has for the most part outgrown the strictly ordered way of programming as you know it, so languages like that haven’t been developed much anymore (apart from esoteric fun languages maybe). But take this with a big grain of salt, I wasn’t even alive for most of the timeline you mentioned and am therefore rather spoiled with languages.

As Andrew mentioned in the previous response, C might be a good candidate that resonates well with you for all the reasons he stated. C++ is a bit more complex subject in my opinion, as there are many ways to do “C++” (if you ask ten people what C++ means to them, you’ll get fifteen different answers, making things easily confusing). What Arduino uses is sorta somewhere in between those two languages, and while it won’t help you become proficient in either of them, it provides you plenty of shortcuts that make life a lot easier in the beginning. If you want to make things blink and move around with a microcontroller, Arduino would definitely be a good starting point for you.

Or take a completely different approach. Considering you would have to shift your whole way of thinking about programming anyway, you might as well go in a totally different direction and look into functional programming (Lisp, Haskell and such) or a hardware description language (HDL) like Verilog and VHDL. Anecdotal, but I still don’t fully get functional programming languages myself, I just feel I’m wired for imperative languages – maybe it’s the other way around for you. Maybe using an HDL to create your own digital hardware components in an FPGA is your thing. Or maybe a scripting language like Python could be for you: there’s plenty of variety in what you can do with it (including blinking LEDs on a Raspberry Pi), and a few lines can already get you some first successes.

Like I said, I don’t have a direct answer for you, but maybe this helps to get some new ideas.

Good luck! :)

I was his secretary at Manchester University and typed up his first draft of the auto code in 1958

The Warwick site that is linked in the post actually includes an online interpreter for anyone who wants to play with the language. Looking at the code samples they provide, it still has an assembly-like feel because each line is so minimal.

Before Atlas Autocode, autocodes were effectively high level assembly languages. Atlas Autocode was mis-named because it was not remotely like any of the previous autocodes. Atlas Autocode was in fact a high level language in the same class as Algol60, and was Brooker’s response to the Algol 58 design. He went one way with Atlas Autocode and the Algol committee went another (very similar) way with Algol 60. It is a mistake to classify Atlas Autocode along with the previous autocodes.

Not to argue with your point. Interestingly, the Atlas Autocode compiler (at least, the one at the University of Essex) allowed imbedded assembly language. I learned a lot from using that feature.

Look up Konrad Zuse. Look up Plankalkül. Zuse invented the first high-level computer language. In fact he wrote the first computer language. He also invented the first programmable computer. By the way, please look up how to use the word “probably” in a sentence.

I was one of the first Computing Science students at Essex. Tony Brooker and I both debuted in 1967. (Iain McCallum taught me Fortran!) Tony Brooker taught “Atlas Autocode” implemented on the University’s ICL 1909. I recall one of his lectures when he was asked a question. He stumbled (good-naturedly) and said “I should remember. I INVENTED it!” I liked Tony a lot.