With the rise of WebAssembly (wasm), it’s become easier than ever to run native code in a browser. Since wasm is, for the most part, just another platform to target, it would be remiss if Fortran were not part of this effort, which is why a number of projects have sought to bring Fortran support to wasm.

For the ‘why’, [George Stagg] makes the point that Fortran software packages like BLAS and LAPACK are still great for scientific computing. The ‘how’ is a bit more hairy, but it is getting better courtesy of the still-in-development LLVM front-end for Fortran (flang-new). Using it for wasm is not straightforward yet due to the lack of a wasm32 target, but as [George] demonstrates, this is easily patched around.

We reported on Fortran and wasm back in 2016, and things have changed somewhat in the intervening eight years (yes, that long). The Fortran-to-C translator utility (f2c) is effectively EOL, while LFortran is coming along but still missing many features. The Dragonegg project, which used GCC as a front-end for LLVM, was the best shot for Fortran and WebAssembly in 2020, but it is obsolete now. Classic Flang has been in LLVM for a while, but is set to be replaced by what is now called flang-new. [George] now hopes to find a way to get his patched flang-new code for wasm support into the project.

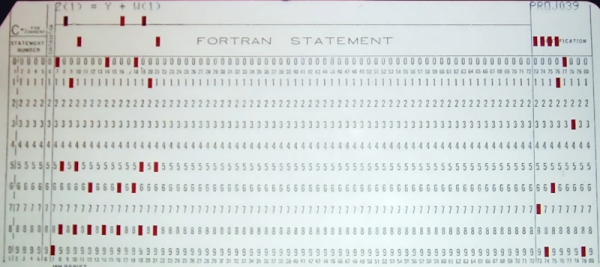

The article provides the diff for patching the flang-new toolchain to target wasm. While compiling the standard Fortran runtime, it then became apparent that the flang-new code assumes the target system’s sizeof() results are identical to those of the host system. That of course falls flat for wasm32, which uses 32-bit pointers where a typical 64-bit host uses 64-bit ones. One more patch (or rather, hardcoded hack) later, the ‘Hello World’ example in Fortran was up and running, clearing the way to build the BLAS (Basic Linear Algebra Subprograms) and LAPACK (Linear Algebra Package) libraries and to create a few example Fortran-for-wasm32 projects that use them.

The advantage of being able to use extremely well-optimized software packages like these when limited to a browser environment should be obvious, as is the benefit of reusing existing codebases. [George] certainly hopes that flang-new will soon officially support wasm32 and wasm64 as targets, and he is actively seeking help to make this a reality.