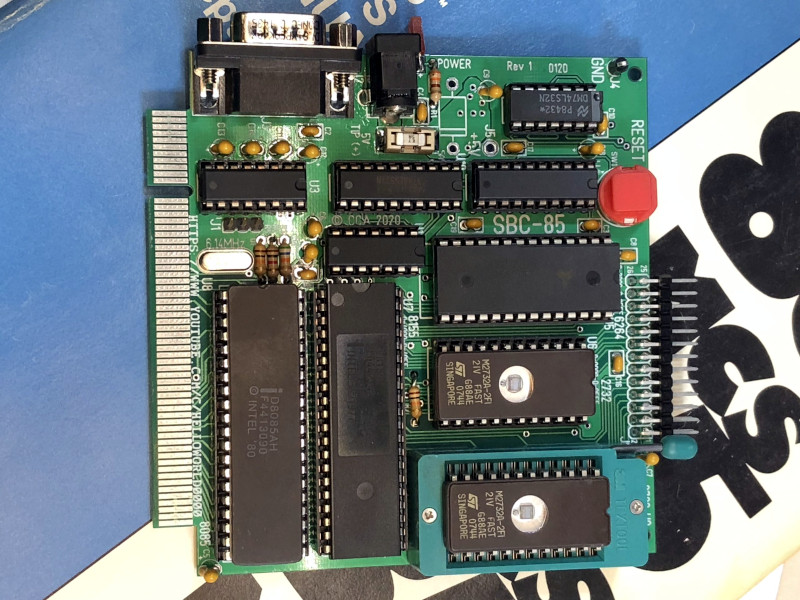

The world of 8-bit retrocomputing splits easily into tribes classified by their choice of processor. There are 6809 enthusiasts, 6502 diehards, and Z80 lovers, each sharing a bond to their particular platform that often threads back through time to whatever was the first microcomputer they worked with. Here it’s the Z80 as found in the Sinclair ZX81, but for you it might be the 6502 from an Apple ][. For [Craig Andrews] it’s the 8085, and after many years away from the processor he’s finally been able to return to it and recreate his first ever design using it. The SBC-85 is not wire-wrapped as the original was; instead, he’s well on the way to creating an entire ecosystem based around an edge-connector backplane.

The CPU board is an entire computer in its own right, as can be seen in the video below the break. It pairs the 8085 with 8k of RAM, a couple of 2732 4k EPROMs, and an 8155 interface chip. This last component is especially versatile, providing an address latch, timer, I/O ports, and even an extra 256 bytes of RAM. Finally there is some glue logic and a MAX232 level shifter for a serial port, with no UART needed since the 8085 has one built-in. A minimal computer built around this board therefore needs very few chips, something that competing processors of the mid 1970s often struggled to match.
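The 8155’s address latch exists because the 8085 multiplexes its low address byte with the data bus: AD0–AD7 carry A0–A7 early in each machine cycle and are captured when ALE falls. The Python sketch below models that demultiplexing plus a simple chip-select decoder. The memory map is purely hypothetical (EPROMs from 0000h, RAM at 8000h, the 8155’s RAM at F000h); only the device sizes come from the article, not Craig’s actual decoding.

```python
from typing import List, Optional, Tuple

# Hypothetical memory map: (device name, start address, size in bytes).
# Sizes follow the parts named in the article; the base addresses are assumptions.
MEMORY_MAP: List[Tuple[str, int, int]] = [
    ("EPROM0 (2732)", 0x0000, 0x1000),  # 4 KiB
    ("EPROM1 (2732)", 0x1000, 0x1000),  # 4 KiB
    ("RAM", 0x8000, 0x2000),            # 8 KiB
    ("8155 RAM", 0xF000, 0x0100),       # 256 bytes
]


def demultiplex(a15_a8: int, ad7_ad0_at_ale: int) -> int:
    """Rebuild a 16-bit address from the high byte (A15-A8) and the
    low byte latched from the multiplexed AD7-AD0 bus when ALE fell."""
    return ((a15_a8 & 0xFF) << 8) | (ad7_ad0_at_ale & 0xFF)


def decode(address: int) -> Optional[str]:
    """Return the device selected by a 16-bit address, or None if unmapped."""
    if not 0 <= address <= 0xFFFF:
        raise ValueError("8085 memory addresses are 16 bits")
    for name, start, size in MEMORY_MAP:
        if start <= address < start + size:
            return name
    return None


if __name__ == "__main__":
    addr = demultiplex(0xF0, 0x42)
    print(hex(addr), decode(addr))  # 0xf042 8155 RAM
```

Real glue logic usually looks at only a few high address lines, so on actual hardware the unmapped gaps in this sketch may instead mirror other devices.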

Craig’s web site is shaping up to be a fascinating resource for 8085 enthusiasts, and so far the system sports that backplane and a bus monitor card. We don’t see much of the 8085 here at Hackaday, perhaps because it wasn’t the driver for any of the popular 8-bit home computers. But it’s an architecture that many readers will find familiar due to its 8080 heritage, and it could certainly be found in many control applications before the widespread adoption of dedicated microcontrollers. It would be interesting to see where Craig takes this next: more cards, perhaps, or even a rival to the RC2014 over in Z80 country.

Everyone has their own stuff that they like. This kind of “primitive” (non-Arduino, non-Pi) stuff is one of the things I like, so thanks for this article and video. I own a Raspberry Pi, so I do move with the times somewhat, but I missed the whole Arduino revolution.

It can’t be your sole focus of course but please keep the primitive stuff coming!

Pretty sure the TRS-80 Model 100 was an 8085. Not sure if that qualifies as a “popular home computer” but they are classics.

Yes, the TRS-80 Model 100 I have contains an 8085; that’s where I did some of my first assembly language programming. Many desktop keyboards were also based on an 8085, especially those built by Cherry electronics.

The M100 was definitely popular, and so useful it’s still in use today because nothing else quite matches its mix of portability and long life on disposable batteries.

My first computer was an 8080-based misfit that failed in the market and was surplussed in the electronics magazine classified ads. Not having an ecosystem forced me to learn everything about programming it and was wonderfully instructive. The 8085 was basically the same CPU but simpler to power and interface and with a few extra instructions and features, particularly an on-board UART. I did work with the 8085 in industry in some embedded devices that used it. The Z80 was a far more capable superset of the 8080 but most Z80 code was unrecognizable to an 8080 programmer because the extended instructions were so pervasively useful.

For a moment I got the 8085 and the 8088 confused. It was the 8088 that IBM used in the IBM PC.

I haven’t looked at the 8085 in great detail but I wonder if it would have made a great replacement for the 8088 on the IBM PC. Having a serial port for free would have been neat.

What was the relative clock speed of the two like?

The 8085 is 8 bits, inside and out. IBM wanted the next step, so it would never have chosen the 8085.

However, the 8085 was used in the IBM Datamaster from 1981, so almost concurrent with the IBM PC.

The fastest 8085 was 6MHz, on par with the IBM PC’s clock, but I can’t remember if the 8085 divides the clock frequency internally before use. And the 8088 was 16-bit internally, which surely sped things up, and maybe it was faster simply because Intel had more experience designing CPUs by the time they got to the 8088.

The future was 16-bit, and IBM planned accordingly.

Internally, the 8085 has the register pairs BC, DE, and HL, and of course SP, which can address 64K.

One machine cycle took 3 clocks, so the effective speed was 2 MHz. And that doesn’t account for all the instructions that take three machine cycles!
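To put numbers on the clock-speed question: the 8085 divides its crystal frequency by 2 to get the CPU clock, most machine cycles are 3 T-states, and the opcode fetch takes 4 (or 6 for instructions such as INX, PUSH and CALL). The Python sketch below turns per-instruction T-state counts into instructions per second. The T-state figures follow Intel’s published 8085 timings but are worth double-checking against the datasheet before relying on them.

```python
# Assumed T-state counts for a few 8085 instructions (Intel 8085 timing tables).
T_STATES = {
    "MOV r,r": 4,      # one 4-T opcode fetch
    "MVI r,d8": 7,     # fetch (4) + immediate read (3)
    "LXI rp,d16": 10,  # fetch (4) + two immediate reads (3 + 3)
    "LDA addr": 13,    # fetch (4) + two address reads + memory read (3 + 3 + 3)
    "CALL addr": 18,   # 6-T fetch + two address reads + two stack writes
    "RET": 10,         # fetch (4) + two stack reads (3 + 3)
}


def cpu_clock_hz(crystal_hz: float) -> float:
    """The 8085 divides the crystal (X1/X2) frequency by 2 internally."""
    return crystal_hz / 2


def instructions_per_second(crystal_hz: float, mnemonic: str) -> float:
    """Throughput if the CPU executed only this instruction back to back."""
    return cpu_clock_hz(crystal_hz) / T_STATES[mnemonic]


if __name__ == "__main__":
    for name in T_STATES:
        rate = instructions_per_second(6_000_000, name)
        print(f"{name:12s} {rate:>10,.0f} per second")
```

With a 6 MHz crystal this gives a 3 MHz CPU clock and somewhere between roughly 170,000 and 750,000 instructions per second, depending on the instruction mix.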

The 8085 was an 8080 that needed less external support (no separate clock IC, for example) and added two I/O pins, with new instructions to drive them.

So it was easier to build with than the 8080, but lacked the improved instruction set of the Z80.

The I/O pins were serial, but as I recall software was needed to do conversion to and from serial.

I’m not sure how it fit in back then. Obviously many leaped to the Z80, but I remember some people using the Z80 for its hardware advantages while avoiding its extra instructions, to stay software-compatible.

But as 16-bit boards arrived, it wasn’t uncommon to see dual 8085/8088 boards. I was never sure whether the 8085 made those boards simpler to design, or whether the designers decided few people used the Z80’s extra instructions, so why bother?

The Model 100 used a CMOS version of the 8085, which may be why it was chosen. There weren’t a lot of CMOS CPUs at the time, and if you wanted 8080 compatibility, you weren’t going to build the laptop with an 1802, an Intersil 6100, or a 65C02 (I can’t remember if that was available when the Model 100 was being designed). But the computer would have failed without a CMOS CPU.

The 8085 has its own improved instruction set that Intel then decided not to document at the time. It’s got instructions to set DE = SP+imm8 or HL+imm8, to load HL from (DE) and save HL into (DE), plus some other added maths/shifts and a fix for the DEC BC/Jcc weakness (DCX sets no flags, so a 16-bit loop counter needs extra instructions before the conditional jump) that the Z80 never fixed.
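To make the high-level-language angle concrete, here is a small Python model of four of those undocumented instructions as they are usually described: LDHI d8 (28h, DE = HL + d8), LDSI d8 (38h, DE = SP + d8), LHLX (EDh, HL = word at (DE)) and SHLX (D9h, word at (DE) = HL). The opcodes, the unsigned treatment of d8, and leaving flags out are assumptions based on the commonly circulated third-party documentation, not on Intel’s data sheets.

```python
class Undoc8085:
    """Tiny register/memory model for four undocumented 8085 instructions.
    Flags are not modelled."""

    def __init__(self) -> None:
        self.hl = 0
        self.de = 0
        self.sp = 0
        self.mem = bytearray(0x10000)  # full 64 KiB address space

    def ldhi(self, d8: int) -> None:
        """28h: DE <- HL + d8 (d8 treated as unsigned)."""
        self.de = (self.hl + (d8 & 0xFF)) & 0xFFFF

    def ldsi(self, d8: int) -> None:
        """38h: DE <- SP + d8 (d8 treated as unsigned)."""
        self.de = (self.sp + (d8 & 0xFF)) & 0xFFFF

    def lhlx(self) -> None:
        """EDh: L <- (DE), H <- (DE + 1), little-endian like the rest of the 8085."""
        lo = self.mem[self.de]
        hi = self.mem[(self.de + 1) & 0xFFFF]
        self.hl = (hi << 8) | lo

    def shlx(self) -> None:
        """D9h: (DE) <- L, (DE + 1) <- H."""
        self.mem[self.de] = self.hl & 0xFF
        self.mem[(self.de + 1) & 0xFFFF] = (self.hl >> 8) & 0xFF


if __name__ == "__main__":
    cpu = Undoc8085()
    cpu.sp = 0xFF00
    cpu.mem[0xFF04:0xFF06] = bytes([0x34, 0x12])  # a 16-bit local at SP+4
    cpu.ldsi(4)   # DE points at the local
    cpu.lhlx()    # HL = 1234h
    cpu.hl += 1
    cpu.shlx()    # write it back
    print(hex(cpu.mem[0xFF04] | cpu.mem[0xFF05] << 8))  # 0x1235
```

In C terms, `LDSI 4` followed by `LHLX` loads a 16-bit local at offset 4 from the stack pointer in two instructions; on a stock 8080 the same load into HL takes something like `LXI H,4 / DAD SP / MOV A,M / INX H / MOV H,M / MOV L,A`.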

Those provide a really nice basis for high level language usage particularly C.

The undocumented instructions are possibly an artifact of the underlying hardware, or kept secret for military clients, or were buggy at the rated clock speed, or ??

On a side note, the M100, M102, and M200 have a voltage detector that shuts down the system when the battery voltage gets too low. It took me years to realize that NiCd and NiMH cells are 1.2 V, and that adding an extra cell will keep the system running longer. There is an online magazine that explains how to …
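The arithmetic behind that tip, as a quick Python sketch: rechargeable NiCd and NiMH cells sit around 1.2 V nominal against 1.5 V for alkalines, so a pack with the same cell count starts noticeably lower. The four-cell AA pack is an assumption about these machines, and the shutdown threshold isn’t given here, so the sketch only compares nominal pack voltages.

```python
# Nominal per-cell voltages; real cells start higher and sag under load.
NOMINAL_CELL_VOLTS = {"alkaline": 1.5, "NiCd": 1.2, "NiMH": 1.2}


def pack_voltage(chemistry: str, cells: int) -> float:
    """Nominal voltage of a series pack of identical cells."""
    return NOMINAL_CELL_VOLTS[chemistry] * cells


if __name__ == "__main__":
    # Assumed four-cell pack, then the same with one extra rechargeable cell.
    for chemistry, cells in [("alkaline", 4), ("NiMH", 4), ("NiMH", 5)]:
        print(f"{cells} x {chemistry}: {pack_voltage(chemistry, cells):.1f} V")
```

Four rechargeables give about 4.8 V against 6.0 V for four alkalines, while five rechargeables bring the nominal figure back to 6.0 V.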

How do you know that?

Influenced by experience with mainframes, hobbyists set out to find “undocumented opcodes”. But these generally existed because of incomplete decoding, and many had little real value.

And nobody could count on them to be there on another IC, or the same CPU from another manufacturer.

So they were generally just novelty.

Nice to see another 8085 hacker!

Nitpick, the 8085 does not have a built-in UART, it has what amounts to hardware-assisted bit-bang serial.
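In practice, “hardware-assisted bit-bang” means the SIM instruction copies accumulator bit 7 onto the SOD pin whenever bit 6 (SOE) is set, and software supplies the framing and timing. The Python sketch below computes the accumulator values a transmit loop would feed to SIM for one 8N1 frame, plus the delay per bit in T-states. The 3 MHz CPU clock and 9600 baud are example figures, not taken from any particular board.

```python
from typing import List

SOD_DATA = 0x80    # A7: level to drive on the SOD pin
SOD_ENABLE = 0x40  # A6 (SOE): SOD is only updated when this bit is set
# A3 (MSE) stays 0, so these SIM values leave the interrupt masks alone.


def sim_values_for_byte(byte: int) -> List[int]:
    """Accumulator values for SIM, one per bit period, for an 8N1 frame:
    start bit (0), eight data bits LSB first, stop bit (1)."""
    bits = [0] + [(byte >> i) & 1 for i in range(8)] + [1]
    return [(SOD_DATA if bit else 0) | SOD_ENABLE for bit in bits]


def t_states_per_bit(cpu_clock_hz: float, baud: int) -> float:
    """Clock periods the delay loop must burn between SIM writes."""
    return cpu_clock_hz / baud


if __name__ == "__main__":
    print([hex(v) for v in sim_values_for_byte(ord("U"))])
    print(t_states_per_bit(3_000_000, 9600))  # 312.5
```

A real delay loop can only approximate that figure in steps of its own iteration time, so the error accumulated over the ten bits should stay well under half a bit period.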

We have an RC2014 8085/MMU board.

https://hackaday.io/project/167859-80c85-and-mmu-for-rc2014-bp80

and an earlier board

https://github.com/ancientcomputing/rc2014/tree/master/eagle/8085_board

The success and longevity of the 8085 wasn’t because it was a better CPU than the Z80, but because it was better at industrial control. Intel developed the Multibus architecture around the 8080 CPU, and the 8085 (with its serial interface, hardware interrupts, and powerful support chip family) was bred to become the industrial control successor to the 8080. It is not apparent to me that Intel even saw the personal computer as viable compared to the lucrative industrial market. Multibus offered an open architecture (novel in an age of proprietary buses), multiple processors, bus master arbitration, redundancy, and (at the time) seemingly endless multi-vendor expansion. It was the robust Multibus design that let the 8085 slide into that trusted platform and dominate the industrial control market for decades. Of all the manufacturers that adopted Multibus, few implemented Z80-based cards. In addition to Multibus, Pro-Log came out with their 8085-based STD bus, which also became a major industrial control player at the lower, less demanding end of the market. Remember, software tools were very limited compared to today, and code (much of it in assembly) had to last for several hardware generations and across multiple product platforms. Switching from an 8080/8085 platform to a Z80 would be unthinkable, even harder than switching between 8052 and ARM platforms today, because we were more intimately tied to the hardware back in the bare-metal days. Many a time I specified an 8085 system just because we had an existing code base for that processor (it was relatively easy to switch between Multibus and STD bus if using the same CPU). As amazing as it seems, there are still 8085-based industrial control systems in use today, and I still get tapped now and again to modify code, write diagnostic software, or otherwise keep 40-year-old 8085 systems going.
There is also a steady stream of 8085-based Multibus hardware coming out of service, and a company in Oregon (maybe others) that actively repairs Multibus. So the 8085 may have missed the personal computer revolution, but it was very busy nonetheless.

“with no UART needed since the 8085 has one built-in”

Serial I/O has to be bit-banged on the 8085, which has a single input pin (SID) and a single output pin (SOD) but no UART.
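Receiving works the same way in reverse: RIM copies the level on SID into accumulator bit 7, and software waits for the falling edge of the start bit, delays half a bit, then samples once per bit period. Below is a minimal Python sketch of the decoding step, assuming the caller has already collected one RIM result per bit starting at the middle of the start bit; the function name is illustrative only.

```python
from typing import Sequence

RIM_SID = 0x80  # after RIM, A7 holds the SID pin level; A6-A0 report interrupt state


def decode_8n1(rim_samples: Sequence[int]) -> int:
    """Rebuild one byte from ten RIM results taken at mid-bit:
    start bit, eight data bits LSB first, stop bit."""
    if len(rim_samples) != 10:
        raise ValueError("an 8N1 frame is 10 bit periods long")
    bits = [1 if sample & RIM_SID else 0 for sample in rim_samples]
    if bits[0] != 0:
        raise ValueError("no start bit (SID should be low)")
    if bits[9] != 1:
        raise ValueError("framing error: stop bit is not high")
    value = 0
    for position, bit in enumerate(bits[1:9]):
        value |= bit << position
    return value


if __name__ == "__main__":
    # 'A' (0x41) as SID levels; low bits are set to show interrupt status is ignored.
    frame = [0x0F, 0x8F, 0x0F, 0x0F, 0x0F, 0x0F, 0x0F, 0x8F, 0x0F, 0x8F]
    print(chr(decode_8n1(frame)))  # A
```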

If it’s of interest to anyone, I’m currently working on a compiler for the 8080. The language is strongly typed and semantically Ada-like, with structured types, arrays, pointers, nested subroutines, and assorted good stuff, but optimised for 8-bit machines. The intention is that it’ll be self-hosted, and I’m currently working on the phase #2 compiler written in itself, but right now the bootstrap compiler (written in C) works mostly fine; I’m still tinkering with some of the language semantics. It currently targets the 8080, 386, thumb2 and terrible C, and it’s table-based so adding new backends should be (relatively) easy; the entire 8080 backend is 1300 loc. The code quality is… adequate. See https://github.com/davidgiven/cowgol.

My recollection is that the 8085 divided the clock by 6, and with some instructions taking more than one 6-clock cycle, I figured it to be a 0.6 MIPS CPU. (But that’s asking wet RAM to be accurate from 1982 to now.) I have no recollection of any UART, perhaps because the chip was embedded in a 2.048 Mb/s, 30-channel PCM MUX (E1), used in the newfangled plesiochronous digital telephone network which began to replace analogue trunking back then.
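For anyone wondering where the E1 figures come from: a frame repeats every 125 µs (8000 times a second, matching the 8 kHz voice sampling rate) and carries 32 eight-bit timeslots, with timeslot 0 used for framing and timeslot 16 typically for signalling. A quick Python check of the arithmetic:

```python
FRAMES_PER_SECOND = 8_000   # one frame per 125 µs voice sample period
TIMESLOTS_PER_FRAME = 32
BITS_PER_TIMESLOT = 8       # one 8-bit PCM sample per channel per frame
OVERHEAD_TIMESLOTS = 2      # TS0 framing, TS16 signalling (typical usage)

line_rate = FRAMES_PER_SECOND * TIMESLOTS_PER_FRAME * BITS_PER_TIMESLOT
channel_rate = FRAMES_PER_SECOND * BITS_PER_TIMESLOT
voice_channels = TIMESLOTS_PER_FRAME - OVERHEAD_TIMESLOTS

print(f"line rate: {line_rate:,} b/s")        # 2,048,000 b/s
print(f"per channel: {channel_rate:,} b/s")   # 64,000 b/s
print(f"voice channels: {voice_channels}")    # 30
```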

Hmmm, software tools: I remember CREDIT, a line editor with everything in capitals, and an assembler, hosted on the Intel Blue Box. I used assembler macros to implement a higher level language for creating multi-threaded state machines, and the 8080-based Blue Box cranked the right hand 8-inch floppy for a couple of seconds to assemble each macro invocation – the assembler ran off the left hand floppy. As each macro was processed, the drive groaned out “Niiiiiiiiiiiiiiiiiiiiik, Nik, Nik, Nik.”, during the disk seeks. They were simpler days – management stayed well away, and were simply grateful that they had a bod who could grok that newfangled stuff.

Nice to have another 8080-based system out there! Of course this is an 8085, but it and the Z80 are both just derivatives of the 8080. How many modern retro 8080 systems can you name???

RC2014

VCF SBC 8085

Lee Hart’s Z80 Membership Card and his ALTAID 8080

David Hunter’s ALTAID 8085

and of course, my S-100 JAIR 8080 board

Best,

Josh Bensadon

Not sure if you would count reproduction hardware, but Charles Baetsen’s MIL MOD8 8008/8080 system is a hoot to play with.

Don’t forget there’s still a radiation-hardened 80C85 exploring Mars.