The Raspberry Pi camera has become a de facto standard for many maker projects, making things like object recognition and remote streaming a breeze. However, the Sony IMX219 camera module used is capable of much more, and [Gaurav Singh] set out to unlock its capabilities.

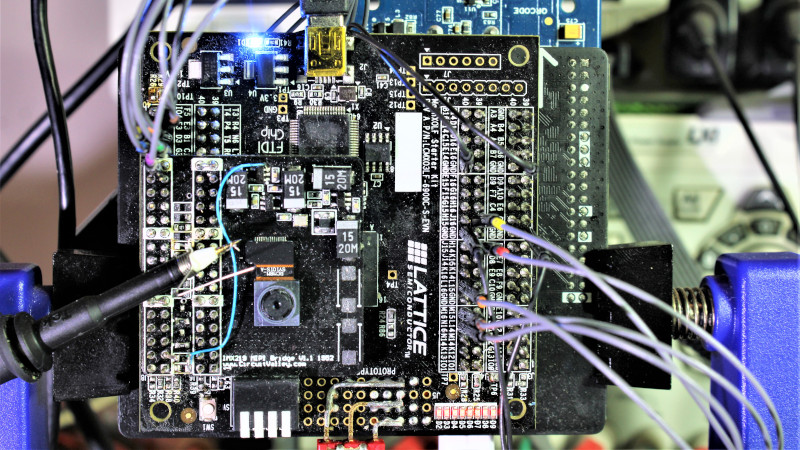

After investigating the IMX219 datasheet, it became clear that it could work at higher bandwidths when configured to use all four of its MIPI CSI lanes. In the Raspberry Pi module, only two MIPI lanes are used, limiting the camera’s framerate. Instead, [Gaurav] developed a custom IMX219 breakout module allowing the camera to be connected to an FPGA using all four lanes for greater throughput.

With this in place, it became possible to use the camera at framerates up to 1,000 fps. This was achieved by wiring the IMX219 directly to an FPGA and then to a USB 3.0 interface to a host computer, rather than using the original Raspberry Pi interface. While 1,000 fps is only available at a low resolution of 640 x 80, it’s also possible to shoot at 60 fps at 1080p, and even 15 fps at 3280 x 2464.

While it’s probably outside the realm of performance required for the average user, [Gaurav] ably demonstrates that there’s often capability left on the table if you really need it. Resources are available on GitHub for those eager to delve deeper. We’ve seen others use advanced techniques to up the frame rate of the IMX219, too. Video after the break.

(Grabs DSLR camera, scrolls through settings) 30s, 20s, 10s, 5s, 3s, 1s, 5fps, 10fps, 15fps, 30fps, 60, 100, 500, 1000, 2000, 3000, 4000, 5000. Neat project though, perhaps I could do something similar with one of my older cameras.

Please see my other comments: no additional hardware beyond the Raspberry v2 camera itself is needed for capturing 1000fps video. And then show me a DSLR camera that can capture 1000fps for that price.

Exposure speed != frame rate

Exactly. While 1007fps allows for slightly less than 1ms shutter time, sometimes a 12µs shutter time is needed to capture fast-moving objects sharply, see:

https://hackaday.com/2020/03/11/getting-1000-fps-out-of-the-raspberry-pi-camera/#comment-6226967

And even that shows a huge rolling shutter effect, even though only 75 rows are captured.

That’s the idea. Assuming that link bandwidth is constant, you can get higher fps by using just a fraction of sensor data.

Neg. 640×80 is correct. The list of tested resolutions and frame-rate pairs from the github:

3280×2464 15FPS

1920×1080 60FPS

1920×1080 30FPS

1280×720 120FPS

1280×720 60FPS

1280×720 30FPS

640×480 200FPS

640×480 30FPS

640×128 682FPS

640×80 1000FPS
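To compare the modes in the list above on equal footing, here is a minimal Python sketch that multiplies width, height and frame rate to get the active pixel rate of each mode. The function names are made up for illustration, and the figure ignores blanking and protocol overhead, so it is only a rough proxy for link usage.

```python
# Mode list copied from the comment above: (width, height, fps).
TESTED_MODES = [
    (3280, 2464, 15),
    (1920, 1080, 60),
    (1920, 1080, 30),
    (1280, 720, 120),
    (1280, 720, 60),
    (1280, 720, 30),
    (640, 480, 200),
    (640, 480, 30),
    (640, 128, 682),
    (640, 80, 1000),
]


def pixel_rate(width, height, fps):
    """Active pixels per second; blanking and protocol overhead are ignored."""
    return width * height * fps


def rates_in_megapixels(modes=TESTED_MODES):
    """Return (width, height, fps, Mpixel/s) for every mode in the list."""
    return [(w, h, fps, pixel_rate(w, h, fps) / 1e6) for w, h, fps in modes]


if __name__ == "__main__":
    for w, h, fps, mpix in rates_in_megapixels():
        print(f"{w}x{h} @ {fps} fps -> {mpix:.1f} Mpixel/s")
```

By this measure the full-resolution and 1080p60 modes both move roughly 120 Mpixel/s, while 640×80 at 1000 fps moves about 51 Mpixel/s, which hints that the small, fast modes are limited by something other than raw pixel throughput.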

640×75@1007fps can be achieved without any additional hardware.

The formula for v2 framerate that I determined is “76034/height+67”, see the diagram

(… 640×240@383fps … 640×176@508fps … 640×128@667fps … 640×75@1007fps):

https://www.raspberrypi.org/forums/viewtopic.php?f=43&t=212518&p=1310445#p1320034

Well done. Nice project and implementation.

You can capture 640×75@1007fps WITHOUT any additional hardware, just with v2 camera connected to CSI-2 interface of Raspberry Pi, and many other framerates for bigger frames, eg. 640×224@410fps:

https://www.raspberrypi.org/forums/viewtopic.php?f=43&t=212518&p=1310445#p1320034

You can find many examples captured in my Raspberry camera gallery:

https://github.com/Hermann-SW/Raspberry_camera_gallery#raspberry-camera-gallery

For people thinking 640×75 is not useful, this was the first 1007fps video I took of a closing classical mousetrap bar. Closing takes 1/100th of a second in total, but capturing at 640×75@1007fps you will see 11 frames, the first being the last frame of the unreleased mousetrap bar, the last being closed (played at 1fps):

https://stamm-wilbrandt.de/en/forum/mt.1000fps.75.gif

Raspberry Pi hardware will be really limited in how long a recording can be performed; with this FPGA and USB3 setup, you can continuously record @1000FPS for hours and hours.

That is correct.

With 30s.

That is more than enough for eg. capturing closing mouse traps or other fast moving stuff.

I agree that your solution is good to have when needing to capture minutes or hours @1000fps.

What is the price one has to pay to build your solution?

Can your solution be adapted to capture v1 camera ov5647 MIPI traffic as well?

You can only get 640×64@665fps from that.

But you can make v1 camera do global external shutter capturing (just by setting some registers):

https://github.com/Hermann-SW/Raspberry_v1_camera_global_external_shutter#introduction

That would avoid the extreme rolling shutter effect that v2 camera always has, eg in this video (the closing bar should be vertical):

https://raw.githubusercontent.com/Hermann-SW/Raspberry_camera_gallery/master/res/mt.1007fps.10000lm.12us.anim.gif

Yeah but Raspberry Pis are cheap, easy, and all over the place. That’s likely to be useful to more people.

The solution suggested here is also relatively cheap when compared to its FPGA implementation counterparts. The Lattice MachXO3 is really quite a cheap generic FPGA. Compared to what FPGA vendors offer for imaging solutions, it is an order of magnitude less.

Looking at the work by Hermann, it is exceptional to be able to achieve such performance on the Pi itself. But the limitations of the Raspberry Pi itself limit the applications as well. Comparing this solution to a Raspberry Pi would not be the right direction.

It is a totally different class of solution.

It opens the door to using many other high-performance image sensors in open source projects.

How will it be limited? 640×75@1007fps has a lower bitrate than 1920×1080@30.

Hi, if you can tell me it would be very appreciated.

No matter what, the maximal framerate was always 1007fps.

It seems that I miss some register setting in that case.

I determined this formula for v2 framerate: 76034/height+67

This is quite good for height=96: 76034/96+67=859, while the real framerate is 841fps.

I really would like to capture 640×64@1255fps according to that formula (or better, 640x128_s@1255fps, capturing only every other line and then doubling each line in post processing):

https://www.raspberrypi.org/forums/viewtopic.php?f=43&t=212518&p=1310445#p1320034
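The empirical formula quoted above is easy to tabulate. The sketch below is only an illustration of that commenter's fit, with hypothetical function names; the 1007 fps ceiling is the limit the commenter reports running into, not a documented sensor constant.

```python
OBSERVED_CAP_FPS = 1007  # ceiling reported in the comment, not a datasheet value


def predicted_fps(height):
    """Commenter's empirical fit for the v2 camera: 76034/height + 67."""
    return 76034 / height + 67


def expected_fps(height):
    """Fit clipped to the ceiling the commenter could not get past."""
    return min(predicted_fps(height), OBSERVED_CAP_FPS)


if __name__ == "__main__":
    for height in (240, 176, 128, 96, 75, 64):
        print(f"height={height}: fit {predicted_fps(height):.0f} fps, "
              f"capped {expected_fps(height):.0f} fps")
```

For height 96 the fit gives 859 fps and for height 64 it gives 1255 fps, matching the figures quoted in the comment.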

One application that would make the FPGA solution presented here unnecessary, even for hour-long capturing, is real-time frame processing on a fast-moving robot. That way the frames do not need to be stored. I never tried it, but sending frames via the fast WLAN of a Pi3B+/Pi4B to another computer, similar to this FPGA approach, would allow the same arbitrarily long capturing of 640×75@1007fps:

$ echo "640*75*10/8*1007/1024^2" | bc -q

57

$

Pi4B Wifi can do 58Mbps@2.4GHz and 114Mbps@5GHz Wifi.
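The `bc` one-liner above can be reproduced in Python with the units spelled out. This is a sketch assuming 10 bits per pixel, as in the command; the function names are illustrative only. Note that the command's result of 57 is in MiB per second (bytes), so if the Wi-Fi figures are megabits per second, the comparison needs a factor of eight.

```python
def bytes_per_frame(width, height, bits_per_pixel=10):
    """Packed frame size in bytes, assuming 10-bit raw pixels as in the bc command."""
    return width * height * bits_per_pixel // 8


def stream_rate(width, height, fps, bits_per_pixel=10):
    """Return the raw stream rate in both MiB/s and Mbit/s."""
    bytes_per_second = bytes_per_frame(width, height, bits_per_pixel) * fps
    return {
        "MiB_per_s": bytes_per_second / 1024**2,
        "Mbit_per_s": bytes_per_second * 8 / 1e6,
    }


if __name__ == "__main__":
    rate = stream_rate(640, 75, 1007)
    print(f"{rate['MiB_per_s']:.1f} MiB/s = {rate['Mbit_per_s']:.0f} Mbit/s")
```

Under these assumptions the 640×75@1007fps stream is about 57.6 MiB/s, which is roughly 483 Mbit/s.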

I did see frame rates up to ~1500 FPS at around 640×80, with the maximum MIPI output frequency and the ADC PLL set as high as possible. Right now, because of the pipelined, memoryless output reformatter module in the FPGA, this solution is limited by the GPIF frequency of the FX3. If the FX3 could go higher than 32-bit at 100 MHz, or if I implemented a FIFO in the output reformatter, it could certainly get up to at least 1500 FPS.

640 x 80 is probably a perfectly cromulent aspect ratio for the best use of a 1000fps sample rate, which is motion detection in frame for your diy robot that can catch things thrown at it :)

With the no-additional-hardware, raspiraw-based solution mentioned in my other postings, you can get even double the FoV of 640×75 captured at 1007fps; the mode is called 640x150_s. Only every other line gets captured (so only 75 lines), out of 160 lines. In post processing each line is doubled, and you get 640×150 frames at 1007fps. This is a 640x150_s@1007fps video of a closing classical mouse trap bar. Because of the high speed of the bar, the camera shutter time is 12µs(!), and a 5000lm bright focused light was needed to compensate for that. But in the end the fast-moving bar (with extreme rolling shutter effect) can be seen very sharply:

https://raw.githubusercontent.com/Hermann-SW/Raspberry_camera_gallery/master/res/mt.1007fps.10000lm.12us.anim.gif
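The line-doubling step described for the 640x150_s mode can be sketched in a few lines of Python. This is not the commenter's actual post-processing code, just a minimal illustration in which a frame is assumed to be a list of rows of pixel values.

```python
def double_lines(frame):
    """Repeat every captured row once, turning N rows into 2*N rows."""
    doubled = []
    for row in frame:
        doubled.append(list(row))  # the captured line
        doubled.append(list(row))  # stand-in for the skipped line below it
    return doubled


if __name__ == "__main__":
    # A dummy 640x75 frame filled with zeros stands in for real sensor data.
    captured = [[0] * 640 for _ in range(75)]
    restored = double_lines(captured)
    print(len(restored), "rows of", len(restored[0]), "pixels")
```

Doubling keeps the aspect ratio right without interpolation; a smoother result would need some averaging between neighbouring captured lines, which is outside what the comment describes.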

Likely it is 640×80: less overhead from switching fewer scanlines and much less data to transfer. 640×80 gives you a wide aspect ratio. It would probably be useful for recording/analyzing a high-speed projectile, or for a wide-angle view ahead of your robot, etc.

I see, very neat.

It’s probably not massively useful, and might be the same aspect ratio, just low resolution and tall pixels. Depends which lines it sends. And aspect ratio could be altered with a lens, theoretically.

The 640 is probably fixed because it’s the smallest block of pixels the camera chip can read at once. Otherwise 320×160 would’ve been much more sensible.

A quick lookup for the Chronos.

It does 1080p @ 1000fps

It can also do 1920×8 @ 100000 fps, which is about the same bandwidth.

Getting 1000fps out of an EUR20 (or so I believe) camera is quite impressive.

And of course it’s not on par with a USD2500 camera such as the Chronos.

I would be more likely to get me some of these fun cameras than a Chronos or equivalent.

Right, so you’re saying a camera that costs 125x more, can do better. Useful.

you can also do ~$1K 1,136×384 @ 1000fps with Sony RX10 II/III

Previous high speed using pi camera…

https://hackaday.com/2019/08/10/660-fps-raspberry-pi-video-captures-the-moment-in-extreme-slo-mo/

Why do we need 1000fps when rpi can not handle opencv even for 60fps?

This project is targeted at a totally different class of solution.

The FPGA solution is different from the Raspberry Pi. The goal of the project was first to be able to use a MIPI CSI-2 camera with an FPGA, and then later to implement an intelligent solution on the FPGA itself to do something useful with it.

As others have pointed out, it has some application in robotics vision at high speed.

But it would be awesome for spectroscopy.

640 x 80? You wanted to say: 640 x 480

And why on earth would you want 1000 FPS when the eye cannot tell the difference anymore over 30? It’s a nice show-off in video games (look at that, man, Crysis is running at 100 FPS, whoa) where it would look the same if it were running at 50, so WHY?

It is indeed 640×80 @1000FPS. Applications of such a camera would be recording slow-motion video, fast scanners, and robotic vision.

What the hell are you talking about? The eye can’t see past 24 fps, that’s why cinema and PAL both use ~this frequency. You got brainwashed by the National Television System Committee and their wasteful 30 frame refresh rate conspiracy. Everyone knows those extra 6 frames are allocated for subliminal transmissions.

Most excellent article! Do you think it would be possible to use 2 (or more) cameras on the same FPGA?

If some way the FPGA could trigger them in sequence you could possibly double the (virtual) framerate

Nice idea.

Gaurav, is double/triple/quadruple of 1000fps possible?

Or is there some limitation in the bandwidth of the USB3 bus?

If so, could this be achieved using multiple USB connections?

The FX3 can do 32-bit at 100 MHz, which translates to 400 MBytes per second; a little overclocking of the FX3 GPIF, up to around 112 MHz, brings that to 448 MBytes per second. In theory this bandwidth translates to ~4000 FPS at 640×80. The FX3 is quite a nice chip; I have found it delivers more than it claims most of the time. The USB controller on the FX3 can do everything USB 3.0 allows, but the 32-bit parallel port (GPIF) limits it, as mentioned above, to 32-bit @ 100 MHz.
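The bus arithmetic in this comment can be checked with a short Python sketch. The two bytes per pixel is an assumption (a packed YUV 4:2:2 stream, in line with the YUV output mentioned elsewhere in this thread), and the function names are hypothetical.

```python
def bus_bytes_per_second(bus_width_bits, clock_hz):
    """Peak throughput of a parallel bus that moves one word per clock."""
    return bus_width_bits // 8 * clock_hz


def max_fps(bus_bytes_per_s, width, height, bytes_per_pixel=2):
    """Upper bound on frame rate; assumes 2 bytes/pixel and no bus idle time."""
    return bus_bytes_per_s / (width * height * bytes_per_pixel)


if __name__ == "__main__":
    for clock_hz in (100_000_000, 112_000_000):
        rate = bus_bytes_per_second(32, clock_hz)
        print(f"{clock_hz / 1e6:.0f} MHz: {rate / 1e6:.0f} MBytes/s, "
              f"up to {max_fps(rate, 640, 80):.0f} fps at 640x80")
```

Under these assumptions the 100 MHz bus tops out at about 3900 fps and the 112 MHz bus at about 4375 fps for 640×80, which is in line with the "~4000 FPS" estimate.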

Cypress offers another FX3-like controller, the CX3, which is specifically for MIPI CSI cameras. The CX3 can achieve full USB 3.0 bandwidth, but one must implement a custom application on the PC side to receive the RAW video data, as the CX3 does not have any logic for debayering or for the RGB-to-YUV conversion that UVC needs. (UVC supports neither RAW nor RGB.)

The idea with two cameras may be possible. The built-in PLL on each camera may cause issues and let frames drift, but in theory the FPGA can keep track of frames and correct if necessary. A somewhat larger FPGA would be needed; the Lattice MachXO3 is a relatively small FPGA in terms of crucial high-speed resources. A multi-camera design will fit onto the MachXO3 LUT/gate-wise, but its limited clock resources will cause issues doing anything fast.

A multi-USB design is certainly possible but needs a lot of custom work on both the hardware and the PC side. Generally, when designers surpass what USB can offer, they go to PCIe.

The lowest-hanging fruit for optimization is the output reformatter module in the FPGA. Right now the FPGA YUV output is running at the full 100 MHz (which is driven by the 200 MHz MIPI clock / 2), but the output is fragmented and does not take full advantage of the 100 MHz FX3 bus. If I added a FIFO to the reformatter, we could decouple the MIPI clock from the FPGA output, which would make it possible to run the MIPI clock faster than 200 MHz, and that ultimately makes an even faster frame rate possible. I have seen frame rates at 640×80 of up to 1500 FPS when I ran the MIPI clock faster, from 270 to 320 MHz. The hardware in its current state may hinder reaching high MIPI frequencies; a custom FPGA board with correctly terminated CSI lanes may be needed.

The second optimization would again be in the same reformatter, but is a little more complicated: buffer a lot more data and utilize 100% of the 32-bit FX3 bus. In theory one could use some other USB controller and get all the bandwidth that USB 3.0 allows.

“…there’s often capability left on the table if you really need it.”

Literally the exact reason hackers hack. You have an old cell phone you’re going to toss? Well, with a bit of jiggery probery, now I have a baseband radio, and some small TFTs, and a handful of sensors, and button pads, and a small power pack, etc. I have a cheap drone that has a wealth of sensors on it that I’m currently turning into a room environmental sensor. Every no-name 8-pin MCU tossed in the trash is another wasted opportunity. Want to stem the tide of e-waste? Learn to break components out of their prison of applied purpose, and make them do something, anything, new!