The OpenStreetMap project is an excellent example of how powerful crowdsourced data can be, but that’s not to say the system is perfect. Invalid data, added intentionally or otherwise, can sometimes slip through the cracks and lead to some interesting problems. That’s a fact that developer Asobo Studio is becoming keenly aware of as players explore its recently released Microsoft Flight Simulator 2020.

As with a wiki, users can update OpenStreetMap, and about a year ago user nathanwright120 marked a two-story building near Melbourne, Australia as having an incredible 212 floors (we think it’s this commit). The rest of his edits seem legitimate enough, so it’s a safe bet that it was simply a typo made in haste. The sort of thing that could happen to anyone. Not long after, thanks to the beauty of open source, another user picked up on the error and got it fixed up.

But not before some script written by Asobo Studio went through, sucked up the OpenStreetMap data for Australia, and implemented it into their virtual recreation of the planet. The result is that the hotly anticipated flight simulator now features a majestic structure in the Melbourne skyline that rises far above…everything.

The whole thing is great fun, and honestly, players probably wouldn’t even mind if it got left in as an Easter egg. It’s certainly providing them with some free publicity; in the video below you can see a player by the name of Conor O’Kane land his aircraft on the dizzying edifice, a feat which has earned him nearly 100,000 views in just a few days.

But it does have us thinking about filtering crowdsourced data. If you ask random people to, say, identify flying saucers in NASA footage, how do you filter that? You probably don’t want to take one person’s input as authoritative. What about 10 people? Or a hundred?

The Army Marches on Data

When you think about geospatial data, what heuristics could you use to at least identify areas to look at closer? In this case, the fact that the tallest building in the world only has 163 floors would have been a good clue. Even if the building had 100 floors, the fact that nothing else near it has even a quarter of that number would be another clue. In either case, the Great Tower of Melbourne could have been avoided with a single line of code validating the height data pulled from OpenStreetMap.
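
To make those two clues concrete, here is a minimal sketch of what such a check might look like. It is not anything Asobo or OpenStreetMap actually runs; the function name, the 20-floor cutoff for bothering with the neighbor comparison, and the way neighbors are passed in are all assumptions made for illustration.

```python
# Assumption: 163 floors, the figure cited above for the world's tallest building.
MAX_PLAUSIBLE_FLOORS = 163
# Assumption: only compare against neighbors once a building is reasonably tall,
# so a 5-story block among bungalows is not flagged.
MIN_FLOORS_FOR_NEIGHBOR_CHECK = 20
# "Nothing else near it has even a quarter of that number."
NEIGHBOR_FRACTION = 0.25


def floors_look_suspicious(floors, neighbor_floors=()):
    """Return True if a floor count deserves a second look by a human."""
    # Clue 1: taller than anything ever built (or not a positive count at all).
    if floors < 1 or floors > MAX_PLAUSIBLE_FLOORS:
        return True
    # Clue 2: a tall building whose tallest neighbor is under a quarter of its height.
    if floors >= MIN_FLOORS_FOR_NEIGHBOR_CHECK and neighbor_floors:
        if max(neighbor_floors) < floors * NEIGHBOR_FRACTION:
            return True
    return False


print(floors_look_suspicious(212, [2, 1, 2]))  # True: above the global ceiling
print(floors_look_suspicious(100, [2, 3, 1]))  # True: towers over its neighbors
print(floors_look_suspicious(2, [1, 2, 2]))    # False
```

A check like this only flags candidates for review. A legitimately isolated skyscraper would trip the neighbor rule too, so it makes more sense as a filter for human attention than as an automatic reject.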

For terrain, rapid changes in elevation might be another data indicator. That would have prevented the wall of ice that guards us from the White Walkers. We wondered if anyone had given this any thought before. Turns out, the US Army has. They even mention OpenStreetMap and many other sources, some of which we didn’t know about.
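
As a rough illustration of the elevation idea, the sketch below walks a one-dimensional elevation profile and flags any step between adjacent samples that is steeper than a chosen limit. The function name, the evenly spaced samples, and the default slope limit are assumptions; real cliffs exist, so hits are places to look closer, not proof of bad data.

```python
def steep_steps(elevations_m, spacing_m, max_slope=2.0):
    """Return each index i where the step from sample i to i+1 exceeds max_slope.

    elevations_m: elevations in meters, sampled at a constant horizontal spacing.
    spacing_m:    horizontal distance between samples, in meters.
    max_slope:    rise over run; 2.0 is an arbitrary placeholder (about 63 degrees).
    """
    return [
        i
        for i in range(len(elevations_m) - 1)
        if abs(elevations_m[i + 1] - elevations_m[i]) / spacing_m > max_slope
    ]


# A gentle profile with one sudden 389 m wall between samples 2 and 3.
profile = [10.0, 12.0, 11.0, 400.0, 402.0]
print(steep_steps(profile, spacing_m=30.0))  # [2]
```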

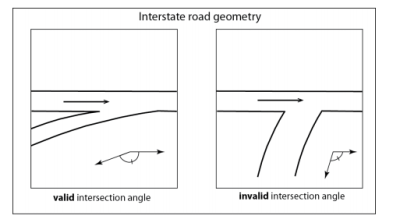

Section 4 of the aptly named Crowdsourced Geospatial Data talks about how to vet crowdsourced data and address errors due to sensor variability, language, and other technical factors. However, errors from logical inconsistency should be moderately simple to filter out, and the paper identifies efforts to automate that for geospatial data. For example, the angle between two intersecting roads is typically within a relatively narrow range of angles.
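
The road-angle idea can be sketched in a few lines. This is not the method from the paper, just one plausible reading of it: compute the angle between two road segments that share a node and flag the junction if the angle is unusually sharp. The function names and the 30 degree threshold are assumptions, and the coordinates are assumed to be already projected onto a flat plane, not raw latitude and longitude.

```python
import math


def intersection_angle_deg(node, a, b):
    """Angle in degrees (0 to 180) between segments node->a and node->b."""
    ax, ay = a[0] - node[0], a[1] - node[1]
    bx, by = b[0] - node[0], b[1] - node[1]
    cross = ax * by - ay * bx  # proportional to the sine of the angle
    dot = ax * bx + ay * by    # proportional to the cosine of the angle
    return math.degrees(math.atan2(abs(cross), dot))


def junction_looks_suspicious(node, a, b, min_deg=30.0):
    """Flag junctions where two roads meet at a very shallow angle."""
    return intersection_angle_deg(node, a, b) < min_deg


print(junction_looks_suspicious((0, 0), (1, 0), (0, 1)))    # False: a right angle
print(junction_looks_suspicious((0, 0), (10, 0), (10, 1)))  # True: under 6 degrees
```

The threshold would need tuning by road type and region, since a shallow merge is normal for a highway ramp and odd for a residential crossroads.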

According to the paper, several researchers have validated data and found high error rates in public information sources. For example, in the United Kingdom and Ireland, OpenStreetMap data with more than 15 edits had errors about 8% of the time. In France, about 5% of the crossroads had geometric inaccuracies.

Geospatial Graffiti

Of course, that assumes the errors are the result of honest mistakes. Protecting against malicious data entry is an entirely different problem, and one that’s potentially much harder to identify and fix.

This situation is also addressed in the Army’s report, but only briefly. It stands to reason that if the military has some particular tips and tricks they use to sniff out this sort of thing, they probably don’t want them to become public knowledge.

With crowdsourced data growing in popularity, it would be easy to imagine wanting to displace key targets slightly or even significantly. A bunker “known” to be at the center of a facility might survive if the data says that the facility is a few hundred meters to the right of its actual position. Disinformation has always been a powerful tool, and it’s only amplified in the era of Big Data.

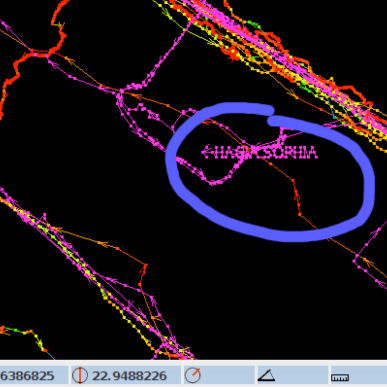

That said, some of it isn’t too hard to find. People actually use GPS tracks to spell out graffiti in OpenStreetMap, for example. So if you stumble upon any mile-wide letters written in the countryside, it’s probably safe to leave them out of your flight simulator.

Closer To Home

This doesn’t just apply to geospatial data, either. How often do you take data from a pressure or temperature sensor? Do you validate it? For high-reliability data, you might need multiple redundant sensors with some voting logic. That’s common in aircraft and spacecraft. You might have three sensors and take the average of the three if they read close together or reject one if it is way off compared to its counterparts.
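
Here is a minimal sketch of that three-sensor voting scheme, assuming a hypothetical `vote` function and a caller-supplied tolerance. Real flight systems are far more elaborate; this only shows the average-or-reject logic described above.

```python
def vote(readings, tolerance):
    """Combine three redundant sensor readings into one value.

    If all three agree with their nearest neighbor, return the average.
    If one is an outlier, return the average of the two that agree.
    If no two agree, raise ValueError so the caller can fail safe.
    """
    low, mid, high = sorted(readings)
    low_ok = (mid - low) <= tolerance
    high_ok = (high - mid) <= tolerance
    if low_ok and high_ok:
        return (low + mid + high) / 3
    if low_ok:
        return (low + mid) / 2   # highest reading rejected
    if high_ok:
        return (mid + high) / 2  # lowest reading rejected
    raise ValueError("no two sensors agree within tolerance")


print(vote([100.0, 101.0, 99.0], tolerance=2.0))     # 100.0
print(vote([100.0, 101.0, -4096.0], tolerance=5.0))  # 100.5
```

Sorting first means each reading is only compared with its nearest neighbor, which keeps the logic short. The cost is that the lowest and highest readings can differ by up to twice the tolerance and still be averaged together.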

I had a commercial drone once suddenly decide it was 4,096 feet below sea level due to a failed pressure sensor. The resulting rapid ascent to try to correct the altitude was both amazing and terrifying since it was a big drone. The firmware should have made some simple assumptions about data quality, such as realizing that it wasn’t likely to suddenly find itself hundreds of feet below seal level, or that the data wasn’t trending in the expected way as it tried to gain altitude. It certainly would have made my day easier, not to mention the pilot’s.
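
The kind of assumption I have in mind could be as simple as a rate-of-change limit. The sketch below is hypothetical and has nothing to do with that drone's actual firmware; the function name and the 50 ft/s default are made up for illustration. It rejects any altitude reading that would require the aircraft to have moved faster than it physically can since the last accepted reading.

```python
def altitude_is_plausible(previous_ft, current_ft, dt_s, max_rate_ft_s=50.0):
    """Return True if the change in altitude is physically achievable.

    previous_ft:   last accepted altitude, in feet.
    current_ft:    new reading to check, in feet.
    dt_s:          time between the two readings, in seconds.
    max_rate_ft_s: assumed maximum climb or descent rate (placeholder value).
    """
    return abs(current_ft - previous_ft) <= max_rate_ft_s * dt_s


print(altitude_is_plausible(200.0, 203.0, dt_s=0.1))    # True: 3 ft in 0.1 s
print(altitude_is_plausible(200.0, -4096.0, dt_s=0.1))  # False: sensor glitch
```

On a rejected reading, the firmware could hold the last good value, fall back to another altitude source, or trigger a controlled landing, which are all better options than a full-throttle climb.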

What’s your favorite data validation trick? How much do you trust crowdsourced data? Wikipedia is usually right over the long term, but there are certainly cases where bad data slips through until someone catches it.

Thanks [ptkwilliams] for the tip about Flight Simulator.

Actually this is unlikely to be a problem with the original map data, which does not contain the 3D building information.

It is all due to Microsoft’s AI model that attempts to reconstruct 3D geometry from the satellite photos and maps – and fails badly in many situations, putting bridges under the water, generating implausible buildings, etc.

Did you read?

The data was scraped from an open source project which includes heights of buildings. It’s not AI predicting the height of that building, it’s open sourced data reporting it as 212 stories. You can follow the link and see clear as day that the building was set to 212 stories tall in that commit for that source of data from that open source project.

I love to blame AI for nonsense but first I like to read and make sure it’s not me misunderstanding something.

You’re misunderstanding something alright.

AI didn’t cause this mistake. And the author DIDN’T say that it did. No idea where you got that from. The author points out that “ai” could prevent this mistake, but your comment is nonsensical.

“The author points out that “ai” could prevent this mistake”

This article does not say that, anywhere! Read carefully! Talk about nonsense!

Here is what it actually says:

“the Great Tower of Melbourne could have been avoided with a single line of code validating the height data pulled from OpenStreetMap.”

Since when is one line of code “AI”? Wow apparently I’ve been writing AI code for years without realizing it!

And what do you think AI code is? It’s just multiplications and comparisons…

Well, as terrible a thing as it is to have a few inaccurately sized buildings in a worldwide flight simulator, or a bridge or two underwater, or Buckingham Palace looking like a block of flats, that’s one of the great things about it being delivered from the cloud. It’s a simple fix by a single person: change the data and everybody automatically gets the update.

No redistributing millions of DVDs, no fuss.

It’s kind of incredible how seriously people are freaking out and discussing these very minor, quickly corrected issues online; they were bound to happen. Quite frankly I’m amazed the software isn’t a lot buggier and more crash prone, seeing some of the alpha / beta (?) recordings people have made.

I’m just blown away with the achievement they’ve done – in one fell swoop pretty much put X-Plane 11’s poor assed default gfx to shame and killed them.

Let’s just hope Microsoft / Asobo see the value in continued base product development, even MS should see it as a huge money spinner like Grand Theft Auto 5 lasted 5+ years. Just so long as they don’t just leave it to third party devs to pick up ALL the slack in the product, charging $$$. They do need to polish up the launch day tarnish.

I have these geodesic domes all over, every where I fly, in any aircraft. I wonder if I’m alone?

In fairness we have some pretty strange junctions all over Europe, because most of them have been built ‘following the land’, that intersection shown on the right totally exists and is just a slightly skewed T-junction ^^

I’d even say that there are *many* more skewed T-junctions than non-skewed ones and any roads and buildings making a grid are kinda unicorns and even then it’s 2-3 rows max. in very small area :D (At least in Czech and Slovak Republic.)

Plenty of skewed t junctions and cross roads where I live in Australia as well. It seems around here anything illogical and nonsensical is considered suitable for casting in bitumen and concrete.

Even T intersections where the “base” of the T has right of way as opposed to the more common “cross” of the T.

You can always tell the visitors when they automatically stop

Note they’re talking specifically about an INTERSTATE junction (ie, on-ramp or off-ramp). Yes that geometry can happen in “normal” junctions, but you’re unlikely to ever find something with such a low approach angle on a highway, though I wonder how an algorithm like that would handle some of the German Autobahn junctions with their ridiculously short ramps and tight curves.

So, does MS Flight Simulator have a DiscWorld option?

Yeah, but the altimeter is really *weird*, and you should see the ILS/RNAV charts…

Ace Combat 7 Space Elevator…

Wikipedia is completely and totally worthless on any subject with even a shred of bias or controversy.

The standard for “truth” is barely higher than “somebody once wrote this down somewhere”.

A perfect example is the wiki page showing how revolutionary and effective pot coolers are. Authors supporting and advocating similar projects blindly assume that the coolers work as well as one could possibly imagine. Wiki reports that as fact, then hackaday repeats the falsehood.

Maybe you can get back to us about the accuracy of random postings on hackaday, for example yours is chock full of bias and should probably be ignored or deleted.

I’m guessing you think the Wikipedia entry for transistors is total garbage because they talk a lot about how transistors are biased.

LOL!

you only write that to gain amplified power to your statement.

LOLOL!

might be a gpt-3 project. Mods: hopefully you have something for this kind of thing.

It’s too bad then Wikipedia is controlled by the vast numbers of Elites in the government establishment and no ordinary Joe can edit any page offering corrections backed up by cited evidence.

I joined Wikipedia and made edits before. You certainly don’t need to be in the government.

You might have missed sarcasm.

(at least I hope it was)

My brother used to work fact checking and correcting Wikipedia. One of his discoveries affected a major legal case. He is neither elite nor a Government employee.

“wasn’t likely to suddenly find itself hundreds of feet below seal level” I know seal level is only a pretty broad approximation, but according to Wikipedia, the Baikal seal is a freshwater seal species found in Lake Baikal, which (presuming said seals sometimes can be found along the shoreline at 1,494 ft amsl) means that a drone flying along (especially in a place like Death Valley) might easily be hundreds of ft below seal level, though I suppose it might not be suddenly.

The first carnival ride operator to put up a piece of cardboard stating “you must be this tall to ride the roller coaster” had no idea that he would someday be regarded as a pioneer in the field of artificial intelligence.

Fake news! I can see that building out my window, but the owners are trying to keep it secret – maybe they should have built it a few stories smaller..

Now that the story has leaked they will probably put an invisibility generator on it so that it will look like it’s not there…

Adding some inertia might help improve the quality of crowdsourced data.

Science (one of the more valuable examples of crowdsourced information) has significant inertia, changes take the preponderance of information on a topic.

Wikipedia (and similar) has very little inertia. Which is okay for a low quality article, but a high quality article requires a lot of maintenance just to keep it from being degraded by miscellaneous changes.

Unlike evolution via natural selection, or science, there is not much of a ratchet – a mechanism to keep the changes going in a more useful/accurate direction.

Oh yes we never go backwards in science for example our increasing understanding of science means that we decrease our pollution every year! And our increasing knowledge of the human condition means we have less suffering and hunger every year! WOW!

” the Great Tower of Melbourne could have been avoided with a single line of code validating the height data pulled from OpenStreetMap” This really made me laugh – all so easy in hindsight, but at the time, trying to anticipate all the possible things to check for quickly becomes absurd.

It seems to me Microsoft have found the perfect way to use distributed biological neural networks to check for Bing Maps data integration errors.

Microsoft, the owner of Bing Maps, uses Openstreetmap as their map source. That tells me two things: Bing is even more worthless than I thought it was. And, Microsoft LOVES open source, as long as they can USE it, not CONTRIBUTE to it.