You don’t have two ears by accident. [Stoppi] has a great post about this, along with a video you can see below. (The text is in German, but that’s what translation is for.) The point of having two ears is that each ear receives sound from a slightly different angle and distance, and your amazing brain can deduce a lot of spatial information from that data.

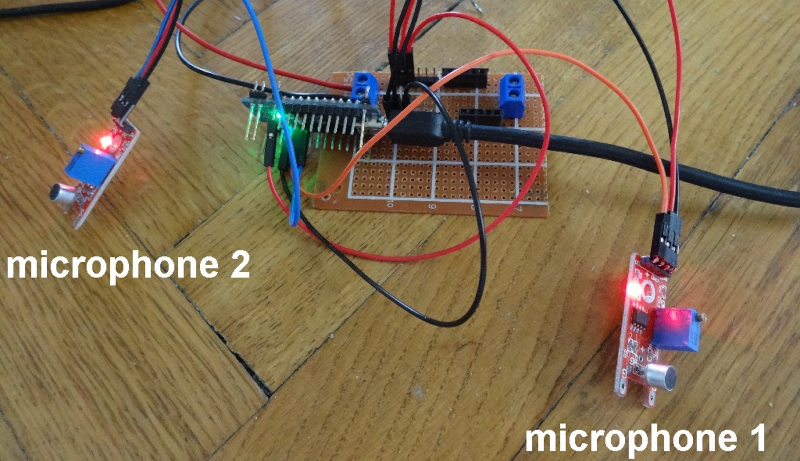

For the Arduino demonstration, cheap microphone boards take the place of your ears. A servo motor points in the direction of the sound. This would be a good gimmick for a Halloween prop or a noise-sensitive security camera.

Math-wise, if you know the speed of sound, the distance between the sensors, and a few other pieces of data, you wind up with a fairly simple trigonometry problem. In non-math terms, it is easy to get a feel for why this works. If the sound hits both microphones at once, it must be coming from straight ahead. If it hits the left microphone first, the source must be closer to that microphone, and vice versa. If the source were right in line with both microphones but closer to the left, the time delay would be exactly the distance between the sensors divided by the speed of sound. If the delay is less than that, the source must be somewhere in between.
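The trigonometry can be sketched in a few lines. This is not [Stoppi]'s code, just a minimal illustration that assumes the source is far away compared to the microphone spacing, so that the delay, the spacing, and the angle are related by sin(θ) = c·Δt / d. The function names, the sign convention, and the 343 m/s speed of sound (air at roughly 20 °C) are all assumptions made for the example.

```python
import math

# Assumed value: speed of sound in air at roughly 20 degrees C.
SPEED_OF_SOUND_M_S = 343.0


def bearing_from_delay(delay_s, mic_spacing_m, speed=SPEED_OF_SOUND_M_S):
    """Estimate the direction of a distant sound source in degrees.

    delay_s is (arrival time at left mic) - (arrival time at right mic).
    Assumed convention: 0 is straight ahead, positive is toward the
    right microphone, negative is toward the left microphone.
    """
    # Extra path length to the farther mic, as a fraction of the spacing.
    ratio = speed * delay_s / mic_spacing_m
    # Timing noise can push the ratio slightly past +/-1, so clamp it.
    ratio = max(-1.0, min(1.0, ratio))
    return math.degrees(math.asin(ratio))


def to_servo_angle(bearing_deg):
    """Map a -90..+90 degree bearing onto a 0..180 degree hobby servo."""
    return max(0, min(180, round(90 + bearing_deg)))


if __name__ == "__main__":
    spacing = 0.20  # hypothetical 20 cm between the microphones
    max_delay = spacing / SPEED_OF_SOUND_M_S
    for fraction in (-1.0, -0.5, 0.0, 0.5, 1.0):
        bearing = bearing_from_delay(fraction * max_delay, spacing)
        print(f"delay {fraction * max_delay * 1e6:8.1f} us -> "
              f"bearing {bearing:6.1f} deg, servo {to_servo_angle(bearing)}")
```

With a 20 cm spacing, the largest possible delay is only about 580 microseconds, which is why the arrival times need to be captured with microsecond-level resolution.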

The microphone modules have both analog outputs and digital outputs. The digital output triggers if the sound level exceeds a limit set by a potentiometer. By using these modules, the circuit is trivial. Just an Arduino, the two modules, and the servo motor.

Now imagine that you wanted all this spatial detail to come through your headphones. Recording binaural audio is a thing. You can 3D print a virtual head if you are interested. We’ve seen projects for this several times.

Um… if the sound hits both ears at the same time, how do you know the zombie isn’t *behind* you?

The human sense of hearing is exceptional. By tilting your head while listening to the same sound, you can get a 3D mapping of the sound locations.

The sense of hearing also has a tiny channel capacity: you can only distinguish about 5 levels of tone at any one time.

That is to say, subjects can identify which of 5 tones in one range (say, around 400 Hz) was played with no errors, and likewise for 5 tones in a different range (say, around 1000 Hz), but if you ask them to identify which of 10 tones across both ranges was played, they will make a lot of errors. The channel capacity of hearing tones is around 2.5 bits of information.

(And since I know people will ask, here’s a reference explaining it: http://www2.psych.utoronto.ca/users/peterson/psy430s2001/Miller%20GA%20Magical%20Seven%20Psych%20Review%201955.pdf)

Here is the segment of the Smarter Every Day video “Shooting Down a Lost Drone and why Dogs Tilt their Heads – Smarter Every Day 173” that answers this question.

Temporal Cues – sound arrives at a slightly different time at each ear, which gives the left/right direction.

Spectral Cues – sound reflects differently depending on the shape of your ears, which gives the vertical axis info.

https://youtu.be/Oai7HUqncAA?t=193

Let’s not forget attenuation cues. When sounds from behind pass through the ear, certain frequencies get through better than others. Along with the other factors you mentioned, this gives a full 3D sense of what direction the sound is coming from.

Cool project, I’m glad to see this can be done in a simple way.

…Billions and billions of suns.

Being profoundly deaf myself… this could be a very good tool for the blind and deaf, to warn them of loud noise or danger… or even someone knocking on the door… I wonder if it’s possible to simulate the sound by jiggling the servo as it points to the source.

Same boat. Deaf in my right ear and my left one barely works. I’ve never had stereo hearing in my life and one of my more frequent difficulties is pinpointing where a sound is coming from.

I work in a store that has a few dozen anti-theft alarms that run off batteries. When those batteries are low it beeps once every minute to let ya know they need batteries. I can’t tell where in the room the sound is coming from and have to go and stand by each cabinet and wait for it to beep to see if I’m by the right one or not.

Same goes for unwanted noises in a car or motorcycle engine. I gotta run a bit of vinyl tubing to my ear and sweep around to locate where it’s coming from.

I know the feeling too well. Lol… being mono and having to radar for the sound source can be very hard… especially along with background noise. Can’t those alarms have a flashing light put on them?

You (or the store policy) could do what the auditorium overhead lightbulb replacers do: change them all at once. It’s a balance between wasting end-of-lifetime of individual consumables vs the labor of hauling out the scissor lift (or standing around waiting for a beep).

If it were light bulbs… absolutely we do that. But in this particular case it means taking all the product off a cabinet, then the shelves, then the panels of the cabinet off. Huge pain, but is what it is… standing around ain’t so bad! These things are like smoke detectors, you get years of life outta em. But when they go they are just as annoying!

My spouse and I toyed with this concept using haptic feedback earrings when they were applying to a Masters in Biotech.

Effectively, you can do it all without a brain, and using a pair of vibratory motors and an op-amp.

Since those mics don’t have ears attached to them, would adding a third give you the triangulation needed to tell if something is in front or behind you?

Yes, that’s one way, for sure. Google Ambisonics for a multi-mic system for recording sound from every direction.

This is how the “Shot Spotter” system in NYC works. Microphones are placed around the city in known locations. They pick up gunfire, and each microphone that hears it can generate a line of bearing. Get 3 LOBs or more and you have a fairly accurate fix on the shot location.

I’ve been using dummy head mics for decades. It is the only way to do the human interface with sound; Ambisonics, when set up a certain way, comes close. Binaural simply goes for the full VR experience with sound, and has since Clement Ader’s demo in 1888. It is time-of-arrival sonar working on delays of 0 to 800 microseconds. Some blind people can click and hear at least a door in a hall, and are usually space-aware indoors. It’s not just for bats and cetaceans.

What would happen if you put such a head on the servo? Spooky!

A Styrofoam wig stand is a good start: bury a pair of mics in it.

Binaural recording is awesome. I’ve been getting great results by just getting cheap open walkman-style headphones, and replacing the headphone speakers with omni electret capsules. Then you just wear them – they’re cheap, stealthy and easy to use anywhere. I’ve done this for many years, recording to cassette, MiniDisc, and now ZOOM portable recorders.

One fun experiment is to make a recording while walking through an interesting sonic space, like a park. Include some soft-spoken commentary like “heading north from pine tree”, “just going past water fountain”, etc. Then, maybe at a later date, play back the recording through headphones while repeating the walk. The realism of hearing the playback in the same visual space is astounding. Warning – don’t try this where there’s physical danger, like crossing streets.