3D-scanning seems like a straightforward process: put the subject inside a motion control gantry, bounce light off the surface, measure the reflections, and do some math to reconstruct the shape in three dimensions. But traditional 3D-scanning struggles with subjects that have complex topologies and lots of nooks and crannies that light can’t get to, which is why volumetric 3D-scanning could become an important tool someday.

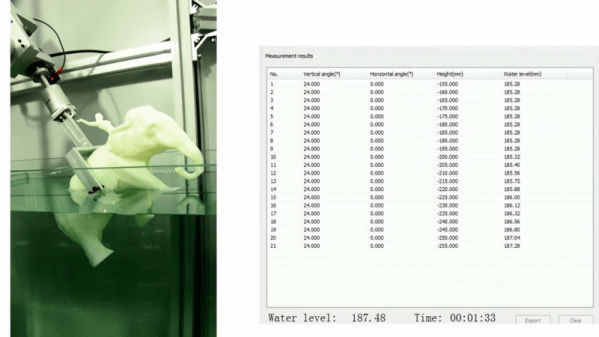

As the name implies, volumetric scanning relies on measuring how much of a medium an object displaces as it is moved through it. In the case of [Kfir Aberman] and [Oren Katzir]’s “dip scanning” method, the medium is a tank of water whose level is measured to high precision with a float sensor. The object to be scanned is dipped slowly into the water by a robot as data is gathered. The robot then removes the object, changes its orientation, and dips it again. Dipping is repeated until enough data has been collected to run through a transformation algorithm that can reconstruct the shape of the object. Anywhere the water can reach can be scanned, and the video below shows how good the results can be with enough data. Full details are available in the PDF of their paper.
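To get a feel for what a single dip can tell you, here is a minimal Python sketch. It is not the authors' reconstruction algorithm, just the basic displacement relationship it builds on. It assumes a tank with a uniform cross-section, so the displaced volume is the tank area times the rise in water level, and it assumes the immersion depth is measured from the water surface to the lowest point of the object. The names `slice_areas`, `displaced_volume`, `tank_area`, and the synthetic sphere data are all hypothetical, made up for illustration.

```python
import numpy as np


def displaced_volume(level_rise, tank_area):
    """Submerged volume of the object, assuming a tank of uniform cross-section."""
    return tank_area * np.asarray(level_rise, dtype=float)


def slice_areas(immersion_depth, level_rise, tank_area):
    """Estimate the object's cross-sectional area at each immersion depth.

    The rate of change of displaced volume with depth equals the area of the
    slice currently passing through the water surface.
    """
    volume = displaced_volume(level_rise, tank_area)
    depth = np.asarray(immersion_depth, dtype=float)
    return np.gradient(volume, depth)


# Synthetic test data (an assumption, not measured): a sphere of radius 1
# lowered into a tank with a cross-sectional area of 25 square units.
radius = 1.0
tank_area = 25.0
depth = np.linspace(0.0, 2.0 * radius, 401)

# Volume of the submerged spherical cap at each depth.
cap_volume = np.pi * depth**2 * (3.0 * radius - depth) / 3.0
level_rise = cap_volume / tank_area

areas = slice_areas(depth, level_rise, tank_area)

# Exact area of the circular slice at the waterline, for comparison.
expected = np.pi * (2.0 * radius * depth - depth**2)
max_error = float(np.max(np.abs(areas - expected)))
```

One dip only yields a profile of slice areas along the dipping axis, which is why the object has to be re-oriented and dipped many times before a full shape can be recovered.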

While optical 3D-scanning with the standard turntable and laser configuration will probably be around for a while, dip scanning seems like a powerful method for capturing the shape of hard-to-reach surfaces using really simple equipment.

[wpvideo xx88I1SN]

Thanks to [bmsleight] for the tip.