Imagine being able to clone your favorite LEGO piece: not just any piece, but, say, one that sells for €50 second-hand. [Balazs] from RacingBrick posed exactly this question: can a 3D scanner recreate LEGO pieces at home? Armed with Creality's CR-Scan Otter, he set out to duplicate a humble DUPLO sheep and, of course, to tackle the holy grail of LEGO collectibles: the rare LEGO goat.

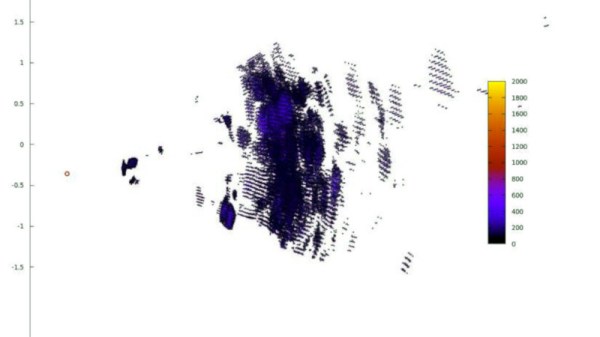

The CR-Scan Otter is a neat gadget for hobbyists, capable of capturing objects as small as a LEGO piece. The scanner handled larger, blocky pieces well, but the reflective LEGO plastic caused problems, and multiple scans were needed to capture fine detail accurately. With the clever use of 3D-printed tracking points, even the elusive goat came to life, albeit with imperfections. The process highlighted both the potential and the limitations of replicating tiny, complex shapes. From a multi-colored DUPLO sheep to metallic green dinosaur jaws, [Balazs]'s experiments show how scanners can fuel customization for non-commercial purposes.

For those itching to enhance their builds or replace lost parts, the project is inspiring, but the practical takeaway is clear: cloning LEGO pieces with a scanner is fun, yet far from plug-and-play. Check out [Balazs]'s exploration below for the full geeky details and inspiration.