If a picture is worth a thousand words, a video must be worth millions. However, computers still aren’t very good at analyzing video. Machine vision software like OpenCV can do certain tasks like facial recognition quite well. But current software isn’t good at determining the physical nature of the objects being filmed. [Abe Davis, Justin G. Chen, and Fredo Durand] are members of the MIT Computer Science and Artificial Intelligence Laboratory. They’re working toward a method of determining the structure of an object based upon the object’s motion in a video.

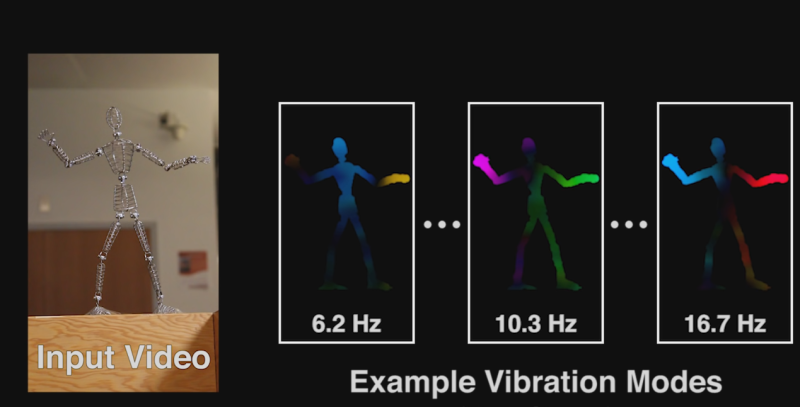

The technique relies on vibrations which can be captured by a typical 30 or 60 frames per second (fps) camera. Here’s how it works: A locked-down camera is used to image an object. The object is set in motion by wind, by someone banging on it, or by any other mechanical means. This movement is captured on video. The team’s software then analyzes the video to see exactly where the object moved, and how much it moved. Complex objects can have many vibration modes. The wire frame figure used in the video is a great example: the hands of the figure will vibrate more than the figure’s feet. The software uses this information to construct a rudimentary model of the object being filmed. It then allows the user to interact with the object by clicking and dragging with a mouse. Dragging the hands will produce more movement than dragging the feet.
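To make the idea of "where it moved, and how much" concrete, here is a minimal sketch of frequency-domain analysis on tracked motion. It is not the team's code. It assumes we already have a displacement trace for each tracked point (real footage would need something like optical flow to get there), and the `dominant_mode` function and the synthetic "hand" and "foot" signals are made up for illustration.

```python
import numpy as np

def dominant_mode(displacements, fps):
    """Find the strongest vibration frequency and each point's amplitude at it.

    displacements: array of shape (n_frames, n_points), one column per
    tracked point (hypothetical input, e.g. from a motion tracker).
    Returns (frequency_hz, amplitude_per_point).
    """
    n_frames = displacements.shape[0]
    # Subtract each point's rest position so the DC bin does not dominate.
    centered = displacements - displacements.mean(axis=0)
    spectrum = np.fft.rfft(centered, axis=0)
    freqs = np.fft.rfftfreq(n_frames, d=1.0 / fps)
    # Sum power over all points, then pick the strongest non-DC bin.
    power = (np.abs(spectrum) ** 2).sum(axis=1)
    peak = int(np.argmax(power[1:])) + 1
    # For a real sinusoid that lands on a bin, amplitude = 2 * |X[k]| / N.
    amplitude = 2.0 * np.abs(spectrum[peak]) / n_frames
    return freqs[peak], amplitude

# Synthetic stand-in for a filmed figure: both points shake at 6 Hz,
# but the "hand" swings much further than the "foot".
fps = 60
t = np.arange(240) / fps                      # 4 seconds of video
hand = 3.0 * np.sin(2 * np.pi * 6.0 * t)      # pixels
foot = 0.5 * np.sin(2 * np.pi * 6.0 * t)      # pixels
freq, amp = dominant_mode(np.column_stack([hand, foot]), fps)
print(freq, amp)
```

The per-point amplitudes at the peak frequency act as a crude mode shape: points with larger amplitude are the ones that should respond more when the object is "dragged" at that mode.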

The results aren’t perfect; they remind us of computer animated objects from just a few years ago. However, this is very promising. These aren’t textured wire frames created in 3D modeling software. The models and skeletons were created automatically using software analysis. The team’s research paper (PDF link) contains all the details. Check it out, and check out the video after the break.

Why does this come across as “Video analysis solved… we say screw it and use a form of sonar”? :-D

Good point, though, that understanding an object comes through interacting with it, and babies begin to do it by stuffing things in their mouths… maybe we need machines with mouths :-D

Movies are about to get a lot reeler.

freaky….

Beautiful. Well thought out, using the frequency domain of an image to find its moving parts. Of course, there should be antecedents, but I haven’t read any.

I wonder if the software can also tell us where the force or frequency needs to be to get an object to behave in the same way as a simulation.

Now this, I could actually see this having a lot of applications in making animations in video games more realistic. ESPECIALLY in making physics in games and bringing it up to a reasonable level. I could completely see taking a leaf off a plant, doing this to it and then making a 3d model of a plant with the same weights and whatnot to create more realistic animations.

16.7Hz is above what would be the Nyquist frequency when capturing at 30 FPS… so I’m guessing they used 60 FPS here. A question to ask though is, is the 6.2Hz actually 6.2Hz, or is some of it possibly at an image frequency?
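The arithmetic behind that question can be sketched in a few lines. The helper names below are hypothetical, and the sketch assumes ideal uniform sampling with no anti-alias filtering, which a real camera only approximates.

```python
def nyquist_hz(fps):
    """Highest frequency that can be captured unambiguously at this frame rate."""
    return fps / 2.0

def apparent_frequency(true_hz, fps):
    """Frequency at which a true_hz vibration shows up when sampled at fps."""
    folded = true_hz % fps
    # Anything above Nyquist folds back into the 0..fps/2 band.
    return min(folded, fps - folded)

print(nyquist_hz(30))                 # 15.0, so 16.7 Hz does not fit at 30 FPS
print(apparent_frequency(16.7, 30))   # folds down to about 13.3 Hz
print(apparent_frequency(16.7, 60))   # stays at 16.7 Hz at 60 FPS
print(apparent_frequency(53.8, 60))   # about 6.2 Hz: an alias candidate
```

So at 60 FPS, a peak at 6.2 Hz is consistent with a true 6.2 Hz vibration, but also with one near 53.8 Hz or 66.2 Hz. The video alone cannot tell these apart without extra information.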

In one of their videos they use the “artefacts” from the rolling shutter to increase the FPS.

So they can analyze multiple kHz frequencies from 50Hz video
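A rough back-of-the-envelope sketch of why that is plausible: a rolling shutter reads the sensor out row by row, so each row is a slightly later sample in time. The numbers below (1080 rows, readout filling the whole frame period) are assumptions for illustration, not figures from the team's work, and `rolling_shutter_row_rate` is a hypothetical helper.

```python
def rolling_shutter_row_rate(rows, fps, readout_fraction=1.0):
    """Rows sampled per second while the sensor is reading out.

    readout_fraction is the share of each frame period spent reading rows
    (1.0 assumes no idle gap between frames, which real sensors rarely manage).
    """
    frame_period = 1.0 / fps
    readout_time = frame_period * readout_fraction
    return rows / readout_time

# Assumed example: 1080-row sensor, 50 FPS video.
rate = rolling_shutter_row_rate(rows=1080, fps=50)
print(rate)        # roughly 54,000 row samples per second
print(rate / 2.0)  # corresponding Nyquist limit, roughly 27 kHz
```

In practice the idle time between frames leaves gaps in the row samples, so the usable bandwidth is lower than this ideal figure, but it is still far above the frame rate.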

They should team up with the guys that were using FPGA based systems to extract real-time geometry from stereo camera feeds. I wonder if they can use a third central camera running at low resolution and 120 fps to extract the dynamics that can then be used to distort the high resolution 3D video?

The high-FPS, low-res sensor in the Wiimote immediately jumps to mind.

Reminds me of Myst.

Jesus, this just completely blew my mind. This is very impressive!