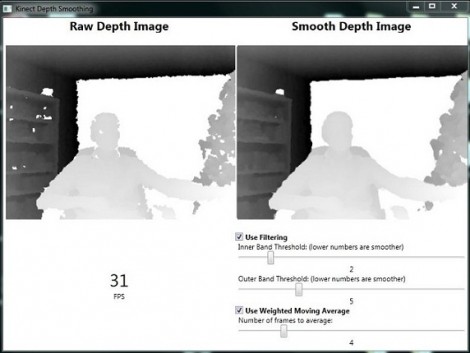

[Karl] set out to improve the depth image that the Kinect camera is able to feed into a computer. He’s come up with a pre-processing package which smooths the depth data in real time.

There are a few problems here. One is that the Kinect has a fairly low resolution; another is that its depth sensing is limited to a range of about 8 meters from the device (an issue we hadn’t considered when looking at Kinect-based mapping solutions). But those shortcomings can be mitigated by improving the data that it does collect. [Karl’s] approach is twofold: pixel filtering and averaging of movement.

The pixel filtering works with the depth data to help clarify the outlines of objects. A weighted moving average is used to help reduce the amount of flickering in areas rendered from frame to frame. [Karl] included a nice GUI with the code which lets you tweak the filter settings until they’re just right. See a demo of that interface in the clip after the break and let us know what you might use this for by leaving a comment.
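To give a feel for the frame-to-frame averaging, here is a minimal Python sketch of a weighted moving average over depth frames. It is not [Karl's] code: the class name `WeightedMovingAverage`, the `max_frames` setting, and the linear weighting (newest frame counts most) are all assumptions made for illustration.

```python
from collections import deque


class WeightedMovingAverage:
    """Smooth depth frames over time; newer frames get larger weights."""

    def __init__(self, max_frames=4):
        # max_frames is a hypothetical tuning knob, not a value from the project.
        self.frames = deque(maxlen=max_frames)

    def update(self, frame):
        """Add a frame (a list of rows of depth values) and return the smoothed frame."""
        self.frames.append(frame)
        # Assumed linear weights 1, 2, ..., n: the oldest frame counts least.
        weights = range(1, len(self.frames) + 1)
        total = sum(weights)
        rows, cols = len(frame), len(frame[0])
        return [
            [
                sum(w * f[r][c] for w, f in zip(weights, self.frames)) / total
                for c in range(cols)
            ]
            for r in range(rows)
        ]


# A single pixel that jumps from 100 to 200 eases toward the new value
# over a few frames instead of snapping to it.
smoother = WeightedMovingAverage(max_frames=3)
for raw in ([[100]], [[200]], [[200]], [[200]]):
    print(smoother.update(raw))
```

Keeping more frames should make the image steadier but slower to react to real movement, which is presumably the kind of trade-off the GUI sliders let you explore.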

[youtube=http://www.youtube.com/watch?v=YZ64kJ--aeg&w=470]

There were so many Kinect posts that people just say "next" when they see one now. This being the only comment is proof of that.

I used to run a Half-Life server, and there was this little program to boost FPS… Practical or not, it might be worth a look under the hood: Boost FPS, a program that was used with the Half-Life 2 Source Dedicated Server.

Won’t work for the Kinect unfortunately. Good idea though. The 30FPS limit for the Kinect is a limit of how fast the Kinect itself can process information, not the computer connected to it. To increase the FPS, Microsoft would need to upgrade the Kinect hardware itself.

That was an interesting read. I’m not getting the point of the distinction between the inner and outer filter loops, though. Why not weight the values based on distance? That way, a couple of nonzero pixels close to the hole would be worth more than several nonzero pixels further from the hole, which should help improve the accuracy of the fill.

At least, I’d assume so.
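A rough Python sketch of that distance-weighting idea, not the project's actual filter: the function name `fill_hole`, the `radius` parameter, and the inverse-distance weight are assumptions chosen for illustration, and a depth of 0 is treated as "no reading".

```python
import math


def fill_hole(depth, row, col, radius=2):
    """Estimate a missing (zero) depth value from nonzero neighbours,
    weighting each neighbour by the inverse of its distance to the hole."""
    weighted_sum = 0.0
    weight_total = 0.0
    for r in range(max(0, row - radius), min(len(depth), row + radius + 1)):
        for c in range(max(0, col - radius), min(len(depth[0]), col + radius + 1)):
            value = depth[r][c]
            if value == 0 or (r == row and c == col):
                continue  # skip other holes and the pixel being filled
            # Assumed weighting: closer pixels count more (1 / distance).
            weight = 1.0 / math.hypot(r - row, c - col)
            weighted_sum += weight * value
            weight_total += weight
    # Leave the hole as 0 when no neighbour has a reading.
    return weighted_sum / weight_total if weight_total else 0


# The hole at column 1 sits next to the 1000 reading and two pixels
# from the 2000 reading, so the estimate leans toward 1000.
row_of_depths = [[1000, 0, 0, 2000]]
print(fill_hole(row_of_depths, 0, 1))
```

With a plain average the hole would land halfway between the two readings; weighting by distance pulls it toward the nearer one.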

I need to get back to getting some Kinect programs buildable under my home environment.

Having a consistent depth map doesn’t really help a lot in my actual dev experience (going on 18 months now). The real issues surround update latency: the Kinect itself takes 60ms to process data internally. When it's linked to any sort of deferred rendering process, you are already looking at nearly 120ms of action->display latency without accounting for your monitor/TV output.