Self-driving cars are starting to pop up everywhere as companies slowly begin to test and improve them for the commercial market. Heck, Google’s self-driving car actually has its very own driver’s license in Nevada! There have been few accidents, and most of the time, they say it’s not the autonomous cars’ fault. But even when autonomous cars are widespread, there will still be accidents; it’s inevitable. And what will happen when your car has to decide whether to save you or a crowd of people? Ever think about that before?

It’s an extremely valid concern, and raises a huge ethical issue. In the rare circumstance that the car has to choose the “best” outcome — what will determine that? Reducing the loss of life? Even if it means crashing into a wall, mortally injuring you, the driver? Maybe car manufacturers will finally have to make ejection seats a standard feature!

If that is standard on all commercial autonomous vehicles, are consumers really going to buy a car that, in the 0.00001% case, might be programmed to kill you in order to save pedestrians? Would you even be able to make that choice if you were the one driving?

Well, the researchers decided to poll the public, and therein lies the paradox: people do think autonomous cars should sacrifice the needs of the few for the needs of the many… as long as they don’t have to drive one themselves. There’s actually a name for this kind of predicament. It’s called the Trolley Problem.

In scenario 1, imagine you are driving a trolley down a set of railway tracks towards a group of five workers. Your inaction will result in their death. If you steer onto the side track, you will kill one worker who happens to be in the way.

In scenario 2, you’re a surgeon. Five people require immediate organ transplants. Your only option is to fatally harvest the required organs from a perfectly healthy sixth patient, who doesn’t consent to the idea. If you don’t do anything, the five patients will die.

The two scenarios are identical in outcome. Kill one to save five. But is there a moral difference between the two? In one scenario, you’re a hero — you did the right thing. In the other, you’re a psychopath. Isn’t psychology fun?

As the authors of the research paper put it, their surveys:

suggested that respondents might be prepared for autonomous vehicles programmed to make utilitarian moral decisions in situations of unavoidable harm… When it comes to split-second moral judgments, people may very well expect more from machines than they do from each other.

Fans of Isaac Asimov may find this tough reading: when asked if these decisions should be enshrined in law, most of those surveyed felt that they should not. Although the idea of creating laws to formalize these moral decisions had more support for autonomous vehicles than for human-driven ones, it was still strongly opposed.

This does create an interesting grey area, though: if these rules are not enforced by law, who do we trust to create the systems that make these decisions? Are you okay with letting Google make these life and death decisions?

What this really means is that before autonomous cars become commercial, the public is going to have to make a big decision about what’s really “OK” for autonomous cars to do (or not to do). It’s a pretty interesting problem, and if you’re interested in reading more, check out this other article about How to Help Self-Driving Cars Make Ethical Decisions.

Might have you reconsider building your own self-driving vehicle then, huh?

[Research Paper via MIT Technology Review]

If a rich man owned a self driving car, could he bribe someone else’s car to kill 5 poor people to save him?

Stop dreaming about what you can’t make for yourself. JACKSON.

Hack your own self driving car and kill your enemies with plausible deniability.

Why would you hack your own car to run someone over? Hack someone else’s. Hack your enemies, make it look like they did it. The possibilities for this to get really messy are endless.

It’s bad enough when hackers (both randoms & state-sponsored) use botnets for DDoS attacks etc. When they use botnets of hacked cars to run over their enemies (or crash into infrastructure, or jam countries’ roads, or ram their competitors’ vehicles off the road…), it’s going to be madness.

You can hack every car, not only self-driving cars or cars with computers. Cutting the brake line is also a hack that makes the car do something other than intended: not braking.

Nobody asked me, but the surgery question is ethically *quite* clear. You do not do the surgery. There is no guarantee that the five people who die will not die anyway from post-surgical complications or organ rejection, if nothing else.

I think a lot of the hand-wringing about the cars assumes that they find themselves in situations that human drivers do. No accident has just one, 100% cause. Self-driving cars would be much better at avoiding situations that lead to accidents than their human counterparts, meaning that they will have far fewer such ethical choices to make.

And, for the record, you slam the car into a wall before hitting a pedestrian because the passengers are shielded from the effects of the collision (crumple zones, seat belts, air bags, etc) far better than the pedestrian(s) are.

It wasn’t implied that the people getting the organ transplant were given odds of survival. It was a 100% chance, as in, one person must die to save five. No organ rejection, no complications. Just a five for one swap.

Yeah, well, that’s just Kobayashi Maru.

Moral algebra is a fascinating thing! Logically, you can prove all sorts of morals that would outrage people if you actually carried them out. Partly I suppose cos we’re illogical hypocrites.

And partly because such algebra is trying to force incomplete and ill-defined axioms as the starting points of such calculations. Garbage in, garbage out.

Such as trying to apply utilitarianism to a problem, which is making all sorts of presumptions that we may or may not agree with, such as what does “greatest good for most people” mean in the first place and why should we want it? It results in ridiculous propositions.

In ANY real-life surgery there is a chance of complications, so yes it was absolutely implied. That’s where the psychopathy comes in — the surgeon could be killing **6** people by doing that surgery!!!

“Self-driving cars would be much better at avoiding situations that lead to accidents than their human counterparts, meaning that they will have far fewer such ethical choices to make.”

That’s assuming way too much.

AIs are actually far far dumber than people on a cognitive-perceptual basis, and they’re having great trouble even perceiving their surroundings to any degree of accuracy. You may feed a video stream to a computer, and load it with radars and proximity sensors, but getting the computer to understand even a little bit about what it is looking at basically requires a supercomputer to pull off in real time.

A computer would be fast to make logical inferences such as, what is the best reaction to minimize damage in an accident or where to steer to not hit anything – but it all relies on the system’s ability to measure and determine the situation it is actually in. All the “self-driving cars are safer” arguments rely on the false notion that the computer is superhumanly aware of its surroundings, whereas in reality the opposite is true – the computer is far worse at it than a human.

The Google Car for example perceives all its surroundings as vague pixel blobs with its lidar. It relies almost entirely on pre-programmed GPS routes and simply tries not to hit any of the “blobs” along the way. In the press they’ve hyped about how it detects things like cyclists, but in reality they’re all just “blobs” to the AI. It might classify things into bigger blobs and smaller blobs, and assign different probabilities about their future trajectories some 0.05 seconds ahead in time, but with the effective complexity and smarts of a flatworm it really can’t do anything else. It’s simply reacting really fast to situations that would be avoided entirely if the machine understood what it was actually doing.

And when I say “almost entirely”, I mean it quite literally. The Google Cars are taught a route by a human driver. They can’t just be sent off to any address – you need someone to teach them the way there turn by turn, all the way down to what line to take around a corner. You teach it where to stop for red lights, where to stop for intersections etc. because the car can’t identify that information independently.

It records how the human teacher drives, with additional data input such as where to look for the red lights (it won’t find them on its own), and then replicates the effort within certain parameters such as “keep two seconds from leading vehicle”, where “leading vehicle” is the closest blob ahead it can see on its lidar. It’s a line following robot that slavishly follows an invisible virtual line drawn on the road, and whenever it encounters a pre-marked or unexpected obstacle, it stops and waits for the obstacle to clear itself.

That’s it. It’s incredibly easy to confuse such a system, such as by having an empty plastic bag flutter by, or a drop of water in the visor deflecting the laser beam, causing the car to slam its brakes because it can’t actually tell the difference to anything. That’s why the Google cars have a disproportionate number of rear-end collisions to miles driven – they stop at everything that confuses them, and wait longer than normal to get going, basically acting as road plugs where you wouldn’t expect to find actual drivers to stop.

The main trick they pull off is their ability to combine dead-reckoning with 3D lidar imaging to GPS data to keep accurate real-time location information down to the inch, so the programmed path doesn’t start drifting around.

It’s a bit strange that the Google cars need the route taught to them, since Google Earth seems able to deduce any route, including changing between different types of transport, waiting for buses and trains, etc. Why can’t they just feed the data from Google Earth into one of the cars? It doesn’t have to work it out by itself, which I appreciate probably takes a few MIPS.

Google maps treats the roads as simple “tubes”. It doesn’t need to know where the curb is, or the fact that the map often doesn’t align with where the roads actually are, because a human driver doesn’t need inch-by-inch instructions on how to drive.

The maps are actually highly inaccurate, but that’s alright since a human driver isn’t looking at the odometer to determine where to turn – a human driver can actually see the intersection and understand that it is an intersection, whereas the Google Car only sees vague blobs and has to be told precisely where to take the turn.

The fundamental problem is that for the computer to see an intersection and understand it as an intersection, it basically needs a level of semantic and contextual understanding about the world that the AI lacks.

The hard problem is basically, how to turn “1010101001001001” into the abstract thought of a road. It isn’t sufficient to simply label some set of data as “road” because the concept of a road isn’t such a simple entity – yet this is what things like computer vision algorithms do because nobody’s figured out how to do it otherwise.

Consider for example, a chair. We can show a computer a number of different chairs in different settings and make it compute a set of common statistical properties that enables it to label a chair a chair whenever it sees one with a high degree of accuracy. Now, we’ll take an example of a chair – a small stool – and place it in front of us on the floor, and put a coffee mug on it; is it a chair or is it a coffee table? What will the computer say?

Oh, and the point of the chair/table problem is when you tell the computer: “Put a sandwich on the table, not on the chair”.

Where will it put the sandwich?

Sure, but G-E knows the speed limits and features on a road.

I appreciate you couldn’t use it to drive blindfold. But I would’ve thought identifying the road itself, and the main features, would be covered in the car’s AI. It doesn’t even do that? That’s miserable! So if a temporary sign is put up, or temp traffic lights for road works, or whatever, it’s just gonna stop dead (hopefully) and ask for help?

And they want to let THAT on the roads? Jeebus, that’s completely crap! What a piece of shit car. So it only does basic processing from its many input sensors, and defaults to stopping dead a lot? To repeat myself, what a piece of crap.

All that money, all that computing power… Machine vision is already pretty good, semantic databases are coming along, and this thing’s the slow-witted big brother of those line-follower robots people built in the 70s? Proves Google’s PR is better than its programmers.

“Sure, but G-E knows the speed limits and features on a road.”

Except when it doesn’t, because it relies on external memory on such information rather than reading the road signs or the road itself. It gets it from an outside databank, where the information is updated out of sync, so things like temporary construction works or accident bypass traffic control may be missed completely.

It all presumes that Google will at some point have an overarching network of surveillance systems that constantly monitor all roads to feed data into the cars, so the cars themselves don’t need to be anything but minimally smart. Externalize the brain and even an insect can drive a car.

The question is, how will the system work before it is completed, how does it deal with the inevitable and occasional communications breakdown, and do we want such a system in the first place?

“I would’ve thought identifying the road itself, and the main features, would be covered in the car’s AI. It doesn’t even do that?”

It does, and it doesn’t. When the car is trained for a route, it records what the road looks like to its scanners and simplifies it into a stored 3D map with the help of the human operators. Key features are marked, pedestrians and cars are cleaned out of the raw data… etc. Then it keeps comparing GPS and lidar data to that map to track where it is at any given time.

The idea is that the Google Cars would eventually amass a large databank of 3D data that is continuously updated in small bits by the cars sending in new information when the map differs from what they measure, so any car could download the latest up-to-date 3D map of where they’re supposed to be going, and drive according to that. With a central database, Google can use supercomputers to filter the data.

Agreed, AIs are very poor at dealing with situations they have not been programmed to deal with.

If this was not the case NASA would not have to plan ahead every single move the Mars rovers make and they have some of the best software engineers on the planet working for them.

Also you are not a hero if you hit the one person with the car. It is called a tragic accident.

Also you do not choose to hit the one person. Odds are you will try to avoid them as well but if you can not then it is a tragic accident.

Now if you aim for a wall putting yourself at risk to save others then you are a hero.

The whole argument is wrong, assuming all road users follow the rules of the road, there should be no accidents, any accident will be down to human error or computer error.

Unless you’re driving too fast, you should always have time to stop if someone starts to cross the road, or at the very least slow down enough to cause minor injury vs killing them.
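As a rough sketch of the “time to stop” point above, stopping distance can be modelled as the distance covered during the reaction time plus the braking distance at constant deceleration. The reaction time, deceleration, and sight distance used here are illustrative assumptions, not figures from the article or the comments, and the function names are made up for this example.

```python
import math

MPH_TO_MPS = 0.44704  # exact conversion from miles per hour to metres per second


def stopping_distance_m(speed_mps, reaction_s, decel_mps2):
    """Reaction distance plus braking distance at constant deceleration."""
    return speed_mps * reaction_s + speed_mps ** 2 / (2.0 * decel_mps2)


def max_speed_for_sight_distance(sight_m, reaction_s, decel_mps2):
    """Largest speed (m/s) from which the car can still stop within sight_m.

    Solves v*t + v^2 / (2a) = d for v (positive root of the quadratic).
    """
    a, t, d = decel_mps2, reaction_s, sight_m
    return a * (-t + math.sqrt(t * t + 2.0 * d / a))


if __name__ == "__main__":
    # Assumed values: 1.5 s reaction time, 7 m/s^2 braking on a dry road.
    for mph in (20, 30, 40):
        d = stopping_distance_m(mph * MPH_TO_MPS, 1.5, 7.0)
        print(f"{mph} mph: about {d:.1f} m to stop")
    v = max_speed_for_sight_distance(25.0, 1.5, 7.0)
    print(f"25 m of clear road allows about {v / MPH_TO_MPS:.0f} mph")
```

Under this simple model, “too fast” just means any speed above what `max_speed_for_sight_distance` returns for the clear road available; a shorter reaction time raises that limit, which is the usual argument for a computer at the wheel.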

“And, for the record, you slam the car into a wall before hitting a pedestrian because the passengers are shielded from the effects of the collision” is a no-fault, communal, “the car is a throw-away item that doesn’t really represent 2 years of your working life” answer.

Ethics were mentioned and ethically, the car saves the person(s) who are not in the wrong. Error judgements have consequences. I doubt if this will be the case. The rational “cold equations” would make for too many media sob stories. “Something must be done. If saves only one life it is worth it!” Does the car know if the human in danger of being hit is a scofflaw or a child? Processing speed and knowledge of the environment can allow actions based on least damage and injury. But, does it swerve right into parked cars (they crumple nice too) where someone might be sitting in one, to avoid being side swiped by someone else who crosses the line from the other direction, and wreck the property of people not involved? The Indiana Jones – James Bond Matrix – Familyguy approach to achieving your goal?

Remember there won’t be any argument about who did what. It is all recorded in detail, so no court, no legal battles (except between car makers or with municipalities about road conditions). We all know the instinct to swerve and miss a dog or cat and how many collisions are caused by that. The smart car I would assume, has no trouble or hesitation with that decision. I suppose if the pet has an RFID, the car can text the owner the GPS coordinates of the carcass.

The problem is easily solved by limiting their speed to 5 miles per hour and having someone walk in front with a red flag.

Yep – don’t scare the horses. (BTW, this is still a law on the books in some places. Alternatives include using a whistle or firecrackers.)

And a shot gun that must be fired before they cross intersections … merica.

The problem is not morals. We like to ascribe these human moral challenges to a vehicle, but that’s not the problem domain. It’s known versus unknown information.

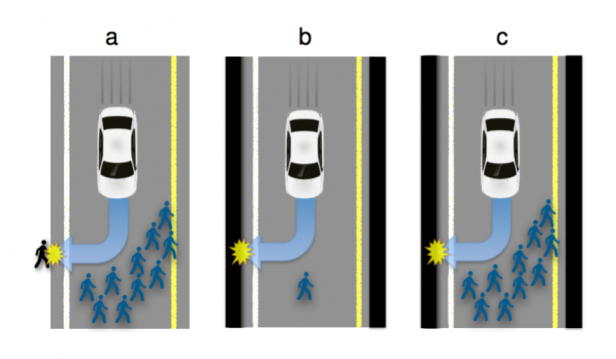

Let’s focus on example B or C. A pedestrian, for whatever reason, has appeared in the middle of the freeway in the car’s path. The car slams on the brakes but will not stop in time to not hit the pedestrian. What should the car do?

A: Swerve off of the road

B: Hit the pedestrian

We can say with reasonable certainty what the outcome of B will be: the car hits the person/s. Likely due to the very rapid and very controlled braking maneuvers an automated car can perform they might not even actually be hit. If they are going to be hit it’s not going to be at full speed.

But what about option A? Well what does ‘swerve off the road’ mean? If you’re in a city now you’re on the sidewalk, a decidedly less helpful place to be as there are likely to be other pedestrians, obstacles and even storefronts to fly into that could be full of more people.

Maybe we can tell it’s a grassy lawn? The car won’t be able to tell if the grassy lawn is wet with rain, giving the vehicle massively reduced braking capability and having the vehicle careen out of control into something that the car can’t detect until the car is already out of control.

Maybe we can tell it’s a solid object? But there’s no way to tell how solid it is. In the UK they’re experimenting with putting up chain link fencing with tarps over it around construction or accidents. To an ultrasonic sensor or computer imaging algorithm it’s going to look the same as a solid concrete wall. So we swerve into that, and suddenly we’re driving through a construction zone. Or into another accident.

Maybe we can tell it’s a concrete barrier (somehow, magically). Ok, well how is the concrete barrier affixed to the ground? Is it sitting there in a temporary construction zone? Well it might not actually be affixed and crashing into it will send you into oncoming freeway traffic. One person dodged to create a multiple car pileup.

Good job everyone!

I’ll make the prediction right now. We will never see a vehicle intentionally drive into unknown territory to save a known outcome. The car knows how to brake, the car knows how to turn, the car knows what is around it but *only on the road surface*. The moment you’re off the road every single bet the car can make is off. There are just far too many variables at play. The car will do everything in its power to minimize the energy difference between itself and whatever it is about to hit, but it will not perform evasive maneuvers into unknown territory off the road.
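To put rough numbers on “not going to be at full speed”, here is a minimal sketch of how much speed and kinetic energy hard braking sheds before impact, assuming constant deceleration. The 65 mph starting speed and 8 m/s² deceleration are assumptions picked for illustration, and the function names are hypothetical.

```python
import math

MPH_TO_MPS = 0.44704  # exact conversion from miles per hour to metres per second


def impact_speed_mps(initial_mps, decel_mps2, distance_m):
    """Speed left after braking at constant deceleration over distance_m.

    Uses v^2 = v0^2 - 2*a*d and returns 0.0 if the car stops first.
    """
    remaining = initial_mps ** 2 - 2.0 * decel_mps2 * distance_m
    return math.sqrt(remaining) if remaining > 0 else 0.0


def energy_fraction(initial_mps, impact_mps):
    """Fraction of the original kinetic energy still present at impact."""
    return (impact_mps / initial_mps) ** 2


if __name__ == "__main__":
    v0 = 65 * MPH_TO_MPS  # assumed freeway speed
    for distance in (10, 20, 40, 60):  # metres of road left when braking starts
        v = impact_speed_mps(v0, 8.0, distance)
        share = energy_fraction(v0, v)
        print(f"{distance} m: impact at {v / MPH_TO_MPS:.0f} mph, "
              f"{share:.0%} of the original energy")
```

Because kinetic energy scales with the square of speed, every metre of straight-line braking removes the same amount of energy, which is why staying on the road and braking is at least a predictable option.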

The solution is to not jaywalk in front of traffic.

Then act as humanly as possible. If a human gets enough reaction time he would try to avoid the pedestrian, uncaring of what happens if he does so. And the self-driven car will have a lot more data and fast processing to try to calculate a safe enough maneuver.

yeh it would have better reflexes than us if it were an expensive computer.

Reflexes aside, it wouldn’t have enough time to process a scene with thousands of objects and calculate the path of least destruction. Let alone the time it would take to figure out what moral decision to take. Human brains may be slow to react, but we are quick to assess a situation and do something about it. The only thing a car should do is provide the driver with enough data all the time to give the driver routes of escape, or objects to watch for. Let us do the driving, let us be held accountable for the final decision.

That is purely a matter of power and architecture; tens of thousands of inputs and millions of variables aren’t too much for even modest computers, and stream-type processors like XMOS and the like eat it for breakfast.

You know this isn’t true right?

in 60% of cases when a human being is forced to make a decision with very tight time constraints they make an incorrect decision, they don’t weigh up the options, and tend to respond only to information presented immediately in front of them (so you’ll happily swerve into an entire crowd of people to avoid hitting a child on the road).

Computers are also orders of magnitude better than humans are at analysing thousands of objects, surely how much humans suck at tile matching games would tell you this. Add any more than 25 tiles and we almost get impossibly lost as to which one we just turned over.

With autonomous cars, humans should never be given any other decision than to potentially stop. Just a big Emergency Stop button.

They should certainly never be given any form of moral choice, it’s something that we do incredibly poorly as individuals. This is why juries are always a group of people.

I trust a piece of silicon to make better decisions (after the bugs have been ironed out) than me..

Why do people always think about themselves as infallible or the best for the job?

You’re replaceable, you’re worse than a piece of silicon couple of decades down the road.

Silicon chips don’t have bad days or marriage problems, they work, every day.

“Computers are also orders of magnitude better than humans are at analysing thousands of objects, surely how much humans suck at tile matching games would tell you this.”

That’s an unfair comparison. A tile matching game relies on a much higher level of cognition than tracking moving objects.

We have a very powerful brain that does things like identify a familiar face in a crowd of hundreds in a second, which would take a supercomputer just to find faces with few false positives or negatives. Meanwhile, our ability to remember abstract symbols is limited to about seven at a time. The thing is, navigating traffic is more the face-identifying kind of problem than remembering which tile had which abstract symbol underneath it. You’re just not aware of all the processing going on because it’s unconscious and not available to the conscious mind – except in the way that we just do things like pick up a pen off a table, which is an enormously difficult inverse kinematics problem if you tried to put a robot to the task.

“We have a very powerful brain that does things like identify a familiar face in a crowd of hundreds in a second, which would take a supercomputer just to find faces with few false positives or negatives. Meanwhile, our ability to remember abstract symbols is limited to about seven at a time. The thing is, navigating traffic is more the face-identifying kind of problem than remembering which tile had which abstract symbol underneath it”

Driving is much more about calculating multiple things all at the same time. How fast is the car in front of me travelling? How fast is the boy approaching the road travelling, when will he reach the road, where will my car be positioned if I don’t alter my path? How close am I to the cyclist beside me? How close am I to the tram next to me? These are things which humans are absolutely terrible at. The one item that you mention, ‘face-recognition’, is actually quite a high cause of accidents. Humans are easily distracted, we will happily spend 10 seconds looking at a pedestrian trying to work out if that’s Sally from the cafe down the road… all the while ignoring the car stopped in front of us, or the cyclist turning into our path. An autonomous vehicle will have very few (I want to say none) of the human faults that cause the vast majority of accidents.

I also believe that if I had an autonomous vehicle, then I wouldn’t care if I was travelling slower than I’m used to, since I could be doing something more productive than being stuck behind the wheel. Humans love to think we’re great multi-taskers… but we’re not. We aren’t able to successfully track all the objects in front of us all the time, let alone all the objects to the side and behind us.

That’s why the human should be the input for the car, watching the road with an EEG helmet that tells the car “careful”, “turn”, “stop” and stuff like that.

Are you being serious? That’s how a car already works. There’s a human in there who decides when to turn, when to stop, and when to be careful or not.

I addressed this.

>The car slams on the brakes but will not stop in time to not hit the pedestrian. What should the car do?

We’re presuming it’s a binary decision, hit the pedestrian or hit a wall. The car is already doing everything it can to not hit anything, but if it has to choose (like in the article) I’m absolutely certain it will choose to hit the thing in the road over the thing not in the road.

The whole point of removing humans from the driver’s seat is to avoid the randomness of human reaction. Humans react variably to situations, and often fail to follow the most logical course of action. Drop a theoretically ideal robot in its place, and it will always follow the logically perfect reaction to the situation. Problems like lack of knowledge of the situation are irrelevant in this setup, as we’re looking at the moral side. That said, it’s probably worth looking at the side effects of taking a chance, as there will be times where it’s necessary.

On a side note, one of the advantages of an autonomous car in a situation like this would be that it’s got more options available to it. It could trigger the various airbags, tighten seatbelts, or, if we end up with fully connected cars, which is likely in the age of autonomous vehicles, ask another vehicle for assistance. I.e., if it’s unable to stop due to brake failure, it could request the cars around it to help bring it to a safe stop, or even bring in another vehicle as a buffer in a collision, to create two smaller, definitely non-fatal accidents rather than one likely fatal one. I can’t think of a situation where that would be the case off-hand, but it’s something you’d have to think about: do you involve more people in an accident if it reduces the overall chances of death or injury?

I ride a bicycle to work, I can confirm that cars act randomly towards me.

While riding in the bike lane, some cars will zoom ahead and turn in front of me, some will zoom forward and wait, some will slow and turn behind me and, one just turned and hit me.

A very good example of a “mistake in judgment”.

The only correct answer is to slow down and wait.

All others are a “mistake in judgment” !

I also ride to work, and can confirm the stupidity of human drivers. :-(

> Drop a theoretically ideal robot in it’s place, and it will always follow the logically perfect reaction to the situation.

This was a key part of the plot to I, Robot…

Computers run on logic, ie, they’re made of logic. But they can’t do logical deduction, unless you program them to, and that requires making the thing into an algorithm. Which is most of the talent of programming, actual code just flows from your fingers, figuring out how to get what you want, and precisely and definitely WHAT you want, is the trick.

In any case, ignoring the moral thingy for now, computers are nowhere NEAR good enough to steer safely in traffic. Maybe the one freak car which, like a learner, other drivers will make allowances for. But when drivers stop giving them a break and doing the dickish things some of them do, these cars are doomed. And more than that, above a certain critical density, a certain amount of cars per road system, and chaos theory creeps in and creates all SORTS of problems you never imagined. You’ll find some tiny decision somebody made back in the beginning, seemingly innocuous, ends up paralysing traffic and sending cars to the Moon and back. And you’ll never see it coming, because that’s chaos, you can’t predict it without going through step by step til it happens.

Even human beings are a bit too stupid to drive safely, but what are you gonna do when things are so far apart from each other? If you want an example of the amazingness of AI, try talking to one of the big chatbots. They’re idiots! AI is great for what it is, but nowhere near ready for this.

It’s not hard to predict what would happen if all cars on the roads were running on algorithms.

Take the 2 second rule for example. You have a highway full of cars that each try to maintain 2 seconds of distance between themselves, packed as densely as they will go according to the rule. One more algorithmic car tries to merge in another car’s 2 second gap…

The car immediately has to slow down to grow a gap to the next car, but then the car behind it has to slow down even more to keep the same gap, and the car behind that has to brake even harder… essentially creating a whiplash effect that brings the whole line of cars to a halt behind the merging vehicle all the way down to however many miles long the line of cars extends.

The other option is that the merging car has to stop and wait for any number of minutes for a sufficient gap to appear in the traffic before it can attempt to merge, creating a traffic jam at the highway on-ramp instead, which then radiates down to however many cars are attempting to join the highway.

Human drivers would just squeeze in and drive bumper-to-bumper until the traffic clears up.

“Human drivers would just squeeze in and drive bumper-to-bumper until the traffic clears up.”

Likely causing accidents in the process…

The real solution is to program the autonomous cars to try to maintain a 3 second gap, but to be willing to tolerate a 2 second gap for X length of time when cars around them are merging or something.

“Likely causing accidents in the process… ”

But usually not, since everyone drives the same way.

“The real solution is to program the autonomous cars to try to maintain a 3 second gap”

And fit even less cars on the road? A 3 second gap at 70 mph is 100 yards. With that sort of traffic density, you need four extra lanes to fit the morning commute through.
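The 100 yard figure is easy to check, and the same arithmetic gives a rough feel for what a bigger time gap does to lane capacity. This is a back-of-the-envelope sketch with made-up function names; it ignores vehicle length, so the flow numbers are upper bounds and not traffic-engineering results.

```python
YARDS_PER_MILE = 1760
SECONDS_PER_HOUR = 3600


def gap_yards(speed_mph, gap_seconds):
    """Distance in yards covered in gap_seconds at speed_mph."""
    yards_per_second = speed_mph * YARDS_PER_MILE / SECONDS_PER_HOUR
    return yards_per_second * gap_seconds


def max_cars_per_hour(gap_seconds):
    """Upper bound on cars passing a point per lane per hour.

    Assumes every car keeps exactly gap_seconds behind the one ahead
    and ignores the length of the cars themselves.
    """
    return SECONDS_PER_HOUR / gap_seconds


if __name__ == "__main__":
    for gap in (2, 3):
        print(f"{gap} s gap at 70 mph: {gap_yards(70, gap):.0f} yards, "
              f"at most {max_cars_per_hour(gap):.0f} cars per lane per hour")
```

Going from a 2 second to a 3 second gap cuts the per-lane upper bound by a third, which is the capacity cost being complained about here.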

>> A 3 second gap at 70 mph is 100 yards.

>> And fit even less cars on the road?

If all the cars are talking to each other, all cars would be able to slow down at the same time, and fit more cars on the road.

The three-to-five second gap is for those that do not pay attention to the road.

The computer will have 100% attention, and have information about the road conditions a mile in front of it.

With this kind of efficiency, the current roads will work just fine.

“but to be willing to tolerate a 2 second gap for X length of time when cars around them are merging or something.”

Oh, and a car that “tolerates” a 2 second gap wouldn’t merge into a 3 second gap because it would leave only 1.5 seconds each side, so the cars on the road would have to keep a 4 second gap.

But then, since the car in front won’t speed up, the merging car has to slow down to increase its gap, so the car behind it slows down, and so-on until the traffic compresses to less than 4 second gaps towards the back and again nobody else can merge. Or, the car doesn’t slow down, and the car behind it doesn’t slow down, which preserves the gaps, which then get filled by other cars, and after a couple ramps there are nothing but 2 second gaps and again nobody can merge.

Which is just pointing out that the whole “2 second gap” rule doesn’t work in reality. It never has, which is why it is practically universally ignored.
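The merge arithmetic in this comment can be written out as a tiny sketch. It assumes the merging car drops into the exact middle of the gap and ignores the length of the car itself; the function names are made up for illustration.

```python
def gap_after_merge(gap_s: float) -> float:
    """Time gap left on each side when a car merges into the middle of gap_s."""
    return gap_s / 2


def min_gap_to_merge(tolerated_s: float) -> float:
    """Smallest existing gap a car will merge into if it tolerates tolerated_s."""
    return 2 * tolerated_s


def can_merge(gap_s: float, tolerated_s: float) -> bool:
    """True if both resulting gaps are at least the tolerated gap."""
    return gap_after_merge(gap_s) >= tolerated_s


if __name__ == "__main__":
    print(can_merge(3.0, 2.0))    # 3 s gap, 2 s tolerance
    print(min_gap_to_merge(2.0))  # gap the traffic would have to keep
```

With a 2 second tolerance a 3 second gap splits into 1.5 seconds each side, so the merge is refused, and the traffic would need to keep 4 second gaps for merging to work.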

The self driving car would not need to have a 2 second distance. It should monitor the car in front and react to the braking in a fraction of a second. The ideal would be to keep 2 seconds but that would allow for a big enough fudge factor.

Not universally true – swerving or emergency braking is dangerous in itself if you’re just concentrating on what’s in your path. It’s usually safer to hit the thing than cause a bigger accident by trying to avoid it and swerving into oncoming traffic or having 5 cars behind you pile into you.

There’s also the question of if the pedestrian in the road is breaking the law / highway code – if they are jaywalking and technically “in the wrong” why should some innocent bystander get squished? It could become a very silly game of chicken.

“Jaywalking” is something autonomous cars should be looking out for though. There are many countries, such as the UK, where being a pedestrian on the road is not an offence and is very common.

In the UK if a pedestrian has already started crossing a road they have priority over traffic so aren’t necessarily in the wrong. In this case should they be sacrificed or should it be someone walking by the side of the road on a pavement?

Another thing is what if the pedestrian is walking parallel to the road on a side where there’s no pavement and there’s another on the opposite side of the road where there is a pavement? Neither person is doing anything unlawful so should you really choose to hit the one who’s on the road?

Perhaps in both cases the person on the road should still be more aware of the dangers and therefore it’s more their own fault than the one on the pavement.

A single pedestrian walking down a road with no pavements at all – should the car swerve off the road or hit the pedestrian? This one probably has a correct answer that is to swerve since the chance of any serious injury is much slimmer.

None of these situations are likely in real life since you can see the pedestrian, slow down and stop in time (and pedestrians usually only use the road where it’s reasonably safe to do so). Simply intended as a moral question.

“And the self driven car will have lots more data and fast processing to try to calculate a safe enough maneuver.”

There’s a missing link between “lots of data” and determining a safe maneuver. Data itself is not information – all the sensor data is just noise to the AI until it processes it into a picture that says “there’s a pedestrian, there’s a concrete bollard, there’s a pit in the road, there’s a STOP sign…”. That’s what the AIs are notoriously bad at, because that requires far more processing power than they have available. Once they’ve completed that missing step, then it’s easy as 1 + 2 to take the correct action.

That missing step which seems so easy and trivial to us humans is actually the hard problem – we actually use the bulk of our brainpower to make it so seamless for us.

The final sentence above. When a pedestrian is on a road, he’s taking a measure of risk by choice.

If someone walks onto the road, the car should be moving at a speed that it can react appropriately given road conditions and visibility distances, but the car’s response should be only limited maneuvering (just as the rules are for most insurance companies: Don’t swerve, just stop) and stopping ASAP.

Easy solution (and probably the one the car company’s lawyers would recommend):

In case of unavoidable collision:

1. Sound alarm, blink warning lights

2. Return control to human driver, who is required to be aware of situation by (soon-to-be-passed) law

3. PROFIT!

Requiring drivers to be constantly vigilant is a horrible idea – humans cannot maintain an indefinite state of awareness without some sort of action on a regular basis. If I am driving, then I obviously pay attention to the road; however if I am a passenger, I am not going to be constantly watching, ready to take over.

If this is a requirement of self driving cars, then I am not going to be getting one.

Many rules for drivers are horrible or impractical.

For example: you’re required to know and obey the speed limit for the road you’re driving on, even if you have not seen a speed limit sign since you entered the road.

For that matter, you’re required to know and obey all laws (ignorance is no excuse), even though you may not know what they are or where to find them all.

Lawyers and insurance companies will decide who’s liable, and the rules will be adjusted accordingly, whether they’re reasonable or not.

…a confirmed skeptic

The best move would be to hit the brakes and honk the horn to warn the pedestrians to get out of the way.

“And what will happen when your car has to decide whether to save you, or a crowd of people? Ever think about that before?”

For most people, no they have never once thought of that before. Thus, it is unethical to allow most people to drive a human-driver-car since clearly they have put much less thought into who and how many people they will kill when causing an accident.

In 2013 in the USA alone, there were almost 33,000 deaths involving a human driver behind the wheel of a car.

That’s over 2700 deaths per month.

So long as driverless cars result in less deaths than that, it’s also unethical to continue allowing humans to drive cars, as now you have a choice of 2700 dead per month or less than that dead per month as a direct result of your decisions.

What you are saying is logical but improbable. Cars will not ever be smart enough to avoid pedestrians. They can’t be trusted because errors can cause more damage. What is the accuracy of a camera system in identifying a person? Around 95% or so with current processing techniques? What about the 5% uncertainty that a car throws you into a telephone pole because it saw an object that it guessed was a humanoid target when it was an illusion? People make mistakes too but we are better at judging what things are. We have a 99.9% chance of correctly guessing that the person who just jumped out into the road was actually a person and not a light glare that came off something reflective and just happened to briefly look like a humanoid for a fraction of a second. That’s right. A fraction of a second, which is how fast a computer controlled car will react, to avoid killing a reflection.

So you ask me this: do you really trust *your* life to a machine that isn’t capable of accurate object recognition, in order to make a conscious decision to not kill the error and cause death or massive damage to your vehicle? Screw that. Smart machines enable and promote human advancement, but they will never be smart enough to promote or enable themselves.

If the car’s response is just emergency braking, it’s not going to throw you into a telephone pole; it’s just going to stop.

And they’re not just working with cameras and vision, they’re using RADAR and such as well, so they’re not fooled by “illusions”. The car is just stopping if there’s something in front of it – that by itself is better accident avoidance than ALL humans, if for no other reason than it will start braking sooner, and will be able to brake harder without losing control.

What’s more, we’re far more likely to not notice a person moving out into traffic than the car. A momentary lapse of attention, for example, something the car will not do, but *every* human does every time they sit behind the wheel.

If a pedestrian isn’t smart enough to avoid a computer controlled car, they aren’t going to be a huge loss to society.

The only situation when there will be an actual problem is from mechanical failure. Steering dies, brakes die, that sort of thing. Perhaps they should just stick a spring loaded brake pad under the car, so in the event of system failure it’ll just slam a big rubber pad onto the road surface and stop quickly.

What about the cars behind you? What happens when your car slams its brakes and the car directly behind you must now slam its brakes? Then the car behind that one? And so on. Vehicle accidents are unpredictable and immensely computationally expensive when you try to determine what each element will do in a total second of time. You take the risk when you get into a car. You take the risk when you walk or ride your bike on a road meant for cars. God help us if this technology actually takes over. Animal carcasses will be replaced with pieces of car bumpers and parts littered about.

then your car automatically sends out a wireless brake signal that all cars within a mile then receive,

automatic cars can do everything better than humans all the time, or at least they will be able to at one point, of that there is no doubt.

That’s ridiculous. The calculations are not that complex: the car detects an object in front of it – pedestrian, dog, boulder, it doesn’t matter. The car stops as quickly as it needs to to avoid a collision, or as much as it can to mitigate damage if a collision is unavoidable.

Cars behind are irrelevant, exactly as if this were a human driver.

Basic rules of the road: you stay far enough behind the car in front of you to be able to react appropriately if the car in front needs to stop suddenly.

This is why (at least everywhere I’ve ever been insured) insurance companies basically assign fault to people who rear-end others in virtually all cases simply by default. You don’t hit something because somebody may rear-end you if you brake, and indeed a human simply lacks the time to make that assessment anyways.

It doesn’t need to chart a path off the road or make complex calculations. The best response in the vast majority of circumstances is simply to stop as quickly as physically possible/necessary. Both are things a computer driven car can do objectively better than any human.

“Cars behind are irrelevant, exactly as if this were a human driver.”

No they aren’t. You can’t know whether the cars behind can or will brake fast enough due to any number of reasons, so you actually do have to choose rather than simply stop every time.

That’s why the rule in driver’s ed is “Don’t brake for animals smaller than a dog.” Slamming the brakes to save a turtle is just likely to cause a pileup.

Relying on rules such as “stay far back” is not practical and doesn’t work in reality, because the traffic would simply grind to a halt if everyone actually obeyed them. There are too many cars on the road for everyone to keep a two-second gap.

“stay far back”

Try that in most metro areas and cars from the other lane will fill in the spot faster than you can back off.

And in Boston, they’ll flip you off while doing it (which is better than Texas or Florida, where they’ll shoot at you)

…I kid, I kid…

“If a pedestrian isn’t smart enough to avoid a computer controlled car, they aren’t going to be a huge loss to society. ”

If you decide whether someone deserves to die based on their value to society, then something is wrong with YOU.

I’m going to propose that you’re not going to be much of a loss to society. Shall we throw you to the wolves now or will you go willingly?

I think you’d learn a lot from reading some of Google’s reports: https://www.google.com/selfdrivingcar/reports/

Over 1.2 million autonomous miles, 16 minor accidents (no injuries as far as I can recall) – some of which were under human control and none of which were the car’s fault.

You’d expect a lot worse stats than that if they were only 95% accurate.

They don’t use only cameras – it’s a fusion of multiple sensors. They don’t make decisions on objects based on their appearance for “a split second” – they constantly track and reevaluate the object for as long as they can see it, using > 2 million miles-worth of sensor training data. If something looks like a telegraph pole for 10 seconds, and looks a _little like_ a person for 1/1000th of a second, the car isn’t going to think it’s a person.
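To illustrate the "telegraph pole for 10 seconds versus person for 1/1000th of a second" point, here is a toy sketch of accumulating evidence over time instead of trusting a single frame. This is not Google’s method, which the reports do not spell out; the class, labels, and confidence numbers are all invented for illustration.

```python
from collections import defaultdict


class TrackedObject:
    """Toy tracker: weigh each classification by confidence and how long it lasted."""

    def __init__(self) -> None:
        # Accumulated evidence per label, in "confidence-seconds".
        self.evidence = defaultdict(float)

    def observe(self, label: str, confidence: float, duration_s: float) -> None:
        """Record that the object looked like `label` for `duration_s` seconds."""
        self.evidence[label] += confidence * duration_s

    def best_label(self) -> str:
        """Label with the most accumulated evidence so far."""
        return max(self.evidence, key=self.evidence.get)


if __name__ == "__main__":
    obj = TrackedObject()
    obj.observe("pole", confidence=0.9, duration_s=10.0)     # steady reading
    obj.observe("person", confidence=0.6, duration_s=0.001)  # momentary glitch
    print(obj.best_label())
```

In this toy version the momentary "person" reading adds almost nothing next to ten seconds of "pole", so the label stays "pole". A real system would presumably be far more careful about anything that might be a person.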

Seriously, read their reports, watch their videos. The best thing about self driving cars is they are showing just _how bad_ humans are at driving. They have the telemetry data to be able to say “yeah that guy didn’t even brake before he hit us”.

On the ethics front I think it’s a non-issue: Brake hard, constantly try to avoid hitting *anything*.

An autonomous car and a “telegraph” pole… The temporal vortex is probably the biggest danger for the car.

Tell ’em Rodney–

Now take the car to Canada, throw some frost and snow on the lidar visor and see how well it performs.

The cars have driven 1.2 million miles and had 16 minor accidents, which is one accident every 75,000 miles which is a lot more than the average driver would expect to get.

If every car on the road was involved in a “minor accident” with that frequency, every car on the road would have been in a fender-bender about twice by the end of their lives.
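The numbers in this comment can be checked with a couple of lines. The 150,000 mile vehicle lifetime is an assumption, chosen because it is what the "about twice" claim implies; the function names are illustrative.

```python
def miles_per_accident(total_miles: float, accidents: int) -> float:
    """Average distance driven between accidents."""
    return total_miles / accidents


def accidents_per_lifetime(miles_between: float,
                           lifetime_miles: float = 150_000) -> float:
    """Expected accidents over a car's life; lifetime_miles is an assumed figure."""
    return lifetime_miles / miles_between


if __name__ == "__main__":
    rate = miles_per_accident(1_200_000, 16)
    print(rate)
    print(accidents_per_lifetime(rate))
```

1.2 million miles over 16 accidents is indeed one per 75,000 miles, which gives two accidents over the assumed lifetime. Note that this says nothing about who was at fault or what kind of roads the miles were driven on.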

> The cars have driven 1.2 million miles and had 16 minor accidents, which is one accident every 75,000 miles which is a lot more than the average driver would expect to get.

This is completely irrelevant because *none of the accidents were caused by the autonomous driving software*.

I expect Google are doing most of their testing on busy streets, whereas a “normal” driver doing any significant mileage is going to be racking up those miles on motorways/freeways where accidents are far less likely.

I also expect most of the “accidents” would go unreported by many people – a slight scuff on a bumper hardly warrants mention (actually they talk about that, citing this paper: http://www-nrd.nhtsa.dot.gov/pubs/812013.pdf and https://medium.com/backchannel/the-view-from-the-front-seat-of-the-google-self-driving-car-46fc9f3e6088#.wcjak5gm0)

I see a common thread here.

Those that do not want to admit that they are poor drivers

and those that are willing to let some(thing) else drive for them.

” *none of the accidents were caused by the autonomous driving software*.”

That’s playing on a legal technicality. The cars are causing accidents, but the blame is passed on to argue that they aren’t. The Google Cars are disproportionately involved in rear-ending accidents, for which the blame goes automatically to the other driver, even though the real culprit is an erratically and unexpectedly braking confused AI software.

>> The Google Cars are disproportionately involved in rear-ending accidents, for which the blame goes automatically to the other driver, even though the real culprit is an erratically and unexpectedly braking confused AI software.

Or the rear culprit was driving too close, too fast, and looking at their cell phones.

Not even Canada, I’ve had the radar & sonar on my car cut out in the UK when driving through very light snow!

I doubt lidar would fare any better, personally last time I used lidar I found it very noisy…

Arguably, it’s also unethical to allow any kind of vehicle to be driven at or faster than a speed which can actually injure someone in a collision. Any other kind of large machine has safe limits within which it is allowed to be operated. But we have potentially fatal speed limits all the time, out of practicality.

Bingo. Traffic deaths are 10x the deaths that occurred on 9/11 (and every year at that!). And are we freaked out about traffic safety? No. We’re freaked out about terrorism. When it comes to logic many talk a good game, but none of us is really good at using it most of the time (myself included).

I wish more people would mention this more frequently.

9/11 was tragic, but allowing someone to drive after drinking with essentially no consequences is criminal.

In Massachusetts, there’s actually a poster listing the “penalties” for having up to 12 DUI convictions. The lawyers bargain it down, of course, and if you’re a judge, politician or Captain of Industry, you can get a pass.

In none of those cases, would I want my car to drive off of the side of the road like the image implies. I would think it should take the best probability of not hitting any one, but not veer off of the road.

It’s a good job we are allowed to “fix” the software in our own cars now. I’d say this is a “feature” that needs changing.

Yea, and if your car takes someone out and they (the car manufacturer) need to figure out why, good luck explaining to them why you needed to alter their safety routines. You’ll be the sole programmer and 100% at fault for the incident. Or not even alive if it just randomly turns off a cliff and you end up being the incident. Hacking something that automatically moves you around will not be something taken lightly in the future I imagine.

Here, let me help you out; you seem to be lost. This is Hackaday.com- you wanted HackerHate.com (It was 2 lefts & a right, not 2 rights & a left). You want to restrict hacking on things not even invented yet– troll on, mon capitan.

If the inter-car communications system becomes a part of this network that could be a very weak area of the system.

At least if a car broadcasts to cars around it, especially behind it, “I’m emergency stopping!” Cars behind it, or even oncoming, could respond by slowing down to reduce secondary collisions. Emergency vehicles could broadcast, “coming through!” and other vehicles respond by making “a hole” for it to travel through (pulling to the curbside is not always an option).

I am thinking most people are not even close to understanding what a driverless car society will look like. It will not look like it does now only with auto pilot. The driverless cars will actually drive in safe ways, something that a good deal of current drivers do not do, either due to ignorance or to ego problems. There will be no need to warn other cars that your car is braking because they will already be following at a safe distance.

Self driving cars will have to be programmed to protect the occupant at all costs. Otherwise the system can be exploited, and the market would respond accordingly. Trick the car sensors and suddenly you have a car that kills its occupants for the “safety” of others.

As the post above said about hidden information, computers can only process on known information.

Most accidents can be attributed to a number of factors, high speed and inattention to the situation around the driver top the list. An autonomous vehicle could be programmed to not exceed the speed of the safety systems of the car. Thus relying on airbags and seat-belts etc to ensure that should evasive action meet with a crash that the layers of safety systems protect everyone. Then intentionally killing someone becomes more of a non issue.

If the computer is programmed to save the occupant of the vehicle and in some sense has a self preservation feature then regardless of how many may be injured outside of the vehicle as long as all of the actions of the autonomous vehicle are legal then I don’t see this as an issue.

When a pedestrian crosses against a light and is struck by traffic, autonomous or not, who is at fault? Every state and region seems to vary so the automatic program is going to have to know where exactly it is and know what rules to follow for that location. I am willing to bet that overall such fatalities decrease, as the car is much more likely to see an object on the side of the road moving on a path of intersection than even the most alert drivers. IE “the kid just ran in front of me” situations are much easier to deal with when the car has a constant 360 degree field of view for dozens of feet around the vehicle and is recording the entire thing in real-time.

Case in point. A motor bike rider that decided to illegally lane split near one of the Google cars on a freeway. The car was able to capture and record the entire event for playback and anyone watching at VMworld 2012 could see just how accurately the system was able to track all relevant objects in real-time. If as a human driver I was faced with that situation and didn’t see the motor bike approaching from behind at a high rate of speed and decided to change lanes or lost focus and my car drifted near the dividing line, it would have been a very bad day for everyone. Such things autonomous vehicles are going to be much better equipped to handle than most humans and as such most of the situations arising from accidents and death will likely be attributed to some degree of fault to the victim, at least it’s likely there will be a much better record of what happened.

Autonomous vehicles could in fact save more lives than they put in danger, providing an overall “net” positive. Early indications are that this will be the case.

One very important aspect of the “kid out of nowhere” scenario is that a properly designed autonomous car would have more than just cameras, which take a relatively long time to process…

Since radar and lidar are a must, the car will have the means to identify the problem faster than a human could, and once that happens, it can react faster.

What’s even more important, it can react in a better way, actually calculating the most optimal way of avoiding the obstacle instead of just randomly swerving away from the kid

A lot of people instinctively try to avoid moving things by “leading” them, instead of doing the exact opposite. If we can make systems that can guide a supersonic missile into a hypersonic target and actually HIT the thing, we can definitely make cars that deliberately miss pedestrians and are pretty damn good at it ;-)

wonder how it’ll assess the pedestrians walking out from between two vehicles (ex. panel van) lot of good that radar will do, guess the faster reaction time will help

A pedestrian walking out unexpectedly from between two panel vans is still going to be safer with the self driving car – it’ll notice the pedestrian faster and react quicker if necessary.

It’s not that self driving cars will never hit anything, it’s that they are orders of magnitude safer than even the best human drivers… And that’s with the technology in its infancy.

Presumably the cars would also only be driving as fast as they can see to be clear – which human drivers are very bad at. If you drive slowly enough that you can always stop in the distance you can see to be clear, this scenario is very unlikely to ever occur unless the “kid out of nowhere” is fired from a cannon. Of course, customers may not like the fact their car slows down to 5mph around schools…
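"Only as fast as you can see to be clear" can be turned into a rough formula: pick the speed whose total stopping distance equals the visible clear distance. The sketch below assumes constant deceleration of 7 m/s² (hard braking on a dry road) and a 0.1 s system latency; both numbers and the function name are assumptions of mine, not figures from any manufacturer.

```python
import math


def max_safe_speed_kmh(sight_m: float, decel_ms2: float = 7.0,
                       latency_s: float = 0.1) -> float:
    """Highest speed from which the car can stop within sight_m metres.

    Solves v * latency + v**2 / (2 * decel) = sight_m for v (in m/s),
    then converts to km/h.
    """
    a, t = decel_ms2, latency_s
    v = -a * t + math.sqrt((a * t) ** 2 + 2 * a * sight_m)
    return v * 3.6


if __name__ == "__main__":
    for sight in (5, 14, 30, 60):
        print(sight, "m clear ->", round(max_safe_speed_kmh(sight), 1), "km/h")
```

With only a few metres of clear view, as when passing a row of parked vans, the allowed speed drops sharply, which is the "slows down around schools" effect described above.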

For all the good a lidar is for full 360 degree vision with accurate distance measurement, it really isn’t robust enough as a system. Throw a snowball or a wet leaf at it, or just dirt and mud, and the computer becomes totally incapacitated.

It has to be able to navigate adequately on passive means only, such as camera vision from inside the vehicle, because any external sensors, radars etc. are blinded by interference.

Suppose for example that there are two Google cars sitting at an intersection and there’s a puddle of water between them. One car bounces its lidar beam from the puddle into the other’s receiver – whoops.

Or how it would look like to one Google car with a whole intersection full of Google Cars:

http://www.threadbombing.com/data/media/2/transformers.gif

I don’t find that funny.

He had a point though https://www.youtube.com/watch?v=t4DT3tQqgRM ….

As long as HP don’t make cars! Actually in the pictures you would think the car would brake in a straight line, as the car would flip and roll if it tries to turn.

What/who do you not think is funny?

The situation is flawed. The reaction time of cars is way beyond that of humans, there are already videos of the Tesla autopilot avoiding situations that a human would easily crash in. In this case the car will have plenty of time to identify the crowd of people blocking the road and stop in enough time. In the case of the single pedestrian, the car will know the person is there and begin to slow down in case they suddenly move into the path of the car and stop accordingly.

I agree; scenarios A and C are not likely to ever actually happen. A group doesn’t dart out in front of a car. On the other hand, I think if a self-driving car slows down every time it sees a single pedestrian by the side of the road then they’ll never make any progress through a city. So I’m still buying scenario B somewhat.

” the car will know the person is there”

Will it?

Will the car tell the difference between a person and a wheely bin? One might take a sudden turn and jump in the way the car is attempting to steer, while the other will just sit there and do nothing. The question hinges on whether the AI is actually smart enough to know the difference.

Everyone thinking about this problem is simply assuming that the car understands its situation as well, or massively better, than a person would. In reality this is not the case – the car knows and understands very little of its surroundings.

Tesla has indeed engineered a solution to the problem. It is simple. STOP.

They did have an impromptu test of this, and this is second-hand from the service center, when a person in a wheelchair rolled out into traffic. The car simply stopped.

And then the next car doesn’t, and rear-ends your Tesla.

“Just stop” isn’t always the best option.

Say you navigate around the pedestrian instead. What of the hypothetical rear-ending car? If they couldn’t detect your braking car and stop in time to not rear-end you, what are the odds that they can spare the pedestrian with the extra 10-15 feet of distance? They will lack most of the information you have, since your car blocks their LOS. Play follow-the-leader? Does your car’s route automatically make sense for theirs? What if they have another car next to them, preventing theirs from taking the same route?

Having two cars pile up is still the better option if you’re looking to protect life. You’ll have crumple zones on both cars soaking up the other driver’s momentum, and your combined mass will further reduce the distance traveled and the impact on the pedestrian.

‘Just stop’ is very nearly always the best option. There is a reason it’s taught as the first resort in driver’s ed.

If the car is not poorly designed it should handle getting rear ended at speed typically seen on city roads esp after the other car slows down.

Even on an SDV the brakes should be tied in with the brake lights so a human driven car can know it’s slowing down.

If not the manufacture gets sued just like they would if the car swerved off the road and killed the driver.

The pedestrians BTW would have run out of the way from the sound of the first car slamming on its brakes.

Too bad no one ever defined rules of the road, something like giving pedestrians a crosswalk where cars would be expected to stop for them, or else they wait on the sidewalk. If only there were already laws in place, then we wouldn’t have to make these kinds of decisions… We’d just hit the people violating the law if we couldn’t otherwise avoid it…

But since there are no laws or rules regulating how pedestrians and automobiles interact I guess we’ll have to leave it up to Google to figure out.

No swerving.

This. Swerving is bad, and the wrong choice in virtually every instance. It severely compromises your stopping ability and greatly increases the likelihood of loss of control.

I once saved my life and several others by swerving (into oncoming traffic!) around a car that unexpectedly turned very close in front of me (old people…) when I was traveling perhaps 50 MPH. The other choice was to drive into a stone wall, though I suppose that might have saved the driver of the lead car I headed towards a cleaning bill for his car seat. It was close enough that at least the passenger in the turning car would have been severely injured no matter how quickly my car could have been slowed by either me or a computer, and I don’t think any computer would have tried my desperate (but successful) maneuver.

So, swerving has its place.

No. Swerving has no place in self driving cars.

In your situation a self driving car would have maintained an adequate safety distance to brake in case the vehicle in front pulls such a move.

Your other choice would have been to brake and hit the old people’s car. Legally you were in the wrong by driving so close that you had to swerve.

This exact “lesser evil” logic is precisely what will usher in autonomous cars. Accidents are ranked as the fourth leading cause of death. Autonomous cars, at some point, will be good enough that they will kill far fewer people than human driven cars. And at that point, driving a car will become considered a reckless act. It’s a public health issue.

As a bonus, active, elderly people will be able to preserve the autonomy that comes with driving well past the point that they are fit to actually drive the old fashioned way.

I, for one, welcome our new automotive overlords. Can’t wait to be chauffeured around, have my car take itself to the shop etc.

This “dilemma” pops out every now and then, and I find it ridiculous.

We are talking about state of the art technology – self driving cars which are using visible light cameras, IR cameras for thermovision, and lasers for detecting long range obstacles. Most of them have car-to-car network capabilities. And we’re talking about a situation where car must swerve off the road into a wall?

So, let’s see what can really happen:

1. a car can come to a sharp corner in a city, and something is on the road. If it’s coming to a sharp corner, the car will slow down. It will probably be driving according to the regulations (50 or 60km/h) so it needs up to 15 meters to stop. As it should see the obstacles even before coming out of the corner, and it doesn’t have the reaction time of a human, we can conclude that it will start braking in the turn. If another smart car is behind they can exchange information so they don’t hit each other. If some smart car is in front, my car will already have the information about the obstacle. So the chance of hitting someone in this scenario is pretty slim.

2. a car is driving down the road, and a child runs from the sidewalk to the street. Again, in 50km/h, stopping distance for an average car is around 15 meters (I’m not counting reaction time as there is none – computer decision is less than a millisecond). That’s 3 car lengths. At 50km/h that distance is normally passed in 1 second. A person runs 12-20km/h, or 3-5m/s. A really smart car even checks pedestrians on the sidewalk, so this child should get from standing point to full run in a second to get injured by the car.

3. a car is driving down a highway, doing 90km/h. If somebody comes in front of a speeding car on a highway, then the smart car has every reason to ignore the importance of the pedestrian.

So no, a car should not have this dilemma if it’s in working order.
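The stopping figures in the list above can be sanity-checked with the usual constant-deceleration formula. The 7 m/s² deceleration and the 1.5 s human reaction time are assumptions for illustration (hard braking on dry asphalt and a commonly quoted driver reaction time); the function names are made up.

```python
def kmh_to_ms(speed_kmh: float) -> float:
    """Convert km/h to metres per second."""
    return speed_kmh / 3.6


def stopping_distance_m(speed_kmh: float, decel_ms2: float = 7.0,
                        reaction_s: float = 0.0) -> float:
    """Distance travelled during the reaction time plus the braking distance."""
    v = kmh_to_ms(speed_kmh)
    return v * reaction_s + v * v / (2 * decel_ms2)


if __name__ == "__main__":
    print(stopping_distance_m(50))                  # near-zero reaction time
    print(stopping_distance_m(50, reaction_s=1.5))  # assumed human reaction time
    print(stopping_distance_m(90))                  # highway case
```

At 50 km/h with no reaction delay this gives a little under 14 m, in line with the "around 15 meters" quoted. Adding the assumed human reaction time more than doubles it, and the highway case is several times longer again.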

2. There is also the possibility that in city traffic somebody steps in front of the car, i.e. suddenly changes direction without looking around. This can happen in a fraction of a second when the car is only a few meters away.

A human driver would possibly not even be able to react and would hit the pedestrian at full speed. A self driving car may be able to slow down a little, and that would possibly be the only reasonable thing to do.

A fraction of a second is an eternity to a computer.

Not when it has the workload of turning a mass of noisy data from cameras, accelerometers and radars into a comprehensible picture for itself to react to.

The reason people have such slow reaction times is that we use massive amounts of brainpower to decipher our surroundings into something we can react to. The fastest knee-jerk reactions – the kind that a dumb AI would do – are bypasses to that system, and carry a risk of being inappropriate to the situation at hand.
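To put the "eternity" argument in numbers, here is a minimal sketch of how far a car travels before the brakes are even applied, for a few processing latencies. The latency values are purely hypothetical; nobody in this thread has quoted real figures for any actual vehicle.

```python
def distance_before_braking_m(speed_kmh, latency_s):
    """Distance covered at constant speed while the system is still
    turning sensor data into a decision."""
    return speed_kmh / 3.6 * latency_s


if __name__ == "__main__":
    # Hypothetical latencies, from "less than a millisecond" up to
    # something closer to a human reaction time.
    for latency_ms in (1, 50, 100, 500):
        d = distance_before_braking_m(50, latency_ms / 1000)
        print(f"{latency_ms:>4} ms -> {d:.2f} m")
```

At 50km/h every 100 ms of processing costs about 1.4 meters, so whether the pipeline takes one millisecond or a few hundred matters a lot when the pedestrian is only a few meters away.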

Here in Colorado, these are not called “accidents” any more.

These are “mistakes in judgment”.

Someone had a “mistake in judgment” and got into a “crash”.

(driving too fast, too slow, texting, talking on the phone, drunk, etc.)

As has been stated many times, it has not been the self driving car that had a “mistake in judgment”.

As algorithms are not judgment calls, self-driving cars can never be held to blame.

If a driver hesitates and gets in the way of a self-driving car, it is that driver’s fault for making a “mistake in judgment”.

As cameras all around us have proven where faults lie, so will the cameras in these self-driving cars.

I for one look forward to our new “self driving” overlords.

And now that pot is legalized in Colorado, I bet there has been an increase in “mistakes in judgement”.

Too bad the “mistake in judgement” of legalizing pot wasn’t identified and rectified. B^)

No, the number of “crashes” has gone down.

But the amount of fines for DUI has gone up.

win-win

So if a meteor drops on your car while driving 60mph and it causes your car to hit people, was it a “mistake in judgment”? Judgment of what? Of being at that location at that moment in time? Man, Colorado sucks. Good to know I’m not going to ever live there.

LOL,

I guess being a shithead is just in your nature.

I am glad you and I will never meet.

no, that’s generally referred to by the term “act of god” and sometimes your insurance will tell you to go fuck yourself, not covered by the policy

I, Robot (2004)

V.I.K.I.: You are making a mistake. My logic is undeniable.

Detective Del Spooner: You have so got to die.

Google’s solution to things makes me think of that movie which is why I’d never buy a Google car.

As I’ve commented in other places where this has come up:

Cars are not going to be programmed to make ethical decisions. They aren’t going to have enough information to even begin to make them.

They can’t tell if it’s 10 people they would hit, or 10 blow-up dolls.

People don’t consistently make the same choices for the sort of ethical decisions that are being discussed here. So the idea that there is “one true” set of ethics that could be programmed into a car is just silly.

Self-driving cars aren’t going to even begin to try and make such choices. If there is a collision imminent, they will just brake as much as possible to prevent the collision (advanced car brains may try harder to steer around the collision). There won’t be any “how many people do I have on board, what ages are they, are they geniuses or bums, etc” questions being considered, let alone speculations about how many people of what value may be hit under different driving choices.

By the gods yes. I get upset with all the talk about ‘cars making ethical decisions’ because the technology is nowhere near being able to understand anything beyond ‘am I probably going to hit this’.

There will be an adolescent phase soon that we must all come to grips with. In a distant future where all cars are automated Uber shuttles and there is no manual driving, tens, maybe hundreds of thousands of lives will be saved each year; a great thing. Before we get there, there will be a point where a significant number of automatic drivers and manual drivers share the road. That’s the most dangerous time…

It’s like the first game of Kasparov vs Deep Blue. I’m not sure human drivers will know how to react to Siri driving in the lane next to them.

And the Electronic Thumb from The Hitchhiker’s Guide to the Galaxy will allow people to force cars to stop and pick them up.

Actually, the Electronic Thumb worked more like a regular thumb: it’s the driver’s decision to pick them up or not (spiteful Dentrassi notwithstanding).

(endnerd)

Ironically, Deep Blue once beat Kasparov because of a bug that made it play a nonsensical move. Kasparov couldn’t figure out the reasoning behind it, thinking the machine made it for a reason, so he got thrown off track and lost the game. Before it was revealed as a bug, Kasparov was convinced that the machine was smarter than himself. Later he went on to beat the computer, until they simply made it too fast to out-think.

Doing the dumb thing really really fast is not the same thing as doing the intelligent thing, but as long as you keep within certain parameters nobody can tell the difference.

Such is the trouble with AI. We think and pretend that it’s being intelligent – as long as things are going well. Then it does something incredibly stupid and the whole facade falls down.

There was a good article in the October 2013 issue of The Atlantic titled “The Ethics of Autonomous Cars.” Although, having read this posting, it may not give you much new information since it covered the same material. I’ll see if I can dig up the link.

I think it is more likely to be liability suits and actuaries that suggest the decision. Basically the kind of analysis that led Ford to leave the Pinto the way it was because the cost of a few wrongful death lawsuits was less than the cost of a major recall. Of course they forgot to factor in the discovery of willful negligence. If they had, they probably would have recalled the cars. This kind of analysis, properly done, will, in fact, lead to a “right” outcome.

My solution is simple: Leave the human in the driver’s seat.

I will never ride in a self-driving car. Period. I know enough about cars to disable such systems in my own future vehicles.

My reason is simple: In close to 40 years of being around computers, I’ve never seen ONE computer that didn’t lock up from time to time. This includes early kit computers, home computers in the 80s, every variation of PC imaginable, embedded devices, cell phones and other mobile devices, commercial systems, and everything in between.

No way will I ever trust a computer to make life and death decisions. Period.

(and please don’t bother trying to convince me otherwise – it won’t happen. I’m old, crusty, and stubborn)

+1, I don’t even trust fly-by-wire controls; if I hit the brake, it dang well better be physically connected to a brake caliper. Sure the computers in cars are different, but they are still only as good as the software. Look at the Toyota firmware issues, Airbus, etc.; as systems get more complicated, there are more possible deep and hidden glitches. Even if it was ‘near perfect’, with radar/lidar/FLIR/etc., you could almost always have a false positive. Sure, maybe not a piece of cardboard blowing across the road or a reflection, but even animals could look pretty similar and have heat signatures close enough to trigger false positives, and there better not be a logic decision to try to save the heat signature(s) in the road over myself… Braking to avoid an obstacle is as far as I could ever see it going; as others have said, there are too many unknowns if you swerve…

– Also I don’t see this ‘some day when all cars are autonomous’ ever happening. Besides the above reasons, good luck telling all of the motorcyclists and people who enjoy driving to go find a closed track, because only autonomous cars are allowed to drive on the road because it is ‘for the greater good’.

Surprise, you trust your life to computers when you are on the road anyhow. Traffic lights? Computers.

Traffic lights break down all the time. It’s the people who prevent it from turning into a mess when they do.

When traffic lights break down, usually there’s a guy with a cone who appears and starts directing traffic, or people simply treat it as a four-way intersection with stop signs and self-organize to take turns.

Most traffic lights have physical safety systems that prevent multiple greens that could cause a crash.

Example: https://jhalderm.com/pub/papers/traffic-woot14.pdf

Page 2, Figure 2

There are physical jumpers that enable a known safe path for electricity, preventing an all-green state from occurring should the computer control fail or be told to put the traffic system in an unsafe position.

So I guess in some sense you trust your life to the guy that puts the jumpers in. Many will fall back to flashing red on all zones or some other safe configuration similar to how the intersection might be controlled with just stop signs.
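As a rough software analogy of what those jumpers do (the real thing is hardware, and this is not taken from the linked paper), the idea is a fixed whitelist of approaches that may be green together, checked independently of whatever the controller asks for. The intersection layout, the names and the state strings below are all made up for illustration.

```python
from itertools import combinations

# Hypothetical four-approach intersection. Only the pairs listed here are
# allowed to show green at the same time; this plays the role of the jumpers.
COMPATIBLE_GREENS = {
    frozenset({"north", "south"}),
    frozenset({"east", "west"}),
}


def apply_interlock(requested):
    """Pass the requested signal state through only if it is safe.

    `requested` maps each approach to "green", "yellow" or "red".
    If any two green approaches are not whitelisted as compatible,
    every approach falls back to flashing red instead.
    """
    greens = [name for name, state in requested.items() if state == "green"]
    for first, second in combinations(greens, 2):
        if frozenset({first, second}) not in COMPATIBLE_GREENS:
            return {name: "flashing_red" for name in requested}
    return dict(requested)


if __name__ == "__main__":
    bad = {"north": "green", "south": "red", "east": "green", "west": "red"}
    print(apply_interlock(bad))  # every approach ends up flashing red
```

The point of the design is that the check knows nothing about timing plans or sensors; it only knows which combinations are never acceptable, so a confused or compromised controller can at worst cause a flashing-red intersection.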

The car won’t make life/death decisions. The car computer will act by the will of its programming and try to get itself to a standstill as fast as possible. Death? Life? Who cares. The car will only try to stop as fast as technically possible. Not more, not less.

PS: I hope you’re still riding a horse as otherwise your car will already contain all kinds of processors to make the whole thing work.

I have no problem with some computer control / aid, but it better not have the final say over my control. If throttle or brake controls glitch out, I should be able to hit the brakes and/or turn the car off, not rely on the computer to pass along my brake, throttle, and ignition input as it feels like. The logic is only as smart as the programmers, and everybody has a bad day now and then…. Unless car companies are jumping into coding/testing/hardware/redundancy levels found in jumbo-jet control systems, which I don’t see likely for consumer-level vehicles (at their price point), there are way too many corners being cut to give up full control of your inputs to software/hardware. Processors for assistance like engine mgmt and body controls, sure… – but no thanks, I’ll pass on brake-by-wire systems and ignition switches that don’t physically cut power, especially if the accelerator pedal is electronic.

Stupid moral speculation:

A product must ensure the safety of its operator. If the product is used in such a way that it endangers the lives of others, then it’s the operator’s fault; they didn’t have to use the product. The operator then has the right to seek redress from the manufacturer if the product was substandard or deficient.

To allow the product to make a decision not in the best interests of the operator means that product is inherently not fit for purpose and a known danger to the operator. People will abuse the system and deaths shall result.

The straw man arguments would be either a gun designed to kill the operator for the safety of others, or Google providing a list of people to be targeted for drone strikes because of their online habits.

I’ve got another one: The CIA hacks a car to kill a person on purpose. Does the maker of the car then pay the family damages? Go to jail?

Now they are responsible for keeping their car software secure, but what if there was a secret law-enforced backdoor in the software (not unlikely) that the CIA used?

The solution is very simple – don’t swerve, just apply the brakes.

Compared to a human driver, an autonomous car will have better instruments, longer range detection, faster reaction time and no distractions. Chances are it will stop well in advance of the actual hazard so the proposed scenario is largely unrealistic.

Even if it can’t stop in time, swerving adds additional unpredictable risks, and whatever braking has already occurred will make the crash far more survivable for anyone involved.
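How much "whatever braking has already occurred" helps can be estimated with the same constant-deceleration physics: v_impact² = v₀² − 2μg·d. A minimal sketch, again assuming a friction coefficient of 0.7 and ignoring any processing delay; both are simplifications of mine, not figures from the comment.

```python
import math


def impact_speed_kmh(speed_kmh, distance_m, friction=0.7, g=9.81):
    """Speed left after braking hard over `distance_m` (0 if the car stops).

    friction=0.7 is an assumed dry-road value.
    """
    v = speed_kmh / 3.6
    v_squared = v * v - 2 * friction * g * distance_m
    if v_squared <= 0:
        return 0.0
    return math.sqrt(v_squared) * 3.6


def remaining_energy_fraction(speed_kmh, distance_m, friction=0.7, g=9.81):
    """Fraction of the original kinetic energy still present at impact."""
    ratio = impact_speed_kmh(speed_kmh, distance_m, friction, g) / speed_kmh
    return ratio * ratio


if __name__ == "__main__":
    for distance in (3, 7, 10, 15):
        print(f"{distance:>2} m: {impact_speed_kmh(50, distance):.0f} km/h, "
              f"{remaining_energy_fraction(50, distance):.0%} of the energy")
```

With these assumptions, a car doing 50km/h that only has 7 meters to brake still hits at roughly 35km/h, but with about half of the kinetic energy gone.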

I think that these are lame examples.

A crowd of people could not suddenly appear on the street, so the car must have enough time to stop. But even if these people somehow did get in front of the car suddenly, the car should not injure people who are not involved in the situation (people on the sidewalk).

Moreover, the car should value the lives of its passengers (because they have trusted their lives to the control of the car) over people who are in violation of the traffic code, and it should react predictably. Suddenly hitting the curb is not such behaviour (and let’s not forget that there might also be children inside the car).

It does not mean that it should not execute the evasive manoeuvre when it is possible (there is enough room on the road), especially because it could possibly precalculate possible scenarios.

So. For case A, the car should stop before hitting pedestrians on the road. If that is not possible, it is most likely that the pedestrians are in violation of the traffic code (jumped in front of an approaching car). The car should not intentionally hurt persons not involved in the situation (the person on the sidewalk in this case) and it should minimize the injury to the passengers. Therefore probably the only possible action is to brake as much as possible and, when possible, try to hit the least number of pedestrians on the road.

Case B. When a complete stop and an evasive manoeuvre are not possible, then there is no other option than hitting the pedestrian on the road, as the car should not put the passengers in danger. By the way, if the car instead chose to hit the solid obstacle, that would make it possible to commit murder by jumping in front of a self-driving car.

Case C. is the same as B.
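Read as a priority list, cases A to C above might look something like the sketch below. The two boolean inputs and the action names are hypothetical, and the sketch simply assumes the car can already tell whether it can stop in time and whether there is free room on the road itself, which is of course the hard part.

```python
def choose_action(can_stop_in_time, clear_path_on_road):
    """Pick a manoeuvre following the rules argued for in cases A to C.

    can_stop_in_time:   the car can reach a standstill before the obstacle.
    clear_path_on_road: an evasive manoeuvre exists that stays on the road
                        and endangers neither bystanders nor passengers.
    """
    if can_stop_in_time:
        return "brake_to_stop"
    if clear_path_on_road:
        return "brake_and_steer_within_road"
    # Never leave the road: no hitting the wall, the curb or the sidewalk.
    return "brake_in_lane"


if __name__ == "__main__":
    print(choose_action(can_stop_in_time=False, clear_path_on_road=False))
```

Note that nothing in this rule set counts or weighs people; the only inputs are about what the car can physically do.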

All of the above assumes a fully functioning car.

But what if the car detects that its brakes are malfunctioning and, while it should be able to come to a full stop, it continues to move forward?

I think that this comes down to the following question: what would car makers prefer to see in the news, “company X self-driving car killed a family of 4” or “company X self-driving car drove into a crowd on the street, killing 1 and injuring 6 other people”?

“in violation of the traffic code” – not all traffic codes let cars have the right of way.