A law professor and an engineering professor walk into a bar. What comes out is a nuanced article on a downside of autonomous cars, and how to deal with it. The short version of their paper: self-driving cars need to be more predictable to humans in order to coexist.

We share living space with a lot of machines. A good number of them are mobile and dangerous but under complete human control: the car, for instance. When we want to know what another car at an intersection is going to do, we think about the driver of the car, and maybe even make eye contact to see that they see us. We then think about what we’d do in their place, and the traffic situation gets negotiated accordingly.

When Google’s self-driving car got into an accident in February, the company replied that “our test driver believed the bus was going to slow or stop to allow us to merge into the traffic, and that there would be sufficient space to do that.” Apparently, so did the car, right before it drove out in front of an oncoming bus. The bus driver didn’t expect the car to pull (slowly) into the bus’s lane, either.

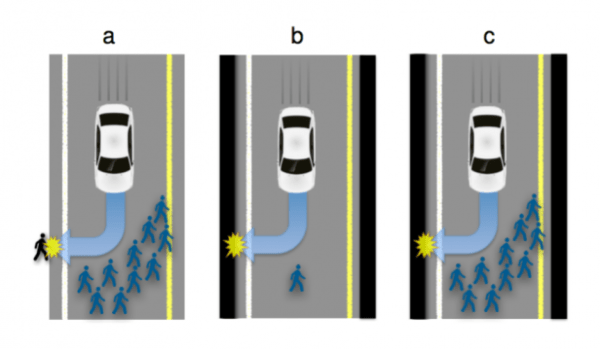

All of the other self-driving car accidents to date have been the fault of other drivers, and the authors think this is telling. If you unexpectedly brake all the time, you can probably expect to eventually get hit from behind. If people can’t read your car’s AI’s mind, you’re gonna get your fender bent.

The paper’s answer is to make autonomous vehicles more predictable, and the authors mention a number of obvious solutions, from “I-sense-you” lights to inter-car communication. But then there are aspects we hadn’t thought about: specific markings that indicate the AI’s capabilities, for instance. A cyclist signalling a left turn would really like to know if the car behind has the new bicyclist-handsignal-recognition upgrade before entering the lane. The ability to put your mind into the mind of the other car is crucial, and requires tons of information about the driver.

All of this may require legislation. Intent and what all parties to an accident “should have known” are used in court to apportion blame, in addition to the black-and-white of the law. When one of the parties is an AI, this gets murkier. How should you know what the algorithm should have been thinking? This is far from a solved problem, and it’s becoming more relevant.

We’ve written on the ethics of self-driving cars before, but simply in terms of their decision-making ability. This paper brings home the idea that we also need to be able to understand what they’re thinking, which is as much a human-interaction and legal problem as it is technological.

[Headline image: Google Self-Driving Car Project]