Speech generation and recognition have come a long way. It wasn’t that long ago that we were in a breakfast place and endured 30 minutes of a teenaged girl screaming “CALL JUSTIN TAYLOR!” into her phone repeatedly, with no results. Now speech on phones is good enough you might never use the keyboard unless you want privacy. Every time we ask Google or Siri a question and get an answer it makes us feel like we are living in Star Trek.

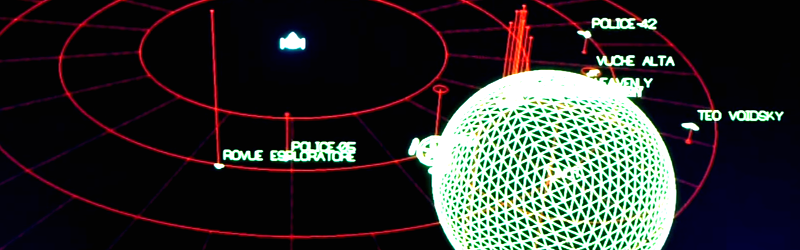

[Smcameron] probably feels the same way. He’s been working on a Star Trek-inspired bridge simulator called “Space Nerds in Space” for some time. He decided to test out the current state of Linux speech support by adding speech commands and response to it. You can see the results in the video below.

For speech output, he used pico2wave and espeak. There’s also Festival, but he couldn’t get that one working. He also used PocketSphinx for speech recognition and provides information on how to pretrain the system for words to make it respond better. In the video, you can see that it isn’t perfect, but it is pretty good.

The other part of the equation is recognizing natural language and [Smcameron] discusses that, as well. If this were a real starship, you might need to do a little work on the user interface to be sure you heard the right thing before taking some drastic action (“I said: ‘blow warp drive’ not ‘go warp five’!”).

If your system isn’t as powerful as a full Linux box, consider uSpeech for the Arduino. You might also check out Jasper.

Fascinating…

Indeed

I love the “kitchen sink” approach that Stephen has decided to take with this project. I admit that I kinda miss the primitive wireframe graphics that the game used in early stages, but it’s fun to watch unusual and unique features make it into production.

Good to see the state of the open source art…it is coming along

not ready for prime time, or my time for that matter, just yet

Speech-recognitionwise, that was less than impressive.

Sorry to hear that you are disappointed, would you like your money back?

[https://youtube.com/watch?v=BF1wVv8OnfE]

Life is a lemon…

i love humor in code, lolled when i saw this in the server main()

take_your_locale_and_shove_it();

Ha. smcameron here. This was to work around a problem I ran into reading, iirc, ASCII Wavefront OBJ files. Those files contain floats. I was parsing them with sscanf. sscanf expects different things for floats depending on locale, sometimes periods for decimals, sometimes commas, which is kind of crazy since the data files I am reading come with the program, and I really don’t want per-locale files. This is a starship simulator, we’re post-national here, right? No problem, though, just set the locale with setlocale() at the beginning of your program, right? Well, no, because various libraries seem to keep calling setlocale() (many, many times) and undoing your setlocale() (I’m looking at you, gtk). I got fed up with this nonsense. Why is sscanf behaving so stupidly anyway? Why is gtk behaving so stupidly? Fine, children, you want to call setlocale? Fine. So I override it once and for all, and anybody who calls setlocale will get a locale for sure, and that locale will be “C”, and all will be right with the world.
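For anyone who hasn’t been bitten by this yet, here is a minimal sketch of the simpler first step described above: pin number parsing to the “C” locale, then read a vertex line with sscanf. The helper names `use_c_numeric_locale` and `parse_obj_vertex` are made up for illustration and are not taken from the Space Nerds in Space source. This sketch does not include the setlocale() override itself, so a library that calls setlocale() afterwards could still undo it, which is exactly the problem described.

```c
#include <assert.h>
#include <locale.h>
#include <stdio.h>

/* Hypothetical helper: pin number formatting to the "C" locale so that
 * sscanf() treats '.' as the decimal separator. Any later setlocale()
 * call made by a library can change this again. */
void use_c_numeric_locale(void)
{
	setlocale(LC_NUMERIC, "C");
}

/* Hypothetical helper: parse one Wavefront OBJ vertex line such as
 * "v 1.5 -2.25 3.0". Returns 1 if three floats were read, 0 otherwise. */
int parse_obj_vertex(const char *line, float *x, float *y, float *z)
{
	assert(line && x && y && z);
	return sscanf(line, "v %f %f %f", x, y, z) == 3;
}
```

Call `use_c_numeric_locale()` once at startup, before any parsing. In a comma-decimal locale the same sscanf call would stop at the period in `1.5`, which is why the locale has to be pinned first.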

oh i’ve been on that ride before, that’s why i immediately lolled. Thanks for replying, you have a really cool project going on, i hope you continue to develop it. I was looking over the server code after reading the contribution.md, and i would love to contribute at some point, i might join up later.

lots of treasures in smcameron’s repositories, like this procedural spaceship generator that uses OpenScad

https://www.youtube.com/watch?v=VnyerXljmrQ

Shameless plug, we (some guys at Ultimaker) built our own Star Trek-like bridge simulator as well. It’s FOSS, and can be found at: http://emptyepsilon.org/ and we suck at putting movies online :-)

It’s 2 years in the making. And, it has an HTTP API, which can be used for all kinds of things.

I tried the same thing as SNIS did, voice control, but with the Google Chrome voice API, as the HTTP API is easy to access from JavaScript. However, I’m not sure if it’s my Dutch accent, but I found the voice recognition results to be quite bad.

Don’t feel bad. Chekov had the same issues.

Voice recognition running on a local machine is a joke.

I tried it 15 years ago, then again last year. Almost no difference. Unusable for a real world application.

Cloud processing for voice recognition is a little better, but still unreliable.

So, for the moment, I am stuck with touch screens everywhere. Oh, how I miss physical buttons…

But there is hope with Soli: https://www.youtube.com/watch?v=0QNiZfSsPc0

:o)

Yes that project was amazing, what happened to it?

As for local voice recognition it works much better if there is a limited vocabulary and the system has been trained/biased accordingly.

My biggest problem with voice is that the environment here is sometimes very noisy and chaotic with machines and multiple people. It is even hard to have a phone conversation at times. So I agree sophisticated gesture input would be very nice, and I expect that complex hand signatures could serve as a form of password too. Just sign your name in mid air, or whatever.

It appears to still be demo-ing: https://www.cnet.com/videos/project-soli-the-best-thing-we-saw-at-google-io-2016/

My keyboard never misunderstands me.

My kybard constnatly misiunderstands me…

May speak wreck a nation his tear able. Eye knead two train hit butter.

Simply use local speech recognition to trigger the device, like saying Hello Siri, Okay Google, or Hello Jane, to activate the cloud-based speech recognition. That way you can have your own always-listening speech recognition system.

Of course once you have the speech to text you might want to use something like https://adapt.mycroft.ai/ or https://opennlp.apache.org/ and maybe throw in http://docs.opencv.org/2.4/modules/contrib/doc/facerec/facerec_tutorial.html

just for fun.

Commercial products work quite well, especially when trained, and I’m far from a native English speaker.

Just tried the standard OSX mt-lion ASR on the content of the video, via internal mic, works almost better (“warp” isn’t a word), than the video itself !

The big trouble for open source is the lack of enough voice profiles, and that is where cloud systems win, because with each sentence recognized their voice profiles grow. But privacy…

What I hope for is a system that can talk and sound like me, so I could loop its output with the original speech to get way better recognition. Or spam Google, Siri… with boring cooking recipes or Marxist books, between my personal requests.

exactly, it’s all about the training. I’m actually sort of surprised there isn’t a proper open source ASR “cloud” option yet. Of course I say that as a person who would USE it, not MAINTAIN it.. so yeah

A GOOD headset is a must too. About open source -> get involved: http://www.voxforge.org/home

So how does the cloud recognition work? Some guys in India listening to what you say? =ducks =

No, they’re in Virginia.

ROFL :)

“Every time we ask Google or Siri a question and get an answer it makes us feel like we are living in Star Trek.”

I think there is a episode in the original series where they go in a parallel universe where everybody is a fascist mean duplicate.

That’s probably the place where they would have Google/Siri/Cortana then.

Try again, in English.

“Every time we ask Google or Siri a question and get an answer it makes us feel like we are living in Star Trek.”

Creo que hay un episodio de la serie original donde van en un universo paralelo donde todo el mundo es un medio fascista duplicado.

Eso es probablemente el lugar donde tendrían que Google / Siri / Cortana a continuación.

Spanish gibberish is still gibberish.

If you are unable to read plain English perhaps some type of professional help like a teacher can aid you.

Perhaps that is also better than to complain to others about your inabilities.

If you are unable to write plain English perhaps some type of professional help like a teacher can aid you.

Perhaps that is also better than to inflict onto others your inabilities.

This troll is purple.

Addendum:

I’m just trying a type of English you might mean. If it’s another type you need please use an online translator yourself.

Complaints about it to complaints@google.com please, thanks.