Although there are a few robots on the market that can make life a bit easier, plenty of them run closed-source software or require smartphone apps that may phone home, sending an unknown amount of data from the user’s LAN back to some unknown server. Many people will block off Internet access for these types of devices, if they buy them at all, but that can restrict the abilities of the robots in some situations. [Max]’s robot vacuum has this problem, but he was able to keep it offline while retaining its functionality by using an interesting approach.

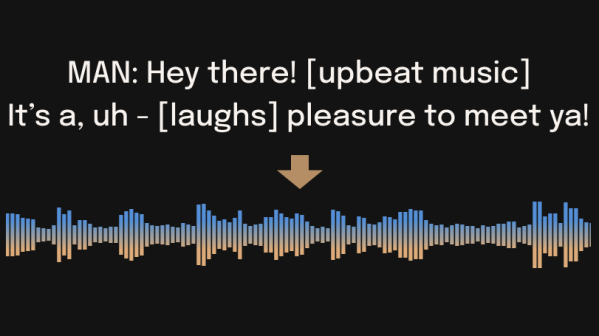

Home Assistant, a popular open source home automation system, has a few options for receiving voice commands, and it can also be set up to issue voice commands itself. This robotic vacuum accepts voice commands in lieu of commands from its proprietary smartphone app, so to bypass the app [Max] set up a system of automations in Home Assistant that commands the robot by voice. His software, called jacadi and written in Go, uses text-to-speech to speak commands to the vacuum through a USB speaker, keeping it usable while still offline.
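
To make the idea concrete, here is a minimal sketch in Go of turning a short command name into a spoken phrase. This is not jacadi's actual code. The command names and phrases are hypothetical placeholders, and using `espeak-ng` as the text-to-speech program (with the USB speaker as the default audio output) is an assumption.

```go
package main

import (
	"fmt"
	"os"
	"os/exec"
)

// phrases maps short command names to the sentence spoken at the vacuum.
// Both the names and the wording are hypothetical; a real vacuum expects
// its own wake word and phrasing.
var phrases = map[string]string{
	"start": "Hello robot, start cleaning",
	"stop":  "Hello robot, stop cleaning",
	"dock":  "Hello robot, go back to the charging station",
}

// phraseFor looks up the sentence for a command name.
func phraseFor(command string) (string, error) {
	p, ok := phrases[command]
	if !ok {
		return "", fmt.Errorf("unknown command %q", command)
	}
	return p, nil
}

// ttsArgs builds the argv for the text-to-speech program.
// Assumption: espeak-ng is installed and speaks the text given as its argument.
func ttsArgs(phrase string) []string {
	return []string{"espeak-ng", phrase}
}

// speak plays the phrase for a command through the default audio device.
func speak(command string) error {
	phrase, err := phraseFor(command)
	if err != nil {
		return err
	}
	args := ttsArgs(phrase)
	return exec.Command(args[0], args[1:]...).Run()
}

func main() {
	if len(os.Args) < 2 {
		// Dry run: show what would be executed without making any sound.
		for _, name := range []string{"start", "stop", "dock"} {
			p, _ := phraseFor(name)
			fmt.Println(ttsArgs(p))
		}
		return
	}
	if err := speak(os.Args[1]); err != nil {
		fmt.Fprintln(os.Stderr, "error:", err)
		os.Exit(1)
	}
}
```

A Home Assistant automation could then run a program like this with `start` as its argument on a schedule, though how [Max] actually wires jacadi into Home Assistant may differ.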

Integrating a voice-controlled appliance like this robotic vacuum cleaner allows things like scheduled cleanings and other commands to be sent to the vacuum even when [Max] isn’t home. There are still a few limitations though, chiefly that communication is one-way: commands go to the vacuum, but the Home Assistant server can’t know when it’s finished or exactly when to send new commands to the device. But it’s still an excellent way to keep something like this offline without having to rewrite its control software entirely.