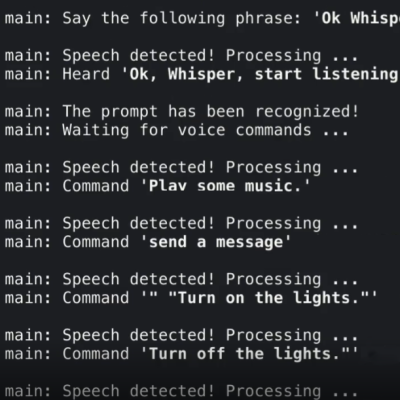

A PCB business card is a great way for electrical engineers to impress employers with their design skills, but the software a card runs can be just as impressive as the hardware itself. As a programmer with an interest in embedded machine learning, [Dave McKinnon] wanted a card that showcased his skills, so he designed one that runs voice recognition.

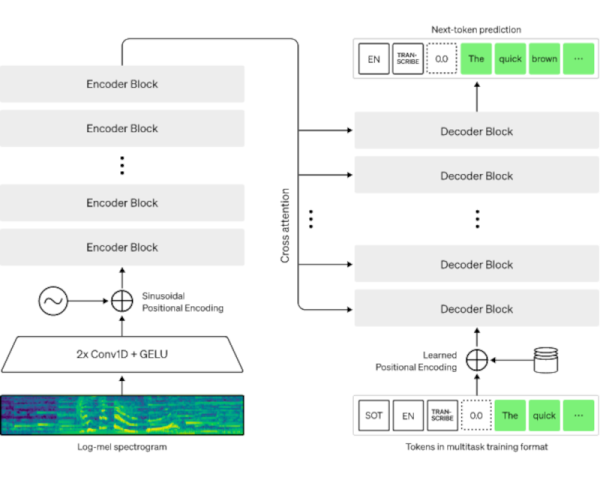

[Dave] specifically wanted to run a neural network on his card, which meant making the network small enough to run on a microcontroller. Voice recognition looked like a good fit, since audio can be represented with relatively little data, a microphone is cheap and easy to add to a circuit board, and someone had already run such a voice recognition network on an Arduino. To fit into 46 kB, the neural network only distinguishes the words “one” through “nine,” and the card displays its guess on a seven-segment LED display. [Dave] first prototyped the system with an Arduino, then designed the circuit board around an RP2040.
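The write-up doesn't show the card's firmware, so the following is only a rough sketch of the final step described above: taking the network's nine class scores, picking the most likely word, and turning it into a seven-segment pattern. It is plain Python for illustration, not [Dave]'s code. The function names, the score list, and the segment bit order (bit 0 = segment a through bit 6 = segment g) are all assumptions.

```python
# Hypothetical sketch: map nine class scores ("one".."nine") to a
# seven-segment pattern. Not taken from the actual card firmware.

# Assumed bit order: bit 0 = a, bit 1 = b, ..., bit 6 = g (1 = segment lit).
SEGMENTS = {
    1: 0b0000110,  # b, c
    2: 0b1011011,  # a, b, d, e, g
    3: 0b1001111,  # a, b, c, d, g
    4: 0b1100110,  # b, c, f, g
    5: 0b1101101,  # a, c, d, f, g
    6: 0b1111101,  # a, c, d, e, f, g
    7: 0b0000111,  # a, b, c
    8: 0b1111111,  # all segments
    9: 0b1101111,  # a, b, c, d, f, g
}


def predicted_digit(scores):
    """Return the digit (1-9) whose class score is highest."""
    if len(scores) != 9:
        raise ValueError("expected one score per word, 'one' through 'nine'")
    best_index = max(range(9), key=lambda i: scores[i])
    return best_index + 1  # index 0 corresponds to the word "one"


def segment_pattern(scores):
    """Return the assumed seven-segment bit pattern for the best guess."""
    return SEGMENTS[predicted_digit(scores)]


if __name__ == "__main__":
    # Made-up scores in which the seventh class ("seven") wins.
    example = [0.01, 0.02, 0.01, 0.03, 0.02, 0.01, 0.85, 0.03, 0.02]
    print(predicted_digit(example), format(segment_pattern(example), "07b"))
```

On real hardware, each bit of the pattern would drive one display pin. The pin mapping and the polarity (common anode or common cathode) depend on the board, which the article doesn't describe.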