The Hands|On glove looks like it’s a Power Glove replacement, but it’s a lot more and a lot better. (Which is not to say that the Power Glove wasn’t cool. It was bad.) And it has to be: the task that it’s tackling isn’t playing stripped-down video games, but reading the user’s sign-language gestures out loud so that people who don’t understand sign can understand those who do.

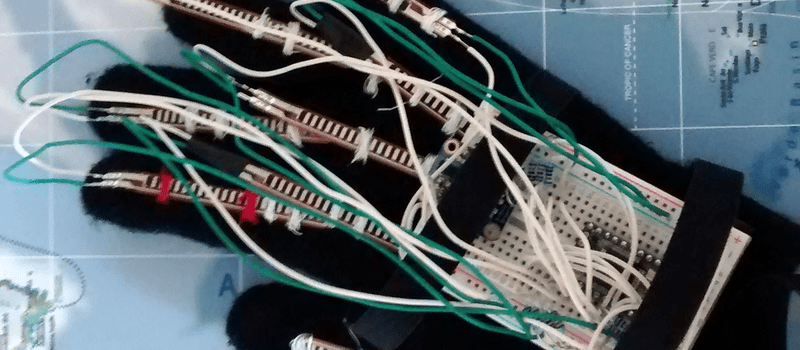

The glove needs a lot of sensor data to accurately interpret the user’s gestures, and the Hands|On doesn’t disappoint. Multiple flex sensors are attached to each finger, so that the glove can tell which joints are bent. Some fingers have capacitive touch pads on them so that the glove can know when two fingers are touching each other, which is important in the American Sign Language (ASL) alphabet. Finally, the glove has a nine-degree-of-freedom inertial measurement unit (IMU) so that it can keep track of the hand’s orientation (pitch, yaw, and roll) as well as its motion.

In short, the glove takes in a lot of data. This data is cleaned up and analyzed on a Teensy 3.2 board, and sent off over Bluetooth to its final destination. There’s a lot of work done (and some still to be done) on the software side as well. Have a read through the project’s report (PDF) if you’re interested in support vector machines for sign classification.
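To make the data flow concrete, here is a minimal Python sketch of how one sample from the glove might be smoothed and flattened into a feature vector for a classifier. It is not the project’s code: the `GloveFrame` and `FlexSmoother` names, the field layout, the sensor counts, and the choice of an exponential moving average for the cleanup step are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GloveFrame:
    """One hypothetical sample from the glove; the field sizes are assumptions."""
    flex: List[float]         # normalized bend per flex sensor, 0.0 (straight) to 1.0 (bent)
    touch: List[bool]         # one flag per capacitive touch pad
    orientation: List[float]  # pitch, yaw, roll in degrees

    def features(self) -> List[float]:
        """Flatten the frame into one numeric vector for a classifier."""
        touch_values = [1.0 if pressed else 0.0 for pressed in self.touch]
        return self.flex + touch_values + self.orientation


class FlexSmoother:
    """Exponential moving average, one common way to damp sensor jitter."""

    def __init__(self, alpha: float = 0.5) -> None:
        self.alpha = alpha  # weight given to the newest reading
        self.state: Optional[List[float]] = None

    def update(self, flex: List[float]) -> List[float]:
        """Blend a new set of flex readings into the running average."""
        if self.state is None:
            # The first reading initializes the average.
            self.state = list(flex)
        else:
            self.state = [self.alpha * new + (1 - self.alpha) * old
                          for new, old in zip(flex, self.state)]
        return list(self.state)
```

In a setup like this, each smoothed frame’s `features()` output would be what gets handed to the classifier, whether that is a support vector machine or something else.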

Sign language is most deaf folks’ native language, and it’s a shame that the hearing community can’t understand it directly. Breaking down that barrier is a great idea, and it makes a great entry in the Hackaday Prize!

Combine this with speech synthesis and you get a very powerful tool for the impaired. I guess it can also be used in teaching sign language.

Kudos!

A cookie to the first person who makes it say “Ugly gorilla.”

There was a ‘team hands-on’ that also made an ASL glove, for the ‘America’s Greatest Makers’ TV show (Intel and TBS). Are you using their name, or are you working with them in some way?

I heard that the most important part of signing is the expression. It would be interesting to know whether it’s the glove or the user that interprets this.

But I don’t know if that’s right, because I don’t know any sign except a few rude ones, of course.

ASL was my foreign language in college and hand-shape is just one small part of the communications protocol.

Also, 5 bend sensors are about 10% of what you’d need to determine hand shape.

It’s cool, and a great start. It’s just important to know that there’s plenty of work to be done to make this minimally useful.

I was wondering about that. I don’t know that an inertial measurement unit can decode something like the sign for the letter “J”, especially considering that you could essentially move your wrist or your finger. I think the resolution requirements on the IMU would be tight, and the positioning of the sensor would be critical.

Can you train a neural network using a PC, then implement it on a MCU or FPGA? The trick is to have a recogniser where all the valid symbols are valleys in a data space so only input that falls on the ridgelines is ambiguous, as all other locations result in a slope toward a single result value. Then you reduce the resolution of your inputs down to the minimum needed to ensure you have a usable map. Another way to enhance the precision of the output is with n-grams to predict the most likely symbol if you get a ridgeline case, based on the value of the previous symbol.
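A minimal Python sketch of the n-gram tie-breaking idea, assuming the recogniser hands back a non-empty dictionary of per-symbol scores. The `pick_symbol` function, the `margin` threshold, and the bigram counts are all hypothetical; real counts would have to come from a text corpus.

```python
from typing import Dict, Optional, Tuple


def pick_symbol(scores: Dict[str, float],
                previous: Optional[str],
                bigram_counts: Dict[Tuple[str, str], int],
                margin: float = 0.1) -> str:
    """Return the top-scoring symbol, using bigram counts to break near-ties."""
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    best_symbol, best_score = ranked[0]
    # Symbols scoring within `margin` of the best are treated as a "ridgeline" case.
    close = [sym for sym, score in ranked if best_score - score <= margin]
    if previous is None or len(close) == 1:
        return best_symbol
    # max() keeps the first maximal item, so the higher classifier score
    # still wins when the bigram counts are equal.
    return max(close, key=lambda sym: bigram_counts.get((previous, sym), 0))


# Made-up counts: "U" follows "Q" far more often than "V" does.
counts = {("Q", "U"): 50, ("Q", "V"): 1}
ambiguous = pick_symbol({"U": 0.48, "V": 0.52}, "Q", counts)
confident = pick_symbol({"U": 0.2, "V": 0.8}, "Q", counts)
```

With these made-up numbers the near-tie resolves to “U” because of the preceding “Q”, while the confident “V” reading is left alone.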