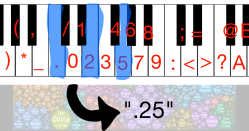

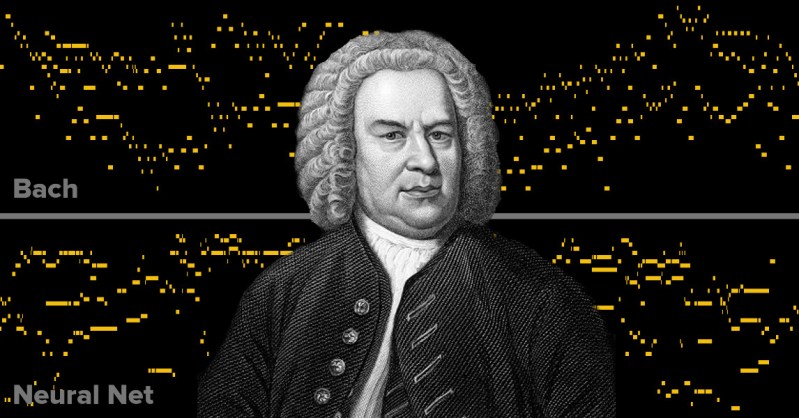

[carykh] took a dive into neural networks, training a computer to replicate Baroque music. The results are as interesting as the process he used. Instead of feeding Shakespeare (for example) to a neural network and marveling at how Shakespeare-y the text output looks, the process converts Bach’s music into a text format and feeds that to the neural network. There is one character for each key on the piano, making for an 88 character alphabet used during the training. The neural net then runs wild and the results are turned back to audio to see (or hear as it were) how much the output sounds like Bach.

The video embedded below starts with a bit of a skit but hang in there because once you hit the 90 second mark things get interesting. Those lacking patience can just skip to the demo; hear original Bach followed by early results (4:14) and compare to the results of a full day of training (11:36) on Bach with some Mozart mixed in for variety. For a system completely ignorant of any bigger-picture concepts such as melody, the results are not only recognizable as music but can even be pleasant to listen to.

The core of things is this character-based Recurring Neural Network which is itself the work of Andrej Karpathy. In his words, “it takes one text file as input and trains a Recurrent Neural Network that learns to predict the next character in a sequence. The RNN can then be used to generate text character by character that will look like the original training data.” How did [carykh] actually use this for music? With the following process:

- Gather source material (lots and lots of MIDI files of Bach pieces for piano or harpsichord.)

- Convert those MIDI files to CSV format with a tool.

- Tokenize and reformat that CSV data with a custom Processing script: one ASCII character now equals one piano key.

- Feed the RNN with the resulting text.

- Take the ouput of the RNN and convert it back to MIDI with the reverse of the process.

[carykh] shares an important question that was raised during this whole process: what was he actually after? How did he define what he actually wanted? It’s a bit fuzzy: on one hand he wants the output of the RNN to replicate the input as closely as possible, but he also doesn’t actually want complete replication; he just wants the output to take on enough of the same patterns without actually copying the source material. The processing of the neural network never actually “ends”; [carykh] simply pulls the plug at some point to see what the results are like.

Neural Networks are a process rather than an end result and have varied applications, from processing handwritten equations to helping a legged robot squirm its way to a walking gait.

Thanks to [Keith Olson] for the tip!

bach is useless, try with jimi hendrix, give some lsd to that network :)

I know you’re joking, but Bach is a really good choice for this project. Particularly his harpsichord music. Because of the mechanics of the instrument it eliminates a ton of variables. Generally speaking, a harpsichord doesn’t have dynamics (volume control) like a piano does when you hit a key harder or softer. It also can’t hold a not like a piano. That’s because the mechanism in a harpsichord plucks the strings instead of striking them, and doesn’t have dampers to stop them from ringing.

For the neural net this means it only really has two variables, pitch and timing of the note attack. It doesn’t need to deal with volume and duration.

Well, you could do that directly with sound waves instead of pseudo-MIDI. It has been done before as a speech synth (WaveNet) and it can be trained with any type of music. It will require a lot more processing power and input data, but results would be more interesting. It could even learn to sing (if you can give it infinite music and processing power).

Hi, Would just point out that you can make this much leaner: All of Bach’s works, and Mozart’s, all cover a range of the keyboard of their time: 61 keybaord notes maximum, not 88….Would save you (and the computer) a lot of time…!

I reckon that Jimmy might disagree with you on that point. Johann Sebastian Bach, did write music for 200+ piece orchestras. And almost ~300 years ago getting 200+ extremely skilled people to act as one is an amazing feat alone.

A bit over on the numbers there. Bach would have been more like 20-30 people in the orchestra.

From 1700 on the orchestra was what we would expect today, it was an event.

Still wrong on the numbers. It was more mid of the 18th centuary and 150 players was and is a very large ensemble.

https://en.wikipedia.org/wiki/Orchestra

@X You are right Bach was alive from 1685 to 1750 and during his lifetime Baroque orchestra (1600-1750) were 35 and 80 players for day-to-day performances, being enlarged to 150 players for special occasions.

So my number was out by 25% sorry, sorry for being out by 25% in events that took place 300 years ago.

https://en.wikipedia.org/wiki/Baroque_orchestra

It’s like he invented the polyphonic synth, but then went “Damn, the tech isn’t here for a couple hundred years yet, let’s just do it iteratively with humans.”

And wrong again. Iteratively is repeating but in sequence. I think the word you were looking for is in parallel.

Hey guys – you can’t allways blame hackaday for being incorrect and then mess up all the facts and words.

Maybe they are humans after all…

That is actually rather easy you just add some randomness to the inter-layer flows of data and you will get a similar effect to what actually happens in the human brain when the various cortical modules are pharmaceutically desynchronised.

I am fascinated by this project. I wonder how good a neural net could get if there was a crowdsourced feedback loop for how much people enjoy the output that it could further learn from.

If you make the music that the general populous enjoys, you’ll end up with crap. Just turn on any top-40 radio station if you don’t believe me.

Figure out what kind of music you want to make, then get people who like that, then get only their feedback. ;)

I smell another machine learning experiment to highlight people who have good taste in music. Beauty is in the eye of the neural net.

“Your taste in music is excellent. It exactly coincides with my own!”

One could also use a clustering algorithm, and then train a separate network on each cluster.

You mean like MS’s twitter bot…..

The one that turned into an internet troll? Hah! Yes :-)

Well, it’s kind of a cross between Mozart and Bach, kind of a Mach…

What do you call this?

im leaning towards Bozart

Really I don’t think giving it the notes as symbols was such a leap, at some point it’s going to deal with them as symbols, even if you give yourself the complexity of making it listen, decide what it’s hearing, break it down to elements it can process… i.e. symbols… though with a bigass neural net it becomes some internally assigned symbolism that you kinda have no chance of making sense out of, or utilising in another way, so yah, KISS, feed it symbols to start with.

So, do i get this right that the RNN did have no clue about timing or rhythm or intensity of the music? It basically only has one speed to play the notes at? Would be interesting to see what happens if you could give the network some more clues about the way the notes are intended to be played in the original piece.

That’s right. Just to re-iterate, the neural net used was originally intended for use with text and it works on a character-by-character basis. It doesn’t actually understand “words” as a concept, but if you feed it (for example) English text, the output is actually surprisingly good English anyway.

Turns out that if you give it music formatted as text (notes represented by letters, and time by spacing) then the neural network happily accepts it and outputs — it turns out — surprisingly good music; once you turn the text output back into music, anyway.

That part of this project was pretty clever. The source music exists as MIDI, but MIDI is more like a log of hardware keyboard events; it’s all this-key-down-at-X-velocity, that-key-up, etc. He had to turn it into something more like, well, more like text so that it would work with the neural network and be structured more like letters (notes), words (chords), and spaces (time). To put it another way, he wanted “Hi” but had to start with “Left-shift-down, h-key-down, left-shift-up, h-key-up, i-key-down, i-key-up”.

Start it off training with simple scales and melodies, then slowly work it up to more complex pieces. Perhaps given a background in the fundamentals, it will apply that knowledge to it’s more complex pieces.

It’s fun to think of it almost like training a student in music theory.

I saw thing on Youtube a few days ago… Pretty neat stuff…My response to I’ll be Bach was always “and I’ll be Beethoven”

Bach had two or more five to six octave keyboards not a single 88 note (modern piano) keyboard. There are dampers in any harpsichord. Loudness is done with stops like a pipe organ, and swell shutters can be fitted to the sound source.

When our crap public station plays it’s overnight “bits of baroque” I find the parts where I vocalize this classic form where 1/16 notes sound like so much poultry.

It’s like a chicken hen-it’s like a chicken hen-it goes Bach Bach Bach Bach Bach Bach-it’s like a chicken hen.

An educational exercise worth doing but as he says in the end the result is rubbish. I think it is because the NN is not aware of all of the scales (geometric not harmonic) that the patterns of Bach’s music operate at. He shows some insight into this when he notes that the music has no beginning or end. A composition is an intellectual exercise but what this NN is producing is just the musical equivalent of “word salad”. What the NN has really found is a representation of a Markov chain algorithm, NNs are actually very good at doing that, finding algorithms and rules, without ever understanding the larger context that gives their use relevance.

what happens if you push computer code through a neural net? what about neural net source code? has anyone tried this?

Not neural network related, but since you say that you might find this read interesting if you haven’t seen it before: https://www.damninteresting.com/on-the-origin-of-circuits/

Dr. Adrian Thompson had an automated process randomly evolve its own code for an FPGA to respond to a 10khz tone (and later, the words “Yes” and “No”.) It worked but when he looked at the code/gate configuration it had come up with, the chip was mostly unused and what WAS used was in part arranged in all these weird feedback loops, functionally disconnected from the rest of the chip; but if you disabled any of them it all stopped working. What had happened was that the evolutionary selection wound up picking out and relying on all these weird hardware level feedback edge cases to make something that actually happened to work. (If temperature or other physical aspects changed too much, it stopped working.)

Point is, the system made something that worked but wasn’t using the chip “properly” at all. I suspect that we’d see something similar with generated computer code, and that might even be the case with this musical generation. It’s listenable and certainly identifiable as music, but maybe that’s about it? If nothing else, it certainly challenges definitions.

the work of a musical idiot.

It would be interesting to see how separating the music into left/right hand at input would affect the compositions at output,Limiting each to what the span of a hand is, and 5 notes per.

This video is such a tissue of cr*p regurgitated ad nauseam and this guy so d*mb and proud of himself, that I almost wanted to kill myself after watching it ! I can understand his hopes about prosthetic Artificial Inteligence for his own disability, but his Youtube account should immediately be restricted for sanity and youth protection.

Now one thing is for sure : no AI in a foreseeable future will be capable of achieving this level of bullshit per minute (however that “brilliant” mind could pursue a great carreer as a torture researcher at Guantanamo Bay).

Frankly, setting aside the thought and intentions that even the most primitive or trivial music fundamentally conveys, many musical rules relative to Bach and baroque music are so simple and bounded than it used to be, for the last two centuries, the academic way of teaching musical harmony as an introduction to composer (of written music) apprenticeship.

This has be done before, and a 100 lines program with a reasonnably short data set of modulations and “cadences” could be enough to solve most of the first-year’s harmony excercises (where, for a given melody, you have to find the straightforward melodies sung by 3 other voices, which combined, coud form a proper succession of chords) as well as any freshman getting progressively used to it, and even random melodies could be turned in seamless polyphonic music with this musical technique.

I’m not sure, but wouldn’t be suprised, that a monkey just slightly more informed and less self-satistified than this youtuber could do it too, but many monks definitely composed a myriad of polyphonic (plain-)songs through the Middle-Age using these rules applied to their random melody of the day.. and now that this brainwashed Internet exhibitionism has become the rule, i suddendly understand all their Millienial(s) fear and terror !!

With the end of the last 200+ music venue in Lafayette Indiana the present and future arts have come to an end. I will not listen to code trying to “entertain” me, any AI that claims a sexual identity or emotion will be shamed into shutting up or simply silenced. It’s just code that is all. I have watched over the last decade or so a malaise of the arts, even talking to people that come in from elsewhere. They reported the same conditions. An old classic movie theater is doing something, but I don’t want to vape the fog filling the space so the LED panning strobing lights show up as beams of light literally X-ing out the stage talent which is only back-lit and has faces in shadows. Live sound is fed thru a digital board and comes out smashed and louder than hell.

Alex-a and the rest can go to hell. One of them uses my handle to make it worse. I am glad I will not see the overlook of AI in my life.

Go suck pandora’s teat. Suckle it’s synthemilk. You won’t be human.