Tech artist [Alexander Reben] has shared some work in progress with us. It’s a neural network trained on various famous people’s speech (YouTube, embedded below). [Alexander]’s artistic goal is to capture the “soul” of a person’s voice, in much the same way as the death masks of centuries past. Of course, listening to [Alexander]’s Rob Boss is no substitute for actually watching an old Bob Ross tape (indeed, it never even manages to say “happy little trees”), but it is certainly recognizable as the man himself, and now we can generate an infinite amount of his patter.

Behind the scenes, he’s using WaveNet for the networks. Basically, the algorithm splits an audio stream into chunks (individual samples, in WaveNet’s case) and tries to predict the next one from the ones that came before. Some pre-editing of the training audio was necessary (removing the laughter and applause from the Colbert track, for instance), but otherwise the data was pretty much plugged right in.
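To make the "predict the next chunk from the previous ones" idea concrete, here is a minimal sketch. It is not WaveNet and not [Alexander]’s code: a two-tap linear predictor stands in for the neural network, a synthetic sine wave stands in for the training audio, and the names `fit_ar2` and `generate` are made up for illustration. What it shares with the real thing is the loop: learn to guess the next sample from earlier ones, then feed each guess back in as input to produce new audio.

```python
import math


def fit_ar2(samples):
    """Least-squares fit of x[n] ~ a*x[n-1] + b*x[n-2].

    A linear stand-in (assumption) for the neural network: it learns to
    predict the next sample from the two samples before it.
    """
    s11 = s12 = s22 = t1 = t2 = 0.0
    for n in range(2, len(samples)):
        cur, p1, p2 = samples[n], samples[n - 1], samples[n - 2]
        s11 += p1 * p1
        s12 += p1 * p2
        s22 += p2 * p2
        t1 += cur * p1
        t2 += cur * p2
    # Solve the 2x2 normal equations for the two coefficients.
    det = s11 * s22 - s12 * s12
    a = (t1 * s22 - t2 * s12) / det
    b = (t2 * s11 - t1 * s12) / det
    return a, b


def generate(seed, a, b, count):
    """Autoregressive generation: each prediction becomes the next input."""
    out = list(seed)
    while len(out) < count:
        out.append(a * out[-1] + b * out[-2])
    return out


# Hypothetical "training audio": a sine wave with a 32-sample period.
PERIOD = 32
training = [math.sin(2 * math.pi * n / PERIOD) for n in range(320)]

a, b = fit_ar2(training)
generated = generate(training[:2], a, b, 100)
```

Seeded with just two real samples, `generate` keeps extending the waveform on its own. A pure tone is easy for a two-tap predictor; speech is not, which is why the real system needs a deep network looking much further back in time.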

The network seems to over-emphasize sibilants; we’ve never heard Barack Obama hiss quite like that in real life. Feeding noise into machines that are set up as pattern-recognizers tends to push them to the limits. But in keeping with the name of this series of projects, the “unreasonable humanity of algorithms”, it does pretty well.

He’s also done the same thing with multiple speakers (also YouTube), in this case 110 people with different genders and accents. The variation across people leads to a smoother, more human sound, but it’s also not clearly anyone in particular. It’s meant to be continuously running out of a speaker inside a sculpture’s mouth. We’re a bit creeped out, in a good way.

We’ve covered some of [Alexander]’s work before, from the wince-inducing “Robot Bites Man” to the intellectual-conceptual “All Prior Art”. Keep it coming, [Alexander]!

Elliot, seems a [Name] failure occurred the last PP.

–A

Quite right, should be Alexander. I’ve made the changes, thanks!

Colbear

Also, I’m glad they upgraded the voice effects for the new season of Twin Peaks

Ha! That reference takes me back. I’m surprised I didn’t hear it the first time I watched the demo.

I knew that I heard “this is a Formica table” during the Rob Boss whisper segment.

Recently they came up with a way to adjust video of people speaking so that a 3rd person could ‘move their mouth’ (look at the video and you’ll understand): https://youtu.be/ohmajJTcpNk

The voice emulation in this article may sound creepy and artificial now, but once the neural networks get more training data, one day they will produce voices that are indistinguishable from reality.

I bet that in “certain countries” suddenly foreign heads of state will start saying really interesting things..

I wonder whether royalties will go to the living families of dead actors who are recycled by a merger of the two technologies. I’m sure SAG-AFTRA (Screen Actors Guild‐American Federation of Television and Radio Artists) will block it until the royalties are sorted out.

Presumably this is going to be something that the actor owns rather than the studio, with actors who want the cutting-room-floor scraps for training their doppelganger taking home slightly less in the short term for the promise of eternal CG youth.

Now that was impressive. I can see it being really good on films that need a re-dub for voices (say for foreign language, script changes or even, er, ‘PG-13’ classification reasons). That way one wouldn’t get quite so distracted by the visuals not matching the audio, and nobody would be forced to pick bad re-dub words (see all the oddball variants of Bruce Willis’ infamous “yippee-ki-yay..” line in Die Hard).

And…

dubbing in different languages in the actor’s “real” voice for foreign distribution

Next stop, Max Headroom.

who is Obamer?

The Russian sounding one.

Pretty much sounds like sweeping the shortwave dial during high magnetosphere activity, hmmm that might be voice of america, that might be BBC world service, that might be something french…

garbage in, garbage out

You’ve never heard Barack Obama hiss like that, huh?

Besides the obvious joke, that bigot has one of the most pronounced sibilance problems of any modern American celebrity. Only Paul Harvey had it worse. Perhaps we rationalize away the faults of those we adore. It was unsurprising to me the neural net picked it up.

yaaaaay let’s bring your political views into a blog post on neural networks

Train a Neural net with Political decisions and outcomes world wide for the last 2000 years and see what it produces.

cerberarchy

Attempting to derail a point with an emotionally triggered response. That is all.

This is how religious figures justify their heinous teachings, i.e. “omgg think of the CHILDREN and their precious little Sunday school.”

Look, a pure example of conservative virtue signaling. I bet the precious snowflake thinks that’s something only “those nasty lie-beral sjw” do.

http://arcturi.com/sitebuilder/images/Obama_Reptilian-270x270.jpg

Rob Boss whisper is what you hear coming from the shadowy corners of your cabin in the woods when you think you are alone and slowly going insane. This is both creepy and hilarious. I’m now imagining that one continuously coming out of a bust of Bob Ross, and it’s freaking me the hell out.

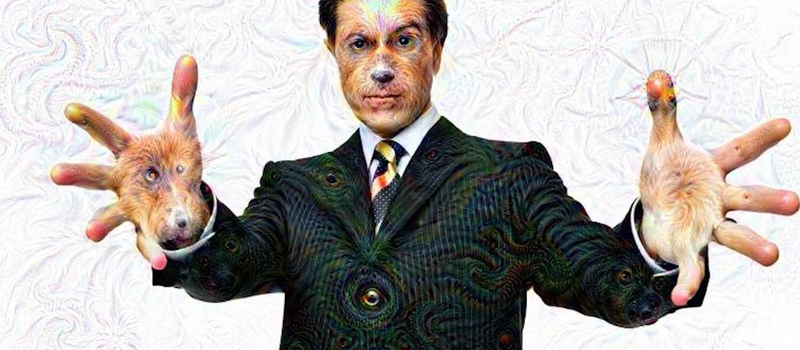

The random whispers was one thing, the whispering along with the dog mountain google dream interpretation put it over the top.

were^^

Make it animatronic and you’re guaranteed to win the HaD prize.

Rob Boss is reminiscent of garbled cell phone noise.

Just sounds like an old cassette played backwards. If I wasn’t too lazy I’d reverse it just to make sure.

A new weapon in our war against automated robocallers!

Train this with Rick Astley and Justin Bieber. But don’t listen to the output if you do that.

A few decades ago I saw it coming, Howard Cosell or Walter Cronkite reading to you the news. Sadly like TV-movies-CGI it will be the reason for turning it all off.

Like laptop Data Jockeys making “music” of someone’s work into just worthless noise, end run. Power off.

Not quite unsettling enough. I know! Let’s train it on anime characters.

https://youtu.be/FsVSZpoUdSU

Ah that is the video I wanted to point out, much better, and informative.

And without creepy subliminal “lizard people” references.

9000 is pretty funny: “AAAAAAAAH! AAAAAAAAAAAAAAH! AAAH! AAAAAAAAAAAAAAAAAAAAAH!”

Is this really an NN? It seems more like Markov chain using sound instead of text.

Never thought about applying the term uncanny valley to audio, until now…

That’s good, how can you do it?