Robotics is hard. Maybe not quite as hard as astrophysics or understanding human relationships, but designing a competition-winning bot from scratch was never going to be easy. OK, so [Paul Bupe, Jr’s] robot, named ‘Goose’, did not quite win the competition, but we’re very interested to learn what golden eggs it might lay in the aftermath.

The mechanics of the bot are based on a fairly standard dual-tracked drive system that makes controlling a turn much easier than if it used wheels. Why make life more difficult than it is already? But what we’re really interested in is the design of the control system and the rationale behind those design choices.
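To illustrate why a dual-tracked drive is simple to steer, here is a minimal Python sketch of idealized differential-drive kinematics. It is not taken from [Paul]'s code: the function name, the `track_width` parameter and the no-slip assumption are ours, and real tracks slip noticeably when turning.

```python
def track_speeds(v, omega, track_width):
    """Convert a body velocity command into left/right track speeds.

    v           -- forward speed in m/s
    omega       -- yaw rate in rad/s (positive turns left, counter-clockwise)
    track_width -- distance between the two tracks in metres (assumed value)

    Idealized model: assumes the tracks do not slip.
    """
    left = v - omega * track_width / 2.0
    right = v + omega * track_width / 2.0
    return left, right


if __name__ == "__main__":
    # Drive straight: both tracks run at the same speed.
    print(track_speeds(0.5, 0.0, 0.2))
    # Spin in place: tracks run at equal and opposite speeds.
    print(track_speeds(0.0, 1.0, 0.2))
```

Turning comes down to commanding a speed difference between the two tracks, which is the whole appeal of this layout.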

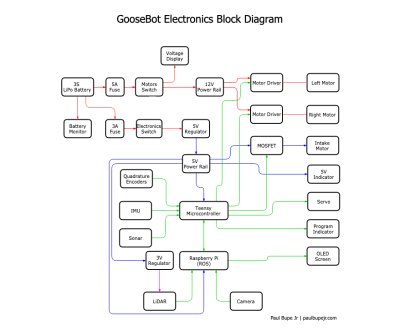

The diagram above might look complicated, but essentially the system is based on two ‘brains’, the Teensy microcontroller (MCU) and a Raspberry Pi, though most of the grind is performed by the MCU. Running at 96 MHz, the MCU is fast enough to process data from the encoders and IMU in real time, enabling the bot to respond quickly and smoothly to sensor input. More complicated and ‘heavier’ tasks such as LIDAR and computer vision (CV) are performed on the Pi, which runs the Robot Operating System (ROS) and communicates with the MCU by means of a couple of ‘nodes’.
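As a rough idea of what processing encoder data involves, the sketch below turns encoder ticks into a pose estimate (wheel odometry). This is our own illustration in Python, not the Teensy firmware, which would normally be written in C or C++. The names `ticks_to_distance` and `update_pose`, and the tick and circumference values, are hypothetical.

```python
import math


def ticks_to_distance(ticks, ticks_per_rev, circumference):
    """Distance travelled by one track for a given encoder tick count."""
    return ticks / ticks_per_rev * circumference


def update_pose(x, y, theta, d_left, d_right, track_width):
    """Advance the pose (x, y, heading) by one odometry step.

    d_left / d_right are the distances each track moved since the last
    update. Uses the midpoint heading for the translation and assumes
    no track slip.
    """
    d_center = (d_left + d_right) / 2.0
    d_theta = (d_right - d_left) / track_width
    x += d_center * math.cos(theta + d_theta / 2.0)
    y += d_center * math.sin(theta + d_theta / 2.0)
    # Wrap the heading into [-pi, pi).
    theta = (theta + d_theta + math.pi) % (2.0 * math.pi) - math.pi
    return x, y, theta


if __name__ == "__main__":
    # Hypothetical encoder: 1000 ticks per revolution, 0.3 m circumference.
    step = ticks_to_distance(500, 1000, 0.3)
    print(update_pose(0.0, 0.0, 0.0, step, step, 0.2))
```

Odometry like this drifts over time, which is why it is usually fused with other sensors such as the IMU.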

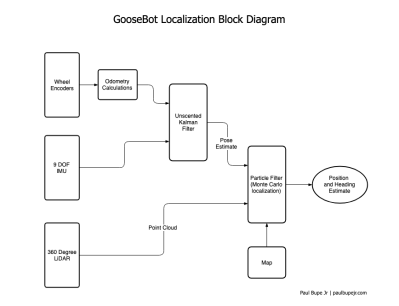

The competition itself dictated that the bot should travel in large circles within the walls of a large box, whilst avoiding particular objects. Obviously, neither GPS nor dead reckoning on its own was going to keep the machine on track, so it relied heavily on ‘LiDAR point cloud data’ to pinpoint the location of the robot at all times. Now we really get to the crux of the design, where all the available sensors are combined and fed into a ‘particle filter algorithm’.
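For readers new to particle filters, here is a heavily simplified Python sketch of the predict, weight and resample loop. It is not [Paul]'s implementation: instead of a full LiDAR point cloud it assumes just two range readings (to the walls at `x = BOX` and `y = BOX`), and the arena size, noise values and function names are all made up for illustration.

```python
import math
import random

BOX = 4.0  # hypothetical side length of the square arena, in metres


def gaussian(err, sigma):
    """Unnormalized Gaussian likelihood of a measurement error."""
    return math.exp(-0.5 * (err / sigma) ** 2)


def predict(particles, dx, dy, motion_sigma, rng):
    """Move every particle by the odometry step plus random motion noise."""
    return [(x + dx + rng.gauss(0.0, motion_sigma),
             y + dy + rng.gauss(0.0, motion_sigma)) for x, y in particles]


def weigh(particles, meas_x, meas_y, sensor_sigma):
    """Weight each particle by how well it explains the two wall ranges."""
    w = [gaussian((BOX - x) - meas_x, sensor_sigma) *
         gaussian((BOX - y) - meas_y, sensor_sigma) for x, y in particles]
    total = sum(w)
    if total == 0.0:  # every weight underflowed: fall back to uniform
        return [1.0 / len(w)] * len(w)
    return [wi / total for wi in w]


def resample(particles, weights, rng):
    """Draw a new particle set, favouring the high-weight particles."""
    return rng.choices(particles, weights=weights, k=len(particles))


def estimate(particles):
    """Mean position of the particle cloud."""
    n = len(particles)
    return (sum(x for x, _ in particles) / n,
            sum(y for _, y in particles) / n)


def localize(true_path, n_particles=500, seed=0):
    """Run the filter along a simulated path and return the final estimate."""
    rng = random.Random(seed)
    # Start with no idea where the robot is: spread particles over the box.
    particles = [(rng.uniform(0.0, BOX), rng.uniform(0.0, BOX))
                 for _ in range(n_particles)]
    prev = true_path[0]
    for pos in true_path[1:]:
        particles = predict(particles, pos[0] - prev[0], pos[1] - prev[1],
                            0.05, rng)
        # Simulated noisy range readings to the two far walls.
        meas_x = BOX - pos[0] + rng.gauss(0.0, 0.02)
        meas_y = BOX - pos[1] + rng.gauss(0.0, 0.02)
        weights = weigh(particles, meas_x, meas_y, 0.15)
        particles = resample(particles, weights, rng)
        prev = pos
    return estimate(particles)


if __name__ == "__main__":
    path = [(1.0 + 0.1 * i, 2.0) for i in range(10)]
    print(localize(path))
```

The cloud of guesses starts out spread across the whole box and collapses around the true position after a few measurements. A real system would compare each particle against a map using the full scan rather than two wall distances.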

What we particularly love about this project is how clearly everything is explained, without too many fancy terms or acronyms. [Paul Bupe, Jr] has obviously taken the time to reduce the overall complexity to more manageable concepts that encourage us to explore further. Maybe [Paul] himself might have the time to produce individual tutorials for each system of the robot?

We could well be reading far too much into the name of the robot, ‘Goose’ being Captain Marvel’s bizarre ‘trans-species’ cat that ends up laying a whole load of eggs. But could this robot help reach a de facto standard for small robots?

We’ve seen other competition robots on Hackaday, and hope to see a whole lot more!

Video after the break:

Hmm, nice one, lots of good technical effort, well done. Thanks for the post.

When I saw odometry followed by unscented Kalman in the block diagram, I got the impression for a second or two that the robot was designed to chase various odours, such as following the gal with the right perfume, being heavier than air (I mean the perfume). Timing when to let the robot loose after meals would be a good thing, so as to avoid odour errors. In any case it’s helpful that methane rises, so whatever it carries with it is likely out of measurement range ;-)

Thanks! It was quite a bit of work but I enjoyed it. I have a ton more pictures and drawings that I didn’t include in the write-up!

I’ve always chuckled at the name “Unscented Kalman Filter” — there definitely has to be a more technical term they could have used but this is way more amusing!

What about adding a LIDAR to it to make it autonomous? ^.^

it has one in the picture

Yes, I missed that. It’s not in the video.

It does have one! It’s re-purposed from one of these vacuum cleaners https://www.neatorobotics.com/robot-vacuum/xv/xv-11/. I didn’t go into much detail about it because it’s a fairly well documented technology and I wasn’t doing anything special to it.

That’s not a cat.

It did tend to behave like one and it was great at ingesting cubes…

Bizarre=strange

Bazaar=open air market

English is hard! https://www.etymonline.com/word/bizarre