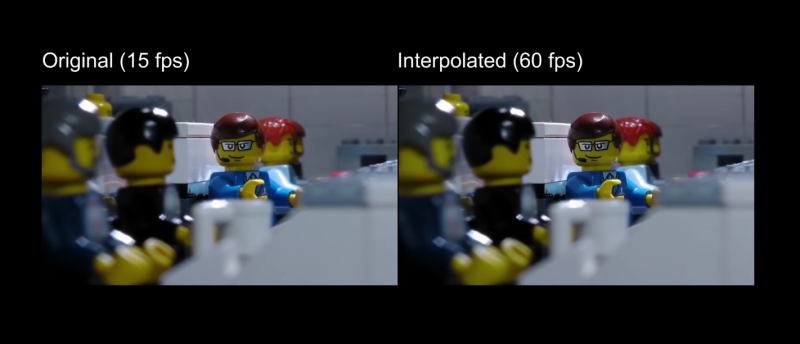

The uses of artificial intelligence and machine learning continue to expand, with one of the more recent implementations being video processing. A new method can “fill in” frames to smooth out the appearance of the video, and [LegoEddy] was able to use it in one of his animated LEGO movies with some astonishing results.

His original animation of LEGO figures and sets was created at 15 frames per second. As an animator, he notes that it’s orders of magnitude more difficult to get more frames than this with traditional methods, at least in his studio. This is where the artificial intelligence comes in. The program is able to interpolate between frames and create more frames to fill the spaces between the original. This allowed [LegoEddy] to increase his frame rate from 15 fps to 60 fps without having to actually create the additional frames.
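To make the idea of "filling in" frames concrete, here is a minimal sketch in Python. It is a deliberately crude stand-in, not the method the software uses: it simply cross-fades neighbouring frames, whereas the tool described here is a trained, depth-aware network. The function names `blend` and `upsample`, and the representation of a frame as a list of rows of grayscale values, are assumptions made for illustration.

```python
def blend(frame_a, frame_b, t):
    """Linearly blend two equally sized grayscale frames.

    t=0 returns frame_a's values, t=1 returns frame_b's values.
    """
    return [
        [(1 - t) * a + t * b for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(frame_a, frame_b)
    ]


def upsample(frames, factor):
    """Insert factor-1 blended frames between each pair of original frames."""
    if not frames:
        return []
    out = []
    for a, b in zip(frames, frames[1:]):
        out.append(a)  # original frames are kept untouched
        for i in range(1, factor):
            out.append(blend(a, b, i / factor))
    out.append(frames[-1])
    return out


# Two one-pixel "frames"; factor 4 corresponds to going from 15 fps to 60 fps.
smooth = upsample([[[0.0]], [[4.0]]], 4)
```

A plain cross-fade like this produces ghosting wherever objects move, which is exactly the problem that motion-aware and depth-aware approaches try to avoid.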

While we’ve seen AI create art before, the improvement on traditionally produced video is a dramatic advancement, especially since the AI is aware of depth and preserves information about the distance of objects from the camera. The software is also free, runs on any computer with an appropriate graphics card, and is available on GitHub.

Thanks to [BaldPower] for the tip!

It does make me wonder how far this can be pushed. Like, could we take a 4 fps video and get 60 fps? That would be a real game changer for stop motion in general.

Or even just 4 to a film-like 24 fps.

Hollywood becomes obsolete by AI and a small pile of photographs…

The further you try to push it, the more wrong it’s likely to look, not to mention the vastly increased computing time to fill in so many extra frames. So making something that already looked alright butter smooth is much easier than taking 1 fps or less up to the minimum watchable frame rate. 15 fps to 60 fps is only 4 times more frames, so effectively creating only two new frames between known points. To take 1 fps up to 10 is 9 new frames invented in the gaps…

I would say that with something like a LEGO stop motion, as all the parts are rigid, it becomes easier to create good fill frames, especially should the process know about the geometry options in LEGO. Something more like an Aardman animation, where everything is flexible, could not be back-filled as easily, as there are no simple rules on what shapes can exist in between frames.

The wonders of being half asleep: it is of course three new frames when the rate is 4 times…

Though I only noticed when I returned to point out another way of looking at it: the length of time you are making stuff up to fill. Starting at 4 fps you have a massive gap to fill compared to 15 fps, so any errors are around long enough to be much more noticeable. That makes it much harder to fool the eye, as mistakes can easily last 1/10th of a second, more than long enough to really be seen. Starting at 15 fps you can almost put anything in the gaps: the errors are already certain to be on screen for less than 1/15th of a second, probably more like 1/30th, and the eye and brain will filter out the odd mistake much more easily. It is still very noticeable if you are really paying attention and the error is large, but small errors of short duration are much harder to spot.
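The arithmetic in this exchange can be checked with a few lines of Python. This is only a sketch of the counting argument, assuming the target rate is a whole multiple of the source rate; the function name `interpolation_budget` is made up for the example.

```python
from fractions import Fraction


def interpolation_budget(src_fps, dst_fps):
    """Return (new frames per gap, gap length in seconds, fraction of output that is invented)."""
    if dst_fps % src_fps != 0:
        raise ValueError("this sketch only handles whole-number rate multiples")
    factor = dst_fps // src_fps
    new_per_gap = factor - 1              # frames invented between two real ones
    gap_seconds = Fraction(1, src_fps)    # time between two real frames
    invented = Fraction(new_per_gap, factor)
    return new_per_gap, gap_seconds, invented


for src, dst in [(15, 60), (4, 24), (4, 60), (1, 10)]:
    new_frames, gap, invented = interpolation_budget(src, dst)
    print(f"{src} -> {dst} fps: {new_frames} new frames per gap, "
          f"gap of {gap} s, {invented} of output frames invented")
```

Going from 15 to 60 fps means three invented frames per gap of 1/15 s, while 4 to 60 fps means fourteen invented frames per gap of 1/4 s, so any wrong guess has far more screen time in which to be noticed.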

It’s stop motion not rendered 3d. So creating twice as many frames would be more than twice as much work thanks to the increased hand work precision required of the movements as well as double the number of shootings. And that’s just the problems I can think of getting to 30FPS with no experience doing the work.

Welp this will be my thesis (kind of) finding the limits of this approach…

Personally, I barely notice the difference between the 15 and 60 fps versions

The differences I notice are the artifacts.

Watch the hands: in some sections there was not enough image data to properly recreate the inside of the hand, so you see a bit of clipping or something. At least that’s what I saw in the short example used in this post.

There’s one point where it loses track of the floor, and the studs all slide one position over.

At 1:40, you clearly see the hand disappearing. When played at 15fps, it obviously didn’t happen. That’s why it looks so unreal at 60fps.

Seems like Disney has done something similar to this for their animated movies (even the old ones) while streaming

It really is quite good, but not perfect. At time=1:39, one of the spacemen’s helmets teleports forward.

That’s the biggest problem with AI image processing: when it guesses something wrong, it guesses VERY wrong.

It probably also guesses less wrong, but you don’t notice!

AKA perception bias.

and look at the hands during that period too.

I don’t know why he says several times that there are “no visible artefacts” when there are so many! Almost at each scene cut, and during continuous scenes too.

But when it works well, it is really impressive!

Wonder how bad the artefacts are on the original live footage. Remember it’s been compressed and compiled into a unified video format, uploaded and streamed to you at probably a different frame rate. So the problems could have been introduced after the AI did its pass with “no visible artefacts”, though I doubt it.

I wonder if they use this stuff in anime.

Probably not. Anime is generally done using line art, which is represented as curves defined by lists of points in animation software. Inbetweening is done at the curve level, interpolating the points between key frames for each curve. What this is useful for is stop-motion animation, such as clay, paper cutout, and Lego animation styles, which are done photographically.
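As a rough illustration of what curve-level inbetweening means, the sketch below linearly interpolates the control points of one curve between two key frames. It is a simplification under stated assumptions: real animation packages use richer curve types and easing, and the function name `inbetween` and the point-list representation are hypothetical.

```python
def inbetween(key_a, key_b, t):
    """Interpolate matching (x, y) control points between two key frames.

    t=0 gives the first key frame's curve, t=1 gives the second's.
    """
    if len(key_a) != len(key_b):
        raise ValueError("both key frames must define the same number of points")
    return [
        ((1 - t) * xa + t * xb, (1 - t) * ya + t * yb)
        for (xa, ya), (xb, yb) in zip(key_a, key_b)
    ]


# A two-point stroke that rises between key frames; t=0.5 is the halfway drawing.
halfway = inbetween([(0.0, 0.0), (2.0, 0.0)], [(0.0, 4.0), (2.0, 8.0)], 0.5)
```

Because the points themselves are moved, the line stays crisp at every intermediate step, which is why no pixel-level guessing is needed for this kind of artwork.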

Oh, if Ray Harryhausen could see his work now…

https://www.youtube.com/watch?v=yLVD58lF0jg

That is pretty cool to see, now I wanna see it with the ED-209 from Robocop. Its animations at times were pretty clunky and kinda took me out of the film’s world.

https://www.youtube.com/watch?v=l4r0NLoDdms

They should convert the DAIN result back to 30 or 24 fps so that it could be compared without the weirdness of 60 fps.

Now if only they could make the human acting more lifelike :) Obviously there are limits, but this is a real step forward. I think the real application of this will be improving films made in stop action now.

There is already a thing called mvtools which works with the vapoursynth python library that is integrated with mpv.

That means you can interpolate to 60 FPS at play time. I had that working for a while, but everything kept exploding. The results were pretty good, and it was awesome for anime.

Mvtools is not AI based or anything; it just cuts the video into blocks and tracks their motion between frames to generate the intermediate ones. It did have problems with depth-of-field blur, but anime does not have this problem and the results were near perfect. The main problem is that it ran on CPU only, which limits the number of blocks, but there is a commercial video player that recreated the lib with OpenGL; I don’t remember the name of it.
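For readers who have not met block-based motion tracking, here is a minimal pure-Python sketch of the general idea: take a block from one frame and search a small neighbourhood of the next frame for the best match. This is not mvtools code and does not use its API; the names `sad` and `best_match`, the exhaustive search, and the sum-of-absolute-differences cost are assumptions chosen for illustration.

```python
def sad(frame_a, frame_b, ay, ax, by, bx, size):
    """Sum of absolute differences between a size x size block in each frame."""
    return sum(
        abs(frame_a[ay + dy][ax + dx] - frame_b[by + dy][bx + dx])
        for dy in range(size)
        for dx in range(size)
    )


def best_match(frame_a, frame_b, y, x, size, radius):
    """Search frame_b within `radius` pixels for the block at (y, x) of frame_a.

    Returns (cost, dy, dx) for the lowest-cost offset, or None if no
    candidate position fits inside frame_b.
    """
    height, width = len(frame_b), len(frame_b[0])
    best = None
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            by, bx = y + dy, x + dx
            if 0 <= by <= height - size and 0 <= bx <= width - size:
                cost = sad(frame_a, frame_b, y, x, by, bx, size)
                if best is None or cost < best[0]:
                    best = (cost, dy, dx)
    return best


def frame_with_block(top, left):
    """Build a 6x6 test frame that is black except for a bright 2x2 block."""
    frame = [[0] * 6 for _ in range(6)]
    for dy in range(2):
        for dx in range(2):
            frame[top + dy][left + dx] = 9
    return frame


motion = best_match(frame_with_block(1, 1), frame_with_block(2, 3), 1, 1, 2, 2)
```

Once the offset of a block is known, an intermediate frame can place that block part of the way along its motion vector instead of cross-fading it. The nested search also shows why the block count matters on a CPU: the cost grows with the number of blocks, the search radius, and the block size.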

It was also quite painful to get that working on Debian. I used an Ubuntu PPA that required me to recompile everything that came out of it, plus mpv (as it’s not compiled with vapoursynth support for Debian).

The thing that mvtools did and the AI clearly doesn’t is detect scene changes. Mvtools simply counts the number of blocks that are not found in the next frame, and if that number is above a threshold it just duplicates the frame instead (those blocks are linearly interpolated otherwise). The odd fade effect was present with mvtools too if the threshold was not set well.
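The scene-change rule described in this comment can be sketched in a few lines of Python. This is an illustration of the counting idea only, not mvtools' actual implementation or parameter names; `match_limit` and `max_unmatched_fraction` are hypothetical thresholds, and the per-block costs are assumed to come from some earlier block-matching step.

```python
def is_scene_change(block_costs, match_limit, max_unmatched_fraction):
    """Decide whether too many blocks failed to find a match in the next frame.

    block_costs: best matching cost per block (lower means a better match).
    match_limit: cost above which a block counts as "not found" (assumed value).
    max_unmatched_fraction: share of unmatched blocks that signals a cut (assumed value).
    """
    unmatched = sum(1 for cost in block_costs if cost > match_limit)
    return unmatched > max_unmatched_fraction * len(block_costs)


def plan_gap(block_costs, match_limit=50, max_unmatched_fraction=0.5):
    """Return 'duplicate' across a detected cut, otherwise 'interpolate'."""
    if is_scene_change(block_costs, match_limit, max_unmatched_fraction):
        return "duplicate"
    return "interpolate"


same_scene = plan_gap([0, 3, 200, 12])     # one block lost: keep interpolating
hard_cut = plan_gap([300, 410, 200, 12])   # most blocks lost: repeat the frame
```

If the threshold is set too high, a real cut is treated as motion and the two shots get blended together, which matches the odd fade effect mentioned above.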

http://avisynth.org.ru/mvtools/mvtools2.html

http://www.vapoursynth.com/

Is that banner picture supposed to be comparing something? Both sides are exactly the same frame. See, here’s the thing: if you do frame interpolation, you don’t do any processing of the frames that are already there; you just compute new frames to place between the original ones. So yeah, looking at one of the non-interpolated frames is doing exactly an A-A comparison.

The one on the right is a 60 fps image :)

– Same here – glad it wasn’t just me. Either it’s an A to A compare, or if it is actually an interpolated frame, you’d need to show the two originals the interpolated was generated from, to give it some context of what kind of a job it did.

Piecutte’s comment wasn’t there when I posted, but “vapoursynth” in the video is probably the mvtools plugin I was talking about, you can do that real time.

Interesting stuff, but you are downloading a fully trained network, not the actual dataset used to train that network (which is going to be difficult anyhow due to copyright). So it is not like you can make any tweaks and fully retrain a totally new model to fill in say 60 frames given 16 frames (Lumières) or 40 frames (Edison’s films). I’m going to guess that the above model would have been tuned for optimal performance at 24 frames a second.

The colourisation (colourization for North Americans) is interesting as well (one of the videos from the linked DAINAPP page). This would have cost about 300k per film in the 1980s, using actual humans to aid the computer by selecting each individual fill colour (color for North Americans) for multiple frames. It still looks really bad, but not as bad as it was.

https://www.youtube.com/watch?v=0fbPLR7FfgI

https://github.com/jantic/DeOldify

colorization for north americans, colourization for canadians

Canadians aren’t North Americans?

Congrats, look up Twixtor for After Effects and see what others have been using for over a decade for vector-based motion interpolation. Now you can “blow your mind” for not having googled it. Not to be condescending, but AI (short for better-marketed algorithms) has been around for at least a decade to solve this problem.

Here’s a test I created from slow motion footage shot on iPhone XS

https://www.youtube.com/watch?v=BH-o7k3K6QE

Could we apply this to real life? It seems to me that there are a lot of events in the world that could be improved with a little interpolation.

With the proper interpolation I could last at least 4 mins with the ladies

It needs to be cut-aware: when the frames differ by a large margin, it should just let them be different. As it is, cuts end up with a strange soft wipe effect that I don’t like. Everything else is great about it.

What Animaniacs (1993) WiLL Look Like in 60 FPS (i Wonder How Ai Works)

Noice, MAM!!!
