The short answer to the question posed in the headline: yes.

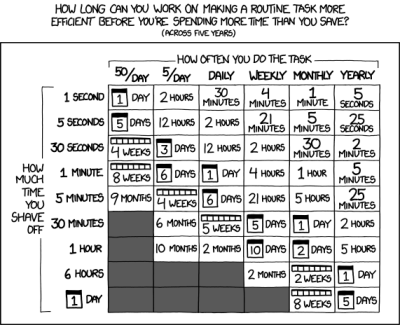

For the long answer, you have to do a little math. How much total time will you save by automating, over some reasonable horizon? It’s a simple product: the time saved per occurrence, times how many times per day it happens, times the number of days in your horizon. Or skip out on the math because there’s an XKCD for that.
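That product fits in a few lines of Python. This is only a sketch: the function name is made up for illustration, and the five-year horizon is an assumption borrowed from the XKCD table.

```python
def time_saved_hours(seconds_per_occurrence, occurrences_per_day, horizon_days):
    """Total time saved over the horizon, in hours."""
    total_seconds = seconds_per_occurrence * occurrences_per_day * horizon_days
    return total_seconds / 3600


# Assumed horizon: five years of 365 days, as in the XKCD table.
FIVE_YEARS_IN_DAYS = 5 * 365

# Five seconds saved, five times a day.
print(round(time_saved_hours(5, 5, FIVE_YEARS_IN_DAYS), 1))  # 12.7
```

Any automation effort that costs fewer hours than the result breaks even within the horizon.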

What’s fun about this table is that it’s kind of a Rorschach test that gives you insight into how much you suffer from automatitis. I always thought that Randall was trying to convince himself not to undertake (fun) automation projects, because that was my condition at the time. Looking at it from my current perspective, it’s a little bit shocking that something that’ll save you five seconds, five times a day, is worth spending twelve hours on. I’ve got some automating to do.

To wit: I use pass as my password manager because it’s ultimately flexible, simple, and failsafe. It stores passwords on my hard drive, and my backup server, encrypted with a GPG key that I have printed out on paper in a fireproof safe. Because I practice good cookie hygiene, I end up re-entering my passwords daily. Because I keep my passwords separate from my browser, that means entering username and password by cut-and-paste. There’s your five seconds, five times per day. Maybe two seconds, ten times, but it’s all the same. It shouldn’t take me even as long as twenty minutes to whip up a script that puts username and password into selection and clipboard for one-click pasting. Why haven’t I done this yet? I’m going to get on it as soon as I’m done with this newsletter.
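A script along those lines might look like the sketch below. It is not the author's script. It assumes `pass show` prints the password on the first line, that the entry also carries a `username:` line (a common convention, not something pass enforces), and that `xclip` is installed on an X11 desktop. The function names and the entry name `web/example.com` are hypothetical.

```python
import subprocess


def parse_entry(text):
    """Split `pass show` output into (username, password).

    Assumes the password is on the first line and the username sits on a
    later line of the form "username: alice".
    """
    lines = text.splitlines()
    password = lines[0] if lines else ""
    username = ""
    for line in lines[1:]:
        key, sep, value = line.partition(":")
        if sep and key.strip().lower() in ("username", "user", "login"):
            username = value.strip()
            break
    return username, password


def copy_to(selection, text):
    """Put text into an X selection ("primary" or "clipboard") via xclip."""
    subprocess.run(["xclip", "-selection", selection],
                   input=text.encode(), check=True)


def load_credentials(entry):
    """Username goes to the primary selection, password to the clipboard."""
    shown = subprocess.run(["pass", "show", entry],
                           capture_output=True, text=True, check=True)
    username, password = parse_entry(shown.stdout)
    copy_to("primary", username)
    copy_to("clipboard", password)


# Hypothetical entry name; uncomment to try it on a real password store.
# load_credentials("web/example.com")
```

With this arrangement a middle-click would paste the username and the usual paste shortcut would paste the password.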

But this begs the question. If you spend up to twelve hours on every possible 25-second-per-day savings, when will you ever get your real work done? Again, math gives us the answer. (One eight-hour workday / 25 seconds) * 12 hours (pessimistically) of labor = 1.58 years before everything that needs automating will be. Next week’s newsletter might be a little bit delayed.
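The figure can be checked by dividing the workday into 25-second slices and charging twelve hours to each. A sketch, assuming the resulting labor is counted around the clock (24 hours a day, 365 days a year); the variable names are illustrative.

```python
WORKDAY_SECONDS = 8 * 3600   # one eight-hour workday
SLICE_SECONDS = 25           # each automatable chunk of the day
HOURS_PER_PROJECT = 12       # pessimistic effort per automation

projects = WORKDAY_SECONDS / SLICE_SECONDS    # 25-second slices in a workday
total_hours = projects * HOURS_PER_PROJECT    # labor to automate them all
years = total_hours / 24 / 365                # round-the-clock years

print(f"{projects:.0f} projects, {total_hours:.0f} hours, {years:.2f} years")
# 1152 projects, 13824 hours, 1.58 years
```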

What do you see in the XKCD “Is it worth the time” table? Automate more, or step back from the cliff edge?

I have devised a rule for myself: no matter how long it takes, and how often you do it, never automate anything that is fun or pleasant to do. This is one reason why I never got a reflow oven: applying solder paste and reflowing boards is a chore, soldering the components by hand or using a hot air gun/hot plate is fun (to me).

+1 on the fun part.

That being said, what you find fun may not be for someone else and thus at least share knowledge about automating it with others that could benefit, too! :D

Sorry, I may have just reported this. Blame fat fingers on a mobile phone :-(

Another thing that should never be automated: things where paying attention is part of why you do it. My mother writes down the temperature and precipitation every day. She could get a device to do this for her, but then she’d stop paying attention, and lack the connection to the data. Similarly, I wrote a few scripts to automatically log my weight as I was dieting a few years back, and noted that my progress was slower than when I manually wrote it down, simply because I wasn’t paying as much attention.

I’m finding it increasingly difficult to find Yaks around here.

Put a different way, I need to step away from the cliff!

That should be hairy Yaks. I am terrible at making jokes!

“Yak Shaving Day” is a Holiday celebrated by many senior administrators and operations people.

In terms of choosing when to automate tasks, I simply ask myself if it needs to be documented for someone else to get something working later. If it is going to take 4 hours repeating myself to colleagues or more than 1 support email, then a script with inline contextual documentation is convenient. Wrapping an ssh session in a Tcl/expect shell instance can also make deployments relatively painless.

I wish there was something standard like the https://letsencrypt.org installer for commercial deployments.

;-)

See my comment below.

I use three criteria:

(1) do I have to do this more than once?

(2) is it prone to error if I do it “by hand”?

(3) is it too complicated (or boring!) to do by hand.

Yesterday I wrote a Lua script to compile a large set of files in different folders, with different filenames and content for some of those files in each folder. If I did that by hand, the probability of one or two errors would be high. And then I discovered I had to do it all over because one of the source files had an error. Thanks to the script, it only took a few seconds to redo.

What Mark S says ….

Some tasks, even if they only need to be done once can be more time consuming than automating them.

I have a task (data updates to a reference table) that needs to be repeated about once every 3 to 5 years. By hand, it would take me weeks of effort and be prone to many, many errors. It was tedious enough just creating the formatting of the file, it would be soul-destroying to do the job entirely by hand.

By automating (even though it took several days to write and debug the script to my satisfaction), I can ensure that the task can be completed, error free in under a couple of hours.

Oh, and @Deon van Schalkwyk – I can email you a few hairy (or fresh shaven) yaks if you wish.

Items 2 and 3 are very closely related. Agree on those heavily.

I used to do a lot of component tests that were very fiddly and easy to mess up. Automating those tests probably didn’t save a lot of time, but the quality of the results was a LOT better.

Also, tests got run more often because it was easy to do so. I caught a few major “Aw Shits” because it was easy to run the tests.

The prone-to-error / million small details case is another good one! That’s like a time multiplier; if you have to do the same thing twice because you missed a fiddly bit, then it’s twice as valuable to automate. And times howevermanymore if it ends up publishing something public-facing.

I used to have that XKCD chart printed and hanging next to my monitor at work for years – but ignored it most of the time :)

The one crucial aspect it doesn’t consider:

By automating, will I have to learn a new skill or understand something new? If yes, will it be useful later? If yes again, spending more time might be worth it.

My first automation scripts took me way too long to write, but now I don’t hesitate to tackle substantially larger projects anymore. All thanks to working through the initial hurdles and “toy” projects.

Learning how to use ReGeX.

I agree here. I see the chart more as a baseline. If the amount of efficiency gained is enough on its own, then usually I’ll automate. If it is not so clear cut but knowledge can be gained, then for sure that weighs even more than raw time saved and I’ll probably automate anyway!

You nailed it @Peter.

The benefits calculation in this neglects indirect payoffs – actually learning how to get practical results from a new mode of programming, running the data through an unfamiliar method of analysis for comparison, or getting off your tail and building the labor-saving device or instrument that you’ve been thinking of.

These are very non-linear since the larger part of the benefits accrue to future challenges rather than the immediate task at hand, but are what make many of these projects worthwhile.

*Also “To wit:”

Additionally, the automation will probably involve steps that you now know exist in that process, and can be cut/pasted into new work.

Lots of times I do something and forget the exact arcane invocation needed to accomplish the task, but I also know that the step was in the middle of a previous project and I’ll go cut/paste the relevant section into the new project.

Many times I end up refactoring the code (changing variable names and coding style to correspond to the new project, without changing the action), but it’s still more efficient than typing in new code because of typos: the original code has no typos, and the time saved from that is substantial.

For example, to start an application and then enter keypresses (to make it go full screen, for example, or to load the previous file) is a non-trivial task involving xtoolwait, sleep, and xdotool. It took many rounds of experimentation to get that to work (for Firefox).

But now it’s done and I can cut/paste that code to use it in any future application.

Nit pick: Surely COPY and paste rather than “cut”? Cut suggests that the data is removed from the source.

There are also tasks where you don’t realize how much time automating will end up saving.

This isn’t automation, but I just built a tailstock for an indexing head for gearcutting and it allows me to feed six times faster than I could previously, entirely repaying the time it took to fabricate with a single gear. I did not expect anywhere near this much improvement, so it was far more useful than I thought it would be.

For tasks done infrequently, it’s often efficient to write down the steps needed to do the task. For example, I have all the steps needed to configure a linux system written down in a text file. It takes about 8 hours of downloads and system changes to make a linux system friendly, but I’ve got a list of “do this next” steps that I can run down. Any time I add a really useful app or change a config setting, I make the corresponding change in the text file. I configure a new system infrequently, but the process is really fast when it happens and I don’t forget steps.

I have a similar list of instructions for starting a new git repository. I do that infrequently and struggle to remember the steps each time, but I’ve got the commands listed so it’s just a cut/paste whenever it needs to be done.

I like to have a particular workflow on my computer: workspace 1 holds the browser, E-mail, and a file explorer, workspace 2 holds 3 shell windows, 1 explorer of the menu system, and 1 explorer onto my current work dir, and workspace 3 holds documents and a spare unsecured browser.

(Really useful and fast to know that documents are on workspace 3, so CTRL-ALT-right switches to a datasheet instantly, alt-tab lists the windows in order, and so on.)

Getting all those windows up and positioned was taking most of a minute every morning at login time, so I automated the system. There’s a directory “startup” on my system containing scripts that are run in order (named 10-xxx 20-xxx and so on to enforce the execution order and allow me to insert scripts in between), and each script is responsible for configuring one aspect of the window setup. All done by command-line commands to switch workspaces, start apps, and so on.
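The numbered-script scheme can be sketched as a small runner. This is an illustration, not the commenter's actual setup: the directory location and the function names are assumptions, and the scripts themselves would hold whatever workspace-switching and app-starting commands you use.

```python
import os
import subprocess
from pathlib import Path


def ordered_scripts(directory):
    """Return the executable files in a directory, sorted by name.

    Names like 10-browser and 20-shells sort into execution order, and a
    new 15-something slots in between without renaming anything.
    """
    return sorted(
        (p for p in Path(directory).iterdir()
         if p.is_file() and os.access(p, os.X_OK)),
        key=lambda p: p.name,
    )


def run_startup(directory):
    """Run each script in turn; stop at the first one that fails."""
    for script in ordered_scripts(directory):
        subprocess.run([str(script)], check=True)


# Hypothetical location; adjust to wherever the scripts live.
# run_startup(Path.home() / "startup")
```

Sorting by name only gives the intended order if the numeric prefixes all have the same width, which the two-digit names in the description do.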

You are so close! What if those steps were written down in an Ansible config, or Chef recipe? You could push a button and walk away; in 45 minutes your fully operational battle station would be at your command.

Will it take 12 hours to learn enough Ruby to write a chef recipe? Maybe, but you only write it once. You probably build new systems more often than that.

Yes, however, since you do this so infrequently, at least some of the packages, commands, or configurations will have been changed by someone else and your script fails to execute. Then you’ll spend the next three days backtracking, re-doing, and figuring out what went wrong and how you can make it work again.

So you might as well do it by hand anyways.

Why do it again, base images are joy.

Because you want to keep up with the times?

In my experience very few of those sorts of packages are that unstable. Most packages add features over time, but don’t break CLI compatibility as they do so. So he could easily expect >95% success right out of the box, and maybe a few minutes of troubleshooting.

A build management system like Chef doesn’t mean writing a 1200 line shell script to install 100% of everything. It usually means you’ll build the system using a runlist containing dozens of recipes; recipes that are themselves open source and readily available from supermarket.chef.io. These recipes are also maintained (like everything FOSS, add “more or less” as needed). Many recipes solve issues like distro independence, so you can even try things out on different platforms. You can install the most recent versions, or you can version constrain your recipes to the known good versions you need. The default attributes are typically set up to match a fresh install; by adding attributes that override the defaults you can customize things, often in a version independent manner.

Plus, if you’re ambitious, you can run your recipes in a test kitchen, where you can try them out in just about any container environment you have available (like vmware, virtualbox, docker, openstack, etc.), you can even abstract away your virtual test environment using Vagrant.

If you need to repeat a build, there’s virtually no reason to do it by hand.

Ansible, chef, blah blah.

PWalsh is already writing down the commands in a text file? If the comments are commented out, later on you can just run “sh ./whateverfile”. (That’s what I do.)

The automation tools pay off when you’re doing the meta-automation frequently enough.

Until then, a well commented batch of scripts is better than nothing. And the combination of good notes and runnable scripts is awesome.

“To wit: I use pass as my password manager because it’s ultimately flexible, simple, and failsafe.”

“fail-safe” made me chuckle :)

Pass? I use a Rust implementation of Bitwarden running on my server with this functionality already built in. So reinventing the wheel is also a consideration. But then there should be a table about how long to look for an existing solution and how much time to invest in adapting the found solution to your specific needs.

Betteridge’s law of headlines, “Any headline that ends in a question mark can be answered by the word no.”

“Should we strive for world peace?”

“Should we cure cancer?”

Those clearly aren’t headlines

If they were, the actual headline would be something like “Russia is threatening WW3 by invading eastern Europe again – should we strive for world peace?”

Or, “New discovery: a gene transfer from the naked mole rat to humans makes our cells extremely resilient to DNA copying errors but makes 50% of children autistic – should we cure cancer?”

Those aren’t headlines; who is the “we?”

Military leaders aren’t writing headlines, so it isn’t comparable to this story, where the author asks about something a tech media author might regularly ask about.

And your examples are from the editorial page anyways. Even though editorials have “headlines,” the more general word “headlines” is short for “news headlines.”

No

More broadly, any question can be answered by the word ‘no’. It may not be correct, but it IS an answer.

How old are you?

No

:) Well done, sir!

What are the odds of this working?

50/50. It will either work or it won’t.

Doesn’t matter if there’s a 90% chance of it failing *if you only have one opportunity* to do the thing. The odds are 50/50, it’ll work or it won’t.

If it’s a life or death thing, it works so you don’t need to do it again, or it doesn’t work so you’re dead and can’t do it again. 50/50

That’s an interesting hypothesis; that half the things people ask about are actually true!

But even Socrates knew we are not nearly that wise; our ignorance is nearly complete! Almost anything that we think up is wrong. It takes a lot of work just to get from totally wrong to mostly wrong, at which point you’ve uncovered some profound detail.

Simplistic and too narrow and shallow analysis of ‘automation’.

Most automation is applied to the low-hanging fruit as it has the most immediate ROI; but this probably will not implement the most effective changes. Process automation is not necessarily the mechanization of a process. Robotics can be the automation of mechanical process, or just support the process, or simply exist to exist (no short-term gain).

The process of automation is important only to the engineer. The product of automation is important to the society, where the principle is to remove the human from the process to increase production yield and/or to increase product reliability.

Your extrapolation from reducing one user’s mouse-clicking to the overall role of automation across the arc of human history exhibits more than a little bit of scope creep.

Maybe he automates scope creep?

So if I practiced good cookie hygiene and had to enter usernames and passwords multiple times per day it would probably be worth my while to create a Lego Technic contraption to operate my Mooltipass for me with a single button

Now you’re talking!

In general I agree with you, but I also include the “stress factor” and the “just shot myself in the foot factor” as part of the economic calculation of automation — meaning, even if it is only used occasionally, but the cost of screwing something up is SO HIGH that I get stressed even thinking about taking that next step, then it is worth automating the step, and writing in boundary checks and all sorts of stuff to make sure I do not make a total F*** up (I do the work inside a sandbox before deploying BTW). Yea, this has slowed down a couple of things, but at the end of the day I am a LOT less stressed.

There are also places where the calculation breaks down… I automated some things in one of my family’s businesses not because I wanted to cut the people out, or because it was more cost effective, but because of the training factor: it would take us months to train someone to do certain operations consistently well enough to trust them to be unsupervised. A number of times our recent trainees decided after working for a while to branch out on their own and compete with us. None of them understood what it takes to run a business, the overheads (where you have to amortize cost of electricity, gas, as well as raw materials, that you need to have a CPA, etc.) so that at the end of the day you are making any profit at all. So typically within 6 months to a year of branching off on their own they came asking for their old job back because we were paying them twice what they were making on their own… Needless to say, we never took any of them back. It was cheaper for me to spend way too much time automating simple tasks than it was to occasionally find, hire and train people to do the meticulous work.

One last thing. We paid all of our people based on piece work, and usually after a couple of hours of working with them I could get them making more than a minimum living wage. After a few weeks of teaching them lots of tips and tricks most were making 2 or 3 times that, assuming they had the hand/eye coordination. The experts were good enough to make twice that (meaning 4 to 6x living wage, but they were fast and on their game when they worked). Yea, you can spend WAY too much time automating simple tasks, but sometimes there are other factors than just your time in the automation.

Two things: It sounds like amortize doesn’t mean what you think it means; and

You’re describing a bagel bakery.

If you have to ask, you are NOT a Hacker.

More accurate xkcd Automation info graphic:

https://xkcd.com/1319/

It’s the maintenance costs that get you.

Yeah, right? This is the next-level game.

My experience (system admin of a one-user system for 25 years) is that the more complicated the tools/solution, the more maintenance is required.

I spent less time porting my rc.local file from late 90s Slackware to early-2000s Centos, to first-generation Ubuntu, to mid 2010s Arch than I have trying to get systemd to fire a script when the freaking WiFi is connected to my home network. (Still unsolved.)

Again, single user (now-grey beard) experience — probably doesn’t compare to kids these days with their computers all whizzing around up in the cloud… :)

This is the reason why the answer is “no” most of the time. There are things one can automate that do indeed end up working implausibly reliably with zero maintenance – those can be worth the effort; a PIR-sensor controlled light that Just Works for the next twenty years or so might not do all that much for you when it replaces a manual switch, but nonetheless it’s quite convenient for basically zero maintenance.

However, most modern automation (whether code or hardware) is absolutely NOT like that. Code will start rotting on you the second you commissioned it, and so will everything else supporting it, be it incompatible changes incurred by a new version, changed dependencies, OS updates, changes in the cloud, or just having to redo everything due to failing hardware you cannot replace 1:1 anymore. And hardware will be no different – staying with the previous example, perhaps you thought that a PIR is way too dumb for 2021 and you’d rather go with some smart home gear; yeah, well, no, that’s game over – first, you’ll never get it to actually work juuuust the way you wanted it, and second, you’ll spend all of whatever spare time you have left trying to figure out why that light that you could turn on remotely yesterday no longer responds today.

It’s a fool’s game. Only truly stupid-simple things that are guaranteed to remain maintenance free are worth the effort – or laborious things that you do actually do all the time.

Is it “automation” when other humans do it? If you write down the instructions for another human to follow, how is that any different from computer software? Humans are most certainly sophisticated computing machines.

Of course, and we’re just full of bugs.

“raises the question.”

“begs the question” is something else entirely.

Incorrection!

You are referring to a “lesser used and more formal definition”, and claiming that the normal English usage is wrong.

https://www.merriam-webster.com/words-at-play/beg-the-question

He’s right, though. You’re just arguing.

It might be a poor idea for you to reply to comments, because your natural tendency is to argue, which is toxic and bad for both you and the site.

Is that a grown up Kid Vid from the Burger King Kids Club in the featured image?

One nice thing with using a password manager coupled to the browser (I use the embedded one in Firefox) instead of copy/pasting is the inherent protection against phishing. The real websites I have an account on will have the fields auto-filled with the characteristic yellow tint. If one day the auto-fill doesn’t work, I know something is wrong and I will carefully look for a typo in the domain and check the certificate. Everybody, sometimes, is tired, in a rush, or doing 12 things concurrently and can be tricked by a dodgy link.

always automate so other people know how to do it too.

Several issues with that, though.

1) No automation is applicable in an unchanged form anywhere else by anybody else. The daily equivalent of this that everyone has experienced is that there is no such thing as a tutorial you can follow exactly to solve a problem you have – somewhere before you’re done it will stop applying to you, and then you’re either savvy enough to figure out on your own what needs to be different, or you’re utterly hosed.

2) Automating something is the action by which something that everyone knew how to do becomes something that only you know how to do (as soon as the automation stops doing it for you and everyone else – not if, but when), which is rapidly turning into “nobody, not even you know how to do any longer” because who the heck remembers a year later what this thing does exactly and how it is even supposed to be doing it.

Recently, for a personal project, I had to use some random freeware that would take a bitmap of a character font I drew up and generate an array to display on an OLED. The problem, however, was that the array was ordered for the way the accompanying library renders images to compatible displays, which was different from how my display worked. So either I had to spend time rewriting bits of a library to bodge it into working, or, more simply, I could program an ATmega32U4 to act like a USB keyboard, copy the output from the freeware program, paste it into a new Notepad window, and go through each element and reformat it to work with my library. I went with the keyboard. It only took me 10-15 minutes to write the ATmega program and test it on a sample array to make sure it worked, and then I let it rip through all 256 characters, which only took a few minutes, typing the formatting out way faster than I could’ve manually. It was fun to watch too, as it zipped through large blocks of the array, cutting and pasting as it went.

I automate a lot, using vba. It saves a lot of time every month for some things, and for other things it saves an hour a day.

It occurs to me that optimizing the organization (of parts, tools, etc) could count as a form of automation, given how small the relevant time saving increments are. Like, instead of “automate” it’s “optimize” but use the same chart.

For example: if I reach for and use a particular tool — say, a drill — once per day, and I can shave 1 second off the time it takes to do so, that’s worth spending up to 30 minutes of time in re-organizing whatever is needed to effect a (lasting) 1 second savings in doing that task. Maybe that means hanging it on a wall instead of storing it in a drawer across the room, or maybe it just means making sure the drill bits are organized so I don’t have to look for the right bit for quite as long. Even saving as little as a second can make a lasting difference if the task is long-lived enough.

Makes me think in a lot of different directions.

We have such a system implemented. It’s called 5S. Everything is organised and documented. It is checked regularly and graded. Part of the paycheck calculation takes that grade into account. Higher the grade, more money for the employee.