If we were to think of a retrocomputer, the chances are we might have something from the classic 8-bit days or maybe a game console spring to mind. It’s almost a shock to see mundane desktop PCs of the DOS and Pentium era join them, but those machines now form an important way to play DOS and Windows 95 games which are unsuited to more modern operating systems. For those who wish to play the games on appropriate hardware without a grubby beige mini-tower and a huge CRT monitor, there’s even the option to buy one of these machines new: in the form of a much more svelte Pentium-based PC104 industrial PC.

In A World Of Cheap Chips, Why No Intel?

Taking a small diversion into the world of PC104 boards after a recent Hackaday piece, it was fascinating to see which 486 and Pentium-class processors and systems-on-chip are still being manufactured, but also surprising to find just how expensive the boards containing them can be. When an unexceptional Linux-capable ARM-based SBC can be had for under $10 it poses a question: why are there so few corresponding x86 boards with SoCs giving us the commoditised PC hardware we’re used to running our mainstream distributions on? The answer lies as much in the story of what ARM got right as it does in whether x86 processors had it in them for such boards to have happened.

Imagine for a minute an alternative timeline for the last three decades. It’s our timeline so the network never canned Firefly, but more importantly, the timeline of microprocessor evolution took a different turn as ARM was never spun out from Acorn and its architecture languished as an interesting niche processor found only in Acorn’s Archimedes line. In this late 1990s parallel universe without ARM, what happened next?

When Intel’s Pentium was the dominant processor it seemed that a bewildering array of companies were fighting to provide alternatives. You’ll be familiar with Intel, AMD, and Cyrix, and you’ll maybe know Transmeta as the one-time employer of Linus Torvalds but we wouldn’t be surprised if x86 offerings from the likes of Rise Technologies, NexGen, IDT, or National Semiconductor have passed you by.

This was a time during which RISC cores were generally regarded as the Next Big Thing, so some of these companies’ designs were much more efficient hybrid RISC/CISC cores that debuted the type of architectures you’ll find in a modern desktop x86 chip. We know that as the 1990s turned into the 2000s most of these companies faded away into corporate acquisition so by now the choice for a desktop is limited to AMD and Intel. But had ARM not filled the niche of a powerful low-power and low-cost processor core, would those also-ran processors have stepped up to the plate?

It’s quite likely that they would have in some form, and perhaps your Raspberry Pi might have a chip from VIA or IDT instead of its Broadcom part. All those “Will it run Windows?” questions on the Raspberry Pi forums would be answered, and almost any PC Linux distro could be installed and run without problems. So given that all this didn’t happen it’s time to duck back into the real timeline. What did ARM get right, and what are the obstacles to an x86 Raspberry Pi or similar?

Sell IP, Win The Day

If you know one thing about ARM, it’s that they aren’t a semiconductor company as such. Instead they’re a semiconductor IP company; you can’t buy an ARM chip but instead you can buy chips from a host of other companies that contain an ARM core. By contrast the world of x86 has lacked a player prepared to so freely license their cores, and thus the sheer diversity of the ARM market has not been replicated. With only a fraction as many x86 SoC vendors as there are vendors sporting ARM, there simply isn’t enough cheap competition for those ten dollar boards.

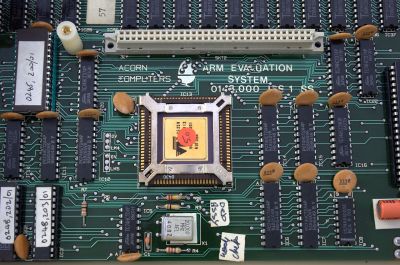

Then there is the question of power. There is a tale of the very first ARM chip delivered to Acorn powering itself parasitically from the logic 1 signals on its bus when its power was disconnected, and whether true or not it remains that ARM processors have historically sipped power compared to even the most power-efficient of their x86 counterparts. Those x86 chips that do reach comparable power consumption are few and far between. Thus those small x86 boards that do exist will often have extravagant heatsink needs and power consumption figures compared to their ARM equivalents.

Bringing these two together, it creates a picture of a technology that’s entirely possible to build but which brings with it an expensive chipset and support circuitry alongside a voracious appetite for power, factors which render it uncompetitive alongside its low-power and inexpensive ARM competition. If there’s one thing about the world of technology though it’s that it defies expectations, so could the chances of an accessible x86 platform ever increase? Probably not if it were left to AMD and Intel, but who’s to say that an x86 softcore couldn’t tip the balance? Only time will tell.

Header: Oligopolism, CC0.

There is a component missing in the hypothesis stated above.

What if Linux had never come along?

Would there be a place for any CPU that doesn’t run Windows? ARM, RISC or otherwise.

If Linux hadn’t come along, maybe IBM wouldn’t have ditched OS/2.

More to the point, there would still have been the BSDs. I’m quite sure they would have gotten a fair bit of the attention that Linux got instead.

IBM fired the OS/2 developers at Microsoft’s demand; the epic failures in charge capitulated to greedy Bill’s monopoly demands and killed off the far better OS. The reason Windows is here isn’t because it was better, quite the opposite… the absolute bottom of the barrel OS is here because of unfair business practices, from the very first deal they did. Daddy Gates taught Bill how to take over companies and destroy the competition, never for a moment how to make a functional product. Since that was never their goal, it never happened.

I agree 100%. All they cared about is the GREEN BANK. It’s all about making money and more money, the greed and lust.

Only an extreme fanboy would call Windows “the absolute bottom of the barrel OS”.

Agreed. I used OS/2 professionally in the late 90s and found it to be a fine OS, but it definitely wasn’t vastly superior in the way its fans claimed. I know I preferred Windows NT at the time.

On the server side, Windows was a real pain. Windows NT needed rebooting every couple of weeks… and then there was the stack of user licenses I had to deal with. Finally suggested a Red Hat server. They went for it!!! No more rebooting and the license headache went away. Maintenance required went almost to zero. That system just ‘ran’ and from then on we used Linux on the back end. Users were happy (they just saw it as a file/print server from their Windows clients). And management was happy as there was no more bleeding financially other than a hardware upgrade down the road.

I liked OS/2, but management were heavy Windows users… So stuck with M$. In hindsight that was a good idea as OS/2 fizzled.

—

As for x86 SBCs, we built a protocol translator using an x86 SBC back then that used a stripped down version of our software that was running on PCs. Problem was later there wasn’t an ‘upgrade path’, so the product just went out of date. We tried to go to a Linux based ARM SBC for it, but it was just too much rework, even if it was PC-104…. Later we did use an ARM 104 SBC for another product and that worked ‘ok’. Problem there was working around a non-realtime OS (Linux) for what we wanted to do at the time. Couldn’t use Vrtx or some other RTOS as we still needed Ethernet and Disk services (like what Linux gives you out of the box) which the BSP didn’t give you. Anyway. Nuff said. Industry moved on, and ARM (and maybe RISC-V) seems to be the trend … We just have to move with it!

I’m no fanboy, but I do have personal experience with OS2 in the early 90’s. By comparison Windows, at that time, absolutely sucked. Sorry, that’s the truth.

Back in the day, I watched OS2 deliver four video streams with a ‘486 when a Windows box with the same specs could barely do one. The irony was that even the native Windows app software we had at the time ran better and faster on OS2 than it did on Windows itself.

OS2 was lean, fast, and managed software malfunctions in a disciplined manner. In OS2 if things really went south, a window might crash. When things went south in Windows, you usually ended up having to reboot.

I’ve run Linux for years now and have no horse in the OS2 vs Windows race. But if OS2 hadn’t been squashed and its development had continued, I’d probably be running it now.

It objectively was, though. That’s not fanboyism.

Well if it is objective, then you can give a detailed explanation of why and for what use, and not just make an assertion

when did Microsoft fix the memory leaks? For many years you had to reboot the OS almost daily to keep it running. I saw many systems with timed reboots between midnight-3a and there was a famous LAX communications system which was ported to Windows and had mandatory reboot every 30 days but a NOOB thought all was running well so didn’t need rebooting and CRASH at day 38 or 39 or so. LAX went dark with all flights for over an hour. This was SOP for Windows into the early years of 2000. And I left Windows in the mid 90s BECAUSE OF UNRELIABILITY. Ctl-Alt-Del was made famous by Microsoft, nobody else.

I loved OS/2, it was an incredible OS. If one of my VMs stopped running, I could kill it and not affect the others. It was a real preemptive multitasking multi-threading OS. Imagine how awesome it would be today vs Windoz10. But the best tech doesn’t always win. The court of opinion is led by the uneducated. Beta vs VHS, Voodoo vs NVIDIA. Voodoo had superior video quality but fanboys wanted speed. They had no idea that 120fps on an 80Hz CRT was no different than Voodoo’s 80fps.

And the reality was that the monitor was the weak link in the chain as the tech could only produce a little more than 40fps. Even the expensive for the time, 120Hz monitors could only reach about 65 fps. The bigger 120 and 140 were contentious bragging rights that drove the market. The product with the best marketing wins.

Never mind its ‘genesis’ in the calculated theft (which seems to be the general philosophical bent of Microsoft, i.e. ‘TCP/IP stack lifted from BSD as well’, etc.) of CP/M from Gary Kildall – who even while drunk coded circles around Gates (or perhaps anyone else MS has thrown money at over the years to further mine the ‘nix ecosystem for anything that might serve as a ‘bulwark’ as things begin to topple, i.e. ‘oh crap – we need to be able to do container dev…let’s hire some Google people for a million bucks each…, then set to work on closing down the source once they get pissed at how mind-bogglingly insecure every single aspect of the software has been for decades – and has perhaps only gotten worse -, and leave for fear of being lumped into the same heap of opportunistic, zero-integrity/credibility creeps ala ‘Kevin Mitnick working the .gov scene’ for the remainder of their careers…) – and were it not for Kildall’s premature death (which, yeah, maybe it would have worked out better for everyone, obviously including him, if he could have eventually gotten loose of the drink…), he may have even worked his way full-circle into something amounting to ‘open-source firmware that can’t be absorbed by a certain chip manufacturer/bloatware alliance..’ i.e. that isn’t beholden to absurdly anti-intellectual property, and goes ‘Full-Franklin’ in its opposition to patent-trolling, privacy-invading, expensive af trash.

My first experience with OS/2 was in 1992, just before I wiped it and tried this new Linux thing (I already was working with UNIX by then so Linux was more familiar). Linux stayed in the hobbyist realm for a number of years until the late 90s, so there was plenty of time for OS/2 to take over if it was able to. I think the dominance of Microsoft and thus Windows in that era was what doomed OS/2.

Correct, it was the vast market control Microsoft had which stalled OS/2 growth. EVERY PC vendor was required to pay Microsoft whether or not MS DOS or Windows was installed. BeOS had the same problem and bailed quickly since they didn’t have the capital IBM had. In Germany, where Microsoft had far less control, the top PC seller in the country was pre-loading OS/2 and in 4 months sold millions of copies, and OS/2 thrived along with some German ISVs. But it didn’t last long since Germany is not enough.

I was able to get OS/2 running well on a number of computers and also with a UNIX background I loved the fact there was a GNU subsystem for OS/2 and the XfreeOS2 X server. That OS, OS/2, ran DOS really well, ran Windows 16/32s really well, ran *NIX really well, ran Java really well along with many others. And at one time I trimmed it down to just the PM GUI system and kernel so it ran in just a few MB of RAM so it even scaled down very well. Blazed on dual CPU systems and ran circles around NT on any number of CPUs.

The years of the late 90s through 2010 were almost like dark ages as Windows was the only thing getting press coverage.

Linux was there growing and growing and showing up embedded in things like routers, TiVo, etc. But by 2010 it was pretty obvious Linux was the future and Windows would have to figure out how to exist with it or slowly die. ARM and the rPi have greatly spread the understanding of Linux, which is why Microsoft tried to attack it and the robotics sector so often found using it.

By the late 90s, Linux was getting a lot of coverage – VA Linux and Red Hat had their IPOs, you could buy distros off the shelf in Circuit City or Comp USA.

True, and in the geek sector there were places to gather and stay connected. There was Corel Linux and the Linux Desktop Summit and Red Hat, etc., but the general population and most non-programmers had no clue. I was using desktop Linux 100% by the late 90s but it was still a battle explaining to friends, relatives, etc. that the future was not Windows, that Windows was not even close to being the best OS, etc.

Now, I can go to a robotics club and everyone knows of Linux and most use it. Maker spaces too, and even in general conversation if I say I’m building a Linux system for x, or to try booting Linux to see if your hardware is working, people generally know something about it. It was not so much that way through the early part of the century. Calling it the “dark ages” was over the top now that you pointed out the things which were happening.

By the way I still have OS2 in the box! IBM on the cover.

I too still have a couple of in-the-box copies of OS/2 along with a 3′ tall stack of books in the shed.

I suppose I could install eComStation onto the AtomicPi and play with it some. Of all the tech in OS/2 I was hoping we’d see OpenDoc and the WorkplaceShell ported to Linux. The workflows that desktop system provided were amazing, and the consistency… When things are inherited from base components, intuition takes over when new components are added and stuff just works the way you expect + extras.

Also I still have Widows 3.1 in the shrink wrap! LOL

For use with Ferengi I take it. ;-)

I did mean Windows 3.1, I like the Star Trek reference!

Ferengi was the code name for OS/2 for Windows, a version of OS/2 which came without Windows pre-loaded and was less expensive as it did not require IBM to pay Microsoft for every copy of OS/2 sold. You used your standard Windows 3.1 install disks.

When people write Microsoft with a dollar sign, I can’t help but think that they are too moved by hatred of success for their opinions to be taken as unbiased.

For almost 2 decades I looked at that usage as showing they were far more motivated to protect their dollars than to create good ….. no, better technology. It’s also been bandied around for decades that they are far more a marketing company than a technology company. Those who have never seen or worked with better don’t get it, and call it being jealous, being Microsoft haters, etc etc. Facts are, they built inferior versions of things and with their command of market share forced them on users in order to crush their competition. They even resorted to naming things similarly, which is yet another marketing trick to steal mindshare.

Most techies don’t give a crap about the money they pulled in, but what they used that money for was again obvious and detrimental to technological advances. Funding SCO through back channels…. paying ISPs to push Internet Explorer over Netscape while paying off Netscape contracts. And the worst, funding their partners to join ISO to stuff the ballot when Microsoft Office Open XML was voted on as an ISO standard. Just some of the reasons why some will write Micro$oft when referring to THAT company. And this goes back to experiences in the late 80s and early 90s all the way into the 2000s.

As I recall, Linus Torvalds said that if 386BSD had been a little bit farther along he never would’ve created the Linux kernel. He just wanted to play with something unixy on his cheap IBM-compatible, not start a revolution.

It seems likely the same people that pushed Linux so hard in the early days would’ve pushed 386BSD instead.

Linux isn’t the first or only very portable (at least if you/somebody puts in the effort) opensource OS, nor perhaps the best – there are so many reasons Linux was inferior and you can argue still is, but the same the other way too. So one of the many other more defunct unix likes or perhaps the still very alive and kicking BSD stuff would probably fill the role just fine.

I think the bigger question is if things like the Pis had never come along would small portable general computers exist at all – things like the forerunners to the smart phone and early portable game console often used ‘custom’ silicon and very basic microprocessors anyway, so the explosion of low power consumption but very versatile easy to use full computers may never have happened. It was largely the Pi’s success that drove that market into existence.

I think you mean ARM rather than Pi – The RPi is a veritable babe in arms compared to portable games consoles and smartphones, and hasn’t driven any market into existence.

Portable consoles and smartphones have never been and still are not general purpose computers. They might have the makings of one, but it’s always locked down software and hardware designed to do only one thing and almost impossible to turn into a NAS, host a web page, drive a robot, create an affordable cluster, control a …

The Pi’s release triggered a realisation that there is an actual market for real computers with all that versatility, and really did drive that SBC market into existence. There were other SBCs, but never as cheap, powerful etc; they were very, very niche products at what are, by today’s post-RPi standards, stupid prices. Now there is a slew of great options, easily available and with specs slanted towards every need.

In the late 90s and early 2000s there were portable hand-held devices that ran WinCE, and the MIPS RISC CPU tended to be the most popular choice

I worked at a company where we developed a turn-by-turn navigation system that used these – attached to a unit that supplied GPS and CDPD wireless data network

Went around the metroplex testing this system out pretty much a decade before it became a thing for most people to be using this kind of navigation

But yeah, the MIPS CPU would have been a great alternative to ARM

And both of us forgot the evil money printing empire that is Apple as well, they are not windows, nor always run on ‘normal’ windows CPU architectures.

Then it would have been the alternate branch that was the 6502. But that’s going further back in history to a different division.

https://medium.com/@emabolo/did-commodore-more-than-apple-contribute-to-the-birth-of-the-personal-computer-a96201131fa

That article is just fighting for the label. People use different definitions to be first. It mentions the Sphere, should have mentioned the Sol-1, and did mention the Altair (but not the Mark-8). At best, the article is trying for “most influential”.

Not to mention the whole suite of players running Unix/Xenix on 680x0 CPUs. Including Apple, Radio Shack, IBM, Convergent, Motorola, Sun & Apollo.

Or the folks running UNIX on MIPS, SPARC, and other RISC systems by 1985.

Or folks running UNIX on DEC.

Or AT&T 3b systems.

My personal collection includes several of the above. As does my resume. ;)

We would probably use Minix while we wait for GNU Hurd to be finished.

There was Xenix early on, maybe the best selling version of Unix, certainly at that point. And a Microsoft product.

There were lots of Unix-like operating systems, of varying compatibility. Mark Williams had Coherent in the eighties. I mentioned it once, and Dennis Ritchie said it was very Unix.

Since you bring up Minix, there’s also Xinu (Xinu Is Not Unix), like Minix intended for teaching, but with books available. I don’t remember if it could be had on disk.

Unix had limited use, but it was a hot commodity. People started to write about it in Byte in 1980, or 81. Presented as this great operating system. So it was sought after, or rather, emulated. In 1984 I bought a Radio Shack Color Computer to run Microware OS-9, multiuser and multitasking, Unix-like in a broad fashion.

So when Stallman wrote about GNU about 1986 in Dr. Dobbs, it was exciting news. When the variant of BSD was written about, exciting also. Linux seemed to come a bit slower, but of course it came to dominate.

In my alternate history, MWC would have put a bit more effort into their Unix clone, Coherent OS… adding TCP/IP, SCSI, and other enterprise features early on, while still selling for 10% the price of any other Unix OS. They would have killed off SCO OpenServer in short order, and probably stolen substantial market share from Digital Unix, HP-UX, IBM AIX, SGI Irix, and the rest. It might have delayed or prevented the adoption of Linux entirely, but it would certainly have undermined the Microsoft Windows monopoly much earlier as well.

Coherent was ported to PDP-11, M68000, and Z8000, beside x86, so if they were around as Arm was on the rise, there’s every reason to believe a port would have been made.

Coherent was released as open source in 2015, according to wikipedia. I suspect it’s too late catch up.

I got a clearance Atari ST in 1989, and about a year later got a clearance copy of the Mark Williams C compiler. That’s when I started reading about Unix; it came with a BASH shell, maybe some other utilities. I was all ready to put Coherent on the ST, but they didn’t offer a version for it.

I worked for Mark Williams Company in the mid to late 1980s.

I had MicroPort and Consensys UNIX on 386 and/or 486 back in the day. Serial ports and VT terminals for multi-user multi-tasking. I probably still have the 40 or so 3.25″ floppy disks. There was even an AT&T UNIX which I think was the first I had X running on. UNIX tainted me, knowing what real multitasking is. DOS, DOS/Windows and even Windows 95 held no candle to what was running 10 years earlier on lower end hardware.

Some of what sold Unix back in the day was the support contracts. I think that’s partially what made Coherent less successful than what big corporations had to offer. Even technical parity at a fraction of the price isn’t likely to have shifted that many customers over.

For what it’s worth it was a fine OS, and I remember seeing the ads for it in the computer magazines. But I never ended up getting it. My memory is hazy, but I think it cost extra to have the full development environment and the basic OS wasn’t useful for my purposes if I couldn’t re-write some of my BBS’s utilities for it.

I’m not a fan of “What if’s”… What if Eve hadn’t come along and Adam was left on his own?

Adam wouldn’t have been introduced to his first “Apple”. Look what happened because of that. 😁

I’ve seen the $10 x86 board. Way back in the 90’s, it was a high speed laser printer prototyped with PC hardware. They just cloned all the functions of a 386 et al. into an FPGA. Sure there wasn’t disk access or graphics, but it did run at the equivalent of 300 MHz. And yes all the tools were DOS compatible. I’m guessing a second FPGA could do most of the rest.

Again like the OP pointed out, it’s just a matter of IP cores vs chip houses set on building pc’s.

Weirdly what we need is those goofball SoCs seen on super budget PCs back then. Motherboards with two main chips, RAM and BIOS.

Can be done, will it get done? Ask intel or amd.

I remember seeing ads for inexpensive 386 and 486 System On a Chip devices back in the day and often dreamed about the neat things that could be done with them. Funny, how it takes so long for some things to really catch on.

there would have been FreeBSD – it was an entirely suitable POSIX Unix; Linux leapfrogged it because AT&T bogged BSD down in litigation for a few years

so there’s yet another alternative universe to ponder – what would the world be like in a FreeBSD dominated unixy world vs a Linuxy dominated world

A little different because there is an official distribution of FreeBSD, while there isn’t one of Linux. That would have left less open ground for the commercialization of OS support. But I think that companies still would have found a way to do it, either by simply offering support contracts or by offering their own versions of FreeBSD bundled with their own “enhancements”.

Another difference is that FreeBSD is offered under the liberal BSD license, while the Linux kernel is under the GPL v2 license. The BSD license allows companies to make improvements to it and not share them, so we could have ended up with more commercialized forks that didn’t share their code.

But in the end things would have ended up much the same. Whatever differences in capabilities exist between the two operating systems are mostly the result of far more development resources finding their way to Linux. FreeBSD would have been a solid base for building out server infrastructure, just as Linux has been, and in that scenario it would be the dominant OS running web sites and online services. And it still wouldn’t have made much headway on the desktop, except in the form of operating systems that built a very different user experience on top of the kernel (things like macOS and Chrome OS).

Everyone seems to forget that back in the 1980s, Microsoft was the biggest player with UNIX on x86 IBM PCs with their XENIX. There were even some common parts of code between XENIX and first versions of MS-DOS to aid compatibility between these two systems. And… well… UNIX ran on numerous different platforms already back then and usually was at least partially compatible thanks to POSIX standards. So, even if Linux didn’t come, there was UNIX which could have become the dominant player.

I needed such an x86 SBC and ended up buying a Dell WYSE 3040 Thin Client (surplus). An Intel Cherry Trail x5 Z-8350 (1.44 GHz Quad Core) that pulls < 2W and does NOT need a fan. I wish there were more raspi-like x86 boards around. Ultimately "it's a software issue", not only because you might be forced to use x86 binary blobs: some FOSS is not ready for prime time on ARM or does not even build, even common tools like PHP.

That’s what puzzles me about the relative dearth of Pi-alike x86 boards.

It isn’t like Intel doesn’t have the parts(or, if for some obscure product segmentation reason, couldn’t easily enough tweak clock speeds or laser off features on an existing part); they have Atoms in some fairly aggressive power envelopes(certainly not microcontroller-level; but at least more or less at parity with your average beefy ARM application processor for general purpose OSes, it’s not like the Pi has avoided getting ever closer to ‘active cooling encouraged’ over time); and there was a period(back when Win8 was supposed to be the glorious future of Windows Ipads) when about a zillion Atom-based tablets were out and about for dirt cheap.

Had they wanted, nothing would have prevented the same parts from being shoved onto little SBCs for similar(or less, no screen and battery) money.

Those things certainly weren’t fantastic(usually built right down to price, and Windows on limited RAM and garbage eMMC isn’t fun); but they were certainly full x86s that ran normal x86 OSes credibly enough.

Unfortunately, for the tinkerer, these systems tended to be horribly I/O starved; often a single USB-OTG port and maybe a microSD slot; often no host USB port; and firmware that was really, really, picky about boot devices was common. Inside the situation was no better, as expected, consumer tablets don’t generally come with liberal GPIO and neatly labeled pin headers.

I can only assume that Intel just didn’t want to deal with that market, since catering to it would have been vastly less effort than the ‘Quark’/Galileo/Edison stuff(also abandoned) that actually made some fairly serious changes and required a customized OS and similar inconveniences(and was dogged, throughout its short life, by poor documentation and limited cooperation with developers).

The one that you do see is J1900-based devices intended for PFSense: I’m not quite sure how that particular CPU became the patron saint of cheap DIY network gear; but it always seems to pop up when you look at cheap x86s with more than one NIC built in.

I think the dominance of the J1900 is simply a matter of supply. Intel theoretically offers a large number of low end CPUs but most of them are not available to buy for new designs. At any given time all the cheap Asian x86 products have one of two or three CPUs, which reveals the models that Intel is interested in selling and will make good deals for.

We don’t see a lot of Pi-alike x86 boards because the processors aren’t cheap enough and available enough. One that has seen the light of day is the Odroid H2+, but it’s out of stock most of the time because they can’t get the chips to build them, and it’s a lot more expensive than a Pi ($119 with no RAM included).

Another upcoming one in a somewhat larger form factor is the Hackboard 2. It was originally supposed to ship in the spring but has been postponed until September because of parts supply issues (though not the CPU this time). Still more costly than a Pi at $115 (or $140 with a Windows Pro license), but that includes 64 GB of storage (originally announced as soldered-on eMMC but it will ship with an M.2 SSD instead because of unavailability of eMMC modules) and 4 GB RAM so it’s ready to go at that price.

I’m not sure if there was some loss-leadering going on, or Intel taking a gamble on Microsoft’s hopes that Win8 would actually not be a laughingstock as a tablet OS(and thus take back some x86 market from the non-intel tablets and phones); but it seems like the pricing and availability of Pi-like x86s has actually gotten worse over time:

A few years back I was able to pick up a couple of Z3736G-based tablets for $50/ea, new (the “SuprapaPad i700QW”, a device and brand of deserved obscurity); that got you a 4-core Atom in the 1.5GHz range, 1GB of RAM, 16GB of flash, a (not very good) 1024×600 touchscreen, wifi/BT, two bad cameras, and battery.

As an attempt at tablet computing it was…not good..but had the same basic internals been in dev-board format it could easily have compared favorably for many applications with any rPi prior to the 4; and if it could sell for $50 with a battery and screen and case and such the price probably wouldn’t have been terribly prohibitive.

More recently, though, I just haven’t seen the same sorts of things in x86 flavors(still plenty of bad tablets around; but pretty much universally Android on some bottom-feeder ARM); the result is often a better product(since the higher cost of entry means fewer systems cost-optimized so brutally that they are basically unfit for purpose), but suggests that Intel simply isn’t interested in trying to treat low-end Atoms as a meaningful alternative to the assorted A-53 or similar ARM application processors of the world.

There were some slightly better models with 1280×800 displays, 32 GB flash, and 2 GB RAM. Micro Center used to sell some under their WinBook brand. The ones with that spec are still somewhat useful now; they have enough horsepower to run one application such as an SDR. You may be able to upgrade those old tablets to Windows 10 if you can find the drivers. There is a downloadable driver bundle for the Micro Center models.

The lower spec models with only 16 GB flash and 1 GB RAM are REALLY difficult to install Windows 10 on because of the limited space. You have to wipe all the existing partitions and start from scratch, and you’ll need to put in a Micro SD card for additional work space along with the USB stick that you install the OS from. (It CAN be done; I have Windows 10 on a WinBook TW700.) You also won’t be able to install most new Windows builds in place due to the lack of storage; you’ll have to start fresh each time.

Tablets like those have disappeared from the US market. But you can still buy some models in the $100-150 range from places like Banggood and AliExpress. Many of them have somewhat better specs than the models from a few years ago, including an Atom x5-Z8350 Cherry Trail processor rather than Bay Trail (similar CPU performance but a much better GPU) and possibly a 1920×1200 display. Still useful for a dedicated application, but you won’t be doing much multitasking with only 2 GB of RAM.

Tablets aren’t much use in control applications unless you’re willing to open them up and expose their guts. Otherwise the only I/O available is the ports that are already present, notably the USB port or ports and the audio jack. The ones I see on Banggood right now only have a single USB Micro-B port (which can be used with a USB OTG adapter so you can charge the tablet and use the port at the same time); the Micro Center models had two (one Micro-B plus a USB-A port).

‘Quark’/Galileo/Edison were just garbage, neither here nor there. I wonder what bad drugs they were on when they came up with them and imagined they would fit in any market.

That seems to be the stumbling point. Most of the x86 platforms that are available or affordable have no real I/O options. Which is fine for things like small appliances or application servers, but is a pain in the arse for any interfacing to the real world.

Thankfully the internet of things is giving us a whole lot of options for ARM-based nodes, and even an x86 core if that is the preference, but that is a small niche of the DIY space, and having no real options for any kind of development kinda sucks.

I just got a Minis Forum J4125-based mini computer with 8GB RAM and 250GB SSD for around $250. Yup, that’s a small multiple of an rPi but it also runs circles around it like crazy. There are a couple of sub $200 options with a little slower processor, a little less memory, and smaller SSD. Given the performance boost over an rPi4 or an Odroid-XU4 the price multiple is actually not unreasonable.

but that’s laptop prices

The specs I’m seeing really don’t show it being better than a Pi4 by much. Certainly not enough to call that price increase good. Perhaps for your use case it’s much faster, and they certainly do have some extra horsepower options, which I admit intrigue me, but on cost to performance it seems like it doesn’t actually play out well for it.

Also seems like you are more locked in to having to spend bigger money, whereas with the Pi you can be very selective in what you add to whichever model you want, meeting your needs potentially at vastly cheaper prices (though it’s worth noting that a top spec CM4 or Pi4, and some of the add-ons that would be needed to really push top performance, add up: for a Pi4 the case with decent cooling is important to performance and remarkably expensive to buy, so I don’t; make one yourself and fit even better cooling, because you can).

Interesting looking little thing, seems like a good option for some needs, but does seem somewhat pricey, particularly when there are vastly more powerful laptops out there second hand which often fit the use case just as well or even better…

Better is a relative term. If you have to run x86 for whatever reason, then a native x86 board may be better for what you are trying to do.

If you are talking raw price to performance for apps/usages you control, I would agree with the Pi being better. I just think the question should always be: better for what?

Indeed, I have been looking for a good amd64 option for that reason, Jock: trying to run Wine via QEMU is tricky and getting good performance is far from certain. But at that price, why wouldn’t you take a second hand, probably vastly more powerful laptop that already comes with lots of useful extras?

“But had ARM not filled the niche of a powerful low-power and low-cost processor core, would those also-ran processors have stepped up to the plate?”

Like Celeron. Or at least tried.

The bigger question isn’t who would have stepped up. There were plenty of contenders for the niche of routers and mobile devices and eventually desktop computers. We could easily have seen MIPS or Power in those spaces, or maybe even Alpha.

What is less clear is who would have stepped DOWN and offered processors for the embedded space. What would we be using now instead of Cortex-M0, M3, and M4 processors? Perhaps MIPS; Microchip does produce the MIPS-based PIC32 line, though they’ve never driven its prices down into the territory of their 8-bit or even 16-bit lines. Or something else might have moved into the vacuum. Or perhaps that space would have just stayed a mishmash of 8, 16, and occasional 32 bit microcontrollers until RISC-V came along.

Agreed, I’ve seen lots of MIPS and PowerPC in embedded devices but don’t know how the manufacturing or designs were licensed. Clearly ARM did something different, or maybe it was just a single product like the iPod and then phones which catapulted them forward. But I do believe you nailed who would have filled in if ARM wasn’t there. x86 was just too bloated and inefficient for mobile, and Microsoft software made it worse.

ARM absolutely dominated in the cellphone application processor space way before Apple was even thinking about designing a phone.

So they did have a major foothold in that space. And in general they were extremely common in the handheld device space due to the low power.

There were some competitors like ColdFire and its predecessors that were 68k-based or derivatives of it.

I thought Celeron was the “Whirlpool Roper” of the segment, not an alternative architecture.

Basically, it was still a Pentium x86 chip. It was just a cheap and old one.

It was generally a lower binned part if memory serves. I recall the Slot 1 Celeron having less cache but sometimes being able to achieve a higher clock (oddly, because of the smaller cache).

Same die though, I thought.

I think the only ones that weren’t are more recent rebrands of the Atom.

The BP6 motherboard and those cheap Celerons actually helped kick off Linux further into the server space.

I had one of those systems set up to serve 5 developers when our Solaris boxes were not keeping up.

I forget if Microsoft was “killing the baby” at that time and preventing users from using multiprocessing on Windows NT clients and making the “server” version so much more expensive. Remember it was a “server” if some of the Windows Registry bits were flipped and nothing more? Oh the games those companies played to keep their market position.

The original Slot 1 Celeron 300 was a horrible dog because it had no L2 cache at all. It sold poorly. So Intel gave the design a refresh by giving it L2, but a smaller amount of it than the Slot 1 Pentiums.

But to keep costs down, Intel put the Celeron 300A’s L2 cache in the CPU rather than as separate chips on the CPU PCB. That meant the smaller L2 ran faster. The Celeron 300A (and subsequent versions) could be overclocking monsters, able to be cranked far higher thanks to the L2 cache all cozy cuddly with the CPU rather than a fair bit away, communicating with traces on a PCB.

Then when Intel switched to Socket 370, the Slotket was invented to plug those Celerons into older single and dual slot 1 boards that had the capability for big overclocking and running dual Celerons despite the chips supposedly not supporting that.

Ah, I thought I recalled the slot 1 dual socket boards being press-ganged to run Celerons with a manufacturer BIOS work-around!

Maybe we would have ended up with MIPS or another revision of the classic RISC design. Which is a shame because I really think ARM’s 16 registers instead of 32 in favor of many more op-codes is one of its strengths.

Don’t forget the used market. Rapid change in the high end means lots of low end equipment cheap.

That is what I have found: secondhand corporate gear is a very good choice for a lot of applications, especially at scale, as in pallet loads of gear at auction.

I think one of the main reasons that x86 hasn’t had as much of an impact on the SBC market is that there aren’t a lot of x86 CPUs/SoCs that are applicable to that type of market. ~~Though, technically, most laptops these days have a soldered in CPU and RAM, sometimes even storage is soldered to the board, this is technically a Single Board Computer…~~

Though, personally I would like to see more SBC focus on “typical” SBC applications.

Ie, things like:

Routers, managed switches, NAS, etc. All tend to require decent networking, usually more than 1 network port. And the NAS one could benefit greatly from a smattering of SATA ports.

I would honestly pay easily 100-200 bucks for an SBC with at least 10 SATA ports and a pair of M.2 slots on the back, and preferably with a 10 Gb/s Ethernet port, I don’t mind if it is an SFP+ port since that is actually better in some cases as long as there is at least one gigabit Ethernet port as well.

any mobo + sas card can get you there easily.

Yes. But that is typically less compact.

And a fair few SBC also tend to idle at lower power than a typical PC.

Odroid has the H2+?

Oops! It’s out of stock at the moment.

Out of stock, and it’s not clear whether it will ever be back in. Also a lot more expensive; $119 with no RAM or storage. But it has a quad core CPU and those dual 2.5 gigabit Ethernet ports are appealing.

Getting reasonable x86 performance under $100 ain’t easy. Your next targets would be the “stick” computers that typically use very low end z8350’s. Those have very limited I/O options, but it depends on what the use-case is.

Udoo also makes something similar to Odroid, but it’s also around the same price point.

An interesting aspect of this that’s missed here is the effect licensing strategies had on the technologies used by the various manufacturers. Arm’s decision to license their cores rather than fabricate them decoupled the core design from the fabrication process.

Intel have always pushed for the maximum performance their current fabrication process can deliver. Old parts keep getting manufactured using old processes. So you won’t find a super-power-efficient 486 part today, even though it would be possible to manufacture a 486DX (say) using a modern 10nm process and it would be super efficient; there’s just not the market there to make it worthwhile.

But because Arm have decoupled design from fabrication, lots of different companies can mix-and-match core designs with fabrication processes to give the product they’re after. So quite old Arm cores are being fabricated on quite modern processes, giving very low power parts. This is part of what has made fanless SBCs possible.

I agree. I don’t think Intel can license x86 without severe restrictions and still manage to sell chips themselves. Even if they decided to support new licensees, it’ll be only for manufacturers targeting a market segment that Intel doesn’t see value in and won’t contain any logic, just the right to manufacture the ISA.

I don’t think “there’s just not the market there” is quite correct, there obviously IS a market for lower-power devices, but Intel aren’t interested in servicing it.

Intel have previous form in this area, for example they bought Mindspeed who had bought PicoChip, and then closed down the PicoChip technology.

Then they tried to replace the ARM core on the Mindspeed CPU’s with an Atom, which went very badly, I don’t think that product was ever released.

Totally agree that it has more to do with licensing and the restrictions which limit x86, and how it is the opposite for ARM, which is why the ARM design has spread so widely. My main concern is what Nvidia would do, and whether they’d kill ARM with restrictions to push their own agenda.

I would love an x86 SBC with 90’s style IO options for retro stuff. The ones I found are limited in disk IO ports and the prices are more than a laptop sometimes. A more modern SBC would also be great. It seems NAS devices are using more ARM CPUs, too. I think Intel misses the market because they focused on high end systems and volume partners. They also missed the home server market. The NUC is OK but a single 1GbE network is too limited.

Missing the obvious question of why do PC/104 products cost four figures where Pi cost two figures.

It’s all about the quantity and the long term replacement plan. The reason you can buy a $1000 PC/104 board today using a 486 chip is that 30 years ago some five million dollar milling machine mfgr did the Jedi mind trick wave saying “you WILL have this spare part available thirty years from now” and they said yes, but it’ll cost $1000 each in those quantities for those decades of repair stock.

Replace “milling machine mfgr” with “DOD armament suppliers”, I suspect would make more sense.

Chips manufactured with a 1 µm process are inherently more radiation tolerant (think of a bullet vs a glass of water compared with a bullet vs a lake of water). And P-type or N-type doping (which slowly continues even after chips have left the fab, just much much much slower at room temperature) will take ridiculously long using a 1 µm process before the chip fails due to homogeneity. Old tech can have non-intuitive advantages.

I priced replacing a servo amplifier on a circa 1990 CNC milling machine. The manufacturer of the amplifier is still in business and can make new copies of pretty much anything they ever made, but the prices are huge. Cost less to strip off all the vintage electronics, replace the massive high voltage DC servo motors with smaller, faster, higher torque steppers etc. ‘Sides that those old Anilam systems are slllloooooowwwww. They simply could not move a mill very fast because the control computer that mostly filled a large cabinet with multiple boards couldn’t calculate the movement very quickly.

> In this late 1990s parallel universe without ARM, what happened next?

We got i960 from Intel, but mainstream SBCs use MIPS, maybe SPARC and 68k SoCs. Also there could be some rise of SuperH8, z380 and other SoCs. RISC-V is inevitable too in one form or another. In any case x86 would never have become a choice for SBCs as we know them now. Too power greedy, too closed source (open-source BIOS? No way! It’s x86! You have to reverse-engineer all that crap for years just to get some outdated hardware to correctly initialize the memory controller or even the processor itself), too few peripherals.

Why wouldn’t a laptop motherboard count as an SBC?

Some are wacky shapes, but I’ve seen many on Newegg that seemed very reasonable for prototype building.

A set-top box with a Ryzen3 laptop motherboard ($50ish) seems like it would be more popular than it is on HaD…🤔

Case in point:

Check this out on @Newegg: HP 2133 Netbook Motherboard w/ 1.6Ghz Intel Atom CPU 501047-001 https://www.newegg.com/p/1HD-001F-009P9?Item=9SIAH8WCU83469&Source=socialshare&cm_mmc=snc-social-_-sr-_-9SIAH8WCU83469-_-06172021

Or look on eBay for laptops with broken screens. Plug in a monitor, and maybe an external keyboard and mouse, and you get a very cheap desktop computer (with its own built in UPS).

Intel not only screwed up the transition to 10nm, they also badly underestimated demand and that’s why they had a huge supply crunch even before Covid. Since their more profitable high-end processors are still made on essentially the same 5 year old process as the entry-level ones, it’s natural they prioritized the production of the more lucrative parts on their constrained production capacity.

One part left out of the puzzle is how big a role open source and open access played in the positioning of ARM and the restrictions of x86. RepRap and 3D printing would not be where they are today had RepRap not shared and opened everything so others could ply their skills and improve the original. ARM is the same way, as they license the core but let vendors add around it to fit the markets they seek. x86 is tightly controlled by Intel, and only because of sleight of hand did AMD and Cyrix get a chance to play in that game.

And besides, Intel has for over 30 years used its advanced chip processes to sell the latest chips at high costs and slowly wean the market off the old chips. They’ve had little motivation for selling to the low end and loved their relationship with Microsoft as the Windows OS got more and more bloated and slower and slower. And Microsoft’s tight control allowed them to dictate exactly how Windows was used and exactly what was preloaded and shoved into the default package. They even dictated the screen orientations and sizes on many versions of Windows.

Contrast that with ARM and their open licensing approach, and with Linux distributions letting vendors provide only what they want and modify to their needs (while sharing it too).

x86 SoCs are nowhere because the only sales advantage would be to run Windows and to do that adds tons of cost to provide the performance needed so nobody does it but niche players in high cost sectors. Intel keeps its fabs making expensive chips instead of low cost chips.

Think about it, at the height of the PDA years Intel owned StrongARM and sold it off. The top ARM design at the time.

So I think it has more to do with how open systems provide great growth and innovation, and how more springs from that than from tight control.

They aren’t nowhere, there is the Vortex86 line, I just came across this: http://www.zfmicro.com/index.html

They are out there, but aren’t really hobbyist friendly

There was the 86Duino EduCake — https://www.86duino.com/?p=9600 — that used the Vortex86. Very hobbyist-friendly

“cheap” is subjective, but I *REALLY* like the PC Engines APU boards (and the Alix boards before). Lots of ethernet ports, reasonable horsepower, low power (just a passive heatsink is enough).

They do well as routers. Can easily do gigabit with a basic iptables ruleset. Can just about hit that with a full complex ruleset.

Limited expansion for the robot builder I guess. Few GPIOs, but there is an SPI bus, so you can add anything (and I2S I think?)

LOL, I saw that brief mention of IDT up there. Someone missed a few dots that need connecting. Many, many modern thin clients — which can be had on the secondhand market (read: eBay) for pennies on the dollar — use VIA CPUs, which are the modern legacy of the IDT WinChip.

Over at the [dot]IO side, I’ve got a project going for the Reinvented Retro contest. It’s just a steampunk build, but that’s retro look, and that’s enough for me. It’s called “The Mighty Quill” (groan) — both out of a reference to the Bob Dylan cover that I like a bit more than the Manfred Mann original (let’s quibble about that elsewhere plz) and to the old quip about pens and swords, but with a nod towards steampunk — and a grin towards the inevitable off-color jokes that follow, since I’m a hopeless wise*** ;) Here, have a link. The latest project log (“A Quick Update”) went up yesterday and has a highly condensed version of the story… https://hackaday.io/project/180346-the-mighty-quill

Oh, sorry, shameless plug… I hope nobody minds!

Well, IDT and Cyrix (via Nat Semi) for VIA and maybe RiSE (later SIS) with the Vortex86.

You’ll find all sorts of weird stuff in those SBC industrial/retail boxes. More than I knew according to Wikipedia: https://en.m.wikipedia.org/wiki/List_of_x86_manufacturers#x86-processors_for_embedded_designs_only

Now if you’ll allow me to return to travelling down this new rabbit hole. :)

There is a talk from the Embedded Linux Conference 2014 that gives a little perspective on this topic. You can find a link to the slides here: https://elinux.org/ELC_Europe_2014_Presentations however, the video (if there ever was one) has been lost. It gives some pros and cons on x86 vs. ARM SoC development. Interesting that this question is still being raised 7 years later. There is a lot of history behind these things.

Another option is the UP Board https://up-board.org/up/specifications/ $99 for an x86 SBC. Still not as cheap as a Pi, but if you need x86, it’s not too bad.

Hi folks, tyvm for memory lane & supposal. I note that no one mentioned Western Digital for their 32bit 6502 upgrade processor. Not a pin replacement but certainly a viable cpu for either timeline. It’s too bad it’s not found a more public existence now. Maybe HackADay could find a use for it?

@Monk Western Digital or Western Design Center? I am not aware of WD ever being in the 6502 business. Publicly WDC has the 6502 (8-Bit), and 65816 (16 bit), and theoretically the IP for Terbium a 32 bit version, but I don’t think there are any publicly available products that support it. Can you link to what you are talking about?

“Western Design Centre”. But it seemed a common thing to mix them up. The names, not actually the companies.

It’s what I figured, but back in the day WD made enough silicon — for example the MCP-1600 CPU in the 70s, and they made graphics cards in the 80s, etc — that it was possible. They make custom silicon for their drives as well

Even after Western Digital sold off their video card product line, they maintained an archive of manuals and drivers on their BBS for quite a while.

Exactly so. And they are a major manufacturer of ARM and now RISC-V cores (and released an open source implementation).

The Atomic Pi is ~$40 on Amazon. So apparently someone can kick an x86-64 out the door under $50.

Although they might be EOL. I don’t have any personal knowledge of them, just one in a box.

I have 2 or 3 of the Atomic Pis and they are nice kit. Great price because they were excess from a very large failed product so while there have been thousands sold already, it’s only until the inventory is gone.

The Rock Pi X is another Pi-like board. It’s even in the Raspberry Pi 1-3 form factor. There are two models: Model B (has 802.11ac WiFi, Bluetooth 4.2, and PoE support) and the Model A (does not have those things.) Other specs are the same: Atom x5-Z8350, one USB 3.0 type A port, three USB 2.0 type A ports, power via USB-PD on a USB-C port (18W minimum, accepts 9V through 20V). Prices on Amazon range from $90 (Model A, 2 GB RAM, 16 GB eMMC) to $160 (Model B, 4 GB RAM, 128 GB eMMC).

I have a Rock Pi X Model B and it’s … okay. Its performance is lacklustre: as someone else said, an Atom from 5 years ago, as with the Atomic Pi. The DDR3 memory really slows it down compared to the Raspberry Pi 4’s DDR4. The Rock Pi X is possibly even worse than the Raspberry Pi for needing expensive add-ons: you must buy the case, or at least the huge heatsink that forms the base of the case. You need to remember to buy the BIOS battery, unless you want to do a cold-boot reconfigure every time you restart it. And the USB-C PD power adapter isn’t cheap either: I got one from Radxa, but it was trash as the power pins aren’t long enough to engage with the power outlet, so add another $30 for a decent PD adapter. If you want to run it headless, it also needs one of those HDMI fake monitor plugs or it won’t give you any display, even remotely.

But, for all that … it is an x86 machine. Your choice of OS is much bigger. No surprises about which packages are available.

Yeah, sigh, it’s like Atom from 5 years ago is still the only choice, sad, but can’t blame them, more profitable high end parts are always prioritized.

My alternate universe has the Xerox Alto in 1980 becoming a CPU that anyone could buy. Along with the WYSIWYG desktop and programmable microcode… then I could play Maze War at home rather than in the computer center… one can dream

i just want an sbc that can run freedos and old school dos applications. or perhaps a lightweight linux distro. many of the intel sbcs have aimed for windows 10 as its os of choice and as a result needed to be powerful enough to meet the minimum requirements. this no doubt set the bar for cpu power, memory and storage so high that you end up with an expensive product. none of that is needed for a dos nostalgia box.

I was thinking the same thing. I wonder how difficult it would be to design an SBC with a 486, basic DOS era video, a Sound Blaster 16 compatible chipset, 8 MB of RAM and 100 MB of storage? I am sure FreeDOS hobbyists would vacuum them up even at $100 each, but I am also sure the market share would be really small.

So basically that would be a rPi 3B+ running QEMU emulator which has been around for decades.

https://retropie.org.uk/forum/topic/11551/free-dos-games-on-gog-com

Emulation is not the same, and often comes with the caveat that it changes how the program functions, probably for the better more often than not now… But certainly not always.

That said I’m with you, so much easier, and the hardware would actually be useful for more than pretending it’s still 1990-something.

Look up terminals on ebay. There’s also a website that’ll tell you which ones are standard 586/686 x86 boards.

They go for about 10~20 USD.

Fantastic article! As something of an SBC buff, and an x86 SBC fan, this is a question I’m always thinking about. The cheapest x86 maker boards I know of are the Atomic Pi and Rock Pi X at around $35 and $75 respectively. But the price difference in ARM and x86 SBCs is crazy. Hopefully one day $5-$10 x86 maker boards will abound! https://www.electromaker.io/blog/article/best-x86-sbc

Many older thin clients are built around some low power x86 SoC and can run some version of Windows, Linux, or the manufacturer’s own proprietary thin OS, which usually works as a Citrix (or some other version) client to run software off a server.

The WYSE (and shortly after introduction by acquisition, DELL) Sx0 series had options for Windows XP Embedded, Linux V6 (no idea of what distro that’s based on) or the WYSE proprietary OS. The original S10 model could only run the proprietary OS and had no 44 pin IDE port or SODIMM socket. The various other models were noted by the first digit, with S90 being XP Embedded. I figured out how to ‘crossgrade’ operating systems. The BIOS is matched to a key file for each OS but nothing matches the key file to the OS. All you have to do to flash any of the OEM options is to have the key file that matches the OS an Sx0 originally shipped with. Prepare the (must be 1 gig or smaller!) USB stick with the desired OS then replace the key file.

The Sx0 has a “feature” where early in the boot process the IDE device is hidden. Of course the three OEM operating systems are fixed to ignore that and keep going. Linux can be patched to ignore the hiding and still see the IDE device.

But not DOS. MS-DOS, PC-DOS, and FreeDOS all strictly obey, and when the BIOS says “IDE? There’s no IDE!” they believe it and act exactly like they would on a normal PC if the drive cable was unplugged.

I just want to get MS-DOS 6.22 booting on one to see if its memory map will allow software EMS so I can try running the ancient DOS software for ProLight PLM CNC milling machines. But boot DOS off a USB stick (or USB floppy drive) and there’s “no hard drive”. Take the SSD out and use another computer to install DOS on it, put it back into the Sx0 and it’ll just get started booting then stop hard, as though the drive has been disconnected.

This “feature” seems to be a special thing only in this series of x86 thin client.

Many “old” thin clients are a nice alternative to the ubiquitous PIs.

You get some CPU, lots of USB-2/3, network, a case, power supply, slots for memory and disks, decent low power, all that for 50 bucks/euros.

There are some Dell Wyse Zx0Q for 55 €, HP T630/620 on the cheap at 50 €… (European eBay). Beats a Pi with ease.

https://www.parkytowers.me.uk/thin/ is a nice place for all the specifications.

I had a Texas Instruments TI 99/4a computer. It had a 16-bit TMS9900 CPU running at 3 MHz. The first 16-bit home computer, from 1979. What if … they didn’t die in 1984? I miss Extended BASIC and loading games by cassette deck. Honestly, they were ahead of their time. One of the first 16-bit personal computers to have a speech synthesizer and 16 colors. Plus an RS232 port, which ended up taking up your entire desk after connecting all your peripherals.

TI failed because they stayed in Texas; everyone else went to Silicon Valley, where companies traded employees, saw what everyone else was up to, and rejected bad ideas. TI took no advantage of that, as one could see with their expansion chassis for the 99/4a and forcing users to buy pre-formatted floppy disks for their PC compatible.

“TI failed because they stayed in Texas”

The TI 99/4 failed because Jack Tramiel specifically targeted them for destruction by selling Commodore hardware so cheaply, one of the major factors which ultimately killed Commodore, too.

A big factor in the failure of the TI 99/4a is that they snubbed the hobbyist community. Development information was readily available for the competitors (Apple, Atari, Commodore, Radio Shack) but scarce for the TI. You could program it in BASIC readily enough, but if you wanted to take things further with direct access to the hardware you hit a roadblock. Hobbyists were only a small part of the market by then but they were key influencers, and they were telling all their friends not to buy TI.

TI had a development board for the 9900, it was so easily recognizable because the “front panel” was the casing from a TI calculator. Someone I knew had one.

Godbout talked about a 16 bit computer in the fall of 1975, even had a contest to name it or guess the CPU. But then nothing later.

TI was selling real computers, the 9900 ran the same instruction set. That may have factored into their approach with the 99/4. Other companies weren’t burdened with such dealings. And IBM basically set up a small garage operation to design the “PC”, with a willingness to pay attention to the existing small computer industry.

The problem wasn’t about information about the TMS9900 processor itself. It was about the peripherals in the TI 99/4a: the display controller, the sound interface, and so forth, stuff that you needed data about to write a good game for the platform.

TI made available to purchase technical reference manuals containing all the schematics, logic diagrams and more. They even included some stuff for a keyboard concept that was intended to connect to the joystick port, which I assume is the information Milton Bradley used to design their MBX or Milton Bradley eXpansion.

Then there was the Editor-Assembler manual which came with their Editor Assembler cartridge, which was used by commercial companies and hobbyists writing software.

TI was working with the Myarc company on a double density floppy drive controller, which TI had only prototyped a few of their own design, best described as “iffy”. There was also collaboration on a single board computer, mostly 99-4/A compatible (only one rarely used TMS9918A video mode isn’t supported) but it was left adrift when TI pulled the plug. That SBC was eventually released as the Myarc Geneve 9640.

What was a major factor in the end of TI’s Home Computer division was that the company treated its various divisions as separate entities and anything one division needed for its products, which was manufactured by another division, was charged off as a full retail price expense. Thus the budget for the Home Computer Division had huge retail price chunks cut out of it every time they “bought” CPUs, VDPs, ROM, GROM, audio, Speech and other TI made chips.

So by the accounting books, TI could not cut the price of the computer by any method other than simplification of the circuitry to reduce parts count or finding lower cost materials and methods, which they did once or twice, but not by much. Changing from the metal topped black case to the all plastic beige case and removing the power LED was another cost trimmer, and got its aesthetics in step with micro computer models *not* having their roots in the late 1970’s.

But then some nut at TI got the brilliant idea to block cartridges not produced by Texas Instruments from working on the final (QI or Quality Improved) revision of the hardware. Buying a new TI and a Pole Position cartridge shortly before the end and having it not work likely did not make buyers happy with TI. But there was still a large number of the earlier versions in the back stock. My parents bought me a new beige (not QI) 99/4A console at a JC Penney store for $50 on a clearance sale in 1983.

At the show where TI announced the end of their Home Computer, they had a prototype of their new 99/8 Home Computer literally sitting behind the curtain, ready to be rolled out, had they chosen to roll the dice with the new model instead of killing it all off. What could have been… https://www.99er.net/998art.html

No, they tried to beat Commodore in a price war, specifically trying to compete against the VIC-20 and C64.

If you have ever seen the inside of a C64 and a TI-99/4A, you can see there is no way the 99/4A could be built for the same cost without a lot of redesign and integrating components.

“ARM processors have historically sipped power compared to even the most power-efficient of their x86 counterparts.”

i have believed so strongly in this comparison, and been stunned by its fantastically large magnitude and intel’s continuing unwillingness or inability to make any dent in it, and the incredible manifestation of it in extremely poor consumer experience, that i present this counter-example which i think just proves it all the more thoroughly.

using very low quality data from the built-in laptop battery monitor, as near as i can tell, my new celeron n4000 (2017, 14nm) consumes roughly the same wattage as my old rockchip rk3288 (2014, 28nm), while delivering 2x-3x the performance.

so it took intel a much newer silicon process and a couple years of engineering catch up, but there is an intel processor out there that seems to be at a better spot on the heat vs performance curve than a totally unremarkable budget offering from a me-too entrant in the arm ecosystem. stunning!

and not one mention of DRDOS…..

*cough* MIPS *cough*

ARM is a good firm. Look at IBM (free, open Power ISA, POWER8/9), at the many Motorola ISA versions that were licensed, at Parallella (an academic idea), etc.

Sorry, but ARM makes good SoCs better than the others do. I hate ARM because it is complicated and poorly documented, but it works and it is efficient.

There’s a Taiwanese manufacturer that makes a basic 80486 SBC with graphics but no sound, and two rows of pins that allow expansions to be connected, but they are not easy to come by… Anywho, the price is nice, it’s about $50. Not near ARM prices, but acceptable for a 486DX4 at 133 MHz.

Don’t recall the name right now, but going to have a look.

I have seen a few PLC cards with an Ethernet interface that were i386. So there should be some low-power implementation of the i386. But why would anyone use them if there are cheaper, more powerful ARM/MIPS parts available? And if they are cheap, then why are they not easily accessible?

Alternate time lines have their pros and cons. Firefly would be in its 8th season with 5 movies, Trek Wars starring Captain Jean Luc Skysolo was cancelled after 2 seasons, Cov what ?, and the TRS 9000 running CP/M is the computer everyone has…. 😄😄😄😂😂

I work in a company that was a subsidiary of Intel until a couple of years ago, so we got to attend Intel internal sales rallies, etc. Intel very deliberately abandoned the low-end market for one simple reason: profit margin. The margin on Xeon CPUs is high, and for low-end chips like the Quark, profit margin is low. So put all your fabs to work on high profit chips, right? Also Intel couldn’t compete with ARM in the low-end space and the value of the x86 instruction set everywhere (a mantra they touted 10 years ago) was severely undermined by Linux support for ARM.

TI failed because of their flimsy keys.

*tenderly patting my collection of HP calculators of then*

;-)

We had been talking about computers, not calculators. TI is still in the calculator business, though they get a huge boost from the fact that their scientific calculators are just about the only ones approved for student use during standardized admissions tests.

The HP calculators were certainly better built than the TI models. I heard a story about a demo event in the early days of scientific calculators where both companies were presenting. Each rep showed the features of their calculators, proving that both models had pretty much the same capabilities (though you got to make the choice of RPN vs non-RPN). Then the HP guy took his calculator and threw it at a wall, calmly picked it up, showed that it still worked, and dared the TI guy to do the same. Needless to say, the TI rep did not attempt to duplicate that feat.

for the glory of it: Flytech Carry-I

Pictures at https://www.mikrocontroller.net/topic/473513

I worked for Seagate in Scotts Valley CA in the early 80’s. We were manufacturing 40 Megabyte hard drives for IBM to be used by the Strategic Air Command. So as we see, things change! I currently have 32 Gigs of memory and four SSDs. What I have seen in the tech field is awesome over the years.

Most are at your nearest McDonald’s or Wendy’s

https://youtu.be/8fSdLKx5HlU

When the market makes a demand, producers provide an answer. If there were no ARM, only AMD/Intel, and the market asked for tiny, fanless boards that are not power hungry, then Zilog, Motorola, Microchip or another uC/uP producer would have grown their 16-bit and 32-bit uC/uP lines, as they already did, and day by day made them powerful enough to run DOS or whatever OS. Maybe we would have a grandson of the Z80 or PIC or whatever at 32 bits, with 16 registers, etc. In the mid 80s, Sinclair released the QL, built around a Motorola 68008, a 68k-family chip with a 32-bit internal architecture. https://en.wikipedia.org/wiki/Sinclair_QL

Not really sure microcontrollers would have filled in, since there’s way more to the SoC design on SBC boards, which really helped enable the growth of the platform. The BeagleBone was one of the early low cost boards, followed by the BeagleBone Black. They are ARM based so hypothetically wouldn’t have existed, but TI would have done something else like MIPS and probably come out with a board.

Phone vendors would have used something else like MIPS, PowerPC, etc and the demand for more features in phones would have resulted in a similar market to the one we have now. So it’s likely something like the Raspberry Pi would still have appeared, since it was very inexpensive because it used very inexpensive, high-volume SoCs of the kind used in phones.

Arduino’s goals had not been to grow into a computer/SoC, but the growth to more powerful microcontrollers has moved them up to using low-end ARM for embedded things, not SoC computer scale designs.

You can buy cheap used terminals with low-power AMD APUs (or older VIA CPUs – very cheap) if you need a small x86 board. The only problem is that there is no GPIO.

If Arm did not happen I think MIPS and PowerPC would be dominating the embedded space including Raspberry Pi like boards.

A lot of network hardware used MIPS and still does while PowerPC is extensively used in aerospace and automotive.

worked with both MIPS and PowerPC devices and in small portable devices I liked the MIPS better

and yeah, it very well could be a worthy alternative to ARM

Also don’t forget the Sun stuff, SPARC was open sourced, SPARC-like cores like Leon have been popular in some embedded niches (aerospace included) for quite a while.

A Raspberry Pi running DOSBOX emulator is already a cheap x86 SBC

Ugh, x86. Let it die the slow death.

Maybe Intel should revive their iAPX 432 efforts… I’ve got a system behind me from 1984, and it certainly was an interesting, if fatal step in a weird direction.

Honestly, I’d love to see someone make a very small 386 or 486 SBC kit. Interface the 486 onto an FPGA or something to act as the chipset, and use PC-104 connectors for the ISA bus. Use an onboard SD card slot for the hard drive, and PC-104 kits for graphics and audio (I believe both have been designed before…).

I’m nostalgic for that era of PC, but even just as a desk piece, or perhaps as some kind of 386/486 DOS powered ‘rugged’ computer.

OOO, Or a 486 ‘cube’ computer. CPU on top, in a ZIF socket, and then there are layers below it not much larger than the CPU itself, comprising of the chipset, cache, RAM, BIOS, IO controllers… uh… power… yeah. A ‘cube’ of PCBs and in the end you have a small cubed 486 PC that blinks lights. And has a PC speaker. Yes, I want this.

Oh, cheap PC-104 boards were a thing in the early 2000’s. I used one to build a print server:

https://saccade.com/writing/projects/Prince/LinuxPrintServer.html

I’m not really keen on ARM SBCs for the most part. But what can we do? They’re dirt cheap.

Price has always been a driving factor. Cheap dominates most of the time.