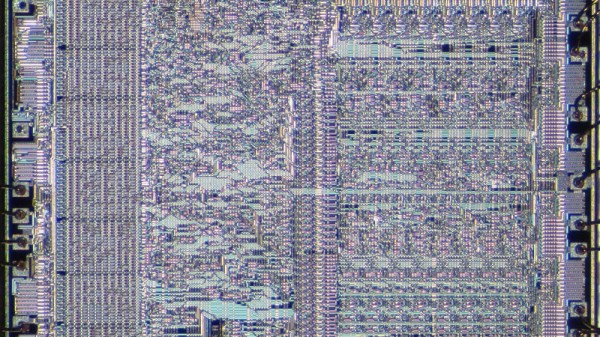

Nothing ever made is truly perfect, and indeed, CPU architectures like x86, RISC-V, ARM, and PowerPC all have their own upsides and downsides. Today, I aim to make an architecture that learns from all of their mistakes and improves architecture design for everyone.

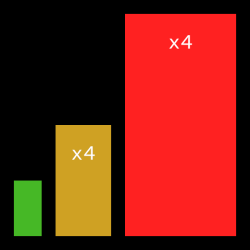

I’ve consulted with many people who hold strong opinions on the matter, from both a software perspective and a hardware perspective. I have taken all their feedback into account while creating this initial draft of the WheatForce architecture (PDF). It draws on pieces from many architectures: segmentation inspired by x86, hash table-like paging from PowerPC, dynamic endianness control from RISC-V and PowerPC, and more. Let’s look into each feature in a little more detail.