Cognitive processes are not something that we generally pay much attention to until something goes wrong, but they cover the entire scope of us ingesting sensory information, the processing and recalling thereof, as well as any resulting decisions made based on such internal deliberation.

Within that context there has also long been a struggle between those who feel that it’s fine for humans to rely on available technologies to make tasks like information recall and calculations easier, and those who insist that a human should be perfectly capable of doing such tasks without any assistance. Plato argued that reading and writing hurt our ability to memorize, and for the longest time it was deemed inappropriate for students to even consider taking one of those newfangled digital calculators into an exam, while now we have many arguing that using an ‘AI’ is the equivalent of using a calculator.

At the root of this conundrum lies the distinction between that which enhances and that which hampers human cognition. When does one merely offload tasks to a device or object, and when does one harm one’s own cognition?

Surrender Versus Offloading

Cognitive offloading is the practice of shifting cognitive tasks to external aids, and it is thought to make learning complex tasks easier. Instead of demanding rote memorization of facts like dates of events and formulas, we can treat books as an external memory storage device and offload such precise memorization to their pages. All we then require of students is that they can find information efficiently and judge it on its merits.

An often misquoted anecdote here pertains to Albert Einstein, who was once asked why he couldn’t cite the speed of sound from memory. To this he responded with a curt:

[I do not] carry such information in my mind since it is readily available in books. …The value of a college education is not the learning of many facts but the training of the mind to think.

With this statement Einstein makes a clear case for the benefits of cognitive offloading in the sense that rote memorization does not enhance one’s cognition. Similarly, the ability to solve complicated equations and sums without so much as the use of pen and paper is fairly irrelevant when a slide rule and a digital calculator can offload all that work. As a benefit these devices tend to be more precise, faster and very accessible.

It is still important to have an intuitive feeling for whether a calculation is in the expected range, and one should never assume that what is written in a book is the absolute truth. That in a nutshell is the key difference between cognitive offloading and cognitive surrender. If you have entered a series of values into your calculator, the result seems off and you re-type them to be sure, that’s cognitive offloading.
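The range check described above can be sketched in a few lines of Python. This is only an illustration, not something from the studies discussed here: the function name `within_expected_range`, the example numbers and the tolerance factor are all hypothetical choices.

```python
def within_expected_range(result, estimate, factor=2.0):
    """Return True if `result` lies within a factor of `factor` of a rough estimate.

    The name and the default factor are illustrative assumptions.
    """
    if estimate == 0:
        return result == 0
    ratio = result / estimate
    if ratio <= 0:
        # Wrong sign (or zero): clearly not in the expected range.
        return False
    return 1.0 / factor <= ratio <= factor


# Rough mental estimate: 19.8 * 51.2 is "about 20 * 50".
estimate = 20 * 50

typed_correctly = 19.8 * 51.2   # what the calculator shows for the intended input
typed_wrongly = 198 * 51.2      # same sum with the decimal point missed

# Offloading: the device does the arithmetic, the human still judges the result.
print(within_expected_range(typed_correctly, estimate))  # plausible, accept it
print(within_expected_range(typed_wrongly, estimate))    # implausible, re-type it
```

The calculator does the multiplication in both cases. What separates offloading from surrender is whether the second check ever happens.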

If, however, you accept the outcome of such a calculation, or a text as written without a second thought, that constitutes surrendering an essential part of your cognitive processes to an external source. If we thus replace ‘calculator’ in this context with ‘LLM chatbot’ or an ‘AI summary’, the same caveat applies. Perhaps more so as at least a calculator is fully deterministic and can be proven to be mathematically correct.

So if that’s the case, and modern-day ‘AI’ isn’t really what it’s often cracked up to be, why would a presumably intelligent human being end up accepting their outputs like the literal gospel?

External Cognition

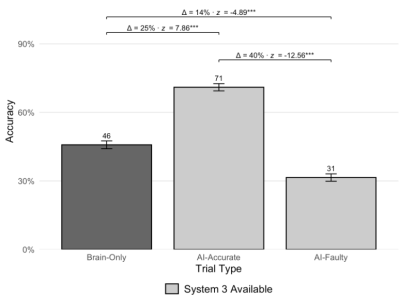

A recent study (DOI link) by Steven D. Shaw and Gideon Nave of the University of Pennsylvania investigated the prevalence of cognitive surrender in the context of LLM chatbots, looking for instances where users are seen to blindly accept the generated answers.

In this study, Shaw et al. had three groups of volunteers take a standardized test, during which one group had to rely purely on their own wits, the second group could use an LLM chatbot which gave correct answers, while a third group also had access to this chatbot, but for them it gave wrong answers.

Perhaps unsurprisingly, the test subjects used the chatbot quite a lot when available, with predictable results. In the ‘tri-system theory of cognition’ that Shaw et al. propose in the paper, the external cognitive system (‘System 3’) is that of the chatbot, whose output is clearly being accepted verbatim by a significant part of the test subjects. If said chatbot output is correct, this is great, but when it’s not, the test results massively suffer.

Where this is worrisome outside of such self-contained tests is that people are exposed to endless amounts of faulty LLM-generated text, for example in the form of the ‘AI summaries’ that search engines love to put front and center these days. Back in 2024, Avram Piltch over at Tom’s Hardware compiled an amusing collection of such faulty outputs, some of which are easier to spot than others.

Ranging from the health effects of eating nose pickings to the speed difference between USB 3.2 Gen 1 and USB 3.0, to classics like adding Elmer’s glue to pizza sauce, it’s generally possible to find where on the internet a ridiculous claim was scraped from for the LLM’s dataset, while other types of faulty output are simply due to an LLM not possessing any intelligence or essentials like grasping what a context is.

Meanwhile other types of output are clearly confabulations, a fact which ought to be obvious to any intelligent human being, and yet it seems that so much of it passes whatever sniff test occurs within the cognitive capabilities of the average person.

Making Decisions

In the generally accepted model of cognitive decision making we see two internal systems: the first is the fast, intuitive and emotion-driven system. The second is the deliberate and analytical system, which tends to take a backseat to the first system in general, but could be said to be checking the homework of the first.

Although psychology is hardly an exact science, in the scientific fields of systems neuroscience and cognitive neuroscience we can find evidence for how decisions are made in the primate brain – including those of humans – with various cortices involved in the decision-making process. Fascinating here is the activity observed in the parietal cortex where a decision is not only formed, but also apparently assigned a degree of confidence.

Lesions in the anterior cingulate cortex (ACC) have been linked to impaired decision making and the emergence of impulse control issues, as the ACC appears to be instrumental in error detection. Issues in the ACC are thus more likely to result in faulty or flawed decisions and judgements passing by uncorrected. Incidentally, the ACC was found to be heavily affected by environmental tetraethyl lead contamination, underpinning the theory that leaded gasoline was responsible for a surge in crime until this additive was discontinued.

Knowing this, we can thus say with a fairly high degree of confidence that the concept of human cognition is very much determined by the physical wiring in the pinkish-white goo that constitutes our brains. A good demonstration of this is the effect of ethanol on the brain, as well as the intense cravings that accompany addictions.

Abnormal activity in the ACC has for example been associated with alcohol addiction, with an implant suggested to adjust said neural activity as detailed in a 2020 Neurotherapeutics study by Sook Ling Leong et al. In this study eight treatment-resistant alcoholics had electrodes inserted into part of their ACC to provide direct stimulation, leading to a self-reported 60% drop in cravings.

As ethanol can freely pass through the blood-brain barrier, it is free to start binding with GABA receptors and induce the release of dopamine, along with a range of other neurological effects. These initially induce a feeling of relaxation and well-being, but ethanol also suppresses activity in various cortices, including the ACC. Effectively ethanol thus reduces one’s cognitive prowess and with it the ability to recognize flawed decisions.

From this we can deduce that activity in the ACC is essential for decision-making. It also illustrates how the pinkish goop in our skulls is a fascinating biochemistry and neurochemistry experiment, in which adding or removing certain substances and poking it with electrodes can induce a wide variety of cognitive outcomes.

Experiments aside, we started our lives off with the baseline that we were born with (‘nature’) and the various neuroplastic alterations made as we grew up (‘nurture’), which along the way led to various cognitive outcomes that we may or may not regret as adults. This leaves us free to learn from our mistakes and do better in as far as neuroplasticity allows.

Asking Why

It’s often said that the most valuable skill in life that adults tend to lose as we mature out of innocent childhood is the incessant ability to ask ‘Why?’. By questioning everything and wanting to know everything, we not only display curiosity, but also nurture the cognitive skills of our brain. If instead our environment pushes back against this, it can harm the development of such cognitive skills, even if the pushback doesn’t rise to the level of childhood trauma.

As a certified ‘nerdy kid’ back in the day who went through all the motions of being bullied, shoved into proverbial lockers and other types of physical abuse at school for having the nerve to like books, science and other ‘nerdy’ things that involved being curious, it’s hard not to feel the social pressure to simply comply and not question things. As an adult such social pressure only gets worse, with skills like critical thinking generally discouraged.

Of course, said critical thinking is exactly what we need when confronted with new technologies and the temptation to simply surrender that cognitive burden instead of asking questions. When cognitive surrender can have real consequences that may affect not just your own life but also those of others, it’s pretty much a basic survival skill to arm yourself against it.

In a world where things like politics, idols, religion, and advertising exist, the rise of this purported ‘AI’ in the form of LLM-based chatbots with their often very convincingly human-like and authoritative outputs seems to have hit the same weaknesses that unscrupulous religious leaders and scammers exploit, with sometimes tragic consequences.

Although it’s clear that believing some factual misinformation generated by a chatbot is a far cry from deciding to take fatal actions based on a dialog with said chatbot, it also highlights the importance of retaining your critical thinking skills. Although we often like to think otherwise, people aren’t fully rational beings whose cognitive processes belong completely to themselves.

Answering the question of when we harm our own cognition, it would seem that while we can generally trust a calculator, an LLM-based chatbot is not nearly as reliable or benign. Caution and awareness of the risk of cognitive surrendering are thus well-warranted.

Hacking?

I believe you are asking what this has to do with hacking. Well, I do a lot of “hacking” with SBCs and always use C or assembler. It lets me understand the hardware. I think that using an IDE or a language that hides all of that sort of information would be an example of cognitive offloading.

I Dream the Body Clockwork:

https://techxplore.com/news/2026-04-mechanical-odd.html

The Tin Man changed his mind, with the oil.

Great article.

When I was still working fixing mainframes down to the component, we had a large number of very detailed manuals. I did not (and I am sure I could not) remember everything, but what I did know was where to find the information in the correct manual. So I guess I was doing cognitive offloading.

These days so many systems are so very complicated that it would be nigh on impossible for the average human to memorise it all. In the early days an 8-bit CPU/MCU was simple enough that the experts could hold the entire datasheet and instruction set in their heads; these days even a cheap MCU is a 32-bit machine with heaps of peripherals, clocks and power modes.

I do so much based on the fact I know X must be possible and I know where to find it – there’s no way I’m memorising the entire C std library or all of the internals of Linux, but I know there is almost always an answer to be found because there are very few problems that are new.

The idea, I think, is not to memorize ‘everything’, but to have a general knowledge of how it works, and where to find the detailed knowledge to do things. You have to have a ‘firm’ foundation in your brain with some math formulas, like V=IR, as well as watts=VA or even e=mc^2. When you add a 20A circuit in a house, do you know what that means? Some things do need to be memorized. You can’t do algebra if you don’t know how to add/sub/mult/div/sqrt. You can’t think about calculus if you don’t know anything about algebra or trigonometry. It is a complex world out there and no one knows ‘everything’, even in your chosen field of study/work. A lot of things in school I don’t use (chemistry for example, or advanced calculus), but it is sure nice to have the background knowledge when someone presents an interesting article on a new compound or superconducting material, a relativity update, heat, light, sound, computer architecture, or whatever, and to actually ‘follow’ the story line because one is familiar with the ‘concepts’. That is why I am a believer in a ‘well’ rounded education. Don’t put blinders on. That goes even for knowing world history (when you are in a free country and allowed to learn it unbiased). Those that don’t know their history are doomed to repeat it.
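As a small worked example of the formulas mentioned in this comment, here is a sketch in Python of what a 20 A circuit means in terms of power. The 120 V mains voltage and the 10 ohm heater are assumptions made purely for illustration (many countries use around 230 V), and the calculation treats the load as purely resistive.

```python
def power_watts(volts, amps):
    """P = V * I, valid for a purely resistive load (ignores power factor)."""
    return volts * amps


def current_amps(volts, ohms):
    """Ohm's law V = I * R, rearranged to I = V / R."""
    return volts / ohms


MAINS_VOLTS = 120.0    # assumption: nominal North American mains voltage
BREAKER_AMPS = 20.0    # the 20 A circuit from the comment

# Upper bound on what the circuit can deliver before the breaker trips.
max_power = power_watts(MAINS_VOLTS, BREAKER_AMPS)
print(f"{BREAKER_AMPS:.0f} A at {MAINS_VOLTS:.0f} V is at most {max_power:.0f} W")

# Hypothetical resistive heater, to see how much of that budget one load uses.
HEATER_OHMS = 10.0
heater_amps = current_amps(MAINS_VOLTS, HEATER_OHMS)
heater_power = power_watts(MAINS_VOLTS, heater_amps)
print(f"Heater draws {heater_amps:.1f} A, or {heater_power:.0f} W")
```

Knowing these two relations by heart is what makes it possible to notice when a quoted wattage or current cannot be right.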

Humans are rationalizing creatures that are sometimes rational. Today, with up being down, and left being right, everyone being gaslit, we have a gong show of cognitive dissonance. Some even flaunt it and make you agree.

Enter AI… those of us old enough to know first principles, find the gaps and ask questions can spot when AI provides hallucinations. Get clearly wrong results and confront the AI with the correct answer. AI doesn’t skip a beat if you provide evidence, and immediately recalculates results. However, it will not use that info to provide others with correct results. Have fun and ask why! Confront it. If young engineering people trust AI, there will be disasters in manufacturing and construction.

For thinking to occur, you need objects of thought.

If you don’t remember a fact like the speed of sound, thinking about the matter becomes that much more difficult.

If you reduce all your knowledge to mere labels and placeholders, like “The speed of sound exists as a number”, instead of actually learning the fact, thinking becomes tedious and slow because you have to stop whatever thought process you had to find the information and then try to remember where you were before you got interrupted. When you know nothing, this happens constantly and dealing with any complex idea becomes a slog. This results in people avoiding the mental effort and frustration, which is to say, people will avoid thinking and ask Google or ChatGPT instead.

What’s worse, when it comes to more abstract and complicated matters, just holding on to the labels starts to require rote memorization. Do you remember how to describe the fact that you can’t remember, so you can search it?

Also, simply knowing a bunch of stuff means you don’t have to keep the information in your short term memory while you’re using it, which bypasses the “seven plus or minus two items” limitation about the size of a problem you can think about.

Also:

For a person who actually does know their sums and multiplication tables, because they have learned them, a calculator is really slow. For example, in my first summer job I had to work an old cash register that didn’t count cash back, so I had to do it myself, on an adjacent desktop calculator. Pretty soon I could do it faster without. I then “graduated” to counting ledgers in the old debit/credit style on paper, and I could eventually sum a whole column almost as fast as I could read it. I had to. After all, who wants to spend a whole afternoon doing boring sums?

When you know it, the answer is just there. You don’t have to think about it. Eventually, even if the calculator is right there on the table, physically moving your fingers to it demands more effort.

I know the speed of sound pretty well. It’s about 700mph in air at about room temp / pressure. I have some knowledge about things that cause it to increase and decrease (density and so on), and why it arises, and what its effect is on a wavefront, and how it all relates to differential equations, and on and on. I’m not an expert on any of these subjects but i think this familiarity serves me well and i’m glad i don’t have to “look it up” unless i want to know more than one significant figure, or specific formulas (though i like to believe, i might be able to re-derive some of the simpler ones from half-remembered first principles).

So that gives me “an intuitive feeling for whether a calculation is in the expected range”, which is more than enough for the amount that i dabble in fluid dynamics.

But i think that’s exactly where the problem lies. Google’s AI search results are very front-and-center in my life. From my perspective, that seems rather unavoidable but i know there are alternatives. And the way i can tell if the AI result is correct is if it confirms my priors. So that’s a big bummer that they are specifically punishing me for my ability to get a ballpark answer from memory.

It’s the specific thing that isn’t being off-loaded that i think is so dangerous about the current evolution. There’s a risk that all my faculties except for confirmation bias are going to become obsolete.

I thought even Americans used m/s for the speed of sound.

Who the hell uses mph, pfft

They tell kids that if you start counting when you see a lightning flash then every 3 seconds before you hear the thunder means 1 kilometer distance, it’s an easy trick. And it also comforts some kids that are scared.
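The three-seconds-per-kilometer rule can be checked with a few lines of Python. This sketch uses the common approximation for the speed of sound in dry air, c ≈ 331.3 · sqrt(1 + T/273.15) m/s with T in degrees Celsius, and assumes the flash arrives instantly. The function names are made up for this example.

```python
import math


def speed_of_sound_m_per_s(temp_celsius=20.0):
    """Approximate speed of sound in dry air at the given temperature."""
    return 331.3 * math.sqrt(1.0 + temp_celsius / 273.15)


def distance_km(delay_seconds, temp_celsius=20.0):
    """Distance to the strike from the flash-to-thunder delay.

    Assumes the light arrives instantly, which is fine at these distances.
    """
    return speed_of_sound_m_per_s(temp_celsius) * delay_seconds / 1000.0


def rule_of_thumb_km(delay_seconds):
    """The rule from the comment: every 3 seconds is about 1 kilometer."""
    return delay_seconds / 3.0


for delay in (3, 6, 9):
    exact = distance_km(delay)
    rough = rule_of_thumb_km(delay)
    print(f"{delay} s: formula {exact:.2f} km, rule of thumb {rough:.2f} km")
```

At room temperature the rule of thumb comes out a few percent short, which is well within what counting seconds in your head can resolve.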

hahah i not only use mph, but i also use the conversion factor 1mph = 1ft/sec. take that, precision!

(it does become a bummer when you consider the corollary, 2mph=1m/s, sigh)
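For comparison, the exact conversion factors behind these deliberately rough ones can be worked out in Python from the definition of the international mile (1609.344 m, or 5280 ft). The helper names are illustrative only.

```python
FEET_PER_MILE = 5280
METERS_PER_MILE = 1609.344   # international mile, exact by definition
SECONDS_PER_HOUR = 3600


def mph_to_ft_per_s(mph):
    """Convert miles per hour to feet per second."""
    return mph * FEET_PER_MILE / SECONDS_PER_HOUR


def mph_to_m_per_s(mph):
    """Convert miles per hour to meters per second."""
    return mph * METERS_PER_MILE / SECONDS_PER_HOUR


def m_per_s_to_mph(m_per_s):
    """Convert meters per second to miles per hour."""
    return m_per_s * SECONDS_PER_HOUR / METERS_PER_MILE


print(f"1 mph = {mph_to_ft_per_s(1):.3f} ft/s")
print(f"2 mph = {mph_to_m_per_s(2):.3f} m/s")
print(f"343 m/s = {m_per_s_to_mph(343):.0f} mph")
```

The rounded factors are off by roughly 47% and 12% respectively, which is the price of being able to do the conversion without reaching for a calculator.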

BTW, the trick I mentioned can also be used for other things, like when you see a video of a gunship gunning down people for instance, you hear the bangs then see the impact a number of seconds later and you can then tell how many kilometers away it was by counting how many counts it takes, and every 3 secs is around one kilometer (or every 1 sec is around 331 meters give or take).

That is something you can do without taking out a timer and doing many calculations, and you can even do it from a video you don’t have replay access to.

AFAIK subsonic ammunition goes just shy of the speed of sound, correct me if I’m wrong. I’m not a person into weapons and stuff so I don’t have deep knowledge.

If you see the shot hit the target then count the number of seconds before you hear the shot hit the target, then you can tell about how far from the camera the target was.

You cannot tell much about the shot itself. The slugs from the guns travel at different speeds. If you hear the bang of the shot being fired then see where it hits, that tells you the travel time from the gun to the target but not the distance.

Some weapons fire rounds faster than sound, some fire rounds slower than sound. Without knowing the weapon and the loaded ammunition, you can’t make much of an estimate.

You can hear the difference between a shotgun, a 22, a high power rifle and some clown with an oxyacetylene setup, balloons and cannon fuse.

Can be tricky, distance reduces confidence greatly.

Bang to thunk, doesn’t even tell you flight time.

Unless you know ur on top of the gun.

Could be ‘thunk to bang’ time.

Perhaps the normies should not use AI if it is bad for them, however I am perfectly happy to be free to ponder higher category and type theory while the glorious calculators handle all of the first-order predicate logic operations.

While munching some strawberries no doubt.

Hugo be the soil, Hugo be a machine. Hugo like hacks as representation of cognitive reactions of his slime ganglia and converting his subjective sensing of reality in reward earning way. Hugo’s way. Yes, the secrets of our electrochemical computer behind the eyes are fascinating.

I am addicted to asking why?

Any new subject of inquiry starts with the history of the subject, knowing where, and WHY solutions became what they are, is equally as important as how to implement them.

Well, to me at least.

Much better than asking “why?”, is asking “how?”.

Knowledge isn’t power; power is power, and knowledge without power is just trivia.

If your knowledge doesn’t lead to something controllable or predictable (for yourself, in practice, not in theory), it may as well be religion.

Too many self-proclaimed “nerds” get caught in this trap where they think knowing facts and figures improves their lives in some way, when it actually just makes them a more efficient cog in a machine they have no control over.

When learning, always filter the information through the question of “when will I ever use this?” before allowing it to become your own knowledge.

Turns out, that kid in the back of the class was right the whole time.

This is the best comment I’ve seen on the internet in a long time….

Nuf said.

It is impossible to know what knowledge will come in handy, or even might become very important to you at some point.

Learn it when you need it.

Your brain is a muscle. (an untoned, untrained, sloppy, scrawny muscle…not all of you, just Anon.)

You had a decade, maybe a little more, where it really sucked up information.

During that period you were the most clueless you will ever be…Even worse than now.

If your younger self had used your method, you would today be incapable of learning anything non-trivial.

Also Math in particular ‘stacks’.

If you miss something important and don’t catch it, you are F’ed.

Better luck next reincarnation.

This time around you have chosen the ‘fat, dumb and happy’ method.

What happened to doing things just for fun?

Keep streamlining your life like this and you end up like any other corporate drone on antidepressants.

Interesting theory.

Must be pretty depressing to know at age 15 exactly where you’ll end up when you’re 45, or even 25. Especially if you’re asking questions like “When will I ever use math, English, physics, chemistry…”, you know, the usual subjects that the kid at the back of the class was struggling with. It leaves out a lot of options for what you can do with your life.

Also, the above Dude posting anime memes is fake. I don’t like anime.

If it turns out you need to know something later, learn it then. Not sure what’s so hard to understand.

Historically a lot has been learned about how the human brain works by how it fails. Mostly from people who had ‘normal’ working brains before some major life changing accident.

e.g. A brain surgeon in a car accident who suffered major head trauma to one side of their brain eventually went on to create stained glass windows of human brains. With the logical side seriously damaged, the creative side became dominant. Oddly enough, because her stained glass windows of brains were so medically accurate, even though 2D, her core customers were brain surgeons globally.

So Truth.. is that a story some AI told you?