If you measure a DC voltage, and want to get some idea of how “big” it is over time, it’s pretty easy: just take a number of measurements and take the average. If you’re interested in the average power over the same timeframe, it’s likely to be pretty close (though not identical) to the same answer you’d get if you calculated the power using the average voltage instead of calculating instantaneous power and averaging. DC voltages don’t move around that much.

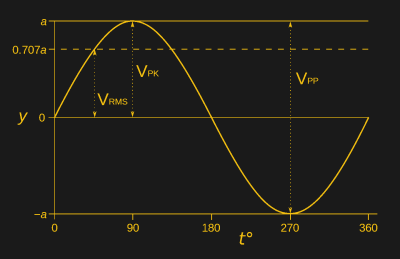

Try the same trick with an AC voltage, and you get zero, or something nearby. Why? With an AC waveform, the positive voltage excursions cancel out the negative ones. You’d get the same result if the switch were flipped off. Clearly, a simple average isn’t capturing what we think of as “size” in an AC waveform; we need a new concept of “size”. Enter root-mean-square (RMS) voltage.

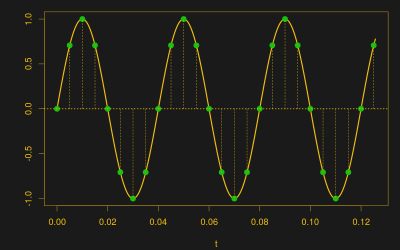

To calculate the RMS voltage, you take a number of voltage readings, square them, add them all together, and then divide by the number of entries in the average before taking the square root: $V_{RMS} = \sqrt{\frac{1}{n}\sum_{i=1}^{n} v_i^2}$. The rationale behind this strange averaging procedure is that the resulting number can be used in calculating average power for AC waveforms through simple multiplication as you would for DC voltages. If that answer isn’t entirely satisfying to you, read on. Hopefully we’ll help it make a little more sense.
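Here is a minimal Python sketch of that recipe, assuming evenly spaced samples that cover exactly one full cycle (the test signal and the sample count are made up for illustration). The plain average of the sine comes out at essentially zero, while the RMS lands at about 0.707, the amplitude divided by sqrt(2).

```python
import math

def rms(samples):
    """Root-mean-square: square each sample, take the mean, then the square root."""
    if not samples:
        raise ValueError("need at least one sample")
    return math.sqrt(sum(v * v for v in samples) / len(samples))

# Hypothetical test signal: one full cycle of a 1 V-amplitude sine, 1000 evenly spaced samples.
N = 1000
sine = [math.sin(2 * math.pi * k / N) for k in range(N)]

print(sum(sine) / N)   # plain average: essentially zero
print(rms(sine))       # about 0.7071, i.e. 1/sqrt(2)
```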

Necessity

When it comes to averages, the ideas of “big” and “little” for AC and DC voltages are fundamentally different. DC waveforms are roughly constant, and what matters is the distance from zero. AC waveforms are always wiggling around a center point, and this is often ground. If the waveform is symmetric, and you take enough samples, it’s going to average out to zero.

One way to measure the size of AC voltages is to take the difference between the maximum and the minimum over time: the peak-to-peak voltage. Another possibility would be to take the absolute value of each voltage and average them together. That works too. A third choice is to square all of the individual voltage measurements before adding them up. This has the same effect as taking the absolute value (all of the individual terms are positive now and don’t cancel out) and has the additional side-effect of weighting the big values much more heavily than the small ones. Which do we choose?
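To put the three candidates side by side, this small Python sketch applies each one to one cycle of a 1 V-amplitude sine (again a made-up test signal). For a sine, expect roughly 2, 0.637 (that is, 2/pi), and 0.707 (1/sqrt(2)) respectively.

```python
import math

def peak_to_peak(samples):
    """Distance between the highest and lowest sample."""
    return max(samples) - min(samples)

def mean_abs(samples):
    """Average of the absolute values (rectify, then average)."""
    return sum(abs(v) for v in samples) / len(samples)

def rms(samples):
    """Square, average, square-root."""
    return math.sqrt(sum(v * v for v in samples) / len(samples))

N = 1000
sine = [math.sin(2 * math.pi * k / N) for k in range(N)]

for name, measure in [("peak-to-peak", peak_to_peak), ("mean |v|", mean_abs), ("RMS", rms)]:
    print(f"{name:>12}: {measure(sine):.4f}")
```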

Physics

Using the squared voltages in the average gets the physics right. If you’re interested in the power that you can get out of the AC signal, it’s the squares of the voltage that are relevant anyway. Let’s pretend you’re driving a resistive load for now — maybe you’re heating your apartment or using an electric stove — and do a tiny bit of algebra.

Remember that power is equal to the current flowing through our imaginary device times the voltage being dropped across it: P = IV. And who could forget Ohm’s Law? V = IR or I = V / R. Put them together, and P = V² / R. The power in the system, at any given instant, is proportional to the voltage squared. The average power over time is thus proportional to the average of the squared voltages. Sounding familiar? Since the average of squared instantaneous voltages is in units of volts squared, taking the square root at the end (“root of the mean of the squares”) brings it on home.

The same logic holds for RMS current measurements as well. Substituting Ohm’s Law the other way, you get P = I² R and power is proportional to current squared. Average current in a balanced AC waveform is zero, but RMS-averaged current, squared, is proportional to power.
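A numeric check of that algebra, assuming a purely resistive 10 ohm load and a 170 V-amplitude sine (both values hypothetical). Averaging the instantaneous power P = IV sample by sample gives the same number as plugging the RMS voltage into V²/R or the RMS current into I²R: about 1445 W here.

```python
import math

R = 10.0          # hypothetical resistive load, ohms
V_PEAK = 170.0    # hypothetical sine amplitude, volts
N = 1000

v = [V_PEAK * math.sin(2 * math.pi * k / N) for k in range(N)]
i = [vk / R for vk in v]                            # Ohm's law, applied instant by instant

p_avg = sum(vk * ik for vk, ik in zip(v, i)) / N    # average of the instantaneous P = I*V

v_rms = math.sqrt(sum(vk * vk for vk in v) / N)
i_rms = math.sqrt(sum(ik * ik for ik in i) / N)

print(p_avg)            # average instantaneous power
print(v_rms ** 2 / R)   # same number, from the RMS voltage
print(i_rms ** 2 * R)   # same number, from the RMS current
```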

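If you have an AC voltage that’s riding on top of a DC component, the RMS value still delivers. Write each sample as $V_{DC} + v_i$. When you square it, the squared DC component adds up n times before dividing by n again, the cross terms $2 V_{DC} v_i$ average out to zero because the AC part has zero mean, and you get $V_{RMS} = \sqrt{V_{DC}^2 + \frac{1}{n}\sum_{i=1}^{n} v_i^2}$, where $v$ is just the pure AC voltage.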
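A quick numeric check of the DC-plus-AC formula, assuming a hypothetical 5 V offset with a 2 V-amplitude sine on top. Because the cross terms cancel over a full cycle, the direct RMS and the quadrature sum of the DC and AC parts both come out at sqrt(27), about 5.2 V.

```python
import math

def rms(samples):
    return math.sqrt(sum(x * x for x in samples) / len(samples))

V_DC = 5.0   # hypothetical DC offset, volts
N = 1000
ac = [2.0 * math.sin(2 * math.pi * k / N) for k in range(N)]   # zero-mean AC part
total = [V_DC + x for x in ac]

print(rms(total))                             # RMS of the combined waveform, computed directly
print(math.sqrt(V_DC ** 2 + rms(ac) ** 2))    # DC and AC parts combined in quadrature
```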

Rules of Thumb

One place you’ll see RMS voltages is in mains power. Indeed, the 120 V in the US (or 230 V in the EU) coming out of your walls right now is an RMS figure. For sine waves, like what you get from the electrical company, the peak voltage is a factor of sqrt(2) higher than the RMS voltage. The peak voltage in the States is something like 120 V * sqrt(2) = 170 V, and the peak-to-peak is 340 V. That’s 650 V peak-to-peak in Europe; yikes!

This also means that if you’re lacking an RMS meter and need a quick-and-dirty estimate of something that’s sine-wave-like, you can take the amplitude and divide by 1.414, or take the peak-to-peak and divide by twice that (about 2.83).
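The mains arithmetic and the quick-and-dirty conversions, sketched in Python. The helper names are made up for illustration, and the conversions assume a clean sine wave; on distorted waveforms they will be off.

```python
import math

def rms_from_peak(v_peak):
    """Sine waves only: RMS = peak / sqrt(2), i.e. roughly peak / 1.414."""
    return v_peak / math.sqrt(2)

def rms_from_peak_to_peak(v_pp):
    """Sine waves only: RMS = peak-to-peak / (2 * sqrt(2)), i.e. roughly v_pp / 2.83."""
    return v_pp / (2 * math.sqrt(2))

# Going the other way, from the nominal mains voltages (which are RMS figures).
for region, v_rms in [("US", 120.0), ("EU", 230.0)]:
    v_peak = v_rms * math.sqrt(2)
    v_pp = 2 * v_peak
    print(f"{region}: {v_rms:.0f} V RMS -> {v_peak:.1f} V peak, {v_pp:.1f} V peak-to-peak")
```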

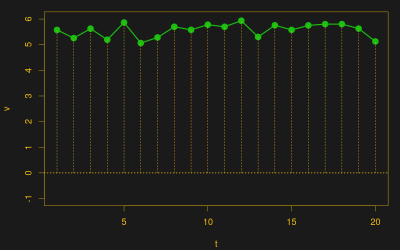

Another waveform you might care about is the PWM’ed square wave that we often use to drive motors from microcontrollers. Clearly, if you alternate between zero volts and twelve volts, it’s only supplying power to the motor when it’s at twelve volts. Correspondingly, you won’t be surprised to hear that the RMS voltage of a PWM waveform is the on-voltage times the square root of the duty cycle: $V_{RMS} = V_{on}\sqrt{D}$, where $D$ is the fraction of each period spent on.
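A sketch comparing the sampled RMS of an ideal PWM waveform with the V_on * sqrt(D) formula. It assumes instant transitions and exactly 0 V when off; the 12 V level and the duty cycles are hypothetical. The plain average, V_on * D, is printed too, to show that it is a different number.

```python
import math

def pwm_samples(v_on, duty, n=1000):
    """One period of an ideal PWM wave: v_on for the first duty*n samples, 0 V after that."""
    on_count = round(duty * n)
    return [v_on] * on_count + [0.0] * (n - on_count)

def rms(samples):
    return math.sqrt(sum(x * x for x in samples) / len(samples))

V_ON = 12.0
for duty in (0.1, 0.25, 0.5, 0.9):
    measured = rms(pwm_samples(V_ON, duty))
    formula = V_ON * math.sqrt(duty)
    print(f"duty {duty:.2f}: RMS {measured:.3f} V, "
          f"V_on*sqrt(D) {formula:.3f} V, average {V_ON * duty:.3f} V")
```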

Wikipedia has you covered for triangle waves and other funny waveforms.
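As one example of those other waveforms, this sketch samples a symmetric triangle wave (amplitude 1, swinging from -1 to +1; the `triangle` helper is written just for this example) and compares its RMS with the standard value for a triangle wave, the amplitude divided by sqrt(3).

```python
import math

def rms(samples):
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def triangle(t):
    """Symmetric triangle wave, period 1: rises from -1 to +1, then falls back to -1."""
    t = t % 1.0
    return 4 * t - 1 if t < 0.5 else 3 - 4 * t

N = 100_000
tri = [triangle(k / N) for k in range(N)]
print(rms(tri), 1 / math.sqrt(3))   # both close to 0.577
```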

RMS Everywhere

It turns out that you’re often concerned with squared quantities. Kinetic energy is proportional to speed squared, for instance, so RMS speed is what links the temperature of a gas to the motion of its molecules. If you have a measurement procedure that may be right on average, but you’re worried about the spread of the results as well, you might like to minimize RMS error. The statistician’s concept of standard deviation is similar, with the average value subtracted off beforehand.
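A short sketch of both ideas, using made-up readings of a quantity whose true value is assumed to be 10.0. Subtracting the average before taking the RMS reproduces the population standard deviation from Python's `statistics.pstdev`, and the RMS error splits into spread and bias in quadrature, the same way the DC and AC parts of a voltage combine.

```python
import math
import statistics

def rms(values):
    return math.sqrt(sum(x * x for x in values) / len(values))

# Hypothetical repeated measurements; the instrument reads a little high on average.
readings = [10.0, 10.3, 10.1, 9.9, 10.2]
true_value = 10.0

rms_error = rms([r - true_value for r in readings])   # RMS of the errors

mean = statistics.fmean(readings)
std_as_rms = rms([r - mean for r in readings])        # subtract the average first, then RMS

print(rms_error)
print(std_as_rms, statistics.pstdev(readings))        # population standard deviation matches
print(math.sqrt(std_as_rms ** 2 + (mean - true_value) ** 2))   # spread and bias in quadrature
```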

You even calculate the hypotenuse of a right triangle by the same procedure, just without dividing by n. (OK, that’s a stretch, but square roots of sums of squares are everywhere!) I’m going to leave it to the mathematical philosophers among you to duke it out in the comments as to why the L2 norm appears so often. For the electrical hackers out there, it’s enough to remember the Ohm’s law rationale: when you’re interested in power, you’re interested in squares.
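The same root-sum-of-squares in Python: `math.hypot` gives the hypotenuse directly, and dividing the L2 norm of a list of samples by sqrt(n) turns it back into an RMS value. The sample values are made up for illustration.

```python
import math

# Right triangle with legs 3 and 4: hypotenuse = sqrt(3^2 + 4^2).
print(math.hypot(3, 4))                            # 5.0

samples = [1.0, -2.0, 2.0, -4.0]
l2_norm = math.sqrt(sum(x * x for x in samples))   # root of the sum of squares, no divide by n
rms_value = l2_norm / math.sqrt(len(samples))      # RMS is the L2 norm scaled by 1/sqrt(n)
print(l2_norm, rms_value)                          # 5.0 2.5
```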

In the ’70s there was an interesting “battle” between dbx and Dolby.

Dolby’s system had problems with compression/expansion on tape because the low frequencies would get shifted in phase by the recording/playback process. And Dolby used average, not RMS, detection. So expansion did not match compression. You need a system that ignores the phase shifting, which RMS does.

We (dbx) used true RMS detection. And our VCAs were heads above the simple gain control circuit of the competition.
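The phase claim can be illustrated with a small Python sketch. It is not a model of either noise-reduction system: it builds a hypothetical test tone from a fundamental plus a half-amplitude third harmonic, shifts only the harmonic's phase, and compares true RMS with a rectified average (mean of |x|), used here as a stand-in for average-responding detection. The RMS stays put at about 0.79, while the rectified average moves noticeably.

```python
import math

def rms(samples):
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def rectified_average(samples):
    """Mean of |x|: a stand-in for an average-responding (rectify-and-smooth) detector."""
    return sum(abs(x) for x in samples) / len(samples)

def two_tone(phase_deg, n=3000):
    """One period of a fundamental plus a half-amplitude third harmonic shifted by phase_deg."""
    phi = math.radians(phase_deg)
    return [math.sin(2 * math.pi * k / n) + 0.5 * math.sin(3 * 2 * math.pi * k / n + phi)
            for k in range(n)]

for phase in (0, 90, 180):
    x = two_tone(phase)
    print(f"phase {phase:3d} deg: RMS {rms(x):.4f}, "
          f"rectified average {rectified_average(x):.4f}")
```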

But like many tech things – the better technology doesn’t always win.

Of course that was another life ago, and I was just an employee (product engineer) at dbx. But fun times.

Wasn’t Dolby-processed audio more “listenable” without decoding than dbx? That is, a pre-encoded tape played in a deck that didn’t support decoding. Or am I thinking of something else?

Regardless, I remember thinking that dbx was superior.

I recall being taught that the RMS of AC was the heating equivalent of DC.

That works too! Why? Because heat = power, and power is proportional to V^2 (or I^2), and RMS is the square root of an average of squared quantities.

(Man, I could have saved a lot of typing…)

We were taught this very graphically.

RMS is the *area* between a complex (or simple) waveform and the x axis. It is also called the Integral.

The vector that is the tangent to the wave form at any specific point is called the Derivative.

Now add a wave that is Proportional to the original wave and you have the components of PID.

The RMS voltage was also called the DC effective voltage because it would have the same effect as that DC voltage into a DC load.

The sqrt(2) or 1/sqrt(2) appears everywhere in linear power supply design or anything really to do with AC voltage / current / power from a sine wave.

Be careful about what you were taught, or what you remember. Most of the above is wrong. Here’s some hand-waving about the math that might make things clearer.

Averages are a lot like integrals in that they’re sums. The average is taken over a discrete number of points, and the integral is the sum over _all_ of the infinitesimal points. In the average, you divide by N. In the integral, you multiply by the width of the infinitesimal, dx. One way of conceiving of integrals, actually, is as the average value, taken as the number of samples in the average goes to infinity, multiplied by the range. https://en.wikipedia.org/wiki/Riemann_integral

The integral under a centered sine wave is zero, just like with the average. RMS is built on the integral of the squared value, sin(x)^2, which keeps the positive half-cycle from cancelling the negative. Just like the RMS average, it’s the squaring that works the magic.

http://www.wolframalpha.com/input/?i=integrate+sin(x)+from+0+to+2*pi

http://www.wolframalpha.com/input/?i=integrate+sin(x)%5E2+from+0+to+2*pi
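The “average times range” view of an integral is easy to try numerically. This minimal sketch uses a midpoint Riemann sum (the `riemann_integral` helper is written for this example) to reproduce the two Wolfram Alpha results linked above: essentially 0 for sin(x) and pi for sin(x)^2 over one full cycle.

```python
import math

def riemann_integral(f, a, b, n=100_000):
    """Midpoint Riemann sum, written as the average of n samples of f times the range (b - a)."""
    width = (b - a) / n
    samples = [f(a + (k + 0.5) * width) for k in range(n)]
    average = sum(samples) / n
    return average * (b - a)

two_pi = 2 * math.pi
print(riemann_integral(math.sin, 0, two_pi))                     # essentially 0
print(riemann_integral(lambda x: math.sin(x) ** 2, 0, two_pi))   # close to pi
```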

And that’s exactly where the 1/2 (or 1/sqrt(2)) that you’re used to seeing comes from. The integral of sin^2 over [0,2pi) is pi. Divide that by the range (2pi), and you get 1/2. Take the square root of that, and there’s your factor between RMS voltage and peak voltage. (That only works when you’ve got a sine wave.)
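The same steps written out, using the identity sin^2 x = (1 - cos 2x)/2 and assuming a sine of amplitude $V_{peak}$:

```latex
\int_0^{2\pi} \sin^2 x \, dx
  = \int_0^{2\pi} \frac{1 - \cos 2x}{2} \, dx
  = \left[ \frac{x}{2} - \frac{\sin 2x}{4} \right]_0^{2\pi}
  = \pi

\frac{1}{2\pi} \int_0^{2\pi} \sin^2 x \, dx = \frac{1}{2},
\qquad
V_{RMS} = V_{peak} \sqrt{\frac{1}{2\pi} \int_0^{2\pi} \sin^2 x \, dx} = \frac{V_{peak}}{\sqrt{2}}
```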

(This is stuff that didn’t make the cut for the article — already too mathy — but that might interest the interested reader.)

I haven’t thought about the PID stuff, but it strikes me as only loosely related. Not sure if any of that is right or wrong, but my spider sense is tingling.

And there we are ;)

But your explanation is much better than mine was:

http://hackaday.com/2017/04/04/the-shocking-truth-about-transformerless-power-supplies/#comment-3503810

You know, I couldn’t resist telling my RMS story, but then I remembered I already had: https://hackaday.com/2016/08/04/root-mean-square/

I couldn’t believe a EE professor at a fairly well-respected school would say something like that!

The whole thread is full of win.

I enjoy Elliot Williams’s articles a lot more than those by Al Williams.

Based on the questionable quality of several of his own articles, I don’t think *Al* should be bragging about putting a professor in his place about RMS.

In contrast, Elliot’s articles are usually thorough, well thought out, and informative.

Well, I think we have identified Al’s professor. Nothing against Elliot, but I enjoy the articles that both Williamses write. And I learn a lot from both of them and all the other guys too. I will say that a lot of the small blog posts are kind of dodgy for all of the writers, but that’s why I don’t have to read the ones that don’t look interesting to me.

That should be P = i^2 / R in one of the grey little magic CSS box thingies….

P = V^2/R

P = I^2 x R

P = IV and V = IR, so P = IxIxR = I^2 x R

P = IV and I = V/R so P = V^2/R

What Steve said. P = IV , V = IR. P = I^2 R

But thanks for the heads-up on the CSS magic box thingy. They play hell with fancy math formatting. Fixed.

MathML would be nice.

Rather P = (I^2) * R:

P = I*V and V = I*R, so P = I*(I*R). Not [P = I^2^ R], wat?

(V/R) noticed that too :P But it was a laughably long time before I realized the P was also the rate of heat energy getting wasted at every nonzero R during nonzero I.

I like these kinds of articles, but if there is some math in them, please also use formulas and not only words.

If it is math, don’t be afraid to use notation. I am sure people will still read with the same enthusiasm if the current verbosity is kept :)

That stifles me a bit. It’s easy to do things like n^2 or sqr(n) or sqrt(n), but when it comes to integrals and derivatives the keyboard is not a useful tool.

What do you mean?

I mean that a basic every day keyboard doesn’t have the symbols on it to express more complex math.

I am sorry, but this was only the author’s choice, not due to a lack of tools; that is where my suggestion comes from.

They have a whole website platform, and even on a basic free WordPress site you can write whatever you want in LaTeX. So that is not an excuse :)

And there are also other, cruder methods and workarounds…

And there is no magic keyboard for writing formulae, but math still exists…

Hi,

You can use an online calculator to compute RMSE. It is free and simple: http://agrimetsoft.com/calculators/Root%20Mean%20Square%20Error.aspx