If you measure a DC voltage, and want to get some idea of how “big” it is over time, it’s pretty easy: just take a number of measurements and take the average. If you’re interested in the average power over the same timeframe, it’s likely to be pretty close (though not identical) to the same answer you’d get if you calculated the power using the average voltage instead of calculating instantaneous power and averaging. DC voltages don’t move around that much.

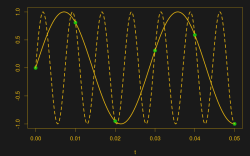

Try the same trick with an AC voltage, and you get zero, or something nearby. Why? With an AC waveform, the positive voltage excursions cancel out the negative ones. You’d get the same result if the flip were switched off. Clearly, a simple average isn’t capturing what we think of as “size” in an AC waveform; we need a new concept of “size”. Enter root-mean-square (RMS) voltage.

To calculate the RMS voltage, you take a number of voltage readings, square them, add them all together, and then divide by the number of entries in the average before taking the square root: . The rationale behind this strange averaging procedure is that the resulting number can be used in calculating average power for AC waveforms through simple multiplication as you would for DC voltages. If that answer isn’t entirely satisfying to you, read on. Hopefully we’ll help it make a little more sense.