[Zack] watched a video of [Dan Tepfer] using a computer with a MIDI keyboard to play automatic fills while he performed. He decided he wanted to do better and set out to create an AI that would learn, in real time, how to insert style-appropriate tunes into the gaps in the human performance.

If you want the code, you can find it on GitHub. However, the really interesting part is the log of his experiences, successes, and failures. If you want to see the result, check out the video below, in which he riffs for about 30 seconds and the AI takes over the melody whenever the performer stops.

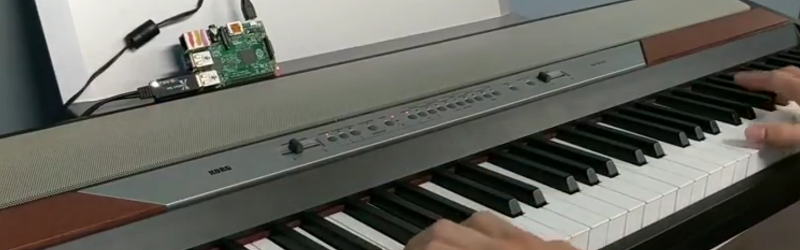

The initial version uses Python, but later versions use Go. [Zack] tried a few different algorithms, ranging from simply echoing the human player to building a Markov chain from the prior notes. The initial results were not very good, and there are videos of the early trials. Part of the problem was that the Python implementation was slow; the Go version performs better.

[Zack] also tried neural nets. However, that didn’t pan out well either. Finally, he settled on a modified Markov chain algorithm based on his own style of play.

We would love to see this AI matched up with the right actuators. Or perhaps it should be built right into a piano.

Like autotune for instruments… the algo suits jazz particularly… nice.

So, Markov chain generators are AI?

It would be simpler to write a few hundred “licks” from different famous solos, compositions, and styles, transpose them into the keys, modes, and rhythms being used, and output them randomly. Being in the right key and rhythm, it should sound all right. The AI part could be that it learns new licks from you.

Ugh. There are enough assembled riffs and sounds posing as music already. Kawai used this in portable keyboards hyped as “ad lib”.

Ray Kurzweil is right, watch out for AI and don’t let it come into our human sphere.

That’s right, it will mess everything up.

Jazz is an accident.

Posting is an accident too.

Spinal Tap on Jazz