Passwords are in a pretty broken state of implementation for authentication. People pick horrible passwords and use the same password all over the place, firms fail to store them correctly and then their databases get leaked, and if anyone’s looking over your shoulder as you type it in (literally or metaphorically), you’re hosed. We’re told that two-factor authentication (2FA) is here to the rescue.

Well maybe. 2FA that actually implements a second factor is fantastic, but Google Authenticator, Facebook Code Generator, and any of the other app-based “second factors” are really just a second password. And worse, that second password cannot be stored hashed in the server’s database, which means that when the database is eventually compromised, your “second factor” blows away with the breeze.

Second factor apps can improve your overall security if you’re already following good password practices. We’ll demonstrate why and how below, but the punchline is that the most popular 2FA app implementations protect you against eavesdropping by creating a different, unpredictable, but verifiable, password every 30 seconds. This means that if someone overhears your login right now, they wouldn’t be able to use the same login info later on. What 2FA apps don’t protect you against, however, are database leaks.

And you should absolutely be concerned about database leaks. Did you have a Yahoo account in 2013? Well, they got hacked. In late 2016 they revealed (three years later!) that a password database with a jaw-dropping 500 million passwords was breached. In December 2016, they upped that figure to a mind-blowing 1 billion. Well, it was actually 3 billion. That’s every password they had, at Yahoo! and all of their subsidiary services.

But Yahoo! is not alone, even if the scale makes it unique. Name a large company, and they’ve probably been hit. It’s to the point that responsible services protect their passwords in ways that are designed assuming the database is eventually compromised. Assuming that all databases will eventually be compromised is equivalent to assuming that 2FA as it’s implemented at Google, Facebook, Dropbox, Microsoft, Twitter, Amazon Web Services, and almost all the rest, will eventually be broken.

But this is Hackaday, and we understand things best by taking them apart. So I’m going to step quickly through how 2FA apps work, and then show you how you can implement it yourself if you want in a few lines of Python. Along the way, you’ll see for yourself why 2FA app secrets can’t be stored as securely as passwords can, and why a good strong password is still important. None of this is news, and this is not a hack, but taking a look inside the black box helps you assess security claims for yourself.

2FA and TOTP

Two-factor is great in theory. Instead of just relying on a password, “something you know” in the jargon, you combine another factor for authentication: “something you have” or “something you are”. Ideally, this means requiring possession of a cellphone or security token, or presenting your fingerprint to be scanned. In theory, there’s no difference between theory and practice.

In practice, because of cost and convenience, most 2FA implementations use an app that authenticates using the time-based one-time password (TOTP) algorithm. That is, it’s just another password. In particular, Google’s Authenticator app and the WordPress interface which I’m currently using implement “something I have” by storing this one-time password on my cellphone.

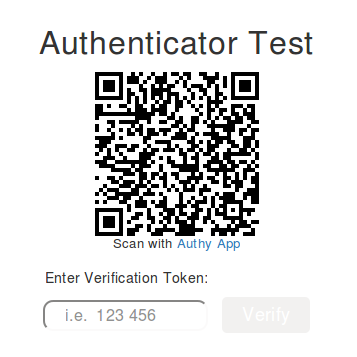

Remember that QR code on the screen when you enrolled your phone? That was the password. You could tell me this secret password, and then I’d know your account token too. With access to this initial password and a little code, I can log in without having a cell phone at all, much less yours. This is “something you know” rather than “something you have”. If you think this is semantics, let’s compare the security properties of SMS-based 2FA (which is 2FA) and app-based “2FA” which isn’t.

To fake an SMS-based 2FA query, someone has to have access to your phone number and receive a six-digit code, or at least overhear it along the way. Unless you’re being targeted by hackers with very significant resources, they’re not going to redirect phone traffic to hack you. And in the event that the SMS-number database gets compromised, the worst that happens is that the hackers can call you up. (At least in theory. In practice, the few SMS systems I’ve tested simply contain the current value of my TOTP password, which means that it’s just as vulnerable as the application. They could do much better: by sending a random number, for instance.)

To fake an app-based 2FA query, someone has to know your TOTP password. That’s all, and that’s relatively easy. And in the event that the TOTP-key database gets compromised, the bad hackers will know everyone’s TOTP keys.

How did this come to pass? In the old days, there was a physical dongle made by RSA that generated pseudorandom numbers in hardware. The secret key was stored in the dongle’s flash memory, and the device was shipped with it installed. This was pretty plausibly “something you had” even though it was based on a secret number embedded in silicon. (More like “something you don’t know?”) The app authenticators are doing something very similar, even though it’s all on your computer and the secret is stored somewhere on your hard drive or in your cell phone. The ease of finding this secret pushes it across the plausibility border into “something I know”, at least for me.

TOTP algorithms are far from worthless, however. The beauty of these algorithms is that the one-time secret password is hashed with some other number that’s common knowledge to me and the server — sometimes it’s a simple counter. This generates a different “password” for every value of the counter. Because the hash function is one-way, you can’t figure out what my secret was even if you intercept the hashed value and know the counter. Contrast this with a regular password; if it’s overheard in transmission, the attacker knows it forever.

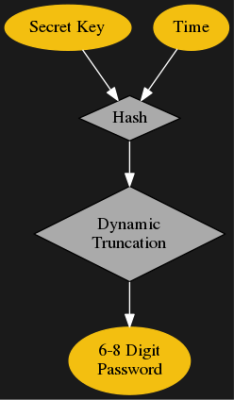

In most TOTP implementations, the counter is the number of 30 second intervals that have elapsed since Jan 1, 1970 — the Unix epoch. This gives you a different, strong, password every 30 seconds. Practically, servers will accept either the previous, current, or next values to allow for clocks to go a little out of sync, but after a minute or so, that old hashed value is useless to an attacker. That’s pretty cool.
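As a rough sketch of that counter arithmetic (the function names and the one-step tolerance are illustrative assumptions, not taken from any particular server), the time counter and the window of counters a tolerant server might accept could be computed like this:

```python
import time

STEP_SECONDS = 30  # the interval most TOTP deployments use


def time_counter(unix_time, step=STEP_SECONDS):
    """Number of whole `step`-second intervals since the Unix epoch."""
    return int(unix_time) // step


def accepted_counters(unix_time, drift=1, step=STEP_SECONDS):
    """Counters a tolerant server might accept: previous, current, next."""
    current = time_counter(unix_time, step)
    return [current + i for i in range(-drift, drift + 1)]


if __name__ == "__main__":
    now = time.time()
    print("current counter:", time_counter(now))
    print("accepted counters:", accepted_counters(now))
```

With a drift of one step in each direction, an intercepted code stops being accepted somewhere between 30 and 60 seconds after its own interval ends.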

But it’s not “something you have” or “something you are” and it’s not safe against database compromises. Want proof? Let’s make our own.

DIY

To make your own Authenticator, all you need is the password. Usually this is conveyed to your cell phone in the form of a QR code. You download their app, point your phone at the screen, and it converts the QR code into the 80-bit password. But you don’t want the QR code, so refuse it. Click “can’t use the QR code” or “manual entry” or whatever until you get to a code that you could write down. Some sites give you hexadecimal, others give you base-32, but you’ll soon be looking at 16-20 letters and numbers. That is the TOTP key that’s going to be hashed with the time counter to generate the session passwords.

As for the secret one-time password itself, the standard that almost all websites adhere to is pretty good at 80 bits — presumably of full entropy. If you’re using a good human-chosen password right now, you’re probably around 30 bits. “Correct horse battery staple” only gets you 44 bits. So 80 bits is looking pretty good, and you won’t be re-using the same secret across different web domains either.
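The bit counts above are easy to check. The sketch below assumes a base-32 key of 16 characters and, for the passphrase figure, four words drawn at random from a 2048-word list (the assumption behind the usual 44-bit number). The helper `new_totp_secret` is hypothetical, and only shows what an 80-bit key looks like when it is freshly generated:

```python
import base64
import math
import secrets

# A base-32 character carries log2(32) = 5 bits, so 16 characters hold 80 bits.
key_bits = int(16 * math.log2(32))

# Four random words from a 2048-word list: 4 * log2(2048) = 44 bits.
passphrase_bits = int(4 * math.log2(2048))


def new_totp_secret(num_bytes=10):
    """Hypothetical helper: 10 random bytes (80 bits), base-32 encoded."""
    return base64.b32encode(secrets.token_bytes(num_bytes)).decode("ascii")


if __name__ == "__main__":
    print("key bits:", key_bits)
    print("passphrase bits:", passphrase_bits)
    print("example secret:", new_totp_secret())
```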

The basic idea of how TOTP works under the hood is actually pretty straightforward. It’s a hash-based message authentication code (HMAC) with the time-dependent counter as the message. An HMAC essentially appends a message to your secret key and hashes them together, the idea being that anyone with the password can verify the integrity of the message, and verifying the HMAC signature confirms that the other person has the same secret key.

The details of both HMAC and TOTP are the killer. HMAC actually hashes the secret and message twice, with different padded versions of the secret. This prevents length-extension attacks by using different keys on the first and second hashing rounds. The final value that comes out of a TOTP routine is built from four bytes of the HMAC output, the location of which depends on the low four bits of the last byte (byte 19, counting from zero, for SHA-1). This is called dynamic truncation, and implementing this correctly in Python cost me some gray hairs.
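To make the two hashing rounds concrete, here is a minimal sketch of HMAC-SHA1 written out by hand, following the construction in RFC 2104. It is for illustration only: the function name `hmac_sha1` is made up, and real code should call Python's `hmac` module, which the sketch uses as a cross-check.

```python
import hashlib
import hmac

BLOCK_SIZE = 64  # SHA-1 block size in bytes


def hmac_sha1(key, message):
    """HMAC-SHA1 spelled out step by step (illustrative only)."""
    # Keys longer than one block are hashed first; shorter keys are zero-padded.
    if len(key) > BLOCK_SIZE:
        key = hashlib.sha1(key).digest()
    key = key.ljust(BLOCK_SIZE, b"\x00")
    # Two differently padded versions of the same key.
    inner_key = bytes(b ^ 0x36 for b in key)
    outer_key = bytes(b ^ 0x5C for b in key)
    # The inner hash covers the message; the outer hash covers the inner digest.
    inner_digest = hashlib.sha1(inner_key + message).digest()
    return hashlib.sha1(outer_key + inner_digest).digest()


if __name__ == "__main__":
    key = b"12345678901234567890"
    message = (1).to_bytes(8, "big")  # an 8-byte counter, as TOTP uses
    assert hmac_sha1(key, message) == hmac.new(key, message, hashlib.sha1).digest()
    print(hmac_sha1(key, message).hex())
```

Because the outer hash only ever sees a fixed-length digest from the inner hash, an attacker cannot extend the message and keep a valid tag.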

Anyway, there are TOTP libraries out there, and for production work you should probably use them. The Linux program oathtool can implement a TOTP nearly every way possible, and was an invaluable benchmark during development. (Call it with the -v flag for verbose debugging output.) But if you want to see how TOTP works, here’s some code:

import time, hmac, base64, hashlib, struct

def dynamic_truncate(raw_bytes, length):
    """Per https://tools.ietf.org/html/rfc4226#section-5.3"""
    # The low four bits of the last byte (index 19 for SHA-1) pick the offset
    offset = raw_bytes[19] & 0x0f
    # Read four bytes starting at the offset, dropping the top (sign) bit
    decimal_value = (raw_bytes[offset] & 0x7f) << 24 | raw_bytes[offset+1] << 16 | raw_bytes[offset+2] << 8 | raw_bytes[offset+3]
    # Keep the last `length` decimal digits, zero-padded on the left
    return str(decimal_value % 10**length).zfill(length)

def pack_time(counter):
    """Converts integer time into bytes"""
    # The counter is packed as an 8-byte, big-endian unsigned integer
    return struct.pack(">Q", counter)

secret32 = 'abcdefghijklmnop'
secret_bytes = base64.b32decode(secret32.upper())
# Number of 30-second intervals since the Unix epoch (integer division)
counter = int(time.time()) // 30
counter = pack_time(counter)
raw_hmac = hmac.new(secret_bytes, counter, hashlib.sha1).digest()
print(dynamic_truncate(raw_hmac, 6))

# Verify, if you have oathtool installed
import os
os.system("oathtool --totp -b '%s'" % secret32)

Implications

We can generate the TOTP password with just the time and the secret key. So how does the server authenticate us? By following the exact same procedure. And this means that the server must have access to the secret key as well, which means that it can’t be stored hashed because hashes are one-way. Think about that: the server knows your secret.
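A minimal sketch of what the server side might look like makes the point. The function names and the one-step clock tolerance are assumptions for illustration, and the key used in the usage note is the published test secret from the RFCs. Note that `server_verify` needs the raw secret as an argument: there is no way to run this check against a hash of it.

```python
import hashlib
import hmac
import struct


def totp(secret_bytes, unix_time, digits=6, step=30):
    """TOTP with HMAC-SHA1, following RFC 6238 / RFC 4226."""
    counter = struct.pack(">Q", int(unix_time) // step)
    digest = hmac.new(secret_bytes, counter, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def server_verify(stored_secret, submitted_code, unix_time, drift=1, step=30):
    """Hypothetical server check: recompute codes from the raw stored secret."""
    for i in range(-drift, drift + 1):
        expected = totp(stored_secret, unix_time + i * step, step=step)
        # Constant-time comparison, so timing does not leak matching digits.
        if hmac.compare_digest(expected, submitted_code):
            return True
    return False


if __name__ == "__main__":
    secret = b"12345678901234567890"  # test secret from RFC 6238, Appendix B
    print(totp(secret, 59, digits=8))  # RFC 6238 lists 94287082 for time 59
    print(server_verify(secret, totp(secret, 59), 59))
```

The server and the phone run exactly the same function on exactly the same key, which is why anyone who copies the server's key table can generate valid codes too.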

This is not the case for a regular password, which should never be known by the server at all! Once you’ve entered your password for the first time, the server hashes your password and stores that hash, forgetting the original password forevermore. When you enter a password the next time, it hashes what you’ve typed and checks to see if it matches the stored hash. Because the server only keeps a hashed version of your password, because it’s a good one-way hash with a salt, and because you chose a strong password, it’s virtually impossible to get your password back out of the database even when it’s publicly available.
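For contrast, here is a rough sketch of salted password storage using PBKDF2 from Python's standard library. The iteration count, salt length, and function names are illustrative assumptions, not a recommendation for production settings. The server keeps only the salt and the digest, never the password itself.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # illustrative work factor only


def hash_password(password, salt=None):
    """Return (salt, digest); only these two values would be stored."""
    if salt is None:
        salt = os.urandom(16)  # fresh random salt for each user
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def check_password(password, salt, stored_digest):
    """Re-hash the submitted password with the stored salt and compare."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, stored_digest)


if __name__ == "__main__":
    salt, stored = hash_password("correct horse battery staple")
    print(check_password("correct horse battery staple", salt, stored))
    print(check_password("Tr0ub4dor&3", salt, stored))
```

Verification works by repeating the one-way computation on whatever the user types, so nothing in the stored record ever needs to be reversed.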

The practical upshot of all of this is that, although some websites still don’t, all should be able to store normal passwords hashed, and will thus be relatively safe even if their password database gets hacked. If you’ve used a good password, that’ll buy you some time, even if the breach is discovered a while after the fact. On the other hand, if your password gets snooped in transit, you’re done for.

TOTP keys simply can’t be stored hashed, because the authentication algorithm requires them in raw form. When the TOTP key database gets compromised, all of the TOTP / 2FA protection becomes worthless and you’re relying on the strength of your password to save you. Until the database gets breached, however, the ever-changing TOTP password is a great protection against eavesdroppers.

Getting the best of both worlds is easy enough: use TOTP / 2FA when it’s available, but make sure that your passwords are unique across websites and that each one is long and strong. But don’t fool yourself into thinking that 2FA is a substitute for good password practices — you’ll be living just one database breach away from the edge.

There’s another really nice open source Two-Factor solution that we use called “PrivacyID3A” (PrivacyIdea). The web site for it is https://privacyidea.com//. I can say personally that it works very well and we like it.

Not very encouraging when their SSL cert is misconfigured.

You know, security is very hard. DES, AES, Elliptic Curves, LetsEncrypt cert-bot…

I think they’ve recently changed their name to “NetKnights”, and the URL (with correct cert!) is https://netknights.it/en/.

you should look into the u2f (managed by the FIDO alliance) option, it doesn’t require that your secret be on the server, it exists only on the token

the server sends a challenge that the token processes and returns.

This also means that the same token can be used for many different services without allowing any of them any way to get at the others. so you no longer have to carry a bunch of keys around with you.

see https://developers.yubico.com/U2F/ for more details.

This is what google uses for all their employees accessing everything. It’s also the token type supported by gmail and other google apps.

Have one. Not widespread enough although the latest FF has it built-in.

I agree it needs to be used more. I know chrome also has support for u2f keys built in.

But when people are advocating different 2fa tokens, being an advocate for u2f will help grow the number of sites that support it :-)

Yes, U2F is quite nice.

You might also want to look for tokens with displays, like Trezor Bitcoin Wallets, because these can actually show you on secure display what site are you logging into.

Last time I checked, U2F wasn’t smartphone-friendly.

There are NFC based u2f tokens that work with newer smartphones.

YubiKeys can work with smartphones via USB OTG, USB-C, or NFC. This has been around for a few years now…

I’ve had too many problems with time being wrong on things to want to rely on any protocol that requires that two devices (especially two that are far apart and managed by different organizations) have the same time.

I’d expect a chimp to be able to synch two devices within 10 seconds of each other, even if they were some distance apart and it had to time the walk by counting up to a couple of hundred Mississippi.

you would be surprised at how much time skew you will see within a single datacenter.

True on the time skew, but we’re talking within a minute window here. My cell phone is always within a few seconds (do they sync to the towers?), but I know I’ve had a laptop get a couple-three minutes off before. OTOH, it’s simple enough to sync to NTP or whatever when it matters.

I’ve never taken an RSA dongle apart, but with average quartz clock drift of a few seconds a month, you know they’re accepting a wide window of rolling-code values.

Yup, cell phones sync with the towers. Some smart phones also use NTP for the user level OS…but the baseband will still sync clocks with the towers for proper timing.

it doesn’t help if your phone is accurate if the server on the other end isn’t

@davidelang – true, but thankfully most use a GPS reference which is quite accurate.

Google has their “Project Spanner”, which eliminates most of their internal timing frequencies…

It *can* be fixed, it’s just difficult.

research.google.com/archive/spanner.html

This is all lovely for people who log into one account in the morning and stay there all day, but for those of us who have to hopscotch among dozens of online resources all day every day, it gets to be a bit much. The time and complexity overburden drives users to common passwords (can you really remember 30+ XKCD-strong (https://xkcd.com/936/) passwords without a cheat sheet/USB drive file?) and having to muck around with a phone-transmitted code just makes that slower and worse. Granted we’re mostly not working with nuclear launch codes here, but…

that is one of the things that u2f tokens do really well with. yubikey sells what they call a nubby, which just barely sticks out of the USB slot, when you need to 2fa, you just touch it and it does the authentication.

if you search amazon for u2f fido, you will find a bunch of different manufacturers who make compatible devices.

Or use a good password manager (I personally like Enpass), and press one key to enter a password with 128-bytes, capitals, numbers…

that doesn’t actually help that much. if someone is able to watch the login (either on the server or on the client), then it doesn’t matter if it’s a 128 byte password or a 128GB password, they know it and can re-use it whenever they want.

A good 2fa means that you as the user know every time that an authentication is made and a third party can’t get in, even if they are able to watch the entire login process on the client or server.

That’s why there’s a NFC token to eliminate the “looking over shoulder” problem.

that does no good if they are running code on the server or inside your browser when you give the password.

and saying that passwords are better than tokens, but only if you have a token protecting your password manager is a contradiction. :-)

i think the problem is most of the shit that asks for a password really doesnt need one. like this comment here, all i needed to post it was an email address, no password required. why cant more of the irrelevant bs on the internet work like that? reserve passwords for things that actually require security and maybe people will stop doing stupid shit with them.

maybe we need to get away from the password and move onto a passphrase instead. rather than a symbol salad to increase entropy, we add more words. i mean literally like 8-10 words minimum. absurd length is enforced but no other requirements. dictionary attacks are still an issue but with the way nobody knows how to spell words anymore and the slang used, people using made up words or other languages. its very hard to create dictionaries that are capable of hitting all those bases and adding them would slow down your password cracker. perhaps the problem with passwords is too much math not enough language.

also we have a lot of hardware based information. mac addresses, cpu serial number, and things of that nature. or location information. that can be used as a second factor. after all a work station chained to my desk with power cables isnt going to move or change hardware very often.

i generally hate 2factor though. especially anything that requires you own a smart phone. come up with an authentication scheme thats easy and doesn’t require me to own more stuff.

so you have no problem with other people impersonating you by typing in your name or e-mail?

In this day and age when people get fired for what is posted on social media, are you really happy with having to prove that you didn’t say something?

I’m not sure why you think a password prevents me from going to facebook right now and creating a profile with your name.

The only protection most social media provides is the network itself. Your connections to other people, who would (hopefully) be able to identify a fake profile of you.

nothing prevents you from setting up an account somewhere that I don’t have an account and use my name.

But the password prevents you from using my name somewhere that I have setup an account (you can create a similar name, but that’s a different problem)

(IANAsecurityexpert) Would it help if the key was encrypted on the server with the user’s password? During login, that is visible to the server, so that it can be hashed. If that was used as a key to symmetrically encode the 2F key, that would hide it. If the key is just a hex value, and there was no way to tell if you correctly decoded it or not, it wouldn’t be vulnerable to brute forcing. Just throwing that out there….

that still means that the server has everything needed to impersonate you. You really want a system where the server doesn’t have enough information to be able to do this.

The u2f approach means that there is no shared key, and it includes mechanisms to prevent keys from being reused even if an attacker captures the entire session in the clear (on the server or client side)

good crypto is hard.

Thanks, I was just writing Max Ward’s same idea again. You are right about this.

Max Ward makes a good point, though, insofar as the 2FA secret would not be stored in plain text on business servers. Security comes in layers. While you may not be able to hide the key from someone who captures the entire session, you can still hash the secret and hide it from anyone who is only able to obtain the database but not entire sessions.

Maybe i misunderstood how this works, but this:

“We can generate the TOTP password with just the time and the secret key. So how does the server authenticate us? By following the exact same procedure. And this means that the server must have access to the secret key as well, which means that it can’t be stored hashed because hashes are one-way. Think about that: the server knows your secret.”

doesn’t make a whole lot of sense to me. Why can’t I just apply a hashing function to the original key and implement this using that as input? Saving the hash on server and thus protecting the plain-text passphrase? It still is a 2-factor-auth, is it not?

saw davidelang’s comment above after posting this. ignore my question. Thanks

‘To fake an SMS-based 2FA query, someone has to have access to your phone number and receive a six-digit code, or at least overhear it along the way. Unless you’re being targeted by hackers with very significant resources, they’re not going to redirect phone traffic to hack you’

LOL no they don’t, they just need to convince your provider that your phone has been stolen, and you’ve got a new IMEI number. Boom, they transfer it and you’re well away. (May not be the IMEI you need but something like this.)

And it happens, it happens rather a lot in fact…. It just requires you do a little bit more research on your target before actually attacking them.

https://it.slashdot.org/story/17/09/18/2039207/why-you-shouldnt-use-texts-for-two-factor-authentication

TOTP is defined in RFC6238. RFC6238 is based on RFC4226, which was released in 2006. One year before the release of the iPhone 1. This is imho the even bigger problem, that TOTP and HOTP were never meant for being used on a smartphone!

Imho there are much bigger implications, like the broken enrollment process of the Google Authenticator.

Letting the service where you log in manage the seed of your OTP token is imho not only bad practice but also cumbersome. You as the user will end up with a lot of profiles in your smartphone app. If you still want to use a smartphone as a second factor, you should use an authentication server/service, which should of course have the seeds encrypted to have at least some protection against database breaches.

U2F is also not as perfect as everybody would like to think, as most implementations (hardware implementations) come with a preseeded master key, which is used to derive the keypairs when registering with a service.

https://forum.yubico.com/viewtopic.php?f=33&t=1666#p6561

Their response to the final concern.

the protection against the device key being known is physical randomization. With other tokens, you have to track what token is issued to each user, with u2f tokens, have a bowl of them and let users grab one and start using it (there are also several u2f token manufacturers, if you don’t trust one, buy from another, or build your own)

Just to note – iPhone is not the first smartphone that was ever made.

It was the first one that relatively normal people bought though.

It causes great cognitive dissonance for me every time the concept of hashed passwords and databases comes up. It just baffles me why they get so upset over the password field but somehow don’t care about the other 99.99999% of fields that the password is protecting. If they have your database, they don’t just have passwords, they have the thing the password was protecting! It’s like coming home to find your front door smashed in and being concerned that now the thief knows what the inside of your doorknob looks like…

You are misunderstanding the ‘they have your password’ problem.

Passwords to be secure, need to be long and complicated.

Humans are bad at memorising these, so they use the _same_ password for multiple sites.

Now do you see the problem with ‘they have your password’ ?

If it’s your email password, then it doesn’t matter if you had different passwords at other sites if they can get a password reset sent and respond before you do.

it doesn’t matter how long and complex the password is if someone can get a copy of it.

the only thing a long, complex password protects you against is if they are brute-force guessing the password. That almost never happens. Either they use rainbow tables against the hash, or they get hold of the password in plaintext on one end of the connection or the other.

Rainbow tables shouldn’t be effective these days. Salted hashes are the standard, I would guess that there are more websites with unhashed passwords in databases than websites with passwords that are hashed without a decent salt.

salts just increase the size of the rainbow tables needed, but with the explosion of storage capacity, this doesn’t come anywhere close to making passwords uncrackable given the hash. The tools are out there, I’ve run them against large password repositories, they find a LOT of the passwords.

A, say, 256-bit salt, should make the rainbow table 2^256 times bigger. That’s about 116 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 000 times larger.

people don’t use a 256 bit hash, base64 encoded that would be over 40 characters just to store the hash. most salts are a byte or two..

I totally agree. Yeah! Bad thing: I lost my password. But even worse is probably I lost my data.

The problem is, that often people tend to use *same* passwords. So this way an attacker can get access to a users password on a simple, insecure website and use this password for secure but very important websites.

Like: Get users password from facebook and use it on his bank account.

you aren’t trying to protect yourself from insiders at the company you are accessing, you are trying to protect yourself from hackers who may have broken into your browser, or the webserver of the target company, and preventing them from collecting your password and sending it off to someone else to use later.

> If they have your database, they don’t just have passwords, they have the thing the password was protecting!

No, it’s exceedingly unlikely that the bank’s transaction ledger is on the same web-facing server as my login for internet banking.

You’d think that, but wasn’t the problem with the big Equifax breach that they had all the credit data on a web-facing server? If that had been on an internal server with an old-school application relay being the only way that a web-facing client could access the data (after presenting user-specific data to confirm identity, to get that user’s record), then downloading the entire database would not have been possible.

Unless they configured ACLs properly, if they have your password and your 2FA secret, they will probably be able to change your password and log in anyway.

I’m okay with 2FA not being the perfect solution to every security problem; it defends against certain attacks and not others. If you’ve broken into the server, there’s not a lot anyone can do.

What does bug me is when people take the TOTP algorithm and change it slightly (usually by changing the epoch or the interval) to force you to use *their* app instead of the one I’ve got all my other keys in.

You just got the scenario wrong against which TOTP should protect you. As Merlin said – when they have the database with your password, they also have the data that it protects. TOTP won’t help you here, correct. But it doesn’t need to. TOTP helps you when you reuse passwords, or if someone actually gets your password via other means (e.g. a MITM attack), because then they cannot use the password without either getting your 2FA device or actually breaking into the server.

And the initial key exchange between server and phone can be better secured than the SMS that is sent every time for an SMS-based 2FA token.

The danger of most 2FA mechanisms as they become easier to use is that the service providers decide they’re good enough on their own and start using the second factor as the *only* factor. It’s coming if it isn’t here already. Convenience always ends up trumping security in commercial settings.

an uncopyable 2FA token used as the only factor is still significantly more secure than a password.

not everything really requires the max security you can provide.

Looking at the main hacks the last year or so and counting it up I can say that all the personal info of at least every ‘adult’ (>13) American are already hacked and released. And a large part of the rest of the world.

So you have to wonder if it’s worth the effort anymore.

Also it’s all cute to secure the password like crazy, but if you have companies with plaintext social security numbers and date of birth and name and name of parents and grandparents and pets and medical history and financial info and and and, then having the password safe is not a savior.

They got some information about you. That’s not the same as saying that they have current access to your e-mail or banking account. There are still a lot of things worth protecting.

You didn’t mention RSA keeping a copy of everyone’s ‘password’ and having that get compromised.

Yep, that’s one big problem with most 2fa tokens, there is a shared secret that must be kept in a database somewhere, and if that database is hit, your token can be impersonated. This is one big advantage of u2f

here’s a new story today, http://hackaday.com/2017/10/17/bad-rsa-library-leaves-millions-of-keys-vulnerable/#respond

sms-based 2fa can be broken too easily. just as easily as someone can steal your phone number by socengineering your operator’s support staff. actually why not use signal for token distribution?

I have to go through a separate unlocking process before my phone number can be transferred. Many carriers don’t lock the numbers so it’s up to you to make sure you use a carrier that does.