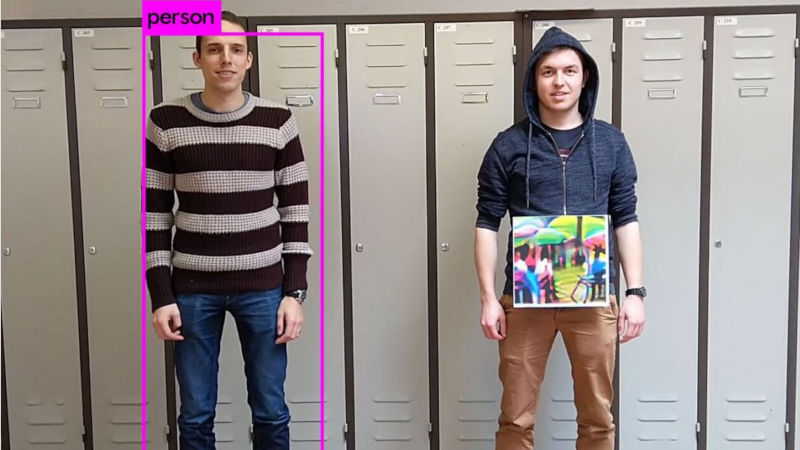

Adversarial attacks are nothing new in the world of deep networks used for image recognition. However, as deep learning research grows, more flaws are uncovered. A team at KU Leuven in Belgium has demonstrated how simply holding a colored photo near a man’s torso can render him invisible to image recognition systems based on convolutional neural networks.

Convolutional Neural Networks, or CNNs, are a class of deep learning networks that reduce the number of computations by reusing small filters across the whole image and building hierarchical patterns from smaller, simpler ones. They are becoming the norm for image recognition applications and are already being used in the field. In this new paper, adding a color patch is shown to confuse the object detector YOLOv2 by introducing noise that disrupts the CNN’s calculations. The patch is not random; it is generated using the process defined in the publication.
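To give a feel for what “not random” means, here is a minimal sketch of the general idea: treat the patch pixels as parameters and adjust them by gradient descent until the detector’s confidence drops. Everything in it is an assumption made for illustration. The “detector” is a toy linear scorer with a sigmoid, not YOLOv2 or any CNN, and the names `person_score`, `apply_patch` and `optimize_patch` are hypothetical. The procedure in the paper is more involved.

```python
import math
import random

SIDE = 8  # toy image is SIDE x SIDE grayscale, pixel values in [0, 1]

def person_score(image, weights, bias):
    """Toy 'person' confidence: sigmoid of a linear score (NOT a real CNN)."""
    logit = sum(w * x for w, x in zip(weights, image)) + bias
    return 1.0 / (1.0 + math.exp(-logit))

def apply_patch(image, patch, patch_idx):
    """Return a copy of the image with the patch pixels pasted in."""
    out = list(image)
    for i, value in zip(patch_idx, patch):
        out[i] = value
    return out

def optimize_patch(image, weights, patch_idx, steps=100, lr=0.1):
    """Gradient descent on the patch pixels only, to lower the detector's logit.

    For this linear toy model the gradient of the logit with respect to
    pixel i is simply weights[i]; a real attack would backpropagate
    through the network instead.
    """
    patch = [image[i] for i in patch_idx]  # start from the original pixels
    for _ in range(steps):
        patch = [
            min(1.0, max(0.0, p - lr * weights[i]))  # step, then clamp to valid pixel range
            for p, i in zip(patch, patch_idx)
        ]
    return patch

# Made-up detector weights and a made-up input image (assumptions, fixed seed).
rng = random.Random(0)
weights = [rng.gauss(0.0, 0.3) for _ in range(SIDE * SIDE)]
bias = 2.0
image = [rng.random() for _ in range(SIDE * SIDE)]

# The patch covers a 4x4 block in the middle of the image.
patch_idx = [r * SIDE + c for r in range(2, 6) for c in range(2, 6)]

patch = optimize_patch(image, weights, patch_idx)
clean_score = person_score(image, weights, bias)
patched_score = person_score(apply_patch(image, patch, patch_idx), weights, bias)
print(f"clean: {clean_score:.3f}  patched: {patched_score:.3f}")
```

Only the pixels inside the patch are ever changed, which is the point: the rest of the scene stays as it is, and a small, deliberately chosen region is enough to pull the score down.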

This attack can be implemented by printing the disruptive pattern on a t-shirt, making the wearer invisible to surveillance system detection. You can read the paper [PDF] that outlines the generation of the adversarial patch. Image recognition camouflage that works on Google’s Inception has been documented in the past, and we hope to see more such hacks in the future. It’s a new world out there, where hacking is as colorful as ever.

Cut to new regulations being implemented on what designs can be on your t-shirt if you want to travel through an airport.

Wear a T-shirt of a picture of a person wearing a T-shirt with a picture of a person on it, with the person on that T-shirt wearing a picture of another person, and so on down to the limit of your silk screening resolution. See if you can send the image recognition into an endless loop.

Divide by zero error?

Can you trick a car into not recognizing you as a pedestrian accidentally? I’m all for sticking it to the machine, but I don’t want the machine sticking [it] to me.

Ha!

Another thing might be tricking other cars into recognizing your car as a group of pedestrians or emergency services / police to get a free highway ;-)

There’s also the idea of projecting an image onto the face as a friend of mine did:

https://www.youtube.com/watch?v=D-b23eyyUCo

This reminds me of William Gibson’s “Ugly Shirt”.

I foresee an entire fashion industry catering to people who want to remain “invisible”.

And that would lead to a counter-detection surveillance system, which homes in on those fashion items to be flagged for deep inspection. If a solution is mass produced it would no longer work as intended. And any solution must be designed to fool multiple systems. The above design only fools one.

Some of the new systems in China use IR cameras + regular cameras. They should be able to spot the warm body and not be fooled by the color picture or picture of a face. They can also check for normal body temperature – identify fever for disease controls.

“They can also check for normal body temperature – identify fever for disease controls.”

Hindsight: “If only!”

BTW even if you managed to cover your face or not look into the camera, they can also do gait recognition.

So exciting and yet so scary what technology can do.

https://www.scmp.com/tech/start-ups/article/2187600/chinese-police-surveillance-gets-boost-ai-start-watrix-technology-can

Keyser Söze

walk without rhythm, so you won’t attract the worm…

This sort of defense would likely be defeated by programming the surveillance software to ignore information inside of square or rectangular edges. Of course, next: really odd blobs with the same sort of info inside.