A massive power outage in South America last month left most of Argentina, Uruguay, and Paraguay in the dark and may also have impacted small portions of Chile and Brazil. It’s estimated that 48 million people were affected and as of this writing there has still been no official explanation of how a blackout of this magnitude occurred.

Blackouts of some form or another are virtually guaranteed on any power grid. Whether from weather events, accidental damage to power lines and equipment, lightning, or equipment malfunction, every grid will see small outages from time to time. The scope of this one, however, was much larger than it should have been, although it isn’t completely out of the realm of possibility for systems this complex.

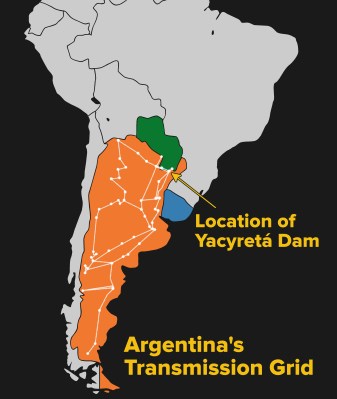

Initial reports on June 17th cite vague, nondescript possible causes but seem to focus on transmission lines connecting population centers with the hydroelectric power plant at Yacyretá Dam on the border of Argentina and Paraguay, as well as some ongoing issues with the power grid itself. Problems with the transmission line system caused this power generation facility to become separated from the rest of the grid, which seems to have cascaded to a massive power failure. One positive note was that the power was restored in less than a day, suggesting at least that the cause of the blackout was not physical damage to the grid. (Presumably major physical damage would take longer to repair.) Officials also downplayed the possibility of cyber attack, which is in line with the short length of time that the blackout lasted as well, although not completely out of the realm of possibility.

This incident is exceptionally interesting from a technical point-of-view as well. Once we rule out physical damage and cyber attack, what remains is a complete failure of the grid’s largely automatic protective system. This automation can be a force for good, where grid outages can be restored quickly in most cases, but it can also be a weakness when the automation is poorly understood, implemented, or maintained. A closer look at some protective devices and strategies is warranted, and will give us greater insight into this problem and grid issues in general. Join me after the break for a look at some of the grid equipment that is involved in this system.

Protective Devices at Work in a Power Grid

First, it’s worth diving into some of the protective devices used on large power grids. When a major fault occurs on a transmission line, it is detected by a sensing device called a relay which can automatically disconnect large breakers, typically within a few cycles of the power system’s base frequency. Disconnecting a fault quickly helps limit or prevent damage to major equipment like transformers or generators. While these relays function in a similar way to a relay that might be used in a car or in an electronics project, they can trigger for things other than current. Overcurrent relays are certainly common, but there are also overvoltage relays, undervoltage relays, frequency relays which can detect over-, under-, or mismatched frequencies in different parts of the grid, as well as a large variety of other types of relays. There are also specifications for time for each of these relays, so a smaller fault will typically take longer to trip a main breaker than a fault with a greater magnitude. Older relays are electromechanical in nature, and typically discrete units (i.e. there will be an overcurrent relay and a frequency relay working together but which are functionally separate units). Newer systems use computers to simplify these functions into single units like this sample from SEL, a company known for their robust digital relays.
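To make the point about time specifications concrete, here is a minimal sketch of an inverse-time overcurrent characteristic. It uses the IEC 60255 "standard inverse" curve, which is one common choice and not necessarily what any relay involved in this blackout used. The 400 A pickup and the 0.1 time multiplier are illustrative assumptions.

```python
def iec_standard_inverse_trip_time(current_a, pickup_a, time_multiplier=0.1):
    """Return the trip delay in seconds, or None if the relay never picks up.

    Implements the IEC 60255 standard inverse curve:
        t = TMS * 0.14 / (M**0.02 - 1), where M = current / pickup.
    """
    ratio = current_a / pickup_a
    if ratio <= 1.0:
        return None  # current is at or below pickup, so the relay does not operate
    return time_multiplier * 0.14 / (ratio ** 0.02 - 1.0)


if __name__ == "__main__":
    PICKUP_A = 400.0  # hypothetical pickup setting
    for multiple in (2, 5, 10, 20):
        delay = iec_standard_inverse_trip_time(multiple * PICKUP_A, PICKUP_A)
        print(f"{multiple:>2}x pickup -> trips after {delay:.2f} s")
```

The larger the fault current relative to pickup, the shorter the delay, which is the behavior described above: small faults are given time to clear on their own, severe ones are removed quickly.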

With all of these protective relays in virtually every system on the grid, it can get difficult to make sure they all work in harmony together. For example, an overcurrent relay in a power generation station should typically be set at a higher trip setting than the overcurrent relay on a circuit in a downstream substation, so that if a fault were to occur on the transmission line (from a lightning strike, for example) only the substation relay trips the circuit offline, rather than the generator’s relay tripping the entire generation facility offline for a fault which wasn’t in the generation facility at all. Problems like these are known as “coordination” problems and must be solved at every level to prevent nuisance trips and power outages, as well as keep parts of the grid powered up even when other parts are having problems.
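A coordination check of this kind can be sketched in a few lines. The example below assumes both relays follow the IEC standard inverse curve and that a 0.3 second grading margin is wanted between them. The settings, fault currents, and margin are all hypothetical values chosen for illustration, not taken from any real system.

```python
import math


def trip_time(current_a, pickup_a, tms):
    """IEC standard inverse trip time in seconds (infinite if below pickup)."""
    ratio = current_a / pickup_a
    if ratio <= 1.0:
        return math.inf
    return tms * 0.14 / (ratio ** 0.02 - 1.0)


def is_coordinated(upstream, downstream, fault_currents_a, margin_s=0.3):
    """Check that the downstream relay always trips first, with margin to spare.

    Each relay is a (pickup_a, tms) tuple.
    """
    for current in fault_currents_a:
        t_up = trip_time(current, *upstream)
        t_down = trip_time(current, *downstream)
        if math.isinf(t_up):
            continue  # upstream relay never operates at this current, nothing to grade
        if math.isinf(t_down) or t_up - t_down < margin_s:
            return False
    return True


# Hypothetical settings: (pickup in amps, time multiplier)
SUBSTATION = (400.0, 0.1)
GENERATOR = (800.0, 0.3)
FAULTS_A = [1000.0, 2000.0, 5000.0, 10000.0]

if __name__ == "__main__":
    print("graded settings:", is_coordinated(GENERATOR, SUBSTATION, FAULTS_A))
    print("generator set too fast:", is_coordinated((800.0, 0.1), SUBSTATION, FAULTS_A))
```

Real coordination studies cover many more relay types and system configurations, but the principle is the same: for every credible fault, the device closest to the fault should clear it before anything upstream reacts.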

Possible Scenarios at Work During This Blackout

With that background in mind, we can look at some of the details of this blackout with some help from a more detailed article in TIME. The article reports that there was a frequency issue of some sort, which may indicate that a frequency-sensitive relay operated when it shouldn’t have, or that it failed to operate when it should have, or was not coordinated properly with other frequency relays. This could have led to the removal of a part of the grid from service that was necessary for stable operation. Maintaining the proper frequency on the power grid is especially difficult. Generators located hundreds or thousands of miles away have to spin at exactly the same speed in exactly the same position in order to avoid causing harmful oscillations on the grid itself. Bringing a large generator online requires synchronization between itself and the grid frequency, and mistakes in this process are unforgiving.
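The synchronization requirement can be illustrated with a simple "sync check" of the kind performed before a generator breaker is closed. This is only a sketch: the `Source` type is made up for the example, and the tolerance values are placeholder assumptions, since real limits depend on the machine and the utility's practice.

```python
from dataclasses import dataclass


@dataclass
class Source:
    voltage_kv: float
    frequency_hz: float
    angle_deg: float  # phase angle of the voltage waveform


def phase_difference_deg(a_deg, b_deg):
    """Smallest absolute angle between two phases, in the range 0..180 degrees."""
    return abs((a_deg - b_deg + 180.0) % 360.0 - 180.0)


def safe_to_close(generator, grid, max_slip_hz=0.1, max_angle_deg=10.0, max_voltage_pct=5.0):
    """Return True only if voltage, frequency, and phase all match within tolerance."""
    slip_ok = abs(generator.frequency_hz - grid.frequency_hz) <= max_slip_hz
    angle_ok = phase_difference_deg(generator.angle_deg, grid.angle_deg) <= max_angle_deg
    voltage_pct = abs(generator.voltage_kv - grid.voltage_kv) / grid.voltage_kv * 100.0
    return slip_ok and angle_ok and voltage_pct <= max_voltage_pct


if __name__ == "__main__":
    grid = Source(voltage_kv=13.8, frequency_hz=50.0, angle_deg=2.0)
    print(safe_to_close(Source(13.8, 50.02, 355.0), grid))  # nearly in step
    print(safe_to_close(Source(13.8, 50.02, 40.0), grid))   # 38 degrees out of phase
```

Closing the breaker when any of these three quantities is out of tolerance forces the machine into step abruptly, which is why mistakes in this process are so unforgiving.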

On the other hand, another report of the incident (Google translate from Spanish) claims that high humidity may have caused a fault over an insulator on the transmission system, where electricity was able to follow the moisture around the insulators to cause an overcurrent fault. Disconnecting this much generation capacity may have caused an undervoltage or frequency fault on the grid, starting the cascade. Regardless of initial cause, though, cascades should always be planned for and stopped before they get out of control.
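To see why a sudden loss of generation turns into a frequency problem, consider a rough estimate based on the aggregated swing equation, where the initial rate of change of frequency is ΔP · f0 / (2 · H · S). The numbers below are purely illustrative assumptions and are not data from the Argentine grid. The sketch also ignores governor response and load damping, so it only describes the first moments after the disturbance.

```python
def initial_rocof_hz_per_s(lost_generation_mw, system_mva, inertia_constant_s, nominal_hz=50.0):
    """Initial rate of change of frequency after losing generation (negative = falling).

    system_mva is the total rating of the synchronous machines still online and
    inertia_constant_s is their aggregate inertia constant H in seconds.
    """
    return -lost_generation_mw * nominal_hz / (2.0 * inertia_constant_s * system_mva)


def seconds_until(threshold_hz, rocof_hz_per_s, nominal_hz=50.0):
    """Time to reach threshold_hz if the frequency kept falling at the initial rate."""
    if rocof_hz_per_s >= 0:
        return None  # frequency is not falling
    return (threshold_hz - nominal_hz) / rocof_hz_per_s


if __name__ == "__main__":
    # Hypothetical event: 1500 MW lost on a 15000 MVA system with H = 4 s
    rocof = initial_rocof_hz_per_s(1500.0, 15000.0, 4.0)
    print(f"initial ROCOF: {rocof:.3f} Hz/s")
    print(f"time to reach 49.0 Hz: {seconds_until(49.0, rocof):.1f} s")
```

With only a second or two before underfrequency relays begin to act, there is no time for human intervention, so everything depends on the automatic schemes being set correctly.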

The TIME article also reports that there was some existing damage to transmission lines in the area due to storms, which while not directly responsible for the massive blackout itself could have been a contributing factor. Power grids are particularly susceptible to cascade failure, a type of positive feedback loop where one small failure causes more failures, which in turn cause even more failures. In this case, the damaged transmission lines could have been taken out of service, placing more load on the remaining transmission lines in the area. If an overcurrent fault occurred it would have removed yet another line from service. If greater and greater amounts of electricity start flowing down fewer and fewer lines, the result can be the entire grid tripping offline. This was the case with the 2003 Northeast Blackout in the United States and Canada.
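The feedback loop described above can be shown with a deliberately crude toy model. It assumes the total load is shared equally among the lines still in service, which real grids do not do (flows follow impedance), and the capacities and load are invented for the example.

```python
def simulate_cascade(capacities_mw, total_load_mw, initially_out=()):
    """Trip overloaded lines one at a time until the system is stable or dark.

    Returns (lines still in service, lines tripped in order).
    """
    in_service = [i for i in range(len(capacities_mw)) if i not in initially_out]
    tripped = []
    while in_service:
        share = total_load_mw / len(in_service)  # equal sharing: a simplifying assumption
        overloaded = [i for i in in_service if share > capacities_mw[i]]
        if not overloaded:
            break  # every remaining line is within its rating
        victim = min(overloaded, key=lambda i: capacities_mw[i])  # weakest line trips first
        in_service.remove(victim)
        tripped.append(victim)
    return in_service, tripped


if __name__ == "__main__":
    capacities = [1000.0, 1000.0, 1200.0, 1500.0]  # hypothetical line ratings in MW
    print(simulate_cascade(capacities, 3200.0))                     # healthy system
    print(simulate_cascade(capacities, 3200.0, initially_out={3}))  # one line already out
```

With all four lines available, each carries 800 MW and nothing trips. Take the largest line out of service beforehand and the same load overloads the rest one after another until nothing is left, which is the essence of a cascade.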

Of course it could have been all of these issues. The frequency may have been the start of the cascade failure, further exacerbated by an already-stressed, damaged transmission line system.

Fast Restoration Is a Good Sign

Whatever the cause may have been, it is encouraging that the grid operators were able to restore almost all of their customers in less than a day. In blackouts resulting from major damage, like hurricanes and earthquakes, the restoration efforts can take weeks, or in particularly bad situations like Puerto Rico after Hurricane Maria repairs can last for months.

The Energy Government Secretariat reports that today at 07:07 there was a collapse of the Argentine Interconnection System (SADI), which produced a massive power outage throughout the country that also affected Uruguay. The causes are being investigated and are not yet determined. Recovery has already begun in the regions of Cuyo, NOA and Comahue and the rest of the system is being opened to continue with the total recovery, which is estimated to take a few hours.

From reading the update put out by electricity distribution company Edesur during the incident, you can see they identified the issue right away, then isolated and eliminated it quickly in order to ensure that more problems didn’t occur shortly after bringing the power back online. However, risks like this can never be completely eliminated from systems due to the complexity of the grids. Large blackouts continue to occur for many reasons, and in many ways this was a best-case scenario for restoration efforts. Argentina and the surrounding area have a multitude of hydroelectric power stations with blackstart capabilities (the ability to restart from a total shutdown without needing external power), which can in turn provide power to other power stations to bring the grid online quickly and reliably. The amount of cooperation across country lines is also impressive in this situation, as five countries are able to operate the grid reliably together every day, facilitate the transportation and sale of electricity across borders, and perform restorations together after a blackout such as this one.

This Could Happen Anywhere

Finally, it’s important to realize that South America’s grid is not fundamentally different from power grids in other parts of the world. Outages like this can occur anywhere, especially if the equipment is aging or poorly maintained. The American Society of Civil Engineers, for example, gives out grades on various parts of infrastructure from time to time and as of 2017 gave the power grid in the United States a D+ grade, citing that most of the grid was built in the 1950s and 1960s with a 50-year life expectancy. The power outage in South America, and other outages like it, may be more of a cautionary tale than an academic curiosity.

Want to learn more about power transmission lines? We have a field guide for that!

Sounds like an article on power grid modeling would wet some juices.

Nice gremlin reference in the illustration.

(Glad to see Joe Kim is back.)

If you’re in Copenhagen and interested in power systems and big engines, it’s well worth the trip out to see http://dieselhouse.dk It was the largest diesel engine in the world for 30 years after it was installed in 1933, and served to supply electric power for many years after that.

It remained a black-start capable plant even after it was economically not worth running all the time. That black start capability helped in the massive (for Denmark & Sweden) blackout in 2003, when it helped restart the grid. Not bad for a 70 year old engine.

The last time I was there they told me it’s not connected to the grid any more, so can’t help in any more blackouts, but they still start it up regularly just for fun and to entertain nerdy tourists.

It looks like it will be started tomorrow.

Hurry up Elliot, get there and cover it for us!

B^)

Oh, wait, my Deutsch is not good, I guess it will be a smaller motor they will be starting.

(sigh!)

Sorry, try http://dieselhouse.dk/en/

The big one gets started on 1st & 3rd Sundays of the month.

It’s Danish, not German.

Donkey chain!

And right next to it (in the same building) there’s a 132 kV electrical station, and just across the parking lot there’s a 400 kV transformer.

Building power grids is a complicated thing to do. After all, 50 and 60 Hz are fairly low frequencies; on a typical PCB we can realistically say that both ends of a conductor will have the same voltage at the same time. On a power grid, though, this frankly isn’t the case anymore….

Cascade failures can be “planned” for, though if the plan is to have X amount of extra capacity, then things like routine maintenance, minor faults, and other things can quickly eat up that buffer.

One could implement substations with some form of short term energy storage technology, handling the sporadic peaks. Like using larger UPS systems using battery banks and inverters (expensive and high wear), pumped energy storage (cheap if you have water nearby, also requires lots of land), or flywheels. (Very “fancy”, and only good for a few hours. Also low energy density.) Practically smoothing out the highs and lows throughout the day.

Energy storage systems aren’t going to “power” the grid, though. But in the best case they can stop cascade failures from occurring to start with, or at least hold off major failure for long enough to reduce the major consumers’ demand or bring on typically more expensive local generation.

Sometimes I wonder to myself, if a switch yard had a large 3 phase AC motor spinning a large flywheel, would it be able to smooth out the worst spikes on the grid. (Like those times all lights flicker for a bit. Or brownouts)

The grid already has large rotating flywheels, in the form of the generators themselves. There’s also “spinning reserve” in the form of generators running and synced to the grid, but not actually producing power. It’s there in case of a generator trip, or a line fault, or some other big transient on the grid.

Here in Ontario, there is about a gigawatt of generator capacity performing that function (on top of the 10-20 GW of normal load). Nowadays, it’s also there to bolster the output from those unreliable solar farms and fickle wind turbines too.

“The grid already has large rotating flywheels, in the form of the generators themselves.”

Doesn’t really do anything noteworthy if the spikes in power demand overload the transmission lines between the generator and the load itself. Having more local energy storage is a bit more useful when it comes to not letting spikes in demand overload the transmission lines.

A bit like a bypass capacitor on a PCB, though there it is more about lowering EMI and ensuring that the chip doesn’t suffer from large amounts of voltage drop.

And yes, solar and wind farms are generally not all that good at giving constant power. And having more energy storage on the grid would be an important step forth in making these energy sources more useful.

Personally I wouldn’t be too surprised if a large solar farm might house a room or more full of batteries/capacitors, since a solar farm needs a large inverter regardless. And through that allowing it to output a more “constant” amount of power to the grid. (Talking about timescales of a few minutes here, not hours.) As to allow slower generation facilities to react without creating brownouts every time a cloud darkens the sky.

Wind farms at least have a “bit” more inertia in them compared to solar cells, so their output would likely not go from full output to no output in seconds like solar cells usually do. So a bit easier for other generation facilities to react to it.

Wind farms are no better. Since electricity is being generated at different frequencies, all Wind Farms have a parasitic loss going from AC to DC + cooling of the battery farm + DC to AC.

A sufficiently spread out battery bank would likely not need much cooling though.

Especially when the wind is blowing, since then most power wouldn’t even see the batteries.

Not to mention that I wouldn’t be surprised if a large bank of capacitors would be sufficient for just bulk decoupling for the Inverter.

The network operator in Australia, AEMO, and the generators are learning quickly about stability and intermittency in a grid where the demand is 30-35GW and about 20% (quite variable) of the supply is renewables (wind, solar and pumped storage). Most new proposals for renewable generation in Australia come with batteries (as is happening across the globe at the moment); synchronous condensers have been mandated by the network operator here in some cases where there is a high proportion of wind.

AEMO is also battling with aged coal generators suddenly going offline in our period of peak demand which is during summer. The large batteries already connected to the grid are responding within milliseconds to those faults to the relief of the network operators.

There is much to be learnt still about configuring a network which is changing from a smaller number of generators (‘baseload’ is the Aussie jargon) running 24/7 to a much larger number of intermittent, renewable generators dispersed widely across our large continental area.

All this is important in our commitment to a low carbon energy regime.

I think you are describing a synchronous condenser which is a fairly old bit of kit often used for power factor correction. They are coming back into fashion providing a form of artificial “inertia” for wind farms. The inertia is the generation equipment’s resistance to outside changes to the electrical signal, and comes from a generator’s rotor mechanical inertia.

The island at the bottom of Argentina (Tierra del Fuego) has its own system, so they didn’t get the blackout.

I always thought Tierra del Fuego was part of Chile, this map shows it split with Argentina.

I wonder what happens in a hydro-electric station when the load is suddenly removed from the generators. Do they have to quickly stop the water flow to keep the turbines from overspeeding? Interesting to think about.

Isn’t that what clutches are for?

I don’t know, that is why I asked :-)

Yes, the gates close to reduce water flow automatically. A surge tank takes the brunt of the water momentum, otherwise the penstock would rupture.

There’s no clutch. There’s usually a “brake” of sorts that moves up to hold a turbine/generator rotor up off the bearing to avoid damaging it when it is stopped, but that’s all.

There are external oil pressure pumps to lift the shafts off the bearing surfaces. And I think once it has stopped, it can be laid down without the danger of damage. Although I seem to remember that in some power stations they have to slowly spin the generator-turbine assembly even when it is not in operation, to avoid sagging over time.

Of course there is no clutch. If you open the clutch while the turbine is still getting power, it could be destroyed. The generator itself is only a small load if no power is taken from it. You need to avoid overspeed.

This:

https://www.youtube.com/watch?v=fJVBlhgt9j8

(starts slow, but impressive ending)

Yes very impressive.. thanks

If you want more hydro, check out this installation that’s not too far from where I live..

https://www.youtube.com/watch?v=K18-jAIYsUY&t=704s

Some generators have governors which control the pitch of the blades on the kaplan design. That controls the speed of the turbine and the frequency of the power out as well as using the wicket gates.

Argentinian here. Sadly, the blackout was mostly due to political issues. The electric company (government and privately administered) refuses to update the grid and never acknowledged to the public that power was being exported to Chile, Uruguay and Brasil. This causes major problems for the end user: fast and heavy voltage fluctuations (240V-140V, when it should be 220V) that result in exploding transformers and burning cables. The voltage drops also mean that most appliances don’t work or break (I had to buy an industrial voltage booster-stabilizer to keep my work equipment safe, and it has already broken twice).

What’s the problem with exporting energy? On a Sunday at 7am? There is pretty much no power consumption in the country at that time. We should have exported more, so that more distributed generators were running and the system would have had a better chance of avoiding a trip.

Uruguay hasn’t bought much energy from Argentina for a long time (https://portal.ute.com.uy/institucional/informacion-economico-financiera/ute-en-cifras); instead, Uruguay is selling energy to Argentina.

And the blackout in Uruguay lasted only a couple of hours.

“the black out sadly was due to mostly politicals issues”

The Argentinians are probably the only humans on this planet who will blame anything and everything on … THA GOVERNMENT !!

As if THA GOVERNMENT is not elected by those blaming them … hilarious!!!!

“Not me, not me … it is the government! …” = national mantra of Argentina!!

Nahhh, a lot of us Yankees blame the government for stupid actions too!

We (supposedly) have a Representative form of government, and so we could blame ourselves for voting for it (or more likely, not voting at all).

But as we often say, “we have the best Congress money can buy!”

B^)

The very little power that goes to Brasil from there would not account for much of this. Argentina buys a lot of power from Brasil and Paraguay ( Itaipu dam ) also.

Mostly, one generation facility got disconnected, then a cascading failure occurred. It has happened in the States, it has happened in Brasil, and it can happen in other places also.

Of course, the government ( and the people that voted in them ) have their part of the guilt, but do not forget that the facilities, projects, cabling, etc, are administered, installed and controlled by people. Not everybody did their best to do a good job ….

I’m a proud survivor of that blackout

And we (i.e. I and many other readers here) are survivors of the https://en.wikipedia.org/wiki/Northeast_blackout_of_2003 where 55 million people were without power for up to two days. Some, sadly, did not survive due to fires and general mayhem, but their numbers were more than made up for nine months later, so it is said… Though Snopes says that’s fake news, and northeastern USAians and Ontarians aren’t so spontaneously fecund. It will be interesting to check in with Argentina in nine months.

I would predict a baby boom in nine months. Not much else to do on a Sunday with no TV or internet.

Hi Bryan, another Argentinian here. In this link: https://youtu.be/OYdAcLh31Og you can see the official version of the incident. It’s a ~three-hour-long (surprisingly technical for the place, IMO) hearing that took place on 2019/07/03. Long story short, they were doing some maintenance on one of the biggest transmission lines and “forgot” to update the Automatic Generation Disconnection parameters upstream. Later on, a common line fault (aggravated by human factors) led to a full blackout.

This video shows the synchronization process and why it matters. https://www.youtube.com/watch?v=RGPCIypib5Q

I didn’t read all the replies, but for those who are interested in this blackout, some days ago I found a very good explanation of what caused it. I’m not the guy from the YT channel. Cons: the video is in Spanish.

https://youtu.be/0RuaksAT8Dw

I remember that power outage. I was in the office and I watched in real time how it affected Uruguay, Argentina, and parts of Brazil and Chile. I can’t say anything about Paraguay because we don’t have any installations in that country.

Very impressive article. I’m not an engineer but I worked on and around protective devices like those mentioned for about 30 years. The coordination between devices is certainly complex and I’m sure gets more so on even larger systems. SCADA systems that monitor some of these devices can provide data with timestamps to determine what the process was. Keeping timestamps accurate among many devices can be problematic.

Almost but not quite! Here in Argentina, half the population blames the governing party, and the other half blames the opposition.