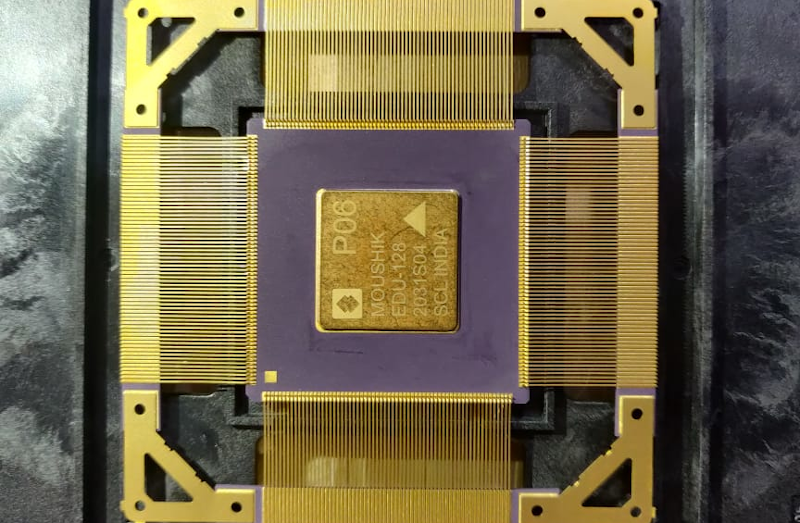

There was a time when creating a new IC was a very expensive proposition. While it still isn’t pocket change, custom chips are within reach of sophisticated experimenters and groups. As evidence, look at the Moushik CPU from the SHAKTI group. This is the group’s third successful tapeout and is an open source RISC-V system on chip.

The chip uses a 180 nm process and has 103 I/O pins. The CPU runs at around 100 MHz, and the system includes an SDRAM controller, analog-to-digital conversion, and the usual peripherals. The roughly 25 mm² die houses almost 650 thousand gates.
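For a sense of scale, the quoted figures work out as below. This is only a back-of-envelope sketch: it takes "almost 650 thousand gates" and "roughly 25 square mm" at face value and assumes a square die, which the article doesn't actually state. The area also includes the I/O pad ring and analog blocks, so this is an average over the whole die, not a logic density.

```python
import math

# Approximate figures quoted in the article; both are rounded.
GATE_COUNT = 650_000   # "almost 650 thousand gates"
DIE_AREA_MM2 = 25.0    # "roughly 25 square mm"


def gate_density(gates: int, area_mm2: float) -> float:
    """Average gates per square millimetre over the whole die."""
    return gates / area_mm2


def square_die_side(area_mm2: float) -> float:
    """Edge length in mm, assuming (hypothetically) a square die."""
    return math.sqrt(area_mm2)


if __name__ == "__main__":
    print(f"{gate_density(GATE_COUNT, DIE_AREA_MM2):,.0f} gates/mm^2")  # 26,000
    print(f"{square_die_side(DIE_AREA_MM2):.1f} mm per side")           # 5.0
```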

This is the same group that built a home-grown chip based on RISC-V in 2018, and it is associated with the Indian Institute of Technology Madras. We aren’t sure whether everything you’d need to duplicate the design is in the git repository, but since the project is open source, we presume it is.

If you think about it, radios went from highly specialized equipment to near-disposable consumer items. So did calculators and computers. Developing with FPGAs gets cheaper and easier every year. At this rate, it’s not unreasonable to think it won’t be long before creating a custom chip is as simple as ordering a PCB, something else that used to be a big, hairy deal.

Of course, we see FPGA-based RISC-V designs often enough. While we admire [Sam Zeloof’s] work, we don’t think he’s packing 650k gates into a die that size. Not yet, anyway.

Very nice! And it’s cool to see a leadframe like that.

Does it have an MMU?

From The Register (https://www.theregister.com/2020/09/24/shakti_moushik_cpu_india/) it looks like it’s an “E-Class” processor, which doesn’t appear to be Linux-compatible (http://shakti.org.in/processors.html). So I’m guessing there’s no MMU in this one.

The motherboard they’ve made for it does have Arduino-compatible headers, so someone might be able to have some fun with it.

It seems like the comment system might have eaten my last reply. Basically, an article on The Verge lists it as an “E-Class” chip, which SHAKTI doesn’t list as Linux-compatible (they explicitly state that the “C-Class”, which appears to be a higher-spec chip, is Linux-compatible). So I’m pretty sure this doesn’t have an MMU.

The motherboard does have Arduino-compatible headers in case anyone wants to play around with those.

Sorry, that should be The Register, not The Verge.

Actually, that’s not exactly correct. The design supports an MMU and is capable of running operating systems like Linux and seL4. See the C-Class section in the link below. I have gone through some of the device documents and confirmed that the chip supports the Linux kernel. I am not sure if there is a public kernel out there, but the chip definitely supports Linux.

https://engineersasylum.com/t/shakti-the-open-source-indian-microprocessor-microcontroller/664

I looked it over and searched the repo and it doesn’t look like it has an MMU. However, “is0lated” is incorrect in implying that not having an MMU prevents you from using Linux. Linux can run on MMU-less chips. Having enough RAM is really the only limitation for Linux.

You are partially correct. You can run Linux without an MMU/MPU; however, you do require a specialized kernel. Trying to run a standard kernel on a unit without an MMU/MPU will fail outright. I believe this misconception largely comes from the PlayStation Portable homebrew community, which never did get a proper Linux running on the PSP and largely blamed this on the unit’s lack of an MMU.

https://elinux.org/Processors#:~:text=ARM%20processors%20without%20MMU.,memory%20protection%20unit%20(MPU).

Thank you for this fascinating article… The race is on to develop a floating subzero temperature version of this chip… Best of luck to those very clever Indian engineers and technicians working on this open source framework…

nice

Everyone is talking about open CPUs on FPGAs, but it seems like a fully open source FPGA architecture itself might be just as important as an open CPU, or even more so. If FPGAs were open and standardized, maybe we’d finally start seeing them available for hardware acceleration like GPUs are.

We can’t just keep upgrading CPUs every time there’s a new thing we want to optimize, we need a standard like OpenGL for “accelerator blocks” with their own RAM and such that can be quickly configured.

Then RISC-V would get a lot more interesting, because you could custom design exactly the core you want for your application and run it in its own sandboxed domain. Even a web app could come with the definition of a soft CPU.

It might also be exactly what we need for a true general-purpose integrated SDR chip that’s not tied to any one modulation, without making it too hard to embed or making it use too much power.

Use wallet voting to make it happen. That means putting your money where your mouth is.

An FPGA isn’t going to be price- and performance-competitive with an off-the-shelf CPU. An FPGA spends a lot of resources (i.e., chip area) on extra routing, space that a CPU (which is an ASIC) doesn’t waste. You’ll be doing a really good job if a soft core reaches 1/10 of the clock speed with similar instructions per cycle. AMD is currently at “7nm” vs. Xilinx at 16nm and Altera at 14nm(?).

For acceleration, it is probably okay, but someone has to code the hardware block you need.

The other thing about upgrades… Even with an FPGA, you won’t be able to move to faster memory without changing the main board, e.g., DDR4 to DDR5, since the memory module slots have different connectors and pinouts.

>Even a web app could come with the definition of a soft CPU.

It won’t happen, because people who can code FPGAs are worth a lot more money than the code monkeys who churn out web apps. The other issue is that it takes a very long time to translate, place, and route an FPGA design. So if your “app” is a piece of CPU code that takes minutes to run, you are much better off using a CPU. It only makes sense when the run time is tens of hours or days.

Forget about multitasking when each program tries to have its own configuration. Web apps get some level of protection from the browser, and you can also run them in a sandbox or VM. Can you trust an unknown piece of code enough to let it reconfigure the FPGA to do whatever it wants?

You haven’t thought it through.

It’s been attempted, and Linus Torvalds was involved too; see the Wikipedia entry for “Transmeta”. It didn’t work out then, but perhaps there’s mileage in mixing FPGAs and multi-core CPUs to allow for architecture customisation?

The Transmeta Crusoe wasn’t an FPGA. It was basically a custom RISC CPU with a portion that morphed x86 instructions into RISC instructions in real time at a slight performance penalty. I think a 1 GHz Crusoe was in the ballpark of an 800 MHz Intel chip of the time. The big benefit of these chips was significantly reduced power consumption, somewhat like an Intel Atom years before those ever came out. I still own a laptop with one of these Crusoe chips in it. In theory, new x86 instructions could be added to the chip by updating the microcode to translate them to RISC, and it could also work with instruction sets other than x86. I don’t think any microcode was ever released for other architectures, at least not officially. It would be interesting to see someone reverse engineer the microcode of these chips to add new or custom instructions or to support another architecture.

It’s like that ultra-high-level, object-oriented CPU from the ’80s. This stuff can probably be made better than current tech, but it’s easier to sell simple, direct, and proven to other business people; nobody wants to wait for new tech to catch up.

Web code is done with reusable frameworks, and easy layout tools are possible. Multitasking can easily be done by having four or eight accelerators, although you won’t be able to run more accelerated programs than that at once.

I don’t see why, with better tools, FPGA work has to be any harder than GPU work.

Sandboxing can be done just by giving accelerators their own memory and tightly controlled I/O. Your SDR accelerator needs hardware access, but some things can be done just like GPUs and WebGL are today.

Hmm… Even at 180 nm, at this die size (5×5 mm) this may prove to be a relatively expensive chip (~$10, depending on volume)… The performance sounds a bit like a mid-range Cortex-M processor? I guess that’s not terrible for what it is.
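One rough way to sanity-check a per-chip estimate like this is the common gross-dies-per-wafer approximation, DPW ≈ π(d/2)²/A − πd/√(2A), followed by dividing the wafer cost by the number of good dies. The sketch below assumes a 200 mm wafer (a common size for 180 nm processes, but not stated anywhere here) and leaves wafer price and yield as caller-supplied placeholders rather than real foundry figures. It ignores scribe lines, edge exclusion, masks, packaging, and test.

```python
import math


def gross_dies_per_wafer(wafer_diameter_mm: float, die_area_mm2: float) -> int:
    """Common approximation: wafer area / die area, minus an edge-loss term."""
    radius = wafer_diameter_mm / 2
    whole = math.pi * radius ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return math.floor(whole - edge_loss)


def cost_per_good_die(wafer_cost: float, dies: int, yield_fraction: float) -> float:
    """Silicon-only cost: wafer cost spread over the dies that work."""
    return wafer_cost / (dies * yield_fraction)


if __name__ == "__main__":
    # Assumed 200 mm wafer and a 5x5 mm (25 mm^2) die.
    print(gross_dies_per_wafer(200, 25))  # 1167
```

Plugging a quoted wafer price and yield into `cost_per_good_die` gives only the bare silicon cost. At low volumes, mask and shuttle costs are often the dominant expense, which is one way a small die can still end up costing several dollars per chip.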

Where can we buy this? And can we use this chip on an Arduino-like board?

When are they expected to sell these open source chips? Are we going to have an actual libre, open-source “arduino” that uses this chip?