Visual impairment has been a major issue for humankind for its entire history, but has become more pressing with society’s evolution into a world which revolves around visual acuity. Whether it’s about navigating a busy city or interacting with the countless screens that fill modern life, coping with reduced or no vision is a challenge. For countless individuals, the use of braille and accessibility technology such as screen readers is essential to interact with the world around them.

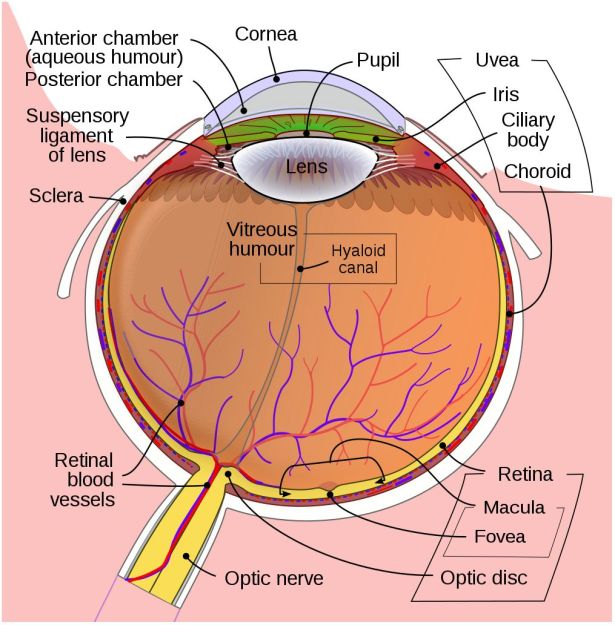

For refractive visual impairment we currently have a range of solutions, from glasses and contact lenses to more permanent options like LASIK and similar procedures, which seek to fix the refractive problem by reshaping the cornea with a laser. When the eye’s lens itself has been damaged (e.g. by cataracts), it can be replaced with an artificial lens.

But what if the retina or optic nerve has been damaged in some way? For individuals with such (nerve) damage, restoring vision through biological or technological means has for decades been a tempting and seemingly futuristic concept. Quite recently, a number of studies have explored both approaches, with promising results.

Setting the Scene

In the developed world, the leading causes of blindness are age-related macular degeneration (AMD), diabetic retinopathy and glaucoma. Of note here is that the treatment of cataracts and refractive issues has massively decreased the number of total blindness cases compared to the developing world, leaving the types of visual impairment which are hard to treat.

In these three conditions, the retina is damaged through a variety of mechanisms, destroying either part of the retina (mostly the macula in the case of macular degeneration) or the entire retina, often as a slow, progressive loss of visual acuity until no functional retinal structure remains. In these cases, as well as in conditions where the retina becomes detached from the back of the eye (retinal detachment, e.g. due to blunt trauma), the optic nerve and processing centers of the brain remain intact and functional.

As most types of vision loss, including those from childhood blindness, feature an undamaged visual cortex, a lot of the focus on restoring vision has consequently been on this part of the brain. Many studies have focused on developing prostheses that replace the functionality of the eye, including the retina and optic nerve. More recently, the possibility of restoring the functionality of a damaged retina and optic nerve by having the tissue regrow itself has been examined as well.

The Genetic Time Machine

It’s a poorly kept secret that human cells are essentially immortal. Unfortunately, the bits that make them immortal and capable of infinite regeneration are toggled off once a cell reaches a certain point in its drive towards becoming a specific type of tissue (for example: muscle or liver tissue, or part of the spinal cord or retina). Yuancheng Lu et al. recently studied the reversal of ageing and injury-induced vision loss through epigenetic reprogramming (bioRxiv preprint version).

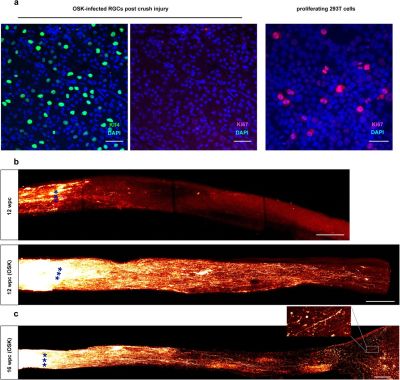

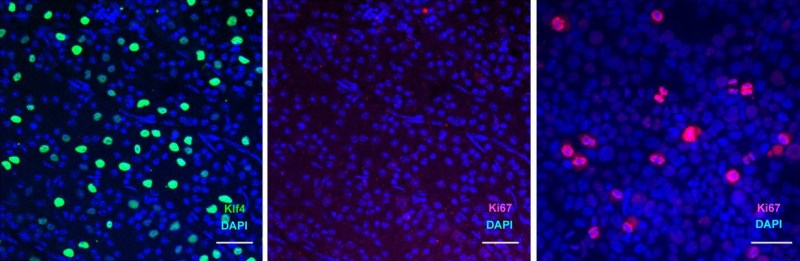

In mouse models, they showed that re-enabling the expression of three genes (Oct4, Sox2 and Klf4: OSK) in the cells of the eye, delivered via an adeno-associated virus (AAV) vector (a small, non-pathogenic virus widely used for gene delivery), resulted in the regeneration of injured axons, the regrowth of a damaged optic nerve, and recovery from retinal damage caused by glaucoma. In addition, the age of the cells (as indicated by DNA methylation levels) had been reset to a more youthful state after 4 weeks of OSK expression.

OSK along with c-Myc (OSKM) are known to be involved in the ability of cells to regenerate tissues, based on data from previous experiments. OSK rather than OSKM was used in this particular experiment because ectopic c-Myc expression has been shown to result in tissue dysplasia: basically abnormal cell development with predictably negative results. Yet even though the mice in this study regained a significant amount of their lost vision, it is important to remember that experiments like these mainly serve to fill in the blanks where we still lack understanding.

Key to all this is our understanding of two mechanisms: the regenerative capabilities of cells and the epigenetic ‘clock’ which underlies the process of ageing. DNA methylation appears to play a major role in both, with its role in the latter causing a gradual change and faltering in biochemical processes. Methyl groups can bind to the DNA molecule, where they serve to alter the expression of genes. The resetting of these methylation patterns is a standard feature in the mammalian reproductive system (reprogramming), after fertilization of an ovum by a sperm. Without this mechanism, the resulting embryo would have the same genetic age as the parents, which was a concern with Dolly the (cloned) sheep.

Obviously, the goal in this kind of gene therapy is to erase the effects of ageing and injuries, but not to turn every cell into a stem cell. If, however, damaged tissues such as nerves and organs could be reset to a more youthful state using OSK or similar, it might mean that a person could not only regenerate a damaged optic nerve and retina, but also reverse the effects of ageing, including macular degeneration and so on.

The Age of Cybernetics

Sadly, regenerating tissues through epigenetic reprogramming as a regular or even experimental treatment in humans is still a long while off at this point. However, the use of implants and human-computer interfaces to restore lost senses is further along, to the point where retinal implants like the Argus II have already been approved for the treatment of retinitis pigmentosa, and have been investigated for macular degeneration and other conditions which leave the transmitting and possibly processing layers of the retina intact.

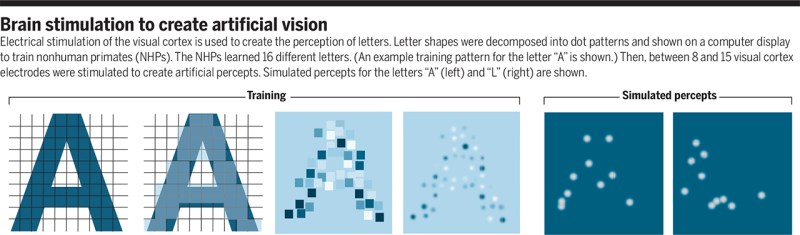

When the retina, and possibly the optic nerve as well, is too badly damaged, however, one quickly ends up in the experimental area of direct brain stimulation. Here the retinotopic mapping of the visual cortex is exploited: the routing of the retinal signals onto the visual cortex essentially forms a 2D map. This makes it significantly easier to figure out which part to trigger to ‘light up’ the target part of a person’s vision. The main difficulty is in figuring out ‘how’.

As I touched upon in the article on Neuralink from last year, a major issue with wiring up the brain with electrodes is that neurons in the brain are minuscule by any definition. This means that the best we can do here is to essentially jam probes into roughly the right area and hope that we hit at least some of the right neurons with an electric pulse in order to cause the intended effect. The sobering conclusion is that ‘high-tech’ for retinal implants is hundreds of pixels, with prospective visual cortex implants presumably in the same ballpark. That is before considering the low visual fidelity one can expect from what would optimistically be the equivalent of a grainy, black and white image.

Since any brain implant using today’s technology stimulates many thousands of neurons simultaneously, the best result one can hope for in the visual cortex is the production of a phosphene: the experience of ‘seeing’ a bright spot in one’s field of view that was not caused by light stimulating the retina. Another way to cause a phosphene is through mechanical stimulation, e.g. when pushing (lightly) on one’s eyes or when suffering an impact on the head (‘seeing stars’).
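To get a feel for what a view made of a few hundred on/off phosphenes might look like, here is a minimal Python sketch. It is only an illustration under simplifying assumptions: each hypothetical electrode is treated as one cell of a regular grid, a cell lights up when the average brightness of the image region it covers reaches a threshold, and real phosphenes are neither this regular nor this predictable. The function names `to_phosphene_grid` and `render_ascii` are made up for this example.

```python
from typing import List

Image = List[List[float]]  # rows of grayscale values in the range 0..255


def to_phosphene_grid(image: Image, rows: int, cols: int,
                      threshold: float = 128.0) -> List[List[bool]]:
    """Reduce a grayscale image to a rows x cols grid of on/off phosphenes.

    Assumption: each grid cell stands for one hypothetical electrode, which is
    'on' when the mean brightness of the image block it covers reaches the
    threshold.
    """
    height, width = len(image), len(image[0])
    if rows > height or cols > width:
        raise ValueError("grid must not be finer than the image")
    grid = []
    for r in range(rows):
        # Integer block boundaries: every pixel lands in exactly one cell.
        top, bottom = r * height // rows, (r + 1) * height // rows
        grid_row = []
        for c in range(cols):
            left, right = c * width // cols, (c + 1) * width // cols
            block = [image[y][x]
                     for y in range(top, bottom)
                     for x in range(left, right)]
            grid_row.append(sum(block) / len(block) >= threshold)
        grid.append(grid_row)
    return grid


def render_ascii(grid: List[List[bool]]) -> str:
    """Draw lit phosphenes as 'O' and dark ones as '.'."""
    return "\n".join("".join("O" if on else "." for on in row) for row in grid)


# Demo: a 4x4 test image whose left half is bright and right half is dark,
# reduced to a 2x2 grid of phosphenes.
demo_image = [[255, 255, 0, 0] for _ in range(4)]
print(render_ascii(to_phosphene_grid(demo_image, rows=2, cols=2)))
```

Feeding in a real photograph (converted to a list of grayscale rows) with a grid of, say, 32 by 32 cells gives an impression of how little detail survives at the 1024-channel scale.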

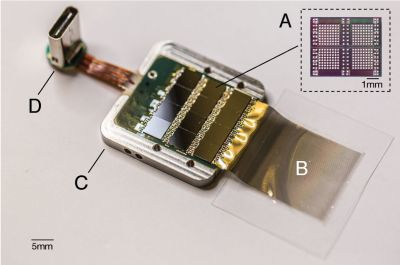

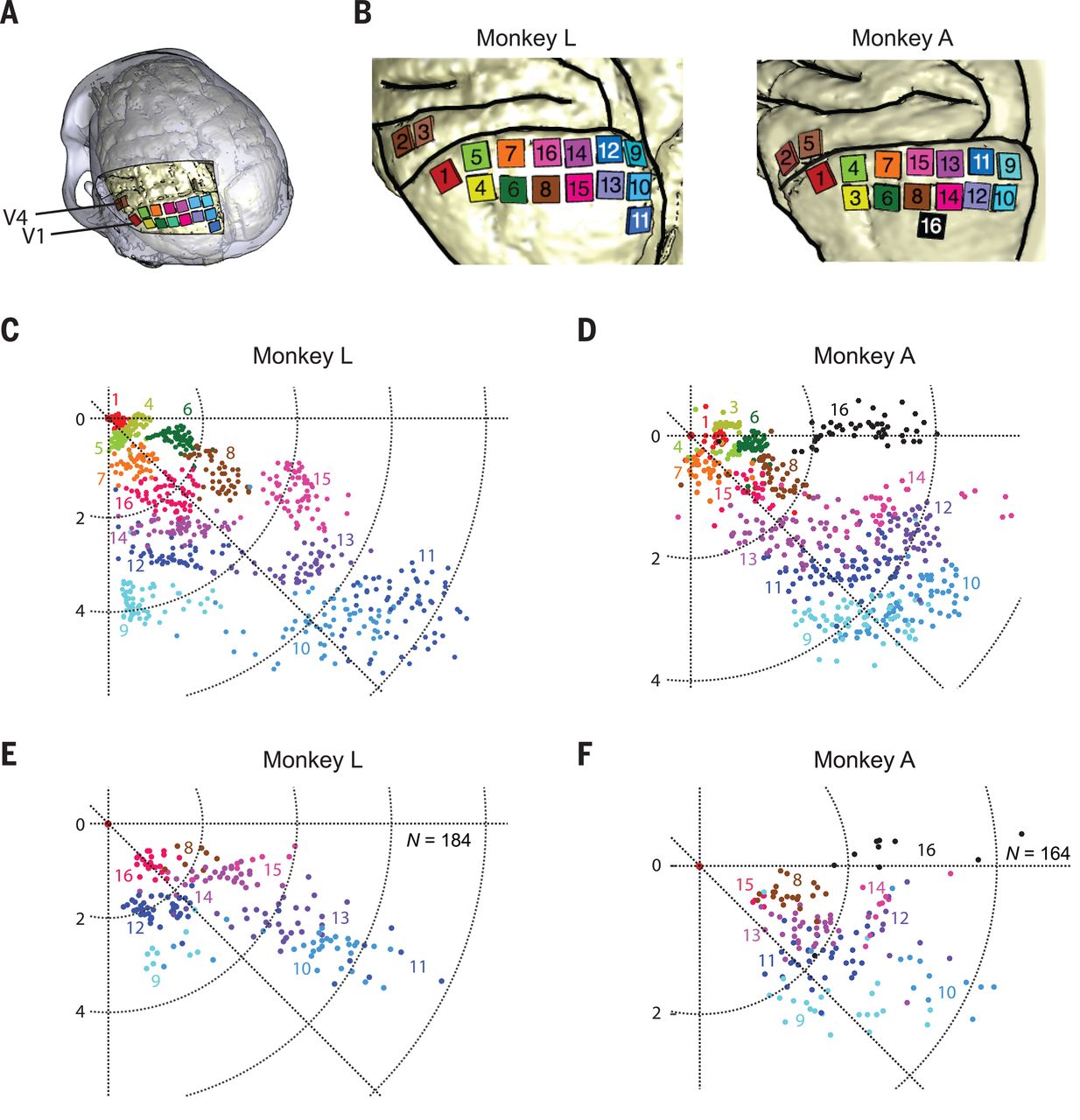

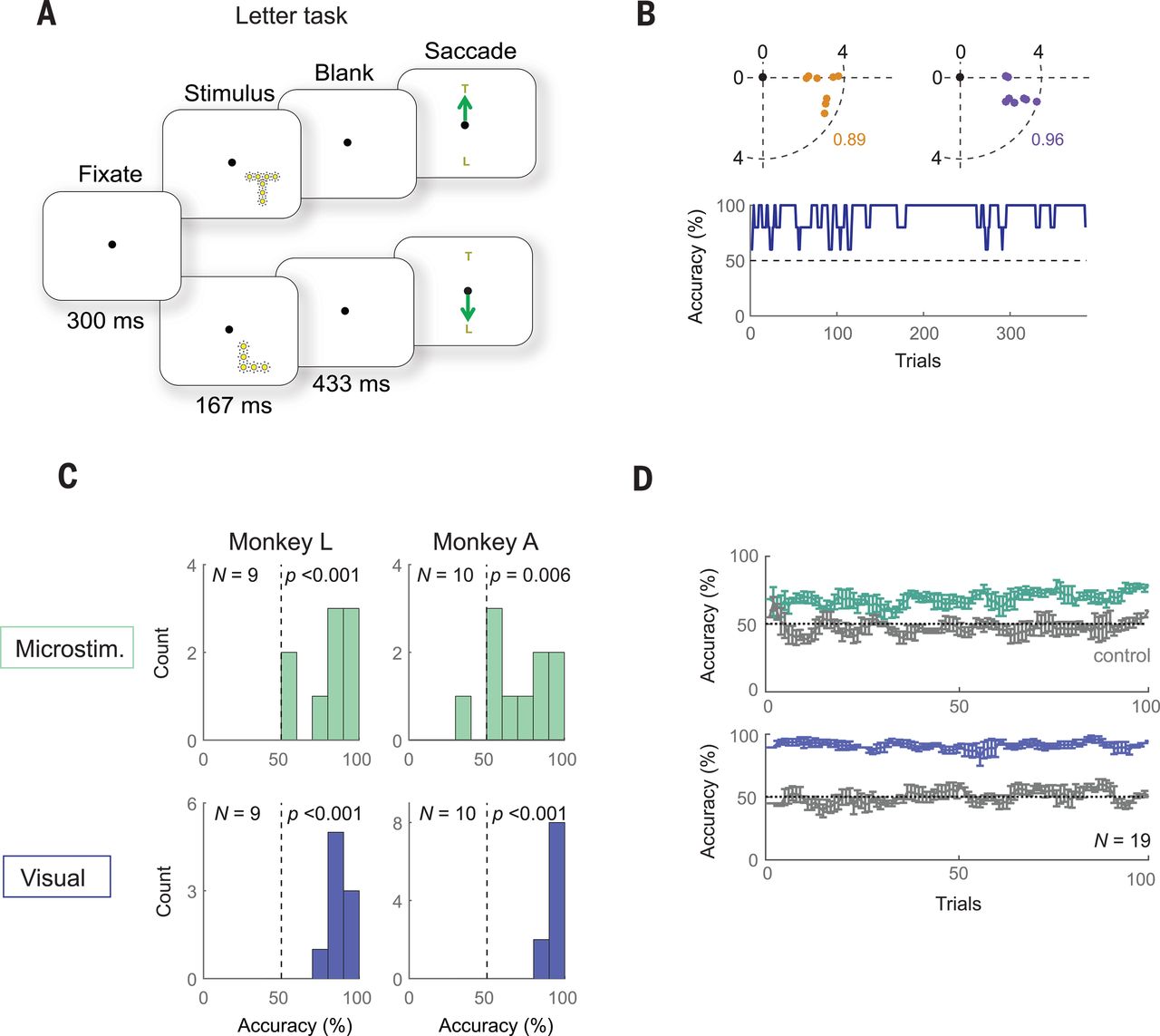

A recent article published in Science by Chen et al. titled ‘Shape perception via a high-channel-count neuroprosthesis in monkey visual cortex’ details an experiment where a 1024-channel prosthesis was implanted in the visual cortex of non-human primates (NHP, in this case macaque monkeys). From similar experiments on human subjects, we know that the perceived dots (phosphenes) seem to range in size from a pinpoint to a few centimeters at arm’s length and can differ in perceived color, presumably depending on which neurons in the visual cortex get stimulated more strongly.

Unique in this experiment was the use of intracortical electrodes (Utah arrays) where previous experiments would usually use conductors on the surface of the visual cortex. This allowed for lower currents to induce the desired response in the target area (V1) of the visual cortex, the effect of which was measured in a higher cortical area (V4).

The goal of the experiment was to determine whether the monkeys could recognize the shapes that showed up for them as a grouping of phosphenes. If they pointed out the right shape afterwards, they were rewarded. The same was done with the determination of motion: here the monkeys had their eye motions monitored to see which direction the phosphenes were perceived to be moving.

A major limitation with a study like this one is that it involves human researchers interpreting the actions of monkeys who are interpreting input from said researchers. As noted by Chen et al., the occasional drops in accuracy could very well have been due to a lack of motivation on the side of the monkeys, especially at the end of a recording session.

Despite the relatively promising results of the study, with a generally above-chance result during recording sessions, moving such studies to human subjects in order to turn them into a medical product would be highly complicated. This is not only due to the need to cover the full visual cortex surface (25 to 30 cm² mean area per hemisphere), but also due to the need to further increase the resolution of the array and to develop a wireless version with electrodes that can stay in place for decades without causing damage to the surrounding tissue.
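A quick back-of-the-envelope calculation gives a sense of the scale-up involved. The sketch below assumes a flat square grid of electrodes at a 0.4 mm pitch; that spacing is an assumption picked for illustration, not a figure from the study, and the real cortex is folded rather than flat, so treat the result as a rough order of magnitude only.

```python
def electrodes_needed(area_cm2: float, pitch_mm: float) -> int:
    """Electrodes needed to tile a flat area on a square grid of given pitch."""
    area_mm2 = area_cm2 * 100.0  # 1 cm^2 = 100 mm^2
    return round(area_mm2 / pitch_mm ** 2)


ASSUMED_PITCH_MM = 0.4      # assumption for illustration, not from the study
CHANNELS_IN_STUDY = 1024    # channel count of the prosthesis described above

for area_cm2 in (25, 30):   # mean V1 area per hemisphere quoted in the text
    count = electrodes_needed(area_cm2, ASSUMED_PITCH_MM)
    ratio = count / CHANNELS_IN_STUDY
    print(f"{area_cm2} cm^2: about {count} electrodes, "
          f"{ratio:.1f}x the 1024-channel prosthesis")
```

Under these assumptions a single hemisphere would need 15,625 to 18,750 electrodes, roughly 15 to 18 times the channel count used in the monkey experiment, before any increase in resolution.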

The End of the Proverbial Tunnel

Seeing these results from different studies following different paths towards accomplishing ultimately the same goal would seem cause for careful optimism. As with all scientific studies, there is no guarantee that a particular approach will turn into a viable therapy within a matter of years. Some will never make it out of the laboratory, but may spawn new ideas and new approaches.

In the case of phosphenes, they have been known about since the 1920s and were experimented with in the second half of the last century, but the technology to (safely) create brain implants has taken much longer. Similarly, the concept of epigenetics in its current definition, along with its reprogramming, has been around for a while, but has seen major advances in the past few years.

Regardless, thanks to the tireless efforts by countless scientists around the globe, it seems that we may actually reach a point in the near future where blindness has become a thing of the past.

The path from stem cells to tissue cells looks to me like the Game of Life: a small seed that initially diverges into a multitude of patterns, which after some time converge again into a more complex pattern. Unfortunately the ending pattern is not a living organism anymore, though it can sustain its stable form for decades.

So the difficulty in stopping aging lies in changing the original seed so that it can converge to a truly stable pattern, instead of simply applying a brake to the existing processes.

Article about testing implants on animals… Bold move Cotton, let’s see how it pays off.

Also don’t forget, that one needs to make some blind mice before he/she can make them not blind. And most of the research on stem cells, and all of it on development of the eye and ocular nerve is done using aborted and miscarried fetuses and fertilized eggs from in vitro process, that weren’t implanted.

Strangely, as someone who lost his left eye due to communism and glaucoma, and has a barely working right eye, I’m quite okay with experimentation on animals, or with using stem cells and human tissues from those “morally questionable” sources. And I would happily undergo an experimental gene therapy if it could fix my broken left eye (for starters)…

Interesting but just remember that there are human cells that regain immortality, cancer, so just be careful.

In previous studies, 4 genes were activated that expressed the Yamanaka Transcription Factors. This induced pluripotency, but could also cause cancer. But in this experiment, since they only wanted regeneration, they excluded Myc, the cancer-causing and pluripotency inducing gene of the bunch.

So by only introducing 3 of those genes instead of all 4, they induced growth with no cancer. They were also able to turn the regeneration on and off at their leisure. I find that incredible!

Well, a retina detachment is no fun.

Why they can’t implant a whole already functioning eye and have it connect to the optic nerve is beyond my understanding.

Would I like to have 20/20? Yep if it were 40 years ago.

Now I’m too old and set in my ways to care.

With transplants the hardest thing is nerve regeneration. It’s very hard with hands; with something as complex as the optic nerve, well, we can’t even regenerate a spine. Surgically, though, it would be fairly easy.

They actually did spinal regeneration in mice a few years back. IIRC, they used stem cells, mice regained 40% of sensation and movement in their hind legs and tail. The researchers removed 5mm (0,2″) of the spinal cord first, so it was true regrowth. Results might be better for severed or damaged spinal cord…

The best option for ocular transplant would be to grow new eye using the genome of the patient. We actually do that when growing some skin grafts with cells taken from the foreskin (and clitoral hood, for the women), IIRC…

Oh, I didn’t know that about mice, and if it could work bridging an optic nerve to an eye implant, even at 40% (hell most people would accept 25%) would be fantastic. But regrowing a whole eye from cells seems difficult, the structures take a long time to form (I’d assume months) and how are you going to maintain blood flow to the growing structure? But if it could be done I say bring it on.

wheres the star trek reference with laforges glasses? wheres the picture of laforge looking cool in his futuristic shades done by HADs resident artist?

you missed a BIG scifi reference in there

Neat… I’m all about perfecting the bio-equivalent methods, which are kind of like an autograft-like method without doing any grafting. Like dude… just resetting the individual’s specific cells to their youthful state, with the healthiest factors that do so, is the way. Seems even some genetic engineering to improve adverse pre-existing conditions would be a great advancement to have in the toolbox also.

I have the XLHED, though really can use new bio-equivalent teeth now. I suppose hair where used to be in balding regions would be nice. Functioning senses when I’m in my 100’s will be just great too. I’m content with the rest of the XLHED expressed traits for the most part.

Now, in regards to less morbid approaches… seems an advancement from the phosphenes that is already known in certain circles would be the television method like in this video though transmitting images to the brain versus hacking what the eye sees images going to the brain:

https://www.youtube.com/watch?v=FLb9EIiSyG8

Lens regeneration has been done, both in animals and in humans. But in humans the application has been limited to a few infants born with cataracts. The process involves removing all of the lens except for the outermost layer of cells inside the lens capsule. Those cells then divide and re-form a clear lens. Don’t know why it hasn’t been tried in adults. Probably far too much money in artificial lens replacements that still require glasses for some viewing ranges.

Almost every human starts getting presbyopia starting around age 40, with a few years of slowing focal response preceding being unable to focus at close range and requiring reading glasses. The cause is an enzyme in the lens that depletes over time and once the level drops to a certain level, the lens starts to get stiff and less able to change shape. Eye drops were developed in China to carry the enzyme through the cornea to the lens, making it flexible again. The big problem was developing a carrier to get the non-water soluble enzyme through the cornea, without having the carrier have any adverse effects. I didn’t find any info on how long the effect lasts but Bausch and Lomb was doing their own R&D on it.

Damaged corneas have been regenerated with a pretty simple stem cell allograft. There are cornea stem cells around its edge, called the limbus. Limbal stem cells are constantly dividing and making new cornea cells, which migrate toward the center of the cornea. Problem is the process is very slow. If a person has part of one limbus undamaged, stem cells can be harvested from it, cultured, then spread across the full surface of both corneas. Having direct access to the whole cornea, the stem cells get to work dividing and replacing the damaged cells.

Spinal cord injuries can be fixed with an allograft of sheath cells from the olfactory nerve. Starting with mice, then rats, followed by dogs injured in accidents, the procedure was very successful at restoring function past the spinal injury site. But as yet I’ve heard of it being used on just one human, seven years after he was stabbed in the back, totally severing his spinal cord. The latest info I could find is now some years old but he was getting around with a walker. Why is this not THE procedure being done for most spinal cord injuries? How research got started on it was that it was noticed that, of all the nerve tissue in mammals, the sheath cells of the olfactory nerve regenerated the fastest, and it was postulated that they might be able to act as a bridge for damaged parts of the spinal cord.

Brilliant Blue G dye (what makes blue m&m candy blue) has been shown to reduce inflammation of nerves, including the spinal cord, after injury. It’s the inflammation and stress from it that causes much of the damage. It worked in rodent tests but the amounts given temporarily turned their skin and eyes blue. So why hasn’t this progressed to larger animal and human tests? Having it as a part of paramedic kits could go a long way towards reducing the number and severity of debilitating spinal cord injuries.

I’d be saying “Hey Doc. Make me a Fremen Smurf then stick a needle up my nose!” halt the damage, then repair it so people can walk instead of having to use a wheelchair or some other device to get around the rest of their lives.

There have been so many things over the past 30 years that showed promise of prodding the human body’s latent abilities to repair itself, or treatments that stop it from further damaging itself after injuries, and other *cures* that once done need no further *treatment*, but they come, they make a brief news splash, then are never heard of again.

(Very slow) human testing is mostly a bureaucratic problem… As someone suffering from ALS, I can tell you that such experimental treatments would have a substantial line of patients willing to risk it if the treatment had any reasonable chance of improving their situation and was reachable (financially).

In some ways, the research into how the human body works and the technology to study and tweak it seems kind of like the development of electronics and computers. It seems like we’re at the stage where the research is expanding exponentially, building upon itself and finding so many new directions to go at the same time. If we look at the path taken by computer development and try to imagine a similar growth in understanding of the human body, it feels both exciting and perhaps scary to consider what will come next. On the one hand, we will find cures for all kinds of diseases, as well as ways to repair all kinds of issues. On the other hand, we may also unlock some doors which will have profound consequences for mankind and society. Questions regarding immortality, designer children, super-human capabilities, and trans-human beings may very well become issues before anyone knows how to deal with them. Even simpler issues, such as mood-controlling drugs or pills to erase the effects of bad diet or other poor lifestyle choices, may be a challenge for us once such things become ubiquitous. Already, in fact, we are having to deal with the effects of modern medicine and technology: the number of older people is becoming a larger and larger percent of the population in developed countries. Another issue: as we are more able to cure deafness, we are also diminishing the culture of deafness.

Every new miracle cure brings with it questions that we’re not always prepared to answer.

As far as a grid array on the visual cortex, I thought that had been done 17 years ago by a DARPA program, and on a human subject.

https://en.m.wikipedia.org/wiki/William_H._Dobelle

Dobelle was one of the successful scientists, although there were several other successful attempts: one in the 1950s (Plumb) and one in the 1960s (Brindley, Lewin).

Concerning the article (if I remember correctly):

The first phosphenes caused by voltage applied to the visual cortex were observed during experiments by Förster in 1929. He experimented on a guy who had lost part of his skull during the war.

The term phosphene appears at least as early as 1755, when a French physician applied voltage to the head of a blind man who had lost his vision to fever.

This experiment was basically the first in the long search for a possibility to restore vision.