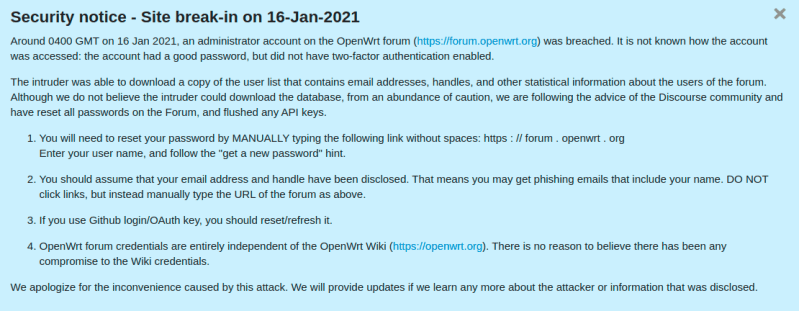

OpenWRT is one of my absolute favorite projects, but it’s had a rough week. First off, the official OpenWRT forum is carrying a notice that one of the administrator accounts was accessed, and the user list was downloaded by an unknown malicious actor. That list is known to include email addresses and usernames. It does not appear that password hashes were exposed, but just to be sure, a password expiration has been triggered for all users.

The second OpenWRT problem is a set of recently discovered vulnerabilities in Dnsmasq, a package installed by default in OpenWRT images. Of those vulnerabilities, four are buffer overflows, and three are weaknesses in how DNS responses are checked — potentially allowing cache poisoning. These seven vulnerabilities are collectively known as DNSpooq (Whitepaper PDF).

Favicon Fingerprints

One of the frustrating-yet-impressive areas of research is browser fingerprinting. You may not have an account, and may clear your cookies, but if an advertiser wants to track you badly enough, there has been a steady stream of techniques to make it possible. A new technique was just added to that list: favicon caching (PDF). The big problem here is that the favicon cache is generally not sandboxed even in incognito mode (though thankfully not *saved* in incognito mode), and is not cleared with the rest of the browser data. To understand why that’s a problem, ask a simple question. How hard is it to determine if a browser has a cached copy of a favicon?

The exact scheme the authors suggest is to use multiple domains with distinct favicons, each one representing a single bit. Each user is assigned a different value by being sent through a redirect chain that visits only the domains for the bits set in that value. As each favicon is loaded and cached, its bit is set to true. You may wonder, how does that data get read back by the server? The client is handled in a read-only mode, where every domain is visited and each favicon request returns a 404, so nothing new is cached. By watching the requests come in, the server can rebuild the unique identifier: a favicon that gets requested is not in the cache (a zero), and a favicon that is never requested is already cached (a one).

When I first read about this technique, a potential catch-22 stood out as a problem. How would the server know whether to put a new connection into a read mode, or a write mode? It seems, at first glance, like this would defeat the whole scheme. The researchers behind favicon fingerprinting have an elegant solution for this. The favicon for a single fixed domain works as a single bit flag, indicating whether this is a new or returning user. If the browser requests that favicon, it is new, and can be funneled through the identifier writing process. If the initial favicon isn’t requested, then it should be treated as a return visit, and the ID can be read. Overall, it’s a fiendishly clever way to track users, particularly since it can even work across incognito mode. Expect browsers to address this quickly. The first step will be to sandbox cached favicons in incognito mode, but the paper calls out a few other possible solutions.
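The write/read split and the new-or-returning flag are easier to follow in code. What follows is only a toy simulation of the idea as described above, not the researchers' implementation: the `Browser` class, the `tracker.example` domain names, and the 8-bit identifier width are all made up for illustration, and no real HTTP traffic is involved.

```python
N_BITS = 8                        # assumed identifier width, one domain per bit
FLAG = "flag.tracker.example"     # hypothetical fixed "new or returning" domain
BIT_DOMAINS = ["bit%d.tracker.example" % i for i in range(N_BITS)]


class Browser:
    """Toy browser whose favicon cache survives between visits."""

    def __init__(self):
        self.favicon_cache = set()

    def visit(self, domain, serve_icon):
        """Visit a domain. Returns True if a favicon request was sent."""
        if domain in self.favicon_cache:
            return False          # icon is cached, so the server sees no request
        if serve_icon:
            self.favicon_cache.add(domain)   # a 200 response gets cached
        return True               # a 404 response leaves the cache untouched


def track(browser, next_id):
    """Server side: returns (user_id, is_new_user)."""
    if browser.visit(FLAG, serve_icon=True):
        # The flag favicon was requested, so this is a new browser.
        # Write mode: redirect only through the domains whose bit is 1.
        for i, domain in enumerate(BIT_DOMAINS):
            if (next_id >> i) & 1:
                browser.visit(domain, serve_icon=True)
        return next_id, True

    # Read mode: visit every domain and answer each favicon request with a 404.
    user_id = 0
    for i, domain in enumerate(BIT_DOMAINS):
        requested = browser.visit(domain, serve_icon=False)
        if not requested:         # no request means the icon was cached: bit is 1
            user_id |= 1 << i
    return user_id, False
```

Calling `track()` twice on the same `Browser` object hands back the same ID the second time, even though nothing resembling a cookie was ever stored.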

Orbit Fox

The long-standing pattern in WordPress security is that WordPress itself is fairly bulletproof, but many of the popular plugins have serious security problems just waiting to be found. The plugin of the week this time is Orbit Fox, with over 400,000 active installs. This plugin provides multiple features, one being a user registration form. The problem is a lack of server-side validation of the form response. On a site with such a form, all it takes is to tweak the response data so user_role is now set to “administrator”. Thankfully, this vulnerability is only exposed when using a combination of plugins, and the problem was disclosed and patched back in December.
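To make the missing check concrete, here is a minimal sketch of the kind of server-side validation that closes this class of hole. It is not the actual Orbit Fox patch (the plugin is PHP, and the real fix may look quite different); the function name, the allowlist, and the default role are all hypothetical.

```python
# Hypothetical allowlist: roles a visitor may ever receive through self-registration.
SAFE_ROLES = frozenset({"subscriber", "customer"})
DEFAULT_ROLE = "subscriber"


def role_for_new_user(form_data):
    """Pick the role on the server, never trusting the submitted value as-is."""
    requested = form_data.get("user_role", DEFAULT_ROLE)
    # Anything outside the allowlist (for example "administrator") is ignored.
    return requested if requested in SAFE_ROLES else DEFAULT_ROLE
```

The point is simply that the form may suggest a role, but the server has the final say.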

Patch Tuesday

Another Patch Tuesday has come and gone, and the Zero Day Initiative has us covered with an overview of what got fixed. There are two patched vulnerabilities that are noteworthy this month. The first is CVE-2021-1647, a flaw in Microsoft Defender. This one has been observed in-the-wild, and is an RCE exploit. If your machine is always online, you probably got this patch automatically, since Windows Defender has its own automatic update system.

The second interesting problem is CVE-2021-1648. This vulnerability is the result of a flubbed fix for an earlier problem. From Google’s Project Zero: “The only difference between CVE-2020-0986 is that for CVE-2020-0986 the attacker sent a pointer and now the attacker sends an offset.” Thankfully this is just an elevation of privilege flaw, and has now been properly fixed, as far as we can tell.

Hacking Linux, Hollywood Style

Hacking Hollywood style occasionally works. You know the movies, where an “elite hacker” frantically mashes on a keyboard while blathering nonsense about firewalls and reconfiguring the retro-encabulator. Sometimes, though, keyboard mashing actually finds security problems. In this case, a screensaver lock can be broken by typing rapidly on a physical keyboard and the virtual on-screen keyboard at the same time. The text from the bug report is golden: “A few weeks ago, my kids wanted to hack my linux desktop, so they typed and clicked everywhere, while I was standing behind them looking at them play… when the screensaver core dumped and they actually hacked their way in! wow, those little hackers…”

Zoom Unmasking

Imagine with me for a moment, that you work for Evilcorp. You’re on a Zoom call, and the evil master plan is being spelled out. You’re a decent human being, so you start a screen recorder to get a copy of the evidence. You’ve got the proof, now to send it off to the right people. But first, take a moment to think about anonymity. When your recording hits the nightly news, will Evilcorp be able to figure out that it was you that leaked the meeting? Yes, most likely.

There is a whole field of study around the embedding, detecting, and mitigating of identifying information in audio, video, and still images. This discipline is known as steganography, and fun fact, it’s named after a trio of books, “Steganographia”. The first was written in 1499, and Steganographia appears to be a set of mystical texts about how to communicate over long distances via supernatural spirits. In a very satisfying twist, the books are actually a guide to hiding encrypted messages in written texts, the whole thing hidden using the techniques described in the hidden text. For our purposes, we could divide the field of steganography into two broad categories: intentionally hidden data, and unintentionally included indicators.

At the top of the list of intentional data is Zoom’s watermarking feature. The call administrator can enable this, which embeds unique identifiers in the call audio, video, or both. The video watermarks are pretty easy to spot: your username overlaid onto the image. There’s no guarantee that there isn’t also sneakier watermarking being done. Next to consider is metadata. Particularly if you pulled out a cellphone to make the recording, that file almost certainly has time and location data baked into it. A GPS coordinate makes for quite the easy identifier when it points right at your house.

Unintentional steganography could be as simple as having your self-view camera highlighted at the top of the call. There are more subtle ways to pull data out of a recording, like looking at the latency of individual call members. If speaker B is a few milliseconds ahead in the leaked version, compared to all the others, then the leaker must be physically close to that call member. It’s extremely hard to cover all your bases when it comes to anonymizing media, and we’ve just scratched the surface here.
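The latency argument boils down to a bit of arithmetic. As a rough sketch only, assume an investigator has somehow measured when each speaker's audio shows up in a reference copy of the call and in the leaked copy; the numbers, the function name, and the idea of comparing against the median shift are all illustrative assumptions, not a description of any real forensic tool.

```python
import statistics


def speaker_running_ahead(reference_ms, leaked_ms):
    """Return the speaker whose audio is most ahead in the leak, relative to the rest."""
    # How much later (in ms) each speaker appears in the leak than in the reference.
    shift = {name: leaked_ms[name] - reference_ms[name] for name in reference_ms}
    # The median shift is the overall offset between the two recordings.
    typical = statistics.median(shift.values())
    # The speaker furthest below the typical shift is the one "ahead" in the leak.
    return min(shift, key=lambda name: shift[name] - typical)


# Made-up arrival times in milliseconds, purely for illustration.
reference = {"A": 100, "B": 250, "C": 400}
leaked = {"A": 140, "B": 268, "C": 441}
print(speaker_running_ahead(reference, leaked))
```

With these invented numbers, speakers A and C shift by about 40 ms while B shifts by only 18 ms, so B is the one who stands out.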

“The long-standing pattern in WordPress security is that WordPress itself is fairly bulletproof, but many of the popular plugins have serious security problems”

Ah, so that’s why HaD is so basic.

it’s not basic, it’s less insecure ;-)

That’s a feature…

Another year of buffer overruns from crappy C code (there is no other kind of C code).

So, which computer language is so versatile as to be run on most computation architectures, and so close to the machine code to allow fine tuning and compactness of execution, should I look into learning?

Rust.

The language itself contains fine-tuning mechanisms like `#[naked]` functions for writing interrupt handlers and other superlowlevel things which C misses and must be added via GCC specific nonportable pragmas and __attribute__s.

Number of available targets including MCUs galore is quite brutal also thanks to LLVM layer. https://rust-lang.github.io/rustup-components-history/

They also have official documentation for MCUs on their https://www.rust-lang.org/learn documentation landing page. Look for *Embedded book*. MCUs are not a niche area in Rust.

From that list: You’ll need at least a 32-bit ARM chip before it would run and qualify for the you-are-on-your-own tier. The notable exception is the MSP430.

I’ll just leave it here: https://www.avr-rust.com/ (8bit and it works. I’ve personally tried it.)

Regarding 32bit there are also RISC-V targets.

Thanks [Grawp], I’ll look into it.

one can inline assembly with C, so how low do you want to go exactly?

Saying this in HaD comments section is a blasphemy! :D

Soon here will come crusaders with their teachings of how system languages like Rust are immature and they’ll try to shove their dogma that [the ability of doing buffer overruns and pointer aliasing is a feature] down your throat!

I think the real take-away here is that children have utility for fuzz testing software.

I think the takeaway for me is that a larger attack surface equals more potential bugs. Like did they really need/want the virtual keyboard ?

I kind of like the OpenBSD philosophy, extremely basic default install, and then YOU need to enable (and configure) what you actually want. OK, for 99.999% of users this would be a living hell (I do not know what there is, how do I know what I should want ?, what do I need ?), but I can still admire the philosophy.

Any computer with X11 installed is a security joke.

Totally true. But in the past (~20 years ago) I have securely* logged into machines 1,000+ km (~600+ miles) away and worked on them like they were sitting directly in front of me (albeit a bit slower). Going forward that is something that is currently lacking in Wayland, which may not be a bad thing (do one thing and do that one really well). I think that I was using a diskless SPARCclassic (50 MHz) at that time (booted from the network), so it would have been 10BaseT. I do not remember what the WAN link was but it was not much.

Yes the security in X11 is very bad, because it is just so complex – it has a massive attack surface, but Wayland makes UNIX feel more like Microsoft windows (single user desktop). One step forward, two steps back. If you have ever seen the full set of physical programming manuals for X11, it was basically half a tree’s worth of wood pulp. Maybe X11 was being far too ambitious, the systemd of its day. But I have used it over 2Mbit/s links and it works.

* X was restricted to the loopback address (using multiple firewalls) and X11 was forwarded from the remote machine over ssh to my client.

Modern desktop remote protocols use compressed streams and partial updates, no need to pass individual graphics primitives over the net. This is progress, not regression. Look forward to a bright future and stop whinging over what was.

“One step forward, two steps back. If you have ever seen the full set of physical programming manuals for X11, it was basically half a trees worth of wood pulp.”

Had, don’t miss my CDE days.

@X I was not “whinging over what was”, I was pointing out even a “security joke” can historically have mitigations put in place to minimise the attack surface. Personally I do not like Wayland nor systemd, I think both are a misstep and both will eventually be upgraded, but that is just my opinion having worked with UNIX for nearly 40 years, starting with SunOS and DEC Ultrix.

I am actually a little worried that no OSK will be a default in everything-buntu now, I have been dealing with some systems that need some configuration before you can get a hardware keyboard working with them. I know that’s not real typical, but it was handy for me. I might have to use specific “accessibility” targeted distros instead or something.

On the other hand. I gotta remember this trick for as long as it works, I’ve got one really dumb machine (It’s a superannuated Turion dual core hanging together with spit and string, a real frankenstein hardware wise) that the login freezes on about once in 50 times coming out of screensaver, doesn’t crash all the way, now I could switch to a terminal and shutdown or kill things that way, but it’s PITA (Usually because I forget exactly how and have to look it up) and I would find it helpful if I could just hammer the thing into submission, so I could close some things more gracefully (Make sure they save stuff) and restart the godforsaken piece of trash.

I still have an old serial terminal for logging into machines with busted consoles. Just start a getty on the serial port and you’re in.

They don’t have native serial ports and I have not yet determined whether a USB serial adapter will autoconfigure at boot to enable serial login.

Life is way too short for messing with frankenstein hardware. I focus on results and I discard stuff that gets in my way.

Not just software but basically everything that can be fuzzy tested.

A few years ago I was tasked with figuring out why the light switches in the corridor of a flat were sometimes causing circuit breakers to trigger and why one didn’t work at all any more.

One side of each of the ~5 switches was connected to one latching relay in the breaker panel but, as it turns out, the other sides were not on the same phase of the 3-phase system.

-> When children were playing around and mashing two switches on different phases at the same time they immediately short circuited over ~400V AC :-)

That’s not something any “normal” test would notice and the electricians before me fucked up other stuff in that house too (I replaced ~three 500mA fault current GFCIs with “standard” 30mA ones and after that every time a specific motion detector triggered one or two of the new GFCIs would trip. -> Same problem as above: The motion detector was wired across two of those GFCIs *shakeshead*).

Yikes, that sounds like quite the mess to untangle. Is 3-phase power common in residential settings across the pond? I wired my house, but it was all single leg split-phase, real simple stuff (And got it properly inspected, for the record). I can do that all day long, but I don’t want to touch 3-phase power. Sounds like black magic to me.

The author of the Favicon tracking paper is pretty disingenuous. The technique is nothing new. Here’s a 2013 stack overflow post describing it: https://stackoverflow.com/questions/16828763/use-favicon-to-track-user-visit-to-a-website

Furthermore, Firefox was not vulnerable to this technique, so the first thing the author did was submit a Trojan horse bug report to introduce the vulnerability to Firefox: https://bugzilla.mozilla.org/show_bug.cgi?id=1618257

So the 2013 post is something similar. This paper is a bit of a novel development past that. This technique used fake URLs to store unique identifier, rather than just detect if a user has accessed a site before. But yeah, good catch.

And that bug report… Yeah, that looks bad. I’m willing to give the author the benefit of the doubt, that it wasn’t intentional, but it should have been handled better. For what it’s worth, he just responded, and accepted some blame: https://bugzilla.mozilla.org/show_bug.cgi?id=1618257#c10

OpenWrt, not OpenWRT… (ref: https://forum.openwrt.org/t/what-does-wrt-stand-for/27074/5)

It occurs to me that fuzz-testing and Muntzing could have some very interesting convergence topologies.

https://en.wikipedia.org/wiki/Muntzing