It isn’t just a spy movie trope: secret messages often show up as microdots. [The Thought Emporium] explores the history of microdots and even makes a few, which turns out to be, to quote the video you can see below, “both easier than you might think, and yet also harder in other ways.”

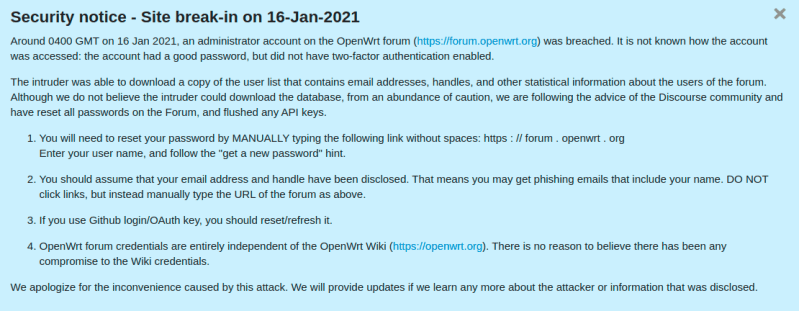

If you want to hide a secret message, you really have two problems. The first is encoding the message so that only the recipient can read it. The second, in many cases, is keeping the very existence of the message secret. After all, if an enemy spy sees you with a folder of encrypted documents, your cover is blown even if they don’t know what the documents say.

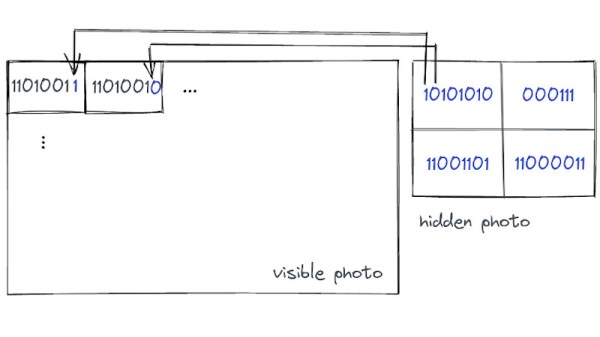

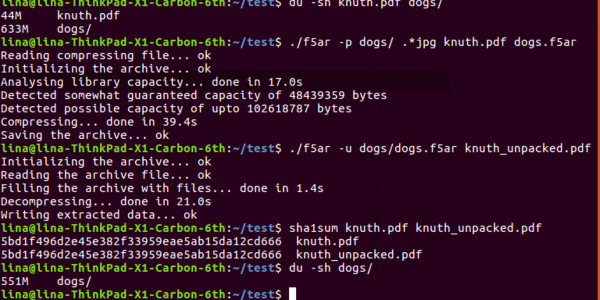

Today, steganography techniques let you hide messages in innocent-looking images or data files. However, for many years, microdots were the gold standard for hiding secret messages and clandestine photographs. A microdot is typically no more than a millimeter across, which makes it easy to hide in plain sight.
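
If you are curious what the digital version of the trick looks like, here is a minimal sketch of one common approach, least-significant-bit (LSB) steganography. It is not from the video; the function names `hide` and `reveal`, the 16-bit length prefix, and the use of a plain byte string as a stand-in for image pixel data are all assumptions made for illustration.

```python
def hide(cover: bytes, message: bytes) -> bytes:
    """Hide `message` in the lowest bit of each byte of `cover`.

    `cover` stands in for raw pixel values (an assumption for this sketch).
    """
    # Assumed framing: a 16-bit big-endian length prefix, then the message.
    payload = len(message).to_bytes(2, "big") + message
    # Flatten the payload into bits, most significant bit first.
    bits = [(byte >> shift) & 1 for byte in payload for shift in range(7, -1, -1)]
    if len(bits) > len(cover):
        raise ValueError("cover data is too small for this message")
    out = bytearray(cover)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the payload bit.
        out[i] = (out[i] & 0xFE) | bit
    return bytes(out)


def reveal(stego: bytes) -> bytes:
    """Recover a message written by `hide`."""

    def read_bytes(start_bit: int, count: int) -> bytes:
        result = bytearray()
        for b in range(count):
            value = 0
            for k in range(8):
                # Rebuild each byte from eight consecutive low bits.
                value = (value << 1) | (stego[start_bit + b * 8 + k] & 1)
            result.append(value)
        return bytes(result)

    length = int.from_bytes(read_bytes(0, 2), "big")
    return read_bytes(16, length)


if __name__ == "__main__":
    cover = bytes(range(256))  # placeholder for real pixel data
    stego = hide(cover, b"meet at dawn")
    print(reveal(stego))
```

Each cover byte changes by at most one, which is why the altered image looks innocent to the eye. Note that this only hides the message; it does nothing to encrypt it, so it addresses the second of the two problems above, not the first.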