Ever since the world decided to transition from mechanical ball mice to optical mice, we have been blessed with computer pointing devices that don’t need regular cleaning and perform much better than their ancestors. They do this by using what is essentially a tiny digital camera to track movement across the surface. As we’ve seen before, it is possible to convert this mechanism into an actual camera, but until now we haven’t seen anything like this done with a high-performance mouse designed for FPS gaming.

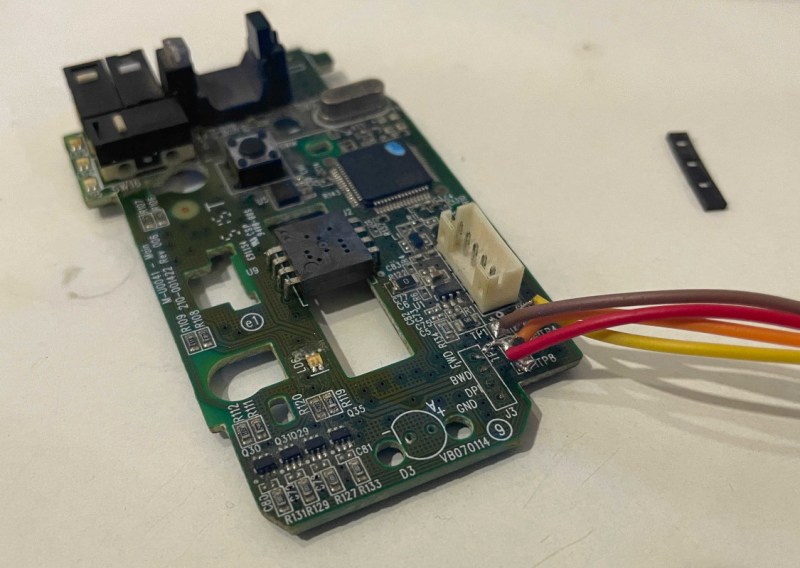

For this project [Ankit] is disassembling the Logitech G402, a popular gaming mouse rated at up to 4000 dpi. Normally the sensor’s output is processed inside the mouse to translate movement into cursor motion, but this mouse conveniently has a familiar STM32 processor with an SPI interface already broken out on the PCB, which makes it quick to connect to for gathering image data. [Ankit] created a custom USB vendor-specific endpoint and wrote a Linux kernel module that parses the data and passes it to a custom GUI program, which displays the image captured by the mouse sensor on-screen.
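On the host side, the last step is turning a raw frame into something you can look at. The following is a minimal Python sketch, not [Ankit]’s code: it assumes each frame arrives as a flat, row-major buffer of 32×32 8-bit brightness values (the resolution one commenter reports), and the names `frame_to_rows` and `ascii_preview` are hypothetical.

```python
FRAME_W = 32  # assumed width, based on the 32x32 resolution reported in the comments
FRAME_H = 32  # assumed height


def frame_to_rows(buf: bytes, width: int = FRAME_W, height: int = FRAME_H) -> list[list[int]]:
    """Split a flat, row-major buffer of 8-bit pixels into a list of rows."""
    if len(buf) != width * height:
        raise ValueError(f"expected {width * height} bytes, got {len(buf)}")
    return [list(buf[r * width:(r + 1) * width]) for r in range(height)]


# Characters ordered from dark to bright for a quick terminal preview.
SHADES = " .:-=+*#%@"


def ascii_preview(rows: list[list[int]]) -> str:
    """Map each pixel onto SHADES, stretching contrast to the frame's own min/max."""
    lo = min(min(r) for r in rows)
    hi = max(max(r) for r in rows)
    span = max(hi - lo, 1)  # avoid division by zero on a perfectly flat frame
    lines = []
    for row in rows:
        lines.append("".join(SHADES[(p - lo) * (len(SHADES) - 1) // span] for p in row))
    return "\n".join(lines)
```

For example, `print(ascii_preview(frame_to_rows(buf)))` renders a 1024-byte frame as 32 lines of text. Stretching to each frame’s own min/max makes low-contrast surfaces visible, at the cost of exaggerating noise on nearly uniform ones.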

It’s probably best not to attempt this project if you plan to reuse the mouse, as the custom firmware appears to render it useless as an actual mouse. But as a proof of concept, this high-performance mouse works fairly well as a camera, albeit with very low resolution by modern digital camera standards. It is still a big improvement over older mouse-camera builds we’ve seen, thanks to the high-performance sensors in gaming mice.

There are programs floating around the internet that let you see the raw image from optical mice. They make interesting random number generators, not to mention text readers. Stitch all the images together using the position data, add lots of hand-waving, and you’ve got a scanner.
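The “stitch the images together using the position data” idea boils down to pasting each frame onto a larger canvas at its accumulated offset. A minimal Python sketch, assuming the per-frame motion deltas are already expressed in image pixels (real sensors report counts that would need rescaling, which is part of the hand-waving) and that the newest frame simply overwrites older ones where they overlap; `stitch` is a hypothetical name.

```python
def stitch(frames, deltas, canvas_w, canvas_h, origin=(0, 0)):
    """Paste frames onto a canvas at positions accumulated from per-frame deltas.

    frames: list of 2D lists (rows of pixel values).
    deltas: deltas[i] is the (dx, dy) motion from frame i-1 to frame i, in image
            pixels (an assumption); use (0, 0) for the first frame.
    Canvas cells that no frame covers stay None.
    """
    canvas = [[None] * canvas_w for _ in range(canvas_h)]
    x, y = origin
    for frame, (dx, dy) in zip(frames, deltas):
        # Dead reckoning: each frame's position is the running sum of deltas.
        x += dx
        y += dy
        for r, row in enumerate(frame):
            for c, pixel in enumerate(row):
                cy, cx = y + r, x + c
                # Ignore pixels that land outside the canvas.
                if 0 <= cy < canvas_h and 0 <= cx < canvas_w:
                    canvas[cy][cx] = pixel  # newest frame wins on overlap
    return canvas
```

A real scanner would blend overlapping frames and correct for accumulated drift instead of overwriting, but the position bookkeeping is the same.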

There used to be ADNS sensors with a simple serial interface that let you access the image data directly. Where’d all those go?

They unilaterally decided to stop selling to the proletariat: https://www.mouser.com/PCN/Avago-5-16-12-Avago%20NID%20announcement%20letter%20May%202012.pdf

We serfs aren’t worthy of those toys. They’re only for the big companies to build products out of, for us to dutifully consume.

But I saw the EOL notice and bought a half dozen before they pulled the plug anyway, just because.

And they still have the guts to start such a letter with “Dear valued Customer”.

The only way they value their customers is by the amount of money they can extract by attempting to create artificial shortages. Just like the USB contortion Corporation, where you have to cough up USD 5000 with the sole benefit of becoming the owner of a 16-bit number. (You have to pay extra for the rights to use the USB icon.)

That sort of makes sense, though, because otherwise those 16 bits would run out very quickly and be wasted. Maybe they should have gone with more than 16 vendor bits, though!

The resolution appears to be 32×32; there’s one dead pixel.
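If you can capture a stack of frames while moving the mouse over a textured surface, a dead pixel stands out as one whose value barely changes from frame to frame. A rough Python sketch of that check; `find_stuck_pixels` and its `max_range` tolerance are hypothetical, and the approach assumes the scene actually changes between frames.

```python
def find_stuck_pixels(frames, max_range=0):
    """Return (row, col) of pixels whose values span <= max_range across all frames.

    frames: non-empty list of equally sized 2D lists, captured while the scene
    under the sensor is changing. A pixel that does not respond to a changing
    scene is a dead or stuck candidate.
    """
    height, width = len(frames[0]), len(frames[0][0])
    stuck = []
    for r in range(height):
        for c in range(width):
            values = [f[r][c] for f in frames]
            if max(values) - min(values) <= max_range:
                stuck.append((r, c))
    return stuck
```

Raising `max_range` slightly helps catch pixels that are stuck but still flicker by a count or two of noise.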

This is a cool project. I’m always in awe of someone who figures out how to use somebody else’s serial data stream.

To be fair, it’s in the datasheet, and AFAIK it’s supported and documented by every operating system all the way back to DOS (as INT 33h). I built a text scanner out of an optical mouse like this in 1995 or so. A 25 MHz ’486 had no trouble keeping up.

I remember seeing something similar many years ago… Ah, here it is:

https://hackaday.com/2006/01/07/optical-mouse-based-scanner/

The link in that article is broken; here is an archived version:

http://web.archive.org/web/20060826040012/http://sprite.student.utwente.nl/~jeroen/projects/mouseeye/

The main difference is that he didn’t reuse the mouse’s MCU; he just connected some wires to the sensor and hooked it up to the PC.

Where can I see the resulting images?

You can view them here: https://qcentlabs.com/posts/g402_hack/

Like many others, I’ve used numerous ADNS chips salvaged from optical mice. Currently I’m playing with this one:

https://www.tindie.com/products/onehorse/paa3905-optical-flow-camera/

35×35 pixels, moderate supply current, a pretty good frame rate, and it comes with a lens for distances beyond 80 mm.

Pixart’s business conduct is annoying as well: I had to learn that the datasheet is hidden behind an NDA, but there is well-documented code on GitHub that even I was able to adapt to my favorite controller.

Peter/DL3PB

I’ve been wondering lately about using a mouse sensor with different optics to track the position of a CNC machine’s tool-head relative to a ceiling or wall, closing the servo loop without cumbersome and expensive rotary encoders while automatically eliminating the effects of belt slippage and backlash. Has anyone attempted this? Is it even possible to adapt the optics to a range like that?
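Whatever the optics, the bookkeeping is simple: the sensor reports relative motion in counts, and position is the running sum converted with the counts per inch (CPI), i.e. mm = counts × 25.4 / CPI. The catch is that the datasheet CPI only holds at the stock working distance, so with different optics you would have to calibrate against a move of known length. A hypothetical Python sketch of one axis, assuming the sensor deltas have already been read out:

```python
MM_PER_INCH = 25.4


class MouseAxisTracker:
    """Accumulate signed motion counts for one axis and convert them to millimeters.

    cpi: effective counts per inch at the actual working distance. With non-stock
    optics this must be measured (see calibrate) rather than taken from a datasheet.
    """

    def __init__(self, cpi: float):
        self.cpi = cpi
        self.counts = 0

    def update(self, delta_counts: int) -> None:
        # Mouse sensors report motion since the last read, so position is a running sum.
        self.counts += delta_counts

    @property
    def position_mm(self) -> float:
        return self.counts * MM_PER_INCH / self.cpi

    @staticmethod
    def calibrate(measured_counts: int, known_travel_mm: float) -> float:
        """Effective CPI from a move of known length (e.g. checked with a dial indicator)."""
        return measured_counts * MM_PER_INCH / known_travel_mm
```

For example, with an effective 4000 CPI, a net 2000 counts is 2000 × 25.4 / 4000 = 12.7 mm. Any error in the effective CPI scales the whole reading, and summing relative deltas accumulates drift that an absolute encoder would not.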

Another use might be “inside-out” tracking of VR HMDs and input devices (with quite a bit more math, of course).

Seems like you could read a line on the gantry, rails, or whatever mechanism holds the tool (I think this is how optical encoders work for CNC anyway) instead of pointing the camera up, unless your tool is held by a quadcopter or something. Seems to me that would be a low-cost improvement for CNC machines and 3D printers if the resolution is sufficient. Maybe magnifying the image the sensor sees, for better resolution, is where the improvement in optics could be focused. (ha)
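Reading a line this way amounts to estimating how far a 1D intensity pattern moved between two samples. Mouse sensors’ internal algorithms are proprietary, so the following is just a simple stand-in: a brute-force search for the shift that minimizes the mean absolute difference between the two profiles. A Python sketch with a hypothetical `estimate_shift` function:

```python
def estimate_shift(prev, curr, max_shift):
    """Return the integer shift s that best aligns curr to prev, i.e. curr[i] ~ prev[i - s].

    For each candidate s in [-max_shift, max_shift], compute the mean absolute
    difference over the overlapping samples and keep the smallest. A positive s
    means the pattern moved toward higher indices.
    """
    n = len(prev)
    best_shift, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        total, count = 0, 0
        for i in range(n):
            j = i - s
            if 0 <= j < n:
                total += abs(curr[i] - prev[j])
                count += 1
        if count == 0:
            continue  # no overlap at this shift
        cost = total / count
        if cost < best_cost:
            best_shift, best_cost = s, cost
    return best_shift
```

Because it only compares overlapping samples, large shifts relative to the profile length can match spuriously on featureless stretches; in practice you would keep `max_shift` well below the profile length and sample fast enough that real motion between reads stays small.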

Good point, but then you’d be using a 2D sensor to read one dimension, and end up needing one sensor per axis. Not a big deal, but a little wasteful.

My inspiration was the Shaper handheld CNC router, which uses computer vision to compensate for human hand motion.

I’ve thought about using a relatively cheap webcam looking at a ruler plus some image processing, but in the end it’s just not worth it. Rotary encoders with 1024 slits cost about EUR 30. Hall sensors with 12-bit resolution cost EUR 4, and if your hardware is so bad that you have to consider belt slippage, you’re just not going to get it to work properly in any way.
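To put those numbers side by side: a 1024-slit encoder read with standard quadrature (×4) decoding gives 4096 counts per revolution, the same as a 12-bit Hall sensor (2^12 = 4096). The linear resolution then depends on the drive; the sketch below assumes, purely as an example, a GT2 belt (2 mm pitch) on a 20-tooth pulley, which moves 40 mm per motor revolution.

```python
def linear_resolution_um(counts_per_rev: int, travel_mm_per_rev: float) -> float:
    """Linear distance per encoder count, in micrometers."""
    return travel_mm_per_rev * 1000.0 / counts_per_rev


# 1024-slit optical encoder with quadrature decoding: 4 edges per slit.
ENCODER_CPR = 1024 * 4
# 12-bit magnetic (Hall) angle sensor: 2**12 distinct positions per revolution.
HALL_CPR = 2 ** 12

# Example drive (an assumption, not from the comment): GT2 belt with 2 mm pitch
# on a 20-tooth pulley, i.e. 40 mm of travel per motor revolution.
TRAVEL_MM_PER_REV = 2.0 * 20

print(linear_resolution_um(ENCODER_CPR, TRAVEL_MM_PER_REV))  # → 9.765625
print(linear_resolution_um(HALL_CPR, TRAVEL_MM_PER_REV))     # → 9.765625
```

For comparison, a mouse sensor at a nominal 4000 CPI works out to 25.4 mm / 4000 = 6.35 µm per count, but only at its stock working distance and on a cooperative surface.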

Also, looking at something far away is not ever going to work because small angular deviations get magnified a lot.
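The magnification is plain geometry: if the sensor tilts by an angle θ while looking at a surface a distance d away, the viewed spot shifts by about d·tan θ, which the sensor cannot tell apart from real translation. A Python sketch under simplified assumptions (rotation about the sensor’s optical center, flat target perpendicular to the optical axis); `apparent_shift_mm` is a hypothetical name.

```python
import math


def apparent_shift_mm(distance_mm: float, tilt_deg: float) -> float:
    """Apparent displacement of the viewed spot caused by tilting the sensor.

    Simplified geometry: the sensor rotates about its own optical center and
    looks at a flat surface perpendicular to its optical axis.
    """
    return distance_mm * math.tan(math.radians(tilt_deg))


# Distances in mm: close to a desk, 10 cm away, and a ceiling 2 m away.
for d in (5, 100, 2000):
    print(d, round(apparent_shift_mm(d, 0.1), 3))
```

At 2 m, a tilt of just 0.1° shifts the spot by about 3.5 mm, versus under 0.01 mm at 5 mm from the surface, which is one reason mouse sensors work so close to the desk.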

Homofaciens has done some experiments with mouse sensors as CNC position feedback and has gotten it to work. That was years ago and only a proof of concept though.