Over the history of this business, a lot of people have foreseen limits that look rather silly in hindsight. In 1943, IBM President Thomas Watson is famously quoted as declaring, “I think there is a world market for maybe five computers.” That was more than a little wrong. Depending on the definition of computers, particularly if you include microcontrollers, there are probably trillions of the things.

We might as well include microcontrollers, considering how often we see projects replicating retrocomputers on them. The RP2350 can do a Mac 128k, and the ESP32-P4 gets you into the Quadra era. Which, honestly, covers the majority of daily tasks most people use computers for.

The RP2350 and ESP32-P4 both have more than 640kB of RAM, so that famous Bill Gates quote obviously didn’t age any better than Thomas Watson’s prediction. As Yogi Berra once said: predictions are hard, especially about the future.

Still, there must be limits. We ran an article recently pointing out that new iPhones can perform three orders of magnitude faster than a Cray 2 supercomputer from the 80s. The Cray could barely manage 2 Gigaflops (that is, two billion floating point operations per second); the iPhone can handle more than two Teraflops. Even if you take the position that it’s apples and oranges if it isn’t on the same benchmark, the comparison probably isn’t off by more than an order of magnitude. Do we really need even 100x a Cray in our pockets, never mind 1000x?
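For anyone who wants to check the "three orders of magnitude" claim, here is a minimal sketch using only the two round figures quoted above. Both are rough peak numbers rather than results from a shared benchmark, and the constant and function names are made up for illustration.

```python
import math

# Round figures quoted in the article; rough peak numbers, not benchmark results.
CRAY_2_FLOPS = 2e9    # "2 Gigaflops"
IPHONE_FLOPS = 2e12   # "more than two Teraflops"


def orders_of_magnitude(faster: float, slower: float) -> float:
    """Return log10 of the speed ratio between two machines."""
    return math.log10(faster / slower)


ratio = IPHONE_FLOPS / CRAY_2_FLOPS
gap = orders_of_magnitude(IPHONE_FLOPS, CRAY_2_FLOPS)
print(f"speed ratio: {ratio:,.0f}x")
print(f"orders of magnitude: {gap:.1f}")
```

Knock one order of magnitude off for the apples-and-oranges problem and you still land at the 100x figure in the question above.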

Image: ASCI Red by Sandia National Labs, public domain.

Going forward in time, the Teraflop Barrier was first broken in 1997 by Intel’s ASCI Red, produced for the US Department of Energy for physics simulations. By 1999, it had bumped up to 3 Teraflops. I don’t know about you, but my phone doesn’t simulate nuclear detonations very often.

According to Steam’s latest hardware survey, Nvidia’s RTX 5070 has become the single most common GPU, at around 9% of total users. When it comes to 32-bit floating point operations, that card is good for 30.87 Teraflops. That’s close to NEC’s Earth Simulator, which was the fastest supercomputer from 2002 to 2004; NEC claimed 35.86 Teraflops there.

Is that enough? Is it ever enough? The fact is that software engineers will find a way to spend any amount of computing power you throw at them. The question is whether we’re really gaining much of anything.

At some point, you have to wonder when enough is enough. The fastest piece of hardware in this author’s house is a 2011 MacBook Pro. I don’t stress it out very much these days. For me, personally, it’s more than enough compute. If I wasn’t using YouTube I could probably drop back a couple generations to PPC days, if not all the way to the ESP32-P4 mentioned above.

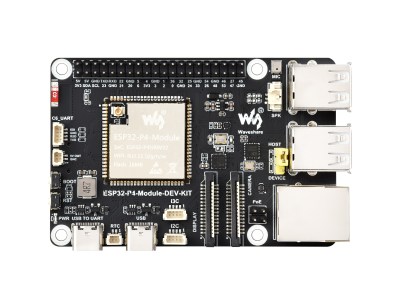

Image: ESP32-P4 Dev Board, by Waveshare.

The 3D models for my projects really aren’t more complex than what I was rendering in the 90s. Routing circuit diagrams hasn’t gotten more complicated, either, even if KiCad uses a lot more resources. For what I’m working on these days, “enough compute” is very modest, and wouldn’t come close to taxing the 2 Teraflop iPhone. That’s probably why Apple was confident they could use its guts in a laptop.

How about you? The bleeding edge has always been driven by edge cases, and it’s left me behind. Are you surfing that edge? If so, what are you doing with it? Training LLMs? Simulating nuclear explosions? Playing AAA games? Inquiring minds want to know! Furthermore, for those of you who are still at that bleeding edge that’s left me so far behind: how far do you think it will keep going? If you’re using teraflops today, will you be using petaflops tomorrow? Are you brave enough to make a prediction?

I’ll start: 640 gigaflops ought to be enough for anybody.

Not a lot of the stuff I have is any newer than about 2012-2014, with my daily driver being a T430 from 2012. (I’d like more RAM, but don’t really need more speed.) I have maybe something like a slightly better low-end AMD GPU from 2016 here or there. The biggest exception is a fairly new machine with an Nvidia 30 series GPU whose only purpose was to train small vision models in PyTorch. The oldest machine is from 2006 and that’s really the only place there’s an “every day” struggle since its CPUs aren’t quite enough to watch video streaming from Twitch, though its 32GB of RAM is quite luxurious for anyone who keeps a lot of tabs open during a project.

For any given task, there’s some minimum amount of compute required to accomplish it comfortably, and really the compute that is “enough” is the maximum of that set.

If you only need word processing, the floor is quite low. Add web browsing and it goes up a fair bit (adblocking helps), add video streaming and it goes up a bit more, and then training AI models is probably the worst offender (though perhaps a fast GPU could still be coupled to a fairly old system). Gaming straddles a wide spectrum, with some games being very light and some not too far below model training.
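The "maximum of the minimums" idea above can be sketched in a few lines. The floor values here are invented, unitless placeholders that only preserve the rough ordering described above; they are not measurements, and `TASK_FLOOR` and `enough_compute` are hypothetical names.

```python
# Hypothetical, unitless compute floors per task. Only the rough ordering
# (word processing < browsing < streaming << model training) comes from the
# comment above; the numbers themselves are made up for illustration.
TASK_FLOOR = {
    "word processing": 1,
    "web browsing": 10,
    "video streaming": 20,
    "light gaming": 15,
    "training AI models": 1000,
}


def enough_compute(tasks):
    """'Enough' is the largest per-task minimum among the tasks you actually do."""
    return max(TASK_FLOOR[task] for task in tasks)


print(enough_compute(["word processing", "web browsing"]))
print(enough_compute(["word processing", "video streaming", "training AI models"]))
```

Dropping the single most demanding task from the list is what lowers the answer, which is the YouTube situation the article describes.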

In terms of the absolute minimum requirements, I typically want anything that isn’t a single-purpose device to have an MMU, since debugging system crashes from errant applications is no fun. I’d also probably want it to have support in the latest NetBSD to have useful libraries and applications available, though they still support the VAX 11/780 from 1977, so e.g. the specific ARM boards they support tend to be more limiting than age.

Turns out, the really interesting stuff you could do requires an order of magnitude more computing power than what you have available.

So you’re consigned to do the same old stuff that doesn’t actually need the latest and greatest.

For example, you’re not running fully ray-traced voxel based games with environments you can destroy to the last grain of sand because you’d actually need a supercomputer in modern day terms to run it, so you’re doing AI algorithms to fake better visuals out of old fashioned polygon graphics and thinking “This is no different than what we had 10 years ago. We’re not doing more, we’re just polishing a turd.”

I rather agree, but it all depends on what you wish to do, how much electricity costs or how quiet you want it to be, as the modern stuff should improve both significantly for the same performance.

However, the big issue is how many devices you have – if you are only going to have 1 or 2 devices then you probably will need something fairly modern and fairly powerful to be able to do all the things you want to do, potentially many of them simultaneously. Whereas if you have heaps of devices each dedicated to a task, you could easily keep using that Core 2 Duo etc. in many places – it’s going to run your CNC controller or the software for that old serial lab equipment just fine, still be a perfectly good writing platform, and maybe even be good enough to run your security cameras/NAS, though once hardware gets old enough, actually getting a supported software stack to make it reasonably safe on the internet is not so easy.

This could become an issue of real import if Asia explodes into a war over Taiwan. Thankfully, there are TONS of e-waste computers from the last 1.5 decades that are perfectly serviceable for a lot of everyday needs.

Yep. My daily driver is a Samsung Chromebook 3 with Linux bought for $30. Works fine for the day to day. If I need to compile I have a Core M5-Y71 laptop that is only about 5min behind my Ryzen 9 6 series desktop on most things. I do use the desktop for a small amount of gaming since it has a dGPU but don’t intend to replace it any time in the next 5ish years.

I like older tech bought for next to nothing and it works fine.

Been running a halium/hybris Furilabs FLX1 for nearly 2 years now as well. Mid-tier specs but it works really well with full debian userspace, I can also use that for code/compiling/whatever in a pinch. I’ll use that thing until it dies.

I haven’t replaced my main PC for a decade or so, and all the while it’s supported by Linux I’ve no particular reason to do so.

i bought a low-end CPU+MB in 2019, and in 2024 i bought a new CPU for the same MB…got it used…the high-end 2019 CPU, the fastest that was compatible with the MB at the time. ryzen 3 2200G -> ryzen 7 3700X. first time i upgraded the CPU halfway through the lifespan of a motherboard (about a decade imo).

and i actually enjoyed the upgrade! 8 cores! “make -j” is so fast…i remember when i started at my job in 2001, i tried to avoid re-compiling the world because it took 10 minutes but now i can do it in about 10 seconds.

can’t imagine wanting anything faster :)

IBM CEO Thomas Watson, Sr. never said, “I think there is a world market for maybe five computers.” Unfortunately, this spurious quotation has been repeated so often, in so many places, that it has taken on the patina of truth.

According to IBM, this is a misquote of a statement by his son, Thomas Watson, Jr. from IBM’s stockholder meeting in 1953:

“We believe the statement that you attribute to Thomas Watson is a misunderstanding of remarks made at IBM’s annual stockholders meeting on April 28, 1953. In referring specifically and only to the IBM 701 Electronic Data Processing Machine – which had been introduced the year before as the company’s first production computer designed for scientific calculations – Thomas Watson, Jr., told stockholders that ‘IBM had developed a paper plan for such a machine and took this paper plan across the country to some 20 concerns that we thought could use such a machine. I would like to tell you that the machine rents for between $12,000 and $18,000 a month, so it was not the type of thing that could be sold from place to place. But, as a result of our trip, on which we expected to get orders for five machines, we came home with orders for 18.'”

That’s a lovely quote. Can you cite a source? It would be great to have that the next time someone misquotes Tom Senior.

The quotation comes from the FAQ section of IBM’s history website circa 2007. The website has since been replaced, but the FAQ can still be found in this PDF on page 26 (thank you Wayback Machine): https://web.archive.org/web/20071010071202/http://www-03.ibm.com/ibm/history/documents/pdf/faq.pdf

I’ve always heard the quote attributed to IBM’s giving away rights to PC computer’s DOS to Micro$oft because IBM didn’t see value in PC’s. I always thought it to be ironic that the prediction of “I think there is a world market for maybe five computers” was just ahead of its time if you think about companies like Goggle and Fakebook and Amazone pushing all our devices to omnipotent “cloud” based computing. AI is the pinnacle ode to cloud computing.

That was more IBM hedging bets: the original design was multipurpose and could be used for a variety of other product lines, such as the 5250 and 3270 series terminal lines and stand-alone word processors. The stand-alone word processor market was a VERY ripe one at the time, as there were a number of smaller and midsize companies in the field, many of which used a CP/M base under the proprietary WP software. IBM didn’t have a presence there to speak of, as they were still flogging the System/34, among others, at smaller targets. There wasn’t a good business case for going whole hog into PCs, given the state of the market and the established market for IBM in the commercial world.

The lowest cost and lowest risk entry point was to license an existing OS, so they did. That Billy G did a shuck-and-jive to sell them what he didn’t own and didn’t understand is more the lack of institutional understanding of the stand-alone market than IBM seeing no value in the product.

(I still have nightmares about punching FORTRAN using a 5252. Better than punch cards, mostly, but that is like saying, from today, that antibiotics have made TB manageable. It’s TB in the end…)

It will never be enough, because there are diminishing returns on optimization. In the dial-up days, you had to make sure the pictures on your website were tiny and used sparingly, or else no one would be able to use your website. But as compute gets cheaper and everyone gets to the point where they can load a high-res image (or video, or game, or…) without an appreciable delay, it doesn’t make sense to spend any developer time on stuffing those into smaller or more efficient packages. Even if you could optimize it to the point where it ran without appreciable delay on archaic hardware, it doesn’t make financial or time-management sense to do so.

OK, but there’s still got to be a limit. At some point it is going to be enough. Your website or game or whatever is transmitting information to humans, and there’s a limit to how much information our sensorium can take in.

Start with visuals: the human eye has a resolution. There are different estimates of that, but 530-576 megapixels seems common for the entire FOV of both eyes. 30 FPS is enough to distinguish movement, but apparently the brain updates in the 300 FPS range. Colour depth is about 10 million colours, so should be handled by 24 bit. Go to 32 bit just for fun.

So. If you are beaming information to human eyes, let’s say at 600 megapixels, 300 FPS, and 32 bit colours, that’s 5760 Gbps ignoring all compression, optimization, whatever. For delivering visual information to human brains, any hardware that can handle that throughput will be enough.

You can guesstimate how much info is in the other senses, but it’s going to be roughly as much on an order-of-magnitude level. So say on the order of a couple of Tbps. That’s the “fool a brain-in-a-jar” limit. It’s a crazy limit, but it is a limit. I can’t see how, if you’re talking about a system interacting with a human being, maxing out their entire sensorium can’t qualify as “enough”.
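The visual-bandwidth figure above is easy to reproduce. This is just the back-of-envelope multiplication from the comment (pixels × frames per second × bits per pixel, no compression); `raw_video_bitrate` is a name made up for this sketch.

```python
def raw_video_bitrate(pixels: float, fps: float, bits_per_pixel: int) -> float:
    """Uncompressed bit rate in bits per second: every pixel, every frame."""
    return pixels * fps * bits_per_pixel


# Inputs taken from the comment: 600 megapixels, 300 FPS, 32-bit colour.
bps = raw_video_bitrate(600e6, 300, 32)
print(f"{bps / 1e9:,.0f} Gbps")   # prints "5,760 Gbps"
print(f"{bps / 1e12:.2f} Tbps")   # prints "5.76 Tbps"
```

So the visual channel alone already sits in the single-digit Tbps range, which is consistent with the order-of-magnitude estimate for the whole sensorium.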

I assume you are limiting your examples to consumer-style computing if the limiting factor you’re choosing is the sensing ability of a human, otherwise it’s not the best metric to use as a compute-demand ceiling.

If, instead, you opt for the Steve Jobs “bicycle for the mind” concept of a computer then the ceiling will always be a function of the needs/interests of the user and their budgetary limits.

The “bicycle for the mind” concept is rather interesting if taken at face value. How much does a bicycle help?

It gets you about four times the speed and distance of travel in a day for the same effort. It’s not significantly different from walking in how much stuff you can carry, and it’s very dependent on infrastructure like good level roads and bicycle lanes separated from the rest of traffic. Bad weather and winter will slow you down, or make you uncomfortable enough to not want to go and take the bus or the car instead. In the end, you’re still confined to a rather small urban area and modest amounts of things you can do with it.

So, the “bicycle for the mind” would be a device that lets you think four times more effectively if what you were thinking is a limited subset of intellectual activities that are not too difficult to perform and are mostly related to urban infrastructures and people.

It would probably be something like, consuming or producing media, making blogs, appearing on social networks… hey, would you look at that. Steve Jobs was right.

You can of course do a 100 km bicycle tour to make a point. I personally tend to get too tired at 50 km so I don’t want to. Meanwhile, someone with a car may drive 100 km just to visit IKEA and do a bit of shopping on a lazy afternoon.

So if a computer is a bicycle for the mind – what is a car?

What is a car?

For my wife, a mobile garbage bin. :)

heh i think i agree with your punchline.. but a couple nits.

biking is indeed about 4x the distance / time for me, but it is much less effort during that time interval. i’ve had on the order of a hundred 40 mile days on the bike. but i don’t think i’ve had even a single 10 mile day on foot, and when i have come close to that figure i am worn out much more deeply than biking.

as for the limitations of a bike, it doesn’t make much sense to talk about them that way. the limitations of a bike compared to a car or bus are irrelevant…and as for needing good weather and good roads, that’s actually in common with walking. there is a small range of conditions where you can walk but not bike, but for the most part walking is inhibited by the same things that inhibit biking. like, i can walk in deeper snow than i can bike in, but snow is definitely a hazard when walking too.

but like i said i agree with your endpoint :)

8 bits per channel still has noticeable banding, especially if you have to process the image to do gamma-correction, because it results in quantization errors.

Unfortunately many monitors still only really do 6 bits per channel and dither to fake the 8 bits.

8 LINEARLY SCALED bits per channel. The sensitivity of the human eye is logarithmic. This means that the base sensitivity is to a CHANGE of about 1/200-ish in brightness, and is a bit more involved for colors. In RGB, color banding is essentially imperceptible at about (in RGB terms… It is really more complicated) one part in five hundred for each channel, depending on the base color.

There are some nice references, showing the relative sensitivity to changes as a function of color, the best being maps on the CIE chart. I can’t be sussed to find one on my cell, tho

(Interesting fact: there were systems in the 1970’s that used this to advantage to give really good color with four to six bits/channel, logarithmically scaled)

The problem becomes when you have gradients that aren’t pure red, green, or blue, and they aren’t fully 0-100% gradients

In those cases you’re dealing with one color that might get two bits of resolution across the range, and another that gets maybe 4 bits, and you’re supposed to make a smooth transition out of that. Of course it’s going to end up with visible banding, and the only remedy is to dither the output so it looks right if you squint your eyes a little, or look at it from a distance.

If you had 10-12 bits of resolution per channel, that would make it instantly easier to make subtle transitions from one color to the next. Unfortunately it also takes more bandwidth, so the TV transmissions just compress it out and create banding artifacts anyways.
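To put rough numbers on the banding argument, here is a small sketch that counts how many distinct code values a single channel passes through over a partial gradient. It assumes plain linear quantization with rounding, with no gamma curve and no dithering, so it is a simplification of what a real display pipeline does; `distinct_levels` and the example gradient are made up for illustration.

```python
def distinct_levels(start: float, end: float, bits: int) -> int:
    """Count the code values a channel takes on a gradient from start to end.

    start and end are channel intensities in the range 0.0-1.0. Assumes
    linear quantization with rounding (no gamma, no dithering).
    """
    max_code = (1 << bits) - 1
    first = round(start * max_code)
    last = round(end * max_code)
    return abs(last - first) + 1


# A subtle gradient: one channel moves 40% -> 41%, another 40% -> 45%.
for bits in (8, 10, 12):
    narrow = distinct_levels(0.40, 0.41, bits)
    wide = distinct_levels(0.40, 0.45, bits)
    print(f"{bits:2d} bits/channel: {narrow:3d} and {wide:3d} steps")
```

At 8 bits the two channels get roughly two and four bits' worth of steps respectively, which matches the situation described above; each extra pair of bits gives about four times as many steps to work with.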

Agreed. For more than a decade, when people ask me what computer they should buy that is fast enough for their needs, I say: any one will do. I didn’t really notice a significant increase in picture quality when I bought a 4K TV, and it’s 43″ because I don’t need a larger one. Do I need more than 24-bit 48kHz resolution music? My hearing is not that good. 24 megapixels is plenty for my camera. Since I got a fiber optic internet connection, I don’t even know what its speed is.

I actually find satisfaction in knowing that stuff around me covers most of my needs with some headroom to spare.

I used images as an example because they’re easily understood, and enough of a solved problem now that we can look back on those days with a bit of objectivity. But it doesn’t end there, of course. Once image streaming took negligible time, we didn’t stop expanding our bandwidth or hardware needs. If people are willing to wait 0 seconds for an image, maybe they’re willing to wait 5 seconds for a video? Once the tech improves such that you wait 0 seconds for a video, maybe people would be willing to tolerate a half second of latency to stream a video game? (Turns out we aren’t, but the attempt was made) The natural next thought once resources increase in availability is “what new thing can we do with all these resources?”

There’s also a parallel phenomenon wherein more resources increases accessibility for less competent developers. Eg websites uploading a 4k image and shrinking it down to a tiny banner. Admittedly this irks me, but it DOES enable more people to have websites than they otherwise would, which in turn enables things I like, like small businesses, an easy way to distribute my portfolio, and so on.

Running local LLMs really needs a lot of calculation power. Even the RTX 5070 mentioned above, with all its teraflops, is too slow to run local LLMs. In short: the race for faster hardware, like in the 1990s-2000s, has just started again to run the AI software.

That is why I upgraded from an LX2K (16-core @ 2GHz) ARM workstation to an 80-core Ampere. Honestly the LX2K had plenty of grunt for all of my work, but now I want to play with LLMs, build for many architectures in parallel, and test an anti-spam botnet.

I do like the race for faster hardware in the sense there is a new world actually utilizing the silicon, the AI hardware price increases aside. As long as it can run modern web browsing that’s all I require. Which is why my quad-core G5 with 16GB of RAM sits on a shelf while I could actually run my business and tasks on a Pi5.

Is this a negotiation…

I’ll start the same place I start the money negotiation, when they insist I start.

‘All of it. I want all the money and stock.’

“I’d hate to preclude anything you might offer; I’ll take the high end of your range.”

No, we won’t be using much more compute tomorrow. It’s similar with internet connection speeds. After you already have 1Gbit, and you and your household are satisfied and can watch movies and play games and do video conf at the same time, there is not much need to have a faster internet subscription.

laughing hollowly – and after a long delay – at the end of my 30Mbit microwave relay link :)

man i might be in the minority but i 100% agree with this take…they offered me gigabit to my doorstep for almost the same price i was paying for about 80megabit and i just couldn’t be bothered, for years. i finally did switch but only because the uptime on that 80Mbit connection was abhorrent. cable->fiber was a huge reliability improvement but i didn’t notice the speed

“The fastest piece of hardware in this author’s house is a 2011 MacBook Pro.” My last laptop was a 2011 MBP. That had an i7, 1GB of VRAM, 16GB of RAM, swapped CD Drive for a 2nd SSD, upgraded to the HD display. Perfect Laptop. Lasted 11 years until the GPU started artifacting, and wasn’t able to ever reflow it back… :)

My last desktop was a 16-core ARM workstation (LX2K) @ 2GHz, and got me an article here on Hackaday! That was actually more horsepower than what I really needed for most tasks other than playing games with x86 translation layers.

That said, if I had to I could absolutely work and do my daily tasks on a Pi5/8GB model, just need a lot of USB expansion hahaha

Be careful what you wish for. Big Brother is watching.

That said, yes. I do need that computational through-put. As for the I/O-bound stuff, no I seldom need what a modern device can provide.

But my computational stuff is distributed – we have (I think) about 25 Teensy and ardy clones sprinkled around our property. Each far-flung processor controls what it is supposed to, does analytics, and makes its own decisions. They talk to two geriatric (IBM) Thinkpads, whose only job is to talk to one router and three radios and display situational data that these distributed processors have already crunched.

Like I said, I need that computational power, but not all in one device or subsystem. Those of us that did not go to engineering schools based on the Klingon culture know how to do this; with ease.

sounds like a cool project; do you have a writeup?

Big Brother is watching via the pocket supercomputer. ;)

I am a lot like the author here.

I get by with my most used devices being sub 4GB of RAM and years old CPUs. I do have a computer that can crunch numbers with a GPU and double digits of RAM. That computer gets turned on a month or so out of the year, whenever I need to do heavy analysis or modeling.

Most of the time I am beyond fine at 8GB of RAM or less, even for pretty large tasks. 2GHz CPUs are A-okay. Arduino Unos are often overkill for my projects, and they can be doing some pretty intense data acquisition and some computing. I guess we call that edge compute now or something. I have heavier MCUs but more often than not they are overkill. It feels wrong to use them.

One of my family members upgraded their windows machine and 16GB of RAM instantly became insufficient for their daily tasks. It was a scary realization. We live in an era of software slop. I don’t think there’s any other way to say it. People have found it profitable to try to offer convenience by abusing the hell out of resources.

You’re my hero. Honestly, how do you even use big bloaty Firefox or Chrome with only 4GB?

Yeah I’m still running a 2012 or so era Core2 Quad, but I put in a lot (for the time) of RAM (32GB) because my old computer before that was constantly freezing with swap storms. And this is still plenty unless I need to crank up several VMs.

First of all you have to use Linux and strip everything down you don’t need. The other trick is, don’t live in the browser and change the settings. Some people have upwards of 30 open tabs, can’t do that. Firefox focus is okay for a few reasons. Pinhole or other firewalls or ad blockers can help cut the crud too. The real ram killer is modern IDEs.

This is a story that a lot of younger people don’t believe. I used to develop 3D games, not triple-A titles, but with the IDE, compiler, browser, IRC, and game all running on a 2GB laptop. It really helped me learn about performance.

One does not need a supercomputer for the guidance of a missile, nor for a phone for yak-yak and texting with some web capability. The first iPhone… a knock-off, that is, was powered by an 8052 microcontroller. It was out 6 months before the genuine article.

2005/2006 Shanghai!

Apple doesn’t have leaks or is not compromised…fully? Lmao!

The specs floor is rising rapidly. Postman is the slowest, most inefficient program on my computer. Ya know, the API testing tool? Just opening it makes my 2-teraflop laptop lag. It’s atrocious. I got an i7 for compiling and VMs but the biggest resource hog is JavaScript UIs.

OpenSCAD is a worthy consumer of 2 tflop. Game emulators too, playing PS2 games rendered at 1080p is fun. But I’ve not kept current with GPUs so my RX Vega 64 is “only” 12 tflop.

The latest gen of GPU isn’t worth the price IMO. The current bleeding edge is raytracing, which should be cool but it’s still too slow to run natively at 60fps. So raytraced games like Cyberpunk rely on AI upscaling and frame interpolation. Not a fan. It looks bad in motion, there’s smearing and artifacts.

Postman is an abomination

OpenSCAD’s renderer is awfully slow on nearly any machine, but as long as you’re not (yet) doing the heavy calculation, it takes very, very few resources to run, which is nice.

Before AlexNet (2012, wow) increases in computation were mostly gratuitous. Websites were becoming ever more bloaty with ever more inefficient frameworks (CSS class systems completely ignoring the underlying system). Video games were driving GPU demands.

But then AlexNet showed what you can do with a lot of extra computational power. That you can find a cat in a picture. Here we are 15 years later without a ceiling.

I would like a setup to run a few 128GB models at home, but that is not gonna happen. As far as price goes we are back in the early 80’s.

Typo in the title, “compute” is a verb, not a noun.

Gentoo compiles and OpenSCAD would be my two biggest uses of computer power at home. I’m not sure the GPU can be much help with either. Gentoo can build in a screen terminal while I do other things but occasionally long OpenSCAD renders can be a little annoying. I have a library which produces a lot of curved surfaces and uses a lot of hull(). I love the end results but not so much the render times. I think I can re-design some parts to replace hull() with polyhedron() and I bet it will get faster. It’s going to be a real pita to do so though.

Yeah, hulls and Minkowskis will kill ya. And after getting a new work computer (which I also use for hobby OpenSCAD stuff) to replace my 9yo ThinkPad… it doesn’t perform that much better! OpenSCAD really just needs to go multi-threaded.

fwiw, i have openscad 2021.01 that comes with debian these days, and it’s much faster than older versions (but still pretty slow), and it is pretty reliable…but i also have 2025.01.25 that i had to build from source, and it’s much faster, but sometimes it crashes. i think it’s mostly improvements to caching and multithreading but i don’t really know. i’m holding out hope that a newer openscad will be faster still.

It’s extremely heartening to see all these people crowing about their ancient tech. I thought I was doing pretty good with my 9yo laptop and 11yo phone, but you all are taking it to the next level. So much respect for the value in existing silicon.

Now I’m writing this on a fresh Framework 16 (I was getting into more Rust at work and C++ at home, you see) and I feel like an impostor!

I still hesitate when I reach for an ESP32 when an ATMega or even an ATTiny would probably do the trick. But ESPs are sooo cheap now.

I am replying on a ~16-year old bargain laptop that was bought on sale, because it was already two generations behind back then. Original (slow-mo) hard-drive, etc.

The only upgrade it had was quadrupling the RAM from piddly (and even slower-mo) 4GB to slightly faster (and slightly more modern) 16GB. No hard drive paging, which was forced off and stayed off ever since. Linux Mint made sure it runs.

Linux Mint.

Workstation CPUs now have 128 cores, enough to simulate entire networks while decompressing large datasets and playing the latest video games at the same time.

“Average” people who would rather have a Workstation than a new car can donate their CPU to crunch scans of other planets and galaxies, or fold proteins looking for new medicines and treatments.

If you provide a generic tool people will find a use for it.

Even a personal mainstream CPU is $200 for 18 cores, $300 for 24 cores. That is crazy cheap, 1/6 the price of something like a 486 25MHz when launched in 1989.

Yes, you say that, but as soon as you start trying to manipulate complex STEPs imported into Solidworks you realize that no amount of compute is enough. (yes, I know, but just try to file a bug report that says, Please multi-thread all your rebuilds :-) )

But, and this is the funny thing, Claude Code literally complimented me on how fast the processor was while it was training AI models.

Wait, what? you say? Training AI models? Yes. Something I never in my life thought I’d be doing on a machine in my office yet here I am.

So more compute will open more doors will require more compute. . .

my computer is edging in on 5 years old and still does everything i need it to do. it took 6 months to build it and i was only able to build it because i had 2 gpus crypto mining during covid. otherwise it wouldnt have been something better than the 8th gen intel rig i was running at the time (an 8086k which still runs great mind you). the ever rising cost of computer parts is turning what was once a full build every 2 years into a 6 year cycle with computers being upgraded in stages. alternating core components, gpu, everything else and sometimes a fourth cycle where i get a fancy monitor or some expensive peripheral. the performance gains just aren’t there anymore. while were far from peak compute, the bang for your buck options dont seem to be improving much. besides i feel like im under utilizing what i already have, and so effort turns away from improvements and towards maintenance (which i just didnt need to do when i was still on a 2 year cycle, and the irony is those machines still run).

Same here, we only have computosaurs at home.

Debian allows older guys to perform well enough…

Of course, I dumped Arduino gui… vim/arduino-cli is way more efficient.