Kiki bills itself as the “array programming system of unknown origin.” We thought it reminded us of APL which, all by itself, isn’t a bad thing.

The announcement post is decidedly imaginative. However, it is a bit sparse on details. So once you’ve read through it, you’ll want to check out the playground, which is also very artistically styled.

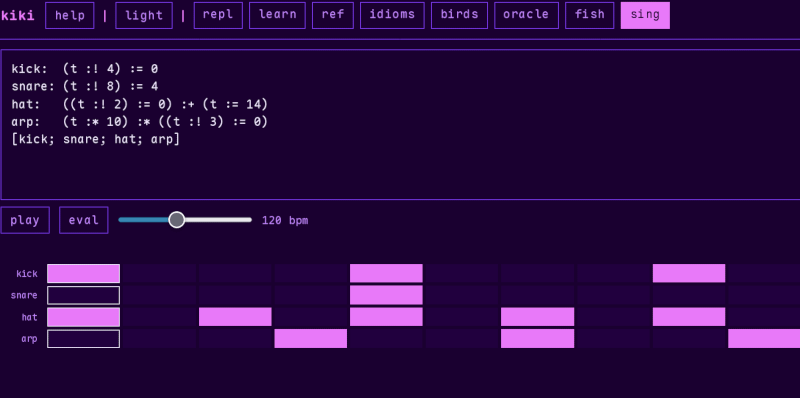

If you explore the top bar, you’ll find the learn button is especially helpful, although the ref and idiom buttons are also useful. Then you’ll find some examples along with a few other interesting tidbits.

One odd thing is that Kiki reads right to left. So “2 :* 3 :+ 1” is (1+3)2 not (23)+1. Of course, you can use parentheses to be specific.

If you are jumping around in the tutorial, note that some cells depend on earlier cells, so randomly pressing a “run” button is likely to produce an error.

Would you use kiki? There are plenty of array languages out there, although perhaps none that have such poetic documentation. Let us know if you have a favorite language for this sort of thing and if you are going to give Kiki a try.

If you want to try old school APL, that’s easier than ever.

It’s called RPN (Reverse Polish Notation).

Invented by Jan Lukasiewicz whose work in building a new (non-aristotelician) logic is massive ! And this in turn would prove central to the development of the computer (as a materialization of the new logic). Frege’s Begriffsschrift is also quite amazing in that regard, because it is the foundation of a logical language entirely abstract and separated absolutely from natural language. It is a language the symbolic meaning of which id entirely self-referential. It was also written in a bi-dimensional style. Whereas logic as defined by Aristotle and until Frege was mainly about the syntax of natural language (which did not permit easily what would become the logical basis of electronics). Another exception to aristotelician thought must be Babbage.

I typically use Lukasiewicz notation for Symbolic Logic (vs Principia notation, which is more common), and, being a long-time LISP programmer, I find the whole concept attractive. However, what I’m seeing in Kiki does not parse like Lukasiewicz notation., and Lukasiewicz notation is Polish notation, not RPN.

Not RPN (formally Postfix notation).

THis is right-associative infix. We think of algebra as, generally, left-associative with precedence by operator– generally, as conventional algebraic notation has special cases, such as exponents and unary negation– but this is (as far as I understand the structure) strict right-associative, with parentheses to group.

Contrast this to prefix notation (the closest we generally see are languages in the LISP-ish genealogy– DYlan, Clojure, Racket, Scheme, for example– though they are usually parenthesized for parameterization)

I don’t think it’s even JUST right-assoc infix, because AFAICT the operators are reversed as well. (IOW, “1 :+ 3” written LTR would become “3 +: 1”.) The article description may actually have been the most accurate one: it reads right-to-left.

You know, I take that back, because then you have lines like “kick: (t :! 4) := 0”, which is CLEARLY LTR. “The definition of kick: if t is not 4 (?), set kick to 0”. You can’t read that RTL and make sense of it. I don’t get why they would use LTR syntax with right-assoc math.

(Looking again, It seems to be “if t is not a multiple of 4”.)

Referring specifically to the example in the HaD article, it appears to be right-associative. It is most assuredly not Postfix (RPN). The language as a whole seems to, like most, to not clearly fit a right-associative nor left-associative model for all cases. Without digging a bit, and I have no real desire to at this point unless I find a use case, I can not speak to the language as a whole.

Right-associative operators are kind of non-intuitive to most people, except for the obvious cases like assignment (which some languages still manage to make a mess of)

The documentation looks in the same vein as Why’s (Poignant) Guide to Ruby.

Looks like generated word soup. Github / git links don’t show the repo. Doubt it has any functional purpose, other than “I promoted an LLM. Look, it’s art”

Big eyerolls from me.

LL languages are read from left to right, and reduce the leftmost production first. LR languages are read from left to right and reduce the rightmost production first. RL and RR languages can also exist, but aren’t common.

For the sake of vocabulary, ‘terminal symbols’ can’t be reduced from any combination of other symbols in the language, while ‘nonterminal symbols’ can only exist by reduction from combinations of other symbols in the language. That can lead to confusion about whether the next input symbol marks the end of the current nonterminal, the middle, or the beginning of a new nonterminal.

Robert Floyd proposed a workaround by creating a class of ‘operator grammars’ that always have at least one terminal between any two nonterminals. Operator grammars still have some ambiguity about connections, but Floyd dealt with those by assigning each symbol right- and left- ‘binding power’.

Vaugahn Pratt built on that by replacing the binding powers with ‘precedence’ values, and used that to develop the Pratt Operator Precedence Parser. That’s recently become popular thanks to Robert Nystrom’s book ‘Crafting Interpreters’.

The language above looks like an RR operator grammar: it’s read from right to left, there are nonterminals separating the expressions, and ‘:+1’ has enough information to create an addition expression with ‘1’ as its first operand.

Kiki is a harsh sounding language, but Bouba is more well rounded.