Talking to computers is all the rage right now. We are accustomed to using voice to communicate with each other, so that makes sense. However, there’s a distinct difference between talking to a human over a phone line and conversing face-to-face. You get far more visual cues in person than you do over a phone or radio.

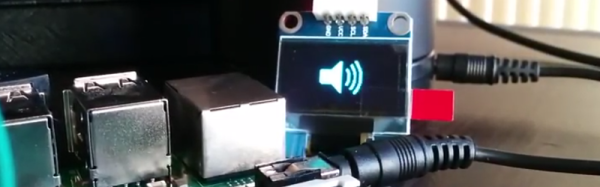

Today, using most voice-enabled systems is like talking to a computer over the phone. It gets the job done, but you don’t always get the most benefit. To that end, [Youness] decided to marry an OLED display to his Alexa to give visual feedback about Alexa's current state. It is a work in progress, but you can see two incarnations of the idea in the videos below.

A Raspberry Pi provides the horsepower and drives the display. A Python program connects to the Alexa Voice Service (AVS) to understand what to do. AVS provides several interfaces for building voice-enabled applications:

- Speech Recognition/Synthesis – Understands and generates speech.

- Alerts – Deals with events such as timers or a user utterance.

- AudioPlayer – Manages audio playback.

- PlaybackController – Manages the playback queue.

- Speaker – Controls the volume.

- System – Provides client information to AVS.
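
To make the idea of visual feedback concrete, here is a minimal sketch of how a program might turn activity on the interfaces above into a short status line for a small display. It is not [Youness]'s code. It assumes each incoming directive is a dictionary with a `header` carrying a `namespace` and a `name`. The status strings, the `STATUS_BY_DIRECTIVE` table, and the `status_for` and `render` helpers are all hypothetical names made up for illustration, and nothing here talks to AVS or to real OLED hardware.

```python
# Hypothetical lookup table: (interface namespace, directive name) -> status text.
# The text shown for each entry is an assumption, not something AVS defines.
STATUS_BY_DIRECTIVE = {
    ("SpeechRecognizer", "ExpectSpeech"): "Listening...",
    ("SpeechSynthesizer", "Speak"): "Speaking...",
    ("Alerts", "SetAlert"): "Alert set",
    ("AudioPlayer", "Play"): "Playing audio",
    ("AudioPlayer", "Stop"): "Stopped",
    ("Speaker", "SetVolume"): "Volume changed",
}


def status_for(directive: dict) -> str:
    """Return the status text for a directive, or "Idle" if it is unknown."""
    header = directive.get("header", {})
    key = (header.get("namespace"), header.get("name"))
    return STATUS_BY_DIRECTIVE.get(key, "Idle")


def render(text: str, width: int = 16) -> str:
    """Clip and center text for a display that is `width` characters wide."""
    return text[:width].center(width)


if __name__ == "__main__":
    # A hand-written sample directive; a real one would arrive from the service.
    sample = {"header": {"namespace": "SpeechSynthesizer", "name": "Speak"}}
    print(repr(render(status_for(sample))))
```

In a real build, the string returned by `render` would be handed to whatever library draws on the OLED. Keeping the lookup separate from the drawing code means the mapping can be tested on a desktop without any hardware attached.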

We’ve seen AVS used to create an Echo clone (in a retro case, though). We also recently looked at the Google speech API on the Raspberry Pi.