Talking to computers is all the rage right now. We are accustomed to using voice to communicate with each other, so that makes sense. However, there’s a distinct difference between talking to a human over a phone line and conversing face-to-face. You get a lot of visual cues in person compared to talking over a phone or radio.

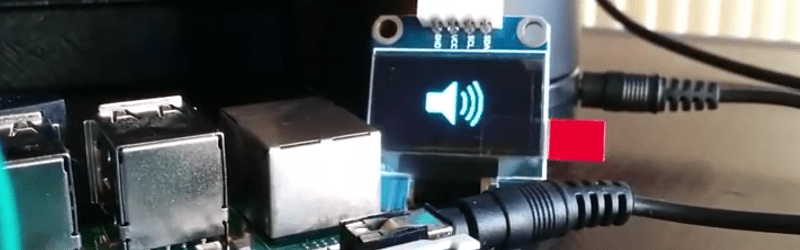

Today, most voice-enabled systems are like talking to a computer over the phone. It gets the job done, but you don’t always get the most benefit. To that end, [Youness] decided to marry an OLED display to his Alexa to give visual feedback about Alexa’s current state. It is a work in progress, but you can see two incarnations of the idea in the videos below.

A Raspberry Pi provides the horsepower and the display. A Python program connects to the Alexa Voice Service (AVS) to understand what to do. AVS provides several interfaces for building voice-enabled applications:

- Speech Recognition/Synthesis – Understands and generates speech.

- Alerts – Deals with events such as timers or a user utterance.

- AudioPlayer – Manages audio playback.

- PlaybackController – Manages the playback queue.

- Speaker – Controls the volume.

- System – Provides client information to AVS.
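
To make the idea of visual feedback concrete, here is a minimal Python sketch of how directives from these interfaces might be mapped to a display state. It is not [Youness]'s code. The namespace and directive names follow AVS naming, but the `DisplayState` values, the mapping table, the assumed `header` layout of the directive dictionary, and the `render` helper are all illustrative assumptions. A real build would draw to the OLED instead of returning a string.

```python
from enum import Enum


class DisplayState(Enum):
    """Hypothetical states the display could show (not from the original project)."""
    IDLE = "idle"
    LISTENING = "listening"
    SPEAKING = "speaking"
    PLAYING = "playing"
    ALERT = "alert"


# (namespace, name) pairs use AVS-style directive naming.
# Which state each one maps to is an assumption made for illustration.
DIRECTIVE_TO_STATE = {
    ("SpeechRecognizer", "ExpectSpeech"): DisplayState.LISTENING,
    ("SpeechSynthesizer", "Speak"): DisplayState.SPEAKING,
    ("AudioPlayer", "Play"): DisplayState.PLAYING,
    ("AudioPlayer", "Stop"): DisplayState.IDLE,
    ("Alerts", "SetAlert"): DisplayState.ALERT,
}


def state_for_directive(directive, current=DisplayState.IDLE):
    """Return the display state for a directive dict.

    Assumes the directive carries a 'header' with 'namespace' and 'name'.
    Unknown directives leave the current state unchanged.
    """
    header = directive.get("header", {})
    key = (header.get("namespace"), header.get("name"))
    return DIRECTIVE_TO_STATE.get(key, current)


def render(state):
    """Stand-in for drawing on the OLED: returns a centered text banner."""
    return f"[{state.value.upper():^12}]"


if __name__ == "__main__":
    speak = {"header": {"namespace": "SpeechSynthesizer", "name": "Speak"}}
    print(render(state_for_directive(speak)))
```

Keeping the mapping in a plain dictionary means new directives can be added without touching the lookup logic, and anything unrecognized simply leaves the display as it was.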

We’ve seen AVS used to create an Echo clone (in a retro case, though). We also recently looked at the Google speech API on the Raspberry Pi.

“A Raspberry Pi provides the horsepower” I’m lost, how many MIPS or MFLOPS do you get out of an average horse brain?

An adolescent horse brain can conceive 13 concurrent processes while galloping and 15 at a full run. It also has more mass than a motorcycle and runs on grass to reduce its carbon footprint.

I can guarantee a motorcycle eats far less than a horse.

Very true but the carbon footprint based on emissions when comparing farm stock to two-wheel human transport is comparable to within an order of magnitude.

(I tried to indicate in my first message that I was being sarcastic, but it didn’t get posted. I’m just clowning.)

Quit horsing around and cycle back to the mane topic!

If a Raspberry Pi draws ~1.5 watts of power, it effectively is running at 0.002 horsepower.

A Raspberry Pi runs at approx. 0.002 horsepower, don’t forget to factor this into your computations!

And a horse-mounted Raspberry Pi runs at 1.002 horsepower.

Shouldn’t we be subtracting the power the RPi uses from the horse? The horse is a source, and the RPi is a load after all.

A horse is a horse of course.

And no one can talk to a horse, of course. Unless of course…

Unless that horse is the famous Mrs Alexa?

obligatory xkcd: https://xkcd.com/1720/

If you go on the official Alexa AVS build page, it will guide you on how to install and set it up on the Raspberry Pi WITH ALEXA WAKEWORD DETECTION!

I’ve done it and it works quite well with the 360 Kinect microphone. I also created a mouse input script to automate the login process on startup (it’s the only way there is, because it’s a Java GUI). I’ll post a video sometime.

Thank you for helping us protect the world by sharing your life with us!

It would be really awesome if he could connect it to a graphical LED display so it would look like KITT from Knight Rider when it speaks.

+1

So no one else feels embarrassed by talking to a lump of plastic and silicon?

Luddite.

Nope. I find it awkward talking to people as well

PS: I just changed my username. Doesn’t the comment section change all the old comments to the new name? And why is there no edit button? This system needs updating.