Eye tracking is a really cool technology used in dozens of fields, from linguistics to human-computer interaction and marketing. With a proper eye tracking setup, a web developer can see whether their layout changes are effective, a researcher can measure how fast someone reads a page of text, and a clinician can even diagnose medical disorders. Eye tracking setups haven’t been cheap, though, at least until now. Pupil is a serious, research-quality eye tracking headset designed by [Moritz] and [William] for their thesis at MIT.

The basic idea behind Pupil is to put one digital camera facing the user’s eye while another camera looks out on the world. After calibrating the included software, the headset looks at the user’s pupil to determine where they’re actually looking.
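To make the calibration step a little more concrete, here is a minimal sketch of one common way to map pupil positions in the eye camera to points in the world camera: a least-squares fit of a second-order polynomial. This is an assumption for illustration, not necessarily how Pupil's own software does it; the function names and the synthetic calibration data are made up.

```python
import numpy as np

def poly_features(pupil_xy):
    """Expand (x, y) pupil positions into second-order polynomial terms."""
    x, y = pupil_xy[:, 0], pupil_xy[:, 1]
    return np.column_stack([np.ones_like(x), x, y, x * y, x**2, y**2])

def fit_gaze_map(pupil_xy, world_xy):
    """Least-squares fit from eye-camera pupil positions to world-camera points."""
    coeffs, *_ = np.linalg.lstsq(poly_features(pupil_xy), world_xy, rcond=None)
    return coeffs  # shape (6, 2): one column per world-camera axis

def predict_gaze(coeffs, pupil_xy):
    """Apply a fitted mapping to new pupil positions."""
    return poly_features(pupil_xy) @ coeffs

# Synthetic calibration data (hypothetical): the user looks at a 3x3 grid of
# targets while the eye camera records a normalized pupil position for each.
pupil = np.array([[px, py] for px in (0.2, 0.5, 0.8) for py in (0.2, 0.5, 0.8)])
world = np.column_stack([
    640 * pupil[:, 0] + 20 * pupil[:, 0] * pupil[:, 1],
    480 * pupil[:, 1] + 15 * pupil[:, 0] ** 2,
])

coeffs = fit_gaze_map(pupil, world)
estimate = predict_gaze(coeffs, np.array([[0.35, 0.65]]))
print(estimate)  # estimated gaze point in world-camera pixels
```

With real data the fit will not be exact, so the residual on the calibration targets gives a rough idea of how well the calibration went.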

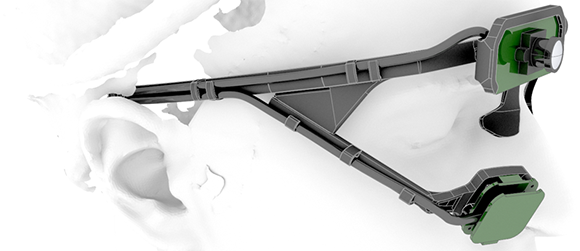

The hardware isn’t specialized at all: just a pair of $20 USB webcams, an LED, an infrared filter made from exposed 35mm film negatives, and a 3D printed headset conveniently for sale at Shapeways.

The software for Pupil is based on OpenCV and OpenGL and is available for Mac and Linux. Calibration is easy, as seen in the videos after the break, and the results are amazing for an eye tracking headset thrown together for under $100.

How long before our robotic overlords (or meat space bosses) will require us to wear these, to know what we are reading?

Why would they do that? You are only allowed to read what they want anyway.

Would be nice to see how fast my eyes move while playing COD.

Should be rather slow compared to many other games.

This could be useful for quadriplegics as well. This would require some modification to handle clicking via blinking or possibly a mouth control as well. Very cool project.

check out eyewriter!

That’s great, thanks Caleb!

Who can be the first to make a mouse out of it?

Second camera for your other eye? dare you to be able to use it as multitouch and do scaling/rotation :-)

Just ROFL, I started imagining it, and…

Check these guys out: Grinbath.com they have a complete system already made. You can do a lot more than click a mouse with this…

Shame that they don’t release the 3d model. I’d like to print it myself…

By the looks of the photos it’s not that complicated. I’m sure you can model it yourself.

No windows but they have a macos version? Seriously?

Yes, seriously. Mac and Linux are a lot more like each other than either is like Windows, especially when it comes to code building toolchains, like GCC, and libraries associated therewith. I know, it’s shocking, such a novel concept, isn’t it?

Ha, true!

The simple truth is that Will and I just use Mac/Linux. A Windows version is easy to do though! I will try to build it on windows when I have some spare time.

I’d buy/build this right now if I could control my windows mouse with it.

Resolution? Arc seconds? You need high speed cameras and high resolution to be meaningful enough to use as a mouse replacement.

1080p at 30 frames/sec good enough? I hear some fruit computer will be selling that for $25 per camera.

Use it to aim a turret similar to military helicopters.

Could this, combined with something like the Oculus Rift, be used to solve some of the DOF issues with VR?

I considered something like this to track pupil convergence in the oculus. The problem is that the eyepieces are so close to your eyes that you’d have a difficult time getting a camera in there. The only possibility would be to mount the camera with the screen, but again, I think the distortion from the lenses might cause a major issue.

Could you use something similar to a ray or path tracing algorithm to account for lens distortion?

Nah, you can do an even simpler GLSL-style distortion effect, then take the inverse of that.

It’s what games for the Rift already do to get rid of the distortion.
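For anyone who wants to play with the distortion idea from this thread, here is a rough sketch of a radial distortion and its numerical inverse. The one-coefficient model and the value of `k1` are assumptions for illustration; this is not the Rift's actual shader or lens profile.

```python
import numpy as np

def distort(xy, k1):
    """Apply one-parameter radial distortion to centered, normalized coordinates."""
    r2 = np.sum(xy**2, axis=-1, keepdims=True)
    return xy * (1.0 + k1 * r2)

def undistort(xy_d, k1, iterations=20):
    """Invert distort() by fixed-point iteration (no closed form is needed)."""
    xy = xy_d.copy()
    for _ in range(iterations):
        r2 = np.sum(xy**2, axis=-1, keepdims=True)
        xy = xy_d / (1.0 + k1 * r2)
    return xy

# Hypothetical coefficient and test points, chosen only to exercise the code.
k1 = 0.2
points = np.array([[0.0, 0.0], [0.3, -0.2], [0.5, 0.5]])
recovered = undistort(distort(points, k1), k1)
print(np.abs(recovered - points).max())  # round-trip error
```

The iteration converges quickly for mild distortion like this; a strongly distorting lens would likely need more terms in the model and a more careful solver.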

I know some other guys in Boston — a startup company — who are several years ahead.

Those cameras are not that cheap on amazon like the website states.

Hi,

We try to keep the list up to date. Check the “more buying choices option”. The cameras should be listed much cheaper there. Also you can buy used (does not matter really) and go even lower. Hope this can clarify some things.

Saw this project the other day.

I wonder if it could be used to control a mouse cursor properly.

A good eye tracker can control a mouse with enough precision to do most tasks — certainly no worse than a trackpad, and maybe better. I don’t know if this is a good eye tracker, but I’m inclined to say that it’s not ready for those kinds of apps. It still needs a PC to do the computations, which are non-trivial, and OpenCV is a paragon of bloatware, so it’s not doing them fast. Here’s a link to the company I mentioned previously http://www.army.mil/article/74675/ This is special purpose for now, but ultimately it doesn’t use any exotic components and it could scale to a low-cost model, say under $400. The processing is done internally… tablet is for diagnosis.

Brilliant, well done and all that. But, does anybody else find the eyeball close-up freaky just a bit? It reminds me of A Clockwork Orange.

Are you sure a direct IR beam near the eye is OK?

Yeah, it’s safe. Even for high-fps systems, where the cameras need more light, the IR level is way below the safety limit.

WOW. Pupil is on Hackaday!! That’s awesome! Thank you very much.

Pupil on Hackaday. Super cool! Thanks!

They could swap the eye tracking camera for a nude PS3 Eye, as that captures at 120 Hz, albeit at 320×240, vs. the Microsoft HD-6000, which does 30 Hz at 640×480. The 120 Hz would be useful in capturing saccades (~100 Hz).

http://www.doc.ic.ac.uk/~afaisal/FaisalLab/resources/AbbottFaisal-2012-JNeuralEng.pdf

http://www.faisallab.com/
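The frame rate argument above comes down to simple arithmetic: how many frames does the camera capture while a saccade is happening? A quick sketch, where the 30 ms saccade duration is an assumed figure for illustration only:

```python
def frames_during(event_ms, fps):
    """Average number of frames captured during an event lasting event_ms."""
    return event_ms / 1000.0 * fps

SACCADE_MS = 30.0  # assumed saccade duration, not a measured value

for name, fps in (("30 Hz webcam", 30), ("120 Hz PS3 Eye", 120)):
    print(f"{name}: {frames_during(SACCADE_MS, fps):.1f} frames per saccade")
```

Under that assumption the 30 Hz camera catches less than one frame per saccade on average, while the 120 Hz camera catches a few.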

This is great. Like the neat 3D printed headset.

Yes, following on from above, we have been working since 2009 on a similar project here in London, aimed at helping people with severe motor disorders. We hope to use 3D gaze interaction to control wheelchairs and prosthetic limbs.

See our progress:

http://www.youtube.com/watch?v=9gU8RqttXeo

http://uk.reuters.com/video/2012/09/09/eye-control-glasses-offer-new-world-to-s?videoId=237615205

http://edition.cnn.com/2012/09/24/tech/mci-eye-tracking-gadget

http://www.faisallab.com/

For more tech info see our recent paper in J. Neural Eng:

Ultra-low-cost 3D gaze estimation: an intuitive high information throughput compliment to direct brain–machine interfaces.

http://iopscience.iop.org/1741-2552/9/4/046016

Could you use this in a similar way to VNG goggles? i.e. to track eye pursuit smoothness/ accuracy of saccades?

You need to check out http://www.imotionsglobal.com. They’re already doing all this, AND syncing it with EEG, GSR, and any other sensor you can think of etc…

I’m interested in making it myself, as I need it for research. Can I write to you and ask further about it?

Is this still an ongoing research project? I am very interested in a low cost eye tracking device, as I suffer from MND.

How can this be made for $90 when you have to begin by buying their pre-printed headband from shapeways (with a “small markup”) at $200? (Yes, that’s a two followed by two zeros. Are they using platinum-infused filament?!? ;-)) This article is advertising in (a very thin) disguise and does not belong on a DIY site. IMO.

Where is the software to run this?